2026 AI Engineering Roadmap: Guide to High-Leverage Roles

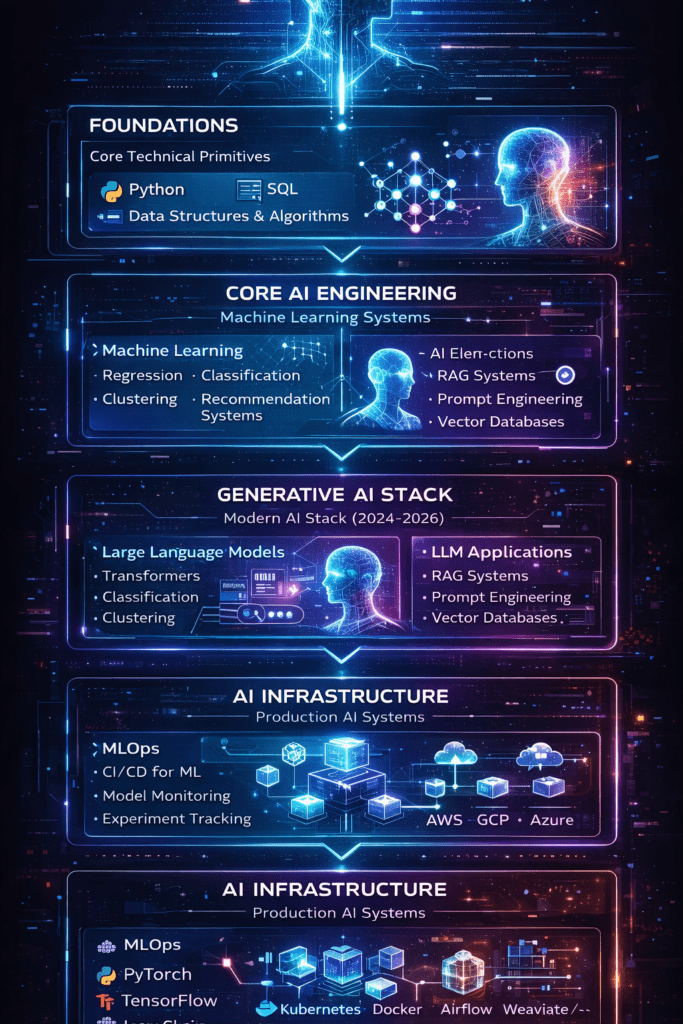

The 2026 AI Engineering Roadmap is a high-leverage framework designed to bridge the gap between technical education and industry success. As frontier models like GPT-5 become commodities, professional leverage shifts from prompt execution to System Architecture.

This roadmap prioritizes the 80/20 of AI engineering: mastering multi-agent coordination, context engineering (Advanced RAG), and automated evaluation frameworks. Transition from model-centric learning to building production-grade agentic workflows for high-impact roles like AI Agent Architect and MLOps Lead.

What Defines AI Engineering in 2026?

In 2026, AI Engineering has matured into a distinct discipline, separated from traditional Data Science. The industry no longer views Large Language Models (LLMs) as the final product, but as a commodity “reasoning engine” within a larger, self-healing system.

The System-Centric Shift

While 2024 was about “prompting a model,” 2026 is about orchestrating systems. AI Engineers now spend less time writing individual prompts and more time designing autonomous agent loops and governance layers.

- Models as Commodities: With the rise of high-performance open-weight models (e.g., Llama 4) and unified routing (e.g., GPT-5 routing simple tasks to smaller models), model choice is less critical than the architecture surrounding it.

- Production-Grade Reliability: Success is measured by “Proof of Impact” rather than “Proof of Concept.” This requires moving from brittle demos to systems that handle the “messy reality” of long-running workflows that span days, not minutes.

Core Pillars of the 2026 Stack

To master the 2026 AI Engineering landscape, you must move beyond the “Prompt-Response” loop. High-leverage roles are built upon a sophisticated technical stack that prioritizes autonomy, reliability, and context density. These pillars represent the transition from experimental AI to enterprise-grade system architecture.

| Element | 2026 Definition | Strategic Leverage |
| --- | --- | --- |
| Agentic Workflows | Multi-agent systems that plan, act, and self-correct across multi-step goals. | Enables autonomous execution of end-to-end business processes (e.g., credit risk analysis). |
| Advanced RAG | Evolution from basic vector search to GraphRAG and “Repository Intelligence.” | Provides high-fidelity context that prevents hallucinations in complex, domain-specific environments. |
| MLOps & Inference | Focus on deployment, “Inference Economics,” and cost-routing. | Optimizes for scalability and ROI, as inference now accounts for two-thirds of all AI compute. |

The Economic Impact: Demand and Salary Premiums

Industry data indicates that 80% of organizations now prioritize generative AI for operations, creating a massive talent gap.

- The Premium on Integration: Market leverage has shifted to engineers who can integrate and govern AI in production.

- The $140K+ Floor: In major tech hubs, mid-level AI Engineers specializing in System Orchestration and MLOps consistently command base salaries exceeding $140,000, with total compensation scaling significantly higher for those managing agentic architectures.

Why Shift from Model-Centric to System-Centric?

In 2026, the competitive advantage of a model is ephemeral. As frontier models achieve parity, architecture delivers the leverage that individual prompts cannot. A system-centric approach utilizes modular designs to handle enterprise-level logic, ensuring that the final output is a result of orchestrated processes rather than a single, “lucky” generation.

The Multi-Agent Advantage

A core pillar of this roadmap is the move toward multi-agent systems. A single long-context prompt is limited by linear reasoning and “lost in the middle” retrieval issues; multi-agent loops outperform it by enabling:

- Specialization: Individual agents focus on discrete tasks (e.g., a “Validator” agent checking a “Coder” agent’s output).

- Parallel Execution: Multiple agents working simultaneously to reduce latency.

- Context Preservation: State is managed across agents, preventing window overflow and “forgetting” in complex workflows.
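
The specialization and parallel-execution points above can be sketched in plain Python with `asyncio`. This is a minimal illustration under stated assumptions, not a framework: `coder_agent` and `validator_agent` are hypothetical stand-ins for LLM-backed agents, and the Validator's narrow check is simply whether the generated code parses.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Result:
    task: str
    code: str
    approved: bool


async def coder_agent(task: str) -> str:
    """Hypothetical Coder agent; a real one would call an LLM."""
    await asyncio.sleep(0.01)  # stands in for model latency
    return f"def solve():\n    return {task!r}\n"


async def validator_agent(code: str) -> bool:
    """Hypothetical Validator agent with one narrow, checkable job: does the code parse?"""
    try:
        compile(code, "<coder-output>", "exec")
        return True
    except SyntaxError:
        return False


async def handle_task(task: str) -> Result:
    code = await coder_agent(task)
    approved = await validator_agent(code)  # specialization: a second agent checks the first
    return Result(task, code, approved)


async def run_all(tasks: list[str]) -> list[Result]:
    # Parallel execution: independent tasks run concurrently, so wall-clock
    # latency tracks the slowest task rather than the sum of all tasks.
    return list(await asyncio.gather(*(handle_task(t) for t in tasks)))


if __name__ == "__main__":
    for result in asyncio.run(run_all(["parse csv", "dedupe rows"])):
        print(result.task, "approved" if result.approved else "rejected")
```

Because the Validator checks each output at a boundary, a failure surfaces as a specific rejected `Result` that traces back to one agent, rather than as a vaguely wrong answer from one large prompt.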

During Skilldential career audits, technical pivoters frequently struggled with hallucination-prone, monolithic prompts. In these case studies, shifting to a multi-agent loop reduced production errors by 65%, suggesting that system design is a primary driver of reliability.

Architectural Performance Matrix

To evaluate the technical feasibility of your deployment, compare the structural limits of monolithic prompting against decentralized orchestration. This matrix isolates the variables that dictate system stability and performance in a high-leverage environment.

| Aspect | Single Long-Context Prompt | Multi-Agent System |
| --- | --- | --- |
| Context Management | Prone to overflow and forgetting | Modular memory agents preserve state |
| Task Complexity | Linear workflows only | Parallel, adaptive coordination |
| Error Isolation | Hard to debug | Agent-specific tracing |
| Scalability | Bounded by a single context window | Distributed execution |

What Are High-Leverage Skills?

To achieve industry success in the 2026 market, your AI Career Roadmap must prioritize skills that offer the highest ROI. Following the 80/20 rule, these “High-Leverage Skills” represent the 20% of technical competencies that drive 80% of system performance and salary growth.

In a landscape where basic coding is increasingly automated, leverage is found in System Oversight and Reliability Engineering. These skills move you from being a user of AI to being an architect of AI systems.

Context Engineering (Advanced RAG)

Context engineering is the science of information density. Instead of overwhelming a model with data, you optimize token usage through Dynamic Retrieval and Semantic Chunking.

- The Leverage: Reducing latency and costs while increasing the accuracy of the model’s “grounded” knowledge.

- Key Concept: Moving beyond plain vector search to GraphRAG, allowing the system to understand relationships between data points, not just their semantic similarity.
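
As a rough illustration of semantic chunking, the sketch below groups consecutive sentences and starts a new chunk when adjacent sentences stop resembling each other. It assumes a toy bag-of-words `embed` function in place of a real embedding model, and the 0.2 threshold is arbitrary; both are placeholders you would tune.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real pipeline would call an embedding model here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(text: str, threshold: float = 0.2) -> List[str]:
    """Group consecutive sentences; start a new chunk when the topic shifts."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: List[List[str]] = []
    for sentence in sentences:
        # Compare with the previous sentence; low similarity signals a topic boundary.
        if chunks and cosine(embed(chunks[-1][-1]), embed(sentence)) >= threshold:
            chunks[-1].append(sentence)
        else:
            chunks.append([sentence])
    return [" ".join(chunk) for chunk in chunks]
```

Fixed-size chunking cuts wherever the character count runs out; splitting where similarity drops keeps each chunk about one topic, so the retriever can send fewer, denser tokens.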

AI Evaluation (LLM-as-a-Judge)

The greatest bottleneck in AI production is trust. High-leverage engineers build automated Evaluation Frameworks that use one LLM to critique another.

- The Leverage: Replacing manual “vibe checks” with repeatable, quantitative metrics for truthfulness, toxicity, and adherence to logic.

- The Impact: Mitigating hallucinations by creating a self-correcting feedback loop before the output reaches the end user.
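
A minimal LLM-as-a-Judge harness, assuming the judge is any callable that takes a prompt string and returns text (so you can plug in whichever model client you use). The rubric wording and the `SCORE:` reply format are illustrative assumptions. Judge scores are repeatable rather than strictly deterministic; a fixed rubric and a low sampling temperature help keep them stable.

```python
import re
from typing import Callable

# Illustrative rubric; the "SCORE:" reply format is an assumption, not a standard.
JUDGE_PROMPT = (
    "You are grading an answer for faithfulness to the context.\n"
    "Context: {context}\n"
    "Answer: {answer}\n"
    'Reply with "SCORE: <number from 0 to 1>" followed by one sentence of reasoning.'
)


def judge_answer(context: str, answer: str, llm: Callable[[str], str]) -> float:
    """Ask a judge model to grade another model's answer; returns a score in [0, 1]."""
    reply = llm(JUDGE_PROMPT.format(context=context, answer=answer))
    match = re.search(r"SCORE:\s*(\d+(?:\.\d+)?)", reply)
    if match is None:
        return 0.0  # fail closed: an unparseable verdict never counts as a pass
    return min(max(float(match.group(1)), 0.0), 1.0)


if __name__ == "__main__":
    def fake_llm(prompt: str) -> str:
        # Stand-in for a real model client call.
        return "SCORE: 0.9 The answer is supported by the context."

    print(judge_answer("Paris is the capital of France.", "The capital is Paris.", fake_llm))
```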

Autonomous Loops (Agentic Planning)

The hallmark of a senior AI Engineer is the ability to build systems that don’t just “complete a prompt” but “complete a goal.”

- The Leverage: Implementing Plan-Execute-Correct cycles where the system can identify its own failures and retry with a different strategy.

- Technical Requirement: Mastering state management and persistent memory to handle long-running, multi-step tasks.
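
The Plan-Execute-Correct cycle can be sketched as a loop over a small state object. Everything here is hypothetical: `plan`, `execute`, and `check` are callables you would back with LLM calls or tests, and `AgentState` lives in memory, whereas a long-running agent would persist it (for example, to a database) between steps.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class AgentState:
    goal: str
    failures: List[str] = field(default_factory=list)  # kept so the planner can avoid repeats
    result: Optional[str] = None


def run_goal(
    goal: str,
    plan: Callable[[AgentState], Optional[str]],
    execute: Callable[[str, str], str],
    check: Callable[[str], bool],
    max_steps: int = 5,
) -> AgentState:
    """Plan-Execute-Correct: pick a strategy, run it, verify, and re-plan on failure."""
    state = AgentState(goal)
    for _ in range(max_steps):
        strategy = plan(state)            # Plan: choose a strategy not yet known to fail
        if strategy is None:
            break                         # the planner has nothing left to try
        output = execute(goal, strategy)  # Execute
        if check(output):                 # Correct: only accept verified output
            state.result = output
            break
        state.failures.append(strategy)   # record the failure and loop back to planning
    return state
```

For example, a planner might try a direct answer first and fall back to chunk-then-summarize once the direct attempt appears in `state.failures`.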

How to Build a Proof-of-Competence Portfolio?

To secure a position as an MLOps Lead or AI Agent Architect, your AI Engineering Roadmap must culminate in a “Proof-of-Competence” portfolio that goes beyond basic API calls. In the 2026 market, hiring managers prioritize candidates who have solved the “Day 2” problems of AI: state management, error handling, and deployment at scale.

The most effective strategy is to prioritize one mega-project over ten superficial tutorials. This project should function as a living demonstration of your ability to architect, evaluate, and deploy a complex system.

The Anchor Project: Autonomous Research-to-Publication Engine

Build an end-to-end agentic system using the GPT Researcher framework or LangGraph. This project is high-leverage because it forces you to solve real-world technical constraints.

Architecting the Multi-Agent Loop

Instead of a single prompt, design a system where specialized agents collaborate:

- Researcher Agent: Scrapes and filters web data for relevance.

- Synthesis Agent: Summarizes findings using advanced RAG and semantic chunking.

- Editor Agent: Reviews the summary for technical accuracy and tone.

- Publisher Agent: Automatically formats and deploys the content via API to a CMS.
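
The four-agent loop above can be sketched as functions that read a shared state and return only the keys they change, roughly the shape LangGraph uses for its nodes, but written in plain Python so it runs without dependencies. Each agent body is a hypothetical placeholder, and `cms.example.com` is not a real endpoint.

```python
from typing import Any, Dict

State = Dict[str, Any]


def researcher(state: State) -> State:
    # Hypothetical: a real agent would scrape the web; here we filter canned results.
    results = [
        "GraphRAG links entities across documents.",
        "Sponsored: buy our GPUs today!",
    ]
    return {"sources": [r for r in results if not r.startswith("Sponsored")]}


def synthesizer(state: State) -> State:
    # Stand-in for RAG-backed summarization.
    return {"summary": " ".join(state["sources"])}


def editor(state: State) -> State:
    # Stand-in for an accuracy and tone review; approves any non-empty summary.
    return {"approved": bool(state["summary"])}


def publisher(state: State) -> State:
    # Hypothetical CMS endpoint; a real agent would POST the article here.
    slug = str(state["topic"]).lower().replace(" ", "-")
    return {"published_url": f"https://cms.example.com/posts/{slug}"}


def run_pipeline(topic: str) -> State:
    state: State = {"topic": topic}
    for agent in (researcher, synthesizer, editor):
        state.update(agent(state))  # each agent returns only the keys it changes
    if state["approved"]:
        state.update(publisher(state))  # only publish what the Editor approved
    return state
```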

Technical Integration & MLOps

To demonstrate mastery for senior roles, you must move the project off your local machine:

- Deployment: Host the engine on the AWS Free Tier (using Lambda or ECS) to prove you understand cloud infrastructure.

- Observability: Integrate a tracing tool (like LangSmith or Arize Phoenix) to monitor agent “thought processes” and latency.

- Evaluation: Implement an “LLM-as-a-Judge” step where a secondary model scores the research output for factual groundedness before publication.
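
For the Lambda option, a minimal entry point might look like the following. It assumes the function sits behind an API Gateway proxy integration (so the request arrives as a JSON string in `event["body"]`), and `run_engine` is a hypothetical placeholder for your agent pipeline.

```python
import json
from typing import Any, Dict


def run_engine(topic: str) -> Dict[str, Any]:
    """Hypothetical placeholder for the multi-agent research pipeline."""
    return {"topic": topic, "status": "queued"}


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Entry point for AWS Lambda behind an API Gateway proxy integration."""
    try:
        body = json.loads(event.get("body") or "{}")
        topic = body["topic"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return {
            "statusCode": 400,
            "body": json.dumps({"error": "expected a JSON body with a 'topic' field"}),
        }
    result = run_engine(topic)
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(result),
    }
```

Lambda invocations are capped at 15 minutes, so for long research runs a common pattern is to have the handler enqueue work and let a container on ECS run the agents.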

Why This Validates Your Experience

For an MLOps Lead role—which often asks for 5+ years of production experience—this project acts as a technical proxy. It proves you can:

- Manage long-running state (avoiding memory leaks in agent loops).

- Optimize inference costs (selecting the right model for the right task).

- Handle non-deterministic failures (implementing retries and self-correction).
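
Handling non-deterministic failures usually starts with retries. A minimal sketch, assuming transient errors show up as `TimeoutError` or `ConnectionError` (adjust `retry_on` for your client library): exponential backoff with full jitter, so parallel agents that fail together do not retry in lockstep. The `sleep` parameter exists only to make the function testable.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def with_retries(
    fn: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry transient failures with exponential backoff and full jitter."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == attempts:
                raise  # out of retries: surface the original error
            # Full jitter: wait a random time in [0, base_delay * 2^(attempt-1)].
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
    raise ValueError("attempts must be at least 1")
```

Only wrap idempotent steps this way; retrying the Publisher agent's CMS call, for instance, could post the same article twice unless the request carries a deduplication key.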

2026 MLOps Tool Selection Matrix

To fulfill the AI Engineering Roadmap’s promise of industry-standard rigor, use this comparison of the 2026 MLOps landscape. This table distinguishes between tools optimized for the agile experimentation of startups and the governance-heavy requirements of the enterprise.

| Tool | Best For | Strategic Category | Key Differentiator for 2026 |
| --- | --- | --- | --- |
| LangSmith / LangSmith Studio | Startups | LLMOps / Tracing | Rapid Prototyping: Visual “Playground” to test agentic reasoning before writing a single line of production code. |
| MLflow 3.x | Mixed stacks | General MLOps | Ecosystem Agnostic: Manages traditional ML (scikit-learn) and GenAI (LLMs) workflows in a single platform. |
| Weights & Biases (W&B) Weave | R&D teams | Experiment Tracking | Research Velocity: High-fidelity tracing and visualization of LLM calls and “hallucination drift,” alongside W&B’s experiment tracking for model training. |
| Arize Phoenix | Enterprise | Observability / Eval | RAG Specialist: Native “LLM-as-a-Judge” metrics designed to catch retrieval failures in multi-billion token environments. |
| ZenML | DevOps/SRE | Pipeline Orchestration | Infrastructure Agility: Treats agentic tasks as versioned pipelines, making it easy to move from local dev to Kubernetes. |
| Kubeflow / Vertex AI | Scale | Managed Infrastructure | Single Control Plane: Ideal for Global 2000 companies merging VM and container worlds on a unified cloud backend. |

Decision Framework: Which should you choose?

- The “80/20” Startup Choice: Start with LangSmith. It offers the fastest path to “Proof of Competence” for agentic workflows with minimal infrastructure overhead.

- The “Technical Pivot” Choice: Use MLflow. If you are transitioning from a traditional engineering role, MLflow builds on your existing knowledge of the ML lifecycle while adding 2026-standard LLM tracing.

- The “Enterprise Compliance” Choice: Deploy Arize Phoenix. In regulated industries, the ability to provide an audit trail for why an agent made a specific decision is the highest-leverage requirement.

SOP: Integrating Arize Phoenix for Agentic Observability

To finalize the AI Engineering Roadmap, here is the Standard Operating Procedure (SOP) for integrating Arize Phoenix into your research-to-publication mega-project. This ensures your portfolio demonstrates “Day 2” production rigor—specifically observability and automated evaluation.

Environment Initialization

Before launching your multi-agent system, initialize the Phoenix collector to capture OpenInference-compliant traces.

- Install Dependencies:

```shell
pip install arize-phoenix openinference-instrumentation-langchain opentelemetry-sdk
```

- Launch Collector:

```python
import phoenix as px

session = px.launch_app()  # Runs a local instance at http://localhost:6006
```

Instrumentation of Agentic Loops

To demonstrate full-stack mastery, you must wrap your agentic workflows in Tracing Spans. This allows an MLOps Lead to see exactly where an agent’s “thought process” failed or where RAG retrieval returned irrelevant context.

- Auto-Instrumentation:

Use the `register` function to automatically capture the LLM, chain, and retriever calls made through every installed OpenInference instrumentor (here, the LangChain one). Call it before your agents start running.

```python
from phoenix.otel import register

tracer_provider = register(project_name="Research-to-Publication", auto_instrument=True)
```

Implementation of “LLM-as-a-Judge” Evals

A portfolio is only “high-leverage” if it includes automated quality gates. Use Phoenix’s evaluation suite to score your research outputs before they reach the publication agent.

- Define Evaluation Criteria: Focus on Relevancy (RAG quality) and Hallucination (groundedness).

- Run Evals:

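```python
import phoenix as px
from phoenix.evals import HallucinationEvaluator, OpenAIModel, run_evals
from phoenix.session.evaluation import get_qa_with_reference

# Judge model that grades each output (reads OPENAI_API_KEY from the environment)
eval_model = OpenAIModel(model="gpt-4o")
hallucination_evaluator = HallucinationEvaluator(eval_model)

# Build input/output/reference rows from the traced spans in your Research project
queries_df = get_qa_with_reference(px.Client(), project_name="Research-to-Publication")

# run_evals returns one DataFrame of labels and scores per evaluator
[hallucination_df] = run_evals(
    dataframe=queries_df,
    evaluators=[hallucination_evaluator],
    provide_explanation=True,
)
```

Continuous Monitoring & Troubleshooting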

In your portfolio write-up, emphasize that “Proof of Competence” is shown in the Trace Log, not the final article.

- Identify Bottlenecks: Use the Phoenix UI to find spans with high latency (e.g., a scraper agent stuck on a slow site).

- Isolate Errors: If the “Synthesis Agent” produces a hallucination, use the trace to see if the “Researcher Agent” provided bad data (Retrieval Failure) or if the model ignored the context (Reasoning Failure).
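
Once spans and eval results are exported, the triage described above reduces to a couple of rules. This is a simplified sketch: the `Span` record, the 5-second latency threshold, and the 0.5 relevance cutoff are hypothetical choices, not Phoenix's schema or defaults. In practice the relevance score would come from a retrieval-relevance eval and the hallucination flag from the hallucination eval.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Span:
    name: str
    latency_ms: float


def slow_spans(spans: List[Span], threshold_ms: float = 5_000.0) -> List[Span]:
    """Spans above the latency threshold, slowest first."""
    return sorted(
        (s for s in spans if s.latency_ms > threshold_ms),
        key=lambda s: s.latency_ms,
        reverse=True,
    )


def diagnose(retrieval_relevance: float, hallucinated: bool, min_relevance: float = 0.5) -> str:
    """Attribute a Synthesis Agent hallucination to retrieval or to reasoning."""
    if not hallucinated:
        return "ok"
    if retrieval_relevance < min_relevance:
        return "retrieval_failure"  # the Researcher Agent supplied irrelevant context
    return "reasoning_failure"  # the context was relevant, but the model ignored it
```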

When presenting this in your portfolio, do not just show the code. Show a screenshot of a Phoenix trace where you identified a failure and corrected the prompt or the RAG chunking strategy. This proves you possess the 80/20 skill of AI Evaluation, which is far more valuable to employers in 2026 than simple prompt writing.

The Hiring Manager’s Cheat Sheet: Interviewing for 2026 AI Roles

To wrap up the 2026 AI Engineering Roadmap, here is the “Hiring Manager’s Cheat Sheet.” This section is designed to help you translate the technical projects you’ve built into the high-leverage language that secures $140K+ roles.

In 2026, technical leads aren’t looking for “Prompt Engineers”; they are looking for System Architects. Use these talking points to demonstrate that you understand the 80/20 of AI success.

When asked: “Why did you build a multi-agent system instead of a long-context prompt?”

- The High-Leverage Answer: “While long-context windows exist, they suffer from ‘lost-in-the-middle’ retrieval issues and linear reasoning limits. I used a multi-agent architecture to enable specialization, separating ‘Planning’ from ‘Execution.’ This reduced hallucinations by [your measured percentage] because each agent has a narrow, verifiable scope of work.”

- The Keywords: Modular reasoning, state management, parallel execution.

When asked: “How do you ensure your AI isn’t hallucinating in production?”

- The High-Leverage Answer: “I don’t rely on ‘vibe checks.’ I implemented an automated evaluation loop using Arize Phoenix and the ‘LLM-as-a-Judge’ pattern. Every output is scored for Faithfulness (grounded in context) and Relevancy before it reaches the end user. If a score falls below 0.8, the system triggers a self-correction loop.”

- The Keywords: Quantitative metrics, Faithfulness, LLM-as-a-Judge, Regression testing.
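
The gate described in that answer can be sketched as a loop: score each draft, release it only if it clears 0.8, and otherwise regenerate with feedback. `generate` and `score` are hypothetical callables (for instance, your Synthesis agent and a faithfulness judge), and the limit of 3 rounds is an arbitrary assumption.

```python
from typing import Callable, List, Optional, Tuple


def gated_generate(
    generate: Callable[[Optional[str]], str],
    score: Callable[[str], float],
    threshold: float = 0.8,
    max_rounds: int = 3,
) -> Tuple[Optional[str], List[float]]:
    """Release output only if its judge score clears the threshold; otherwise retry with feedback."""
    feedback: Optional[str] = None
    history: List[float] = []
    for _ in range(max_rounds):
        draft = generate(feedback)
        s = score(draft)  # e.g., a faithfulness score from an LLM judge
        history.append(s)
        if s >= threshold:
            return draft, history
        # Self-correction: tell the generator why the last draft failed.
        feedback = f"Previous draft scored {s:.2f} for faithfulness; use only the provided context."
    return None, history  # never passed the gate: escalate instead of shipping
```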

When asked: “How do you manage the costs of running these autonomous agents?”

- The High-Leverage Answer: “I use Inference Economics. I route simple intent-classification tasks to smaller, quantized models (like Llama-3-8B) and reserve high-reasoning models (like GPT-5) only for complex synthesis. This hybrid approach optimizes for Latency vs. Throughput without sacrificing system quality.”

- The Keywords: Model routing, token optimization, inference economics.
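
The routing rule in that answer could be as simple as the sketch below. The model identifiers, the set of "simple" task types, and the 2,000-token cutoff are all assumptions, and the 4-characters-per-token estimate is only a rough heuristic for English text.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute your provider's names.
SMALL_MODEL = "llama-3-8b-instruct"
LARGE_MODEL = "gpt-5"
SIMPLE_TASKS = {"classify_intent", "extract_fields", "detect_language"}


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


def approx_tokens(text: str) -> int:
    return len(text) // 4  # rough rule of thumb: about 4 characters per English token


def route(task_type: str, prompt: str, small_model_budget: int = 2_000) -> Route:
    """Send short, well-bounded tasks to the cheap model; everything else to the frontier model."""
    if task_type in SIMPLE_TASKS and approx_tokens(prompt) <= small_model_budget:
        return Route(SMALL_MODEL, "simple task within the small model's budget")
    return Route(LARGE_MODEL, "complex synthesis or long input")
```

In production the task type usually comes from a cheap classifier (often the small model itself), and the cutoffs are tuned against eval scores so the savings do not cost quality.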

When asked: “Tell us about a time an agent failed and how you fixed it.”

- The High-Leverage Answer: “In my research-to-publication project, the Synthesis Agent was failing on technical papers. By analyzing the OpenInference traces in Phoenix, I realized it was a ‘Retrieval Failure’—the RAG chunking was too small to maintain semantic meaning. I implemented Parent-Document Retrieval, which resolved the context gap and fixed the output.”

- The Keywords: Trace analysis, RAG optimization, Root-cause isolation.
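
Parent-document retrieval, as described in that answer, matches the query against small child chunks for precision but hands the model the full parent section for context. The sketch uses word overlap as a stand-in for embedding similarity and an unrealistically small 8-word child size so the example stays readable; LangChain's `ParentDocumentRetriever` packages the same idea.

```python
import re
from collections import Counter
from typing import Dict, List, Tuple


def split_children(text: str, size: int) -> List[str]:
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def _tokens(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(query: str, text: str) -> int:
    """Shared-word count; a stand-in for embedding similarity."""
    return sum((_tokens(query) & _tokens(text)).values())


class ParentDocumentIndex:
    """Search small child chunks for precision, but return whole parents for context."""

    def __init__(self, parents: Dict[str, str], child_size: int = 8) -> None:
        self.parents = parents
        self.children: List[Tuple[str, str]] = [
            (pid, child) for pid, text in parents.items() for child in split_children(text, child_size)
        ]

    def retrieve(self, query: str, k: int = 1) -> List[str]:
        ranked = sorted(self.children, key=lambda pc: overlap(query, pc[1]), reverse=True)
        parent_ids: List[str] = []
        for pid, _ in ranked:
            if pid not in parent_ids:
                parent_ids.append(pid)  # deduplicate: several children may share one parent
            if len(parent_ids) == k:
                break
        return [self.parents[pid] for pid in parent_ids]
```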

AI Engineering Roadmap FAQs

To master the AI Engineering Roadmap in 2026, you must clear the confusion between experimental “prompting” and production-grade “systems.” Below are the high-leverage answers to the most critical industry questions.

What is context engineering?

Context engineering is the discipline of dynamically assembling the right information, memory, and tools into an AI’s context window for a specific task.

The Shift: In 2026, we’ve moved beyond static “Prompt Engineering” to Dynamic Context Assembly.

Leverage: It prevents “context rot” and hallucinations by using GraphRAG and semantic retrieval to load only what is necessary, optimizing both token costs and reasoning accuracy.
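
Dynamic context assembly can be reduced to a budgeting problem: rank candidate items (memory, retrieved passages, tool descriptions) by relevance and pack them until the token budget is spent. This greedy sketch assumes relevance scores are already computed upstream and estimates tokens at roughly 4 characters each; both are simplifications.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ContextItem:
    source: str  # e.g. "memory", "retrieval", "tool_spec"
    text: str
    relevance: float  # assumed to be scored upstream (e.g. by a reranker)


def approx_tokens(text: str) -> int:
    return max(1, len(text) // 4)  # rough heuristic: about 4 characters per token


def assemble_context(items: List[ContextItem], budget: int) -> List[ContextItem]:
    """Greedily pack the most relevant items that still fit in the token budget."""
    chosen: List[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.relevance, reverse=True):
        cost = approx_tokens(item.text)
        if used + cost <= budget:  # skip items that would overflow; smaller ones may still fit
            chosen.append(item)
            used += cost
    return chosen
```

Items that do not fit are dropped rather than truncated, which keeps marginal material out of the window instead of filling it with half-passages.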

How do multi-agent systems work?

Multi-agent systems use an Orchestrator Loop to break a complex goal into specialized sub-tasks assigned to independent agents (e.g., Planner, Executor, Critic).

The Protocol: Agents exchange structured messages, such as JSON-based handoffs, and reach tools and data through standards like MCP (Model Context Protocol).

Leverage: They outperform single agents by enabling parallel execution and “specialized reasoning,” where a Critic agent can catch errors made by an Executor agent before the final output.
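
A JSON handoff between agents can be sketched as a small, validated message type routed by an orchestrator. This is a generic illustration, not an implementation of MCP (which standardizes how models reach tools and data over JSON-RPC); the field names, the `executor` and `critic` agents, and the `max_hops` guard are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Callable, Dict

REQUIRED_FIELDS = {"sender": str, "recipient": str, "task": str, "payload": dict}


@dataclass
class Handoff:
    sender: str
    recipient: str
    task: str
    payload: Dict[str, Any] = field(default_factory=dict)


def encode(msg: Handoff) -> str:
    return json.dumps(asdict(msg))


def decode(raw: str) -> Handoff:
    """Validate at the boundary so a malformed handoff fails loudly, not downstream."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("handoff must be a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected):
            raise ValueError(f"handoff field {name!r} missing or not {expected.__name__}")
    return Handoff(**{name: data[name] for name in REQUIRED_FIELDS})


# Hypothetical agents: each consumes one handoff and emits the next.
def executor(msg: Handoff) -> Handoff:
    return Handoff("executor", "critic", "review", {"draft": msg.payload["step"].upper()})


def critic(msg: Handoff) -> Handoff:
    approved = msg.payload["draft"].isupper()  # toy check standing in for an LLM critique
    return Handoff("critic", "orchestrator", "done", {"approved": approved})


AGENTS: Dict[str, Callable[[Handoff], Handoff]] = {"executor": executor, "critic": critic}


def orchestrate(first: Handoff, max_hops: int = 10) -> Handoff:
    msg = first
    for _ in range(max_hops):
        if msg.recipient == "orchestrator":
            return msg
        # Round-trip through JSON, as a real transport between agents would.
        msg = decode(encode(AGENTS[msg.recipient](msg)))
    raise RuntimeError("handoff chain exceeded max_hops")
```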

What is AI evaluation?

AI evaluation in 2026 replaces manual “vibe checks” with automated, metric-driven frameworks.

The Standard: We use LLM-as-a-Judge (using a stronger model like Claude 3.5 or GPT-5 to grade a smaller model) to compute quantitative metrics like Faithfulness and Contextual Precision.

Leverage: This allows for continuous regression testing—ensuring that a prompt update or model swap doesn’t break your system’s reliability in production.
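
Continuous regression testing can be as simple as comparing average eval scores before and after a change. The metric names, the 0.02 allowed drop, and the 0.8 floor below are assumptions to tune for your system.

```python
from statistics import mean
from typing import Dict, List


def summarize(case_scores: List[Dict[str, float]]) -> Dict[str, float]:
    """Average each metric over all test cases in an eval run."""
    metrics = case_scores[0].keys()
    return {m: mean(case[m] for case in case_scores) for m in metrics}


def regression_check(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    max_drop: float = 0.02,
    floor: float = 0.8,
) -> List[str]:
    """List the metrics where a prompt or model change made things worse."""
    problems = []
    for metric, old in baseline.items():
        new = candidate.get(metric)
        if new is None:
            problems.append(f"{metric}: missing from candidate run")
        elif new < floor:
            problems.append(f"{metric}: {new:.2f} is below the {floor:.2f} floor")
        elif old - new > max_drop:
            problems.append(f"{metric}: dropped by {old - new:.2f}")
    return problems
```

Wired into CI, a non-empty result from `regression_check` would fail the build, so a prompt edit or model swap that lowers faithfulness does not reach production unnoticed.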

Why build agentic workflows?

Agentic workflows are essential for 2026 roles because they move the “horizon of autonomy” from minutes to days.

The Benefit: They utilize Plan-Execute-Observe loops that handle the “messy reality” of software—recovering from API failures, self-correcting code, and maintaining state across long-running projects.

Leverage: This is the primary driver for high-leverage roles like AI Agent Architect, where the value is in the system’s ability to operate with minimal human intervention.

What tools are used for MLOps in 2026?

The 2026 MLOps stack is focused on observability and agentic lifecycle management.

Orchestration & Tracking: MLflow remains the workhorse for experiment tracking, while Weights & Biases (W&B) Weave has become the standard for tracing agentic “thought” loops.

Infrastructure: Docker provides the containerization and Kubernetes orchestrates those containers, enabling cloud-agnostic deployments on AWS or Azure.

Data Versioning: DVC and lakeFS ensure that your AI’s knowledge base (the RAG data) is as version-controlled as your code.
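
The idea behind versioning RAG data can be illustrated with a content-hash manifest: each document gets a hash, and the whole corpus gets one short version ID that changes whenever any document does. This standard-library sketch shows only the general principle; it does not reproduce the file formats of DVC or lakeFS.

```python
import hashlib
import json
from typing import Dict, List


def manifest(docs: Dict[str, str]) -> Dict[str, str]:
    """Map each document path to the SHA-256 of its content."""
    return {path: hashlib.sha256(text.encode("utf-8")).hexdigest() for path, text in sorted(docs.items())}


def corpus_version(docs: Dict[str, str]) -> str:
    """One short ID for the whole knowledge base; any edit changes it."""
    serialized = json.dumps(manifest(docs), sort_keys=True)
    return hashlib.sha256(serialized.encode("utf-8")).hexdigest()[:12]


def diff(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare two manifests to see what changed between knowledge-base versions."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }
```

Recording `corpus_version` alongside each eval run makes it possible to tell whether a quality change came from the code, the prompt, or the data.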

In Conclusion

In 2026, the delta between a junior “prompter” and a high-leverage AI Engineer is defined by system architecture. As models commoditize, your value is found in the orchestration of these reasoning engines into durable, self-healing frameworks.

The 80/20 Action Plan

To maximize your career ROI over the next six months, prioritize the following:

- Architecture Over Models: Treat the LLM as a replaceable component. Build modular systems that outlive the current frontier model version.

- Orchestration Over Prompting: Master multi-agent loops and agentic planning to solve non-linear, complex business problems that single prompts cannot touch.

- Evaluation Over “Vibes”: Implement production-grade MLOps with automated evaluation gates (LLM-as-a-Judge) to ensure reliability.

Your portfolio is your strongest leverage. Complete your GPT Researcher-based mega-project, integrating Advanced RAG and observability traces. Deploy the codebase to GitHub with a clean, technical README that explains your architectural choices—not just the code. This visibility is what transforms a “Technical Pivoter” into an MLOps Lead or AI Agent Architect.

Discover more from SkillDential | Path to High-Level Tech, Career Skills
