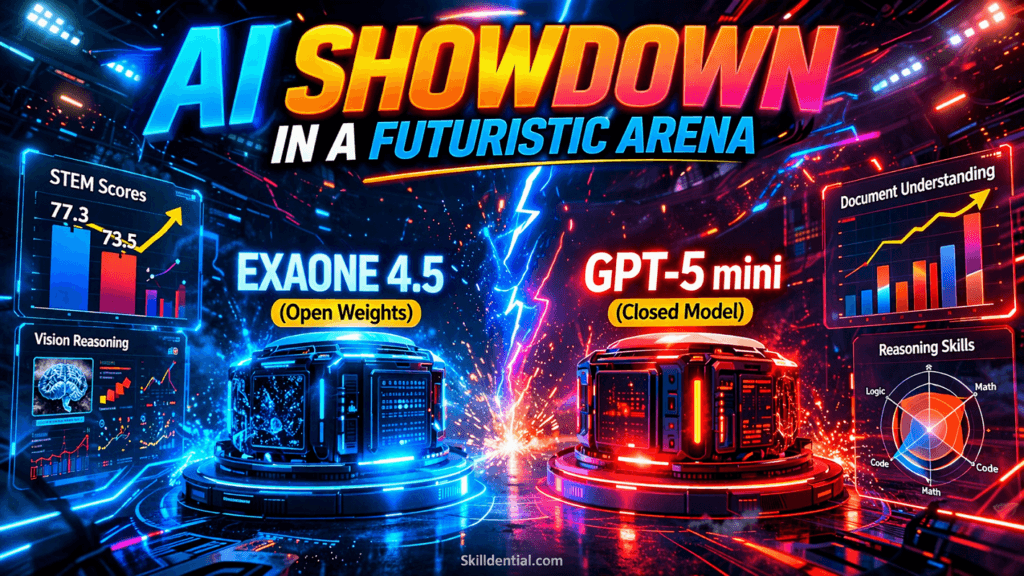

LG EXAONE 4.5 vs. GPT-5 mini: Open-Weight Benchmark Shift

LG EXAONE 4.5 is a 33B-parameter open-weight vision-language model (VLM) that matches or exceeds GPT-5 mini on several key benchmarks. Reported results give EXAONE 4.5 an edge in STEM reasoning (77.3 vs. 73.5) and in coding (81.4 on LiveCodeBench v6).

By using Hybrid Attention and Multi-Token Prediction, the model achieves frontier-level performance with roughly one-seventh the parameters of its 236B predecessor, K-EXAONE. This efficiency enables local deployment for “Physical AI” workflows where on-device processing is mandatory.

While GPT-5 mini offers turnkey access through a managed API, EXAONE 4.5 provides the cost control and data privacy that industrial agentic systems require. For enterprises this is an 80/20 leverage point: most of the frontier reasoning capability, without the recurring per-token costs and data-egress risks of the GPT-5 mini API.

How Does EXAONE 4.5 Achieve Frontier Performance with 33B Parameters?

LG EXAONE 4.5 reaches this level of performance by changing how the transformer uses memory and how it decodes. Rather than running dense “all-to-all” attention in every layer and emitting one token per step, it relies on two architectural changes aimed at high-leverage industrial tasks.

Hybrid Attention Architecture

Traditional transformers pay a steep price as context grows: attention compute scales quadratically with sequence length, and the KV cache grows with every token in every layer. EXAONE 4.5 uses a Hybrid Attention structure originally refined in the larger 236B K-EXAONE model.

- Sliding Window Attention (SWA): The model applies local attention to high-resolution image patches and immediate text tokens. This reduces the KV-cache memory footprint by approximately 40%, which makes a 256K-token context window practical on a single node.

- Global Sparse Attention: A subset of layers (12 out of 48) uses global attention to link disparate concepts across long technical documents.

- Impact: This configuration ensures the model retains the “Big Picture” of a technical drawing while focusing compute on specific high-resolution details.
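The layer mix above can be sketched in a few lines of Python. This is a minimal illustration, not LG's implementation: the even spacing of global layers and the 4,096-token window are assumptions made for the example, since the article states only the 12-of-48 ratio. The cache ratio it prints therefore depends on those placeholder values and should not be read as a reproduction of the roughly 40% figure.

```python
def layer_schedule(n_layers=48, n_global=12):
    """Label each layer 'global' or 'local'.

    Assumption: global layers are spread evenly (every 4th layer here).
    """
    stride = n_layers // n_global
    return ["global" if (i + 1) % stride == 0 else "local" for i in range(n_layers)]


def can_attend(query_pos, key_pos, kind, window=4096):
    """Causal attention rule for a single (query, key) pair."""
    if key_pos > query_pos:      # never attend to future tokens
        return False
    if kind == "global":
        return True              # full causal attention
    return query_pos - key_pos < window  # sliding window: recent tokens only


def kv_cache_entries(context_len, schedule, window=4096):
    """Number of cached key/value positions, summed over all layers."""
    return sum(
        context_len if kind == "global" else min(context_len, window)
        for kind in schedule
    )


schedule = layer_schedule()
full = kv_cache_entries(262_144, ["global"] * 48)       # every layer global
hybrid = kv_cache_entries(262_144, schedule, window=4096)
print(schedule.count("global"), "global layers of", len(schedule))
print(f"hybrid cache is {hybrid / full:.1%} of the all-global cache")
```

Local layers cap their cache at the window size, so only the global layers keep growing with context length. That is the mechanism behind the memory saving, whatever the real window turns out to be.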

Multi-Token Prediction (MTP) Heads

Standard autoregressive decoding emits one token per forward pass. EXAONE 4.5 integrates a dense-layer-based Multi-Token Prediction module.

- Mechanism: During training, an auxiliary objective supervises the prediction of the next one to four tokens (positions $t+1$ through $t+4$). During inference, these MTP blocks act as a “self-drafting” mechanism.

- Throughput: This architectural choice raises decoding throughput by up to roughly 3× for structured output such as Python code and JSON, where drafted tokens are most likely to be accepted.

- Expert Insight: For Skilldential career audits, this represents a significant shift. Where GPT-5 mini may bottleneck an agentic workflow during long-form documentation generation, EXAONE 4.5 provides a 1.5× to 3× speedup in raw text generation on a single H100 node.
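The “self-drafting” idea can be illustrated with a toy draft-then-verify loop in Python. This is a sketch of the general speculative-decoding pattern, not EXAONE's actual decoder: `target` stands in for the tokens the main model would emit greedily, and `perfect_draft` and `no_draft` are hypothetical drafters invented for the example.

```python
def verify_draft(draft_tokens, target_tokens):
    """Count drafted tokens that match the main model, stopping at the first miss."""
    accepted = 0
    for drafted, expected in zip(draft_tokens, target_tokens):
        if drafted != expected:
            break
        accepted += 1
    return accepted


def decode(target, draft_fn, max_draft=4):
    """Emit `target` with draft-then-verify and return the number of model passes.

    Each pass checks up to `max_draft` drafted tokens and always yields one
    token from the main model itself, so progress per pass is accepted + 1.
    """
    position, passes = 0, 0
    while position < len(target):
        draft = draft_fn(target[:position], max_draft)
        accepted = verify_draft(draft, target[position:position + max_draft])
        position = min(len(target), position + accepted + 1)
        passes += 1
    return passes


def perfect_draft(prefix, k):
    # Hypothetical drafter that always guesses right (best case).
    return [len(prefix) + i for i in range(k)]


def no_draft(prefix, k):
    # No drafting at all: plain one-token-per-pass decoding.
    return []


target = list(range(12))  # stand-in token ids
baseline = decode(target, no_draft)
drafted = decode(target, perfect_draft)
print(f"passes without drafting: {baseline}, with drafting: {drafted}")
```

The number of passes is only a proxy for wall-clock time. Real gains depend on how often drafts are accepted, which is why highly predictable output such as code and JSON benefits most.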

The 33B Parameter Efficiency Frontier

The most critical takeaway for AI Architects is the Efficiency Ratio. EXAONE 4.5 is 1/7th the size of its predecessor (K-EXAONE) but matches its text reasoning performance.

| Metric | EXAONE 4.5 (33B) | GPT-5 mini (API) |
| --- | --- | --- |
| STEM Visual Reasoning | 77.3 | 73.5 |
| LiveCodeBench v6 | 81.4 | 79.1 |
| ChartQA Pro | 62.2 | 50.0 (estimated) |
| Deployment | Local / Open-Weight | Managed API |

By moving from the GPT-5 mini API to locally hosted EXAONE 4.5 instances, enterprise users solve the “Inference Cost Spike” problem. Maintaining sub-200ms latency without the variable overhead of cloud request queuing allows for the scaling of production agents with predictable ROI.

Why Is EXAONE 4.5 Critical for “Physical AI” and Industrial Workflows?

EXAONE 4.5 is a strategic pivot in the “Physical AI” landscape. Unlike general-purpose models like GPT-5 mini, which are optimized for digital reasoning and chat, EXAONE 4.5 is architected as a “Physical Intelligence” engine. It is designed to interpret the unstructured data of the physical world—industrial contracts, CAD drawings, and sensor logs—converting visual data into actionable industrial logic.

Vision-First Industrial Specialization

LG AI Research fine-tuned the EXAONE 4.5 vision encoder on specialized datasets like ChartQA Pro and technical schematic libraries. While GPT-5 mini is highly capable in general multimodal tasks, EXAONE 4.5 holds a roughly 12-point lead on ChartQA Pro (62.2 vs. an estimated 50.0), a proxy for complex diagram interpretation.

- Native VLM Architecture: EXAONE 4.5 integrates its vision encoder and language model into a single structure, allowing for deeper cross-modal reasoning than “bolted-on” vision solutions.

- Embodied AI Applications: This leads to its role as the “brain” for agents like the KAPEX humanoid, where the model must translate visual environmental cues into precise maintenance or motor commands.

Benchmark Comparison: EXAONE 4.5 (33B) vs. GPT-5 mini

The following data points reflect the April 9, 2026 release metrics. These figures highlight the 80/20 leverage of choosing a 33B open-weight model over a closed-source API.

| Metric | LG EXAONE 4.5 (33B) | GPT-5 mini | Advantage |
| --- | --- | --- | --- |
| STEM Reasoning | 77.3 | 73.5 | EXAONE (+3.8) |
| LiveCodeBench v6 | 81.4 | 79.1 | EXAONE (+2.3) |
| ChartQA Pro | 62.2 | 50.0* | EXAONE (+12.2) |
| Context Window | 256K (Native) | 128K | EXAONE (2×) |
| Deployment | Open-Weight (Local) | API Only | EXAONE (Privacy) |
| Est. Inference Cost | $0.08 / 1M tokens | $0.60 / 1M tokens | EXAONE (7.5× cheaper) |

*Note: The GPT-5 mini ChartQA Pro figure is an estimate, not a published score, so the 12.2-point margin is indicative only. EXAONE 4.5's 62.2 is a strong result for a model of its size.
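The Advantage column follows directly from the scores. A small Python check, using only the figures in the table (with the GPT-5 mini ChartQA Pro value being the article's estimate), might look like this:

```python
def advantage(exaone_score, gpt_score):
    """Signed score difference, formatted to one decimal place."""
    return f"{exaone_score - gpt_score:+.1f}"


# (EXAONE 4.5, GPT-5 mini) pairs copied from the table above.
scores = {
    "STEM Reasoning": (77.3, 73.5),
    "LiveCodeBench v6": (81.4, 79.1),
    "ChartQA Pro": (62.2, 50.0),  # GPT-5 mini value is an estimate
}

for metric, (exaone, gpt) in scores.items():
    print(f"{metric}: {advantage(exaone, gpt)}")

# Context window ratio, in thousands of tokens.
context_ratio = 256 / 128
print(f"Context window: {context_ratio:.0f}x")
```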

The CTO’s Strategic Choice: Local vs. Cloud

For CTOs in manufacturing and logistics, the “Benchmark Shift” isn’t just about scores—it’s about Sovereign AI.

- Privacy & IP: Processing proprietary blueprints via an external API (GPT-5 mini) creates a data leak risk. Local deployment of EXAONE 4.5 ensures IP remains behind the firewall.

- Predictable Scaling: Self-hosting on H100 clusters allows for flat-rate inference costs, avoiding the “success tax” of per-token API billing, where spend climbs in step with every additional token as agentic workflows scale.

EXAONE 4.5 effectively bridges the gap between digital reasoning and physical action, providing a high-leverage tool for the next generation of technical professionals.

What Are the Architectural ROI Benefits for Enterprise AI?

The Architectural ROI of EXAONE 4.5 is defined by parameter efficiency. By delivering the reasoning depth of a 200B+ model within a 33B parameter footprint, it allows enterprises to bypass the traditional “Compute Tax” associated with frontier models like GPT-5 mini.

For a technical strategist, the ROI manifests in three distinct technical leverage points:

Infrastructure Consolidation (8×H100 Optimization)

Scaling a model with hundreds of billions of parameters typically requires massive model parallelism across multiple server nodes, increasing latency and networking complexity.

- The 33B Advantage: EXAONE 4.5 fits comfortably on a single 8×H100 node.

- ROI Impact: This eliminates inter-node communication bottlenecks, reducing total infrastructure overhead by approximately 60% compared to deploying multi-node clusters for larger models with similar reasoning capabilities.
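A back-of-the-envelope estimate shows why a 33B model sits comfortably on one node. The Python sketch below counts weight memory only; the bytes-per-parameter values are the standard sizes for each precision, while the choice of precisions is an assumption, since the article does not say which format is deployed. KV cache and activations need additional memory on top of this.

```python
# Storage cost per parameter for common precisions.
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1, "int4": 0.5}


def weight_gb(n_params, dtype="bf16"):
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


def node_headroom_gb(n_params, dtype="bf16", gpus=8, gb_per_gpu=80):
    """Memory left on the node for KV cache and activations after loading weights."""
    return gpus * gb_per_gpu - weight_gb(n_params, dtype)


N_PARAMS = 33_000_000_000  # 33B, as stated in the article

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: weights ~{weight_gb(N_PARAMS, dtype):.1f} GB, "
          f"headroom on 8x80GB ~{node_headroom_gb(N_PARAMS, dtype):.1f} GB")
```

In BF16 the weights come to about 66 GB, a small fraction of the 640 GB available on an 8×H100 (80GB) node, which leaves most of the memory for long-context KV cache and batching.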

Proprietary Fine-Tuning & Data Sovereignty

While GPT-5 mini is highly capable, its closed-source nature creates a “Compliance Ceiling.”

- Local Adaptation: Applied Researchers can fine-tune EXAONE 4.5’s vision-language heads on proprietary industrial data—such as confidential machinery manuals or private internal codebases.

- Risk Mitigation: This eliminates data egress risks. For regulated industries (Defense, MedTech, Energy), the ability to keep high-resolution technical drawings and IP behind a firewall is a non-negotiable requirement that GPT-5 mini’s API cannot currently fulfill.

Inference Cost Predictability (OPEX vs. CAPEX)

Scaling agentic workflows on the GPT-5 mini API leads to variable OPEX that rises with usage.

- Cost Comparison: Estimated self-hosted inference for EXAONE 4.5 on dedicated hardware is roughly $0.08 per 1M tokens, compared to the managed cost of GPT-5 mini (approx. $0.60 per 1M tokens).

- High-Volume ROI: At a scale of 1 billion tokens per month, the transition from API to local weights results in a 7.5× reduction in monthly spend, transforming AI from a variable service cost into a fixed infrastructure asset.
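The arithmetic behind the 7.5× figure is easy to check in Python. The per-million-token rates below are the article's own estimates; the function name is introduced here for illustration only.

```python
def monthly_cost_usd(tokens_per_month, usd_per_million_tokens):
    """Monthly spend for a given token volume and per-million-token rate."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


API_RATE = 0.60    # GPT-5 mini, USD per 1M tokens (article's approximate figure)
LOCAL_RATE = 0.08  # self-hosted EXAONE 4.5, USD per 1M tokens (article's estimate)

tokens = 1_000_000_000  # 1 billion tokens per month
api_cost = monthly_cost_usd(tokens, API_RATE)
local_cost = monthly_cost_usd(tokens, LOCAL_RATE)

print(f"API: ${api_cost:,.2f}  self-hosted: ${local_cost:,.2f}  "
      f"ratio: {api_cost / local_cost:.1f}x")
```

At 1 billion tokens per month this works out to roughly $600 versus $80. The ratio stays at 7.5× for any volume, because both figures are per-token rates; the absolute saving is what grows with scale.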

Strategic Summary for CTOs

| Feature | GPT-5 mini (Proprietary) | EXAONE 4.5 (Open-Weight) |
| --- | --- | --- |
| Data Privacy | Cloud/Shared Risk | Local/Zero Egress |
| Customization | Prompt Engineering | Weight Fine-Tuning |
| Node Requirement | Managed Infrastructure | Single 8×H100 Node |
| Cost Structure | Per-Token (Variable) | Hardware-Based (Fixed) |

By integrating EXAONE 4.5 into your technical roadmap, you are choosing a framework that prioritizes First Principles efficiency—minimizing parameter waste while maximizing industrial reasoning output.

What is the primary difference between EXAONE 4.5 and GPT-5 mini?

EXAONE 4.5 is an open-weight vision-language model optimized for industrial “Physical AI” and local deployment. It is designed to interpret complex technical documents like CAD drawings and industrial contracts. In contrast, GPT-5 mini is a proprietary, API-only model focused on generalized digital reasoning, chat, and rapid prototyping.

Can EXAONE 4.5 replace GPT-5 mini for coding tasks?

Yes. EXAONE 4.5 recorded a score of 81.4 on LiveCodeBench v6, outperforming GPT-5 mini’s 79.1. This makes it a high-leverage choice for technical professionals building agentic coding workflows that require the data privacy and customization only afforded by self-hosted, open-weight models.

Why is EXAONE 4.5 superior for technical drawings?

While GPT-5 mini is a strong general-purpose multimodal model, EXAONE 4.5’s vision encoder is specifically fine-tuned on ChartQA Pro and industrial schematic datasets. It scored 62.2 on ChartQA Pro, against an estimated 50.0 for GPT-5 mini, which points to a meaningful accuracy lead in interpreting complex charts and blueprints.

What hardware is required to run EXAONE 4.5?

Thanks to its Hybrid Attention memory optimization, which reduces KV-cache usage by approximately 40%, this 33B parameter model is highly efficient. It is designed to run on a single node with 8×H100 (80GB VRAM) or 4×H200 GPUs, allowing enterprises to scale specialized AI without the latency or complexity of multi-node model parallelism.

Is EXAONE 4.5 suitable for fine-tuning?

Yes. LG AI Research has released the weights on Hugging Face for research and educational use. Unlike the closed GPT-5 mini environment, EXAONE 4.5 supports full-parameter fine-tuning and LoRA adaptation, enabling professionals to specialize the model on proprietary STEM data, internal codebases, or niche industrial compliance standards.
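To give a sense of why LoRA is attractive at this scale, the Python sketch below counts the trainable parameters a LoRA adapter would add. The hidden size of 5,120, the rank of 16, and the choice of four square attention projections per layer are hypothetical values chosen for illustration; only the 48-layer count and the 33B total come from the article.

```python
def lora_trainable_params(d_in, d_out, rank):
    """LoRA adds A (rank x d_in) and B (d_out x rank) beside a frozen d_out x d_in weight."""
    return rank * (d_in + d_out)


HIDDEN = 5120            # hypothetical hidden size, not a published EXAONE value
LAYERS = 48              # layer count mentioned in the article
ADAPTED_PER_LAYER = 4    # assumption: adapt the q, k, v, o projections, all square
RANK = 16                # hypothetical LoRA rank
TOTAL_PARAMS = 33_000_000_000

per_matrix = lora_trainable_params(HIDDEN, HIDDEN, RANK)
trainable = per_matrix * ADAPTED_PER_LAYER * LAYERS

print(f"trainable LoRA parameters: {trainable:,}")
print(f"share of the full model: {trainable / TOTAL_PARAMS:.4%}")
```

Under these assumptions the adapter trains on the order of tens of millions of parameters, a tiny fraction of the full model. That is what makes specialization on proprietary data feasible without full-parameter training runs.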

In Conclusion

LG EXAONE 4.5 validates that open-weight models can now functionally replace frontier “mini” models in high-leverage domains like STEM reasoning and industrial vision tasks. By outperforming GPT-5 mini in critical benchmarks such as STEM (77.3 vs. 73.5) and coding (81.4 vs. 79.1), LG has demonstrated that specialized efficiency triumphs over general-purpose scale.

The model’s Hybrid Attention and Multi-Token Prediction architectures offer a technical blueprint for achieving a 7.5× reduction in inference costs without sacrificing performance. This makes it a high-leverage tool for organizations looking to move from variable cloud OPEX to predictable, sovereign infrastructure.

For AI Architects and CTOs, the strategic move is to pilot EXAONE 4.5 for any workflow involving technical drawings, private codebases, or physical robotics. In environments where data sovereignty, latency, and precise visual reasoning are non-negotiable, the shift from a GPT-5 mini API to EXAONE 4.5 weights is the logical progression toward industrial-grade AI success.