Why Most AI Content Systems Fail (The 80/20 Fix That Works)

An AI content system functions as a modular workflow designed to automate content production from structured inputs using chained prompts or no-code logic. Replacing manual, high-effort tasks with scalable outputs enables a “build once, scale forever” architecture. However, most practitioners hit a quality wall where results become generic and indistinguishable.

The primary reason an AI content system fails is an over-reliance on single, complex prompts. This approach lacks the necessary nuance to maintain industry-standard rigor. Following the Pareto Principle, 80% of content quality comes from the 20% of effort spent on precise data curation and structural mapping before a single word is generated.

To ensure a high-signal AI content system, you must establish a single source of truth—such as a technical transcript or proprietary dataset—as the foundational input.

Success requires implementing a human-in-the-loop (HITL) review process to inject unique intellectual property and “proof of work” into the automated cycle. An AI content system only scales effectively when governed by these high-leverage constraints, ensuring that speed never compromises technical depth.

Why Do Most AI Content Systems Fail?

Most implementations of an AI content system collapse because they are built on the fallacy of the “magic prompt.” When a system relies on a single, massive instruction to handle research, structuring, and drafting simultaneously, it inevitably hits a quality wall. The resulting outputs lack the technical rigor and unique brand voice required for industry authority, effectively turning high-volume generation into low-value digital noise.

The Logic of Systemic Collapse

The root cause of this failure is the absence of modular layers. Without a MECE (Mutually Exclusive, Collectively Exhaustive) structure, the AI content system becomes a “black box” where errors are difficult to isolate or fix. This forces creators back into manual editing loops—a high-friction process that kills the primary goal of scalability and ROI.

Diagnostic: Why the System Breaks

- Single-Prompt Dependency: Attempting to bypass the workflow by packing context and formatting into one step, leading to hallucination and genericism.

- Poor Data Inputs: Garbage-in, garbage-out. Without a high-signal source of truth, the AI content system has no proprietary IP to leverage.

- Linear vs. Modular Processing: Treating content production as a straight line rather than a series of interconnected, automated nodes.

What Is the 80/20 Fix for AI Content Systems?

The 80/20 fix for an AI content system centers on the principle that 80% of your output quality is dictated by just 20% of the workflow: Data Structuring and Input Curation. By shifting focus away from the “perfect prompt” and toward a robust architectural framework, you move from ad-hoc generation to a sustainable “build once, scale forever” model.

The Three-Layer Architecture

To stabilize an AI content system, you must decouple the workflow into three distinct, MECE (Mutually Exclusive, Collectively Exhaustive) layers:

The Input Layer (The Source of Truth)

The system is only as strong as its foundational data. Instead of asking AI to “brainstorm,” feed the AI content system a high-signal source of truth, such as a technical transcript, a white paper, or proprietary research. This ensures the output is grounded in unique IP rather than generic training data.

The Processing Layer (Modular Chaining)

Replace single-prompt dependency with prompt chaining or no-code logic gates (e.g., Make.com or Zapier). This layer breaks the production into micro-tasks:

- Node A: Extract key themes and technical entities.

- Node B: Map entities to a structural outline.

- Node C: Execute drafting based on brand-specific style guidelines.
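The three nodes above can be sketched as a small prompt chain. This is a minimal illustration, not a reference implementation: `LLM`, `run_chain`, the node functions, and the prompt wording are all hypothetical names chosen for this example, and `echo_llm` is a stand-in so the chain can run without any model provider.

```python
from typing import Callable

# Hypothetical type: any function that takes a prompt string and returns text.
LLM = Callable[[str], str]


def extract_entities(llm: LLM, source: str) -> str:
    """Node A: pull key themes and technical entities from the source."""
    return llm(f"List the key themes and technical entities in:\n{source}")


def build_outline(llm: LLM, entities: str) -> str:
    """Node B: map the extracted entities to a structural outline."""
    return llm(f"Arrange these entities into an article outline:\n{entities}")


def write_draft(llm: LLM, outline: str, style_guide: str) -> str:
    """Node C: draft from the outline under the brand style guide."""
    return llm(f"Style guide:\n{style_guide}\n\nDraft an article from:\n{outline}")


def run_chain(llm: LLM, source: str, style_guide: str) -> dict[str, str]:
    """Run the nodes in order and keep every intermediate result."""
    entities = extract_entities(llm, source)
    outline = build_outline(llm, entities)
    draft = write_draft(llm, outline, style_guide)
    return {"entities": entities, "outline": outline, "draft": draft}


def echo_llm(prompt: str) -> str:
    """Stand-in model for local testing: returns the first line of the prompt."""
    return prompt.splitlines()[0]
```

Returning the intermediate results alongside the draft is the point of the design: when an output is weak, you can see which node produced the problem instead of re-running one opaque prompt.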

The Review Layer (Human-in-the-Loop)

Review should take roughly 20% of total workflow time, concentrated at strategic checkpoints. Human intervention should not be used for “fixing” bad prose, but for verifying technical accuracy and injecting “Proof of Work.” In a functional AI content system, the human acts as the Editor-in-Chief, ensuring the final deliverable meets industry-standard rigor before distribution.

By implementing this architecture, a single high-quality document can be atomized into multiple formats—articles, social threads, and newsletters—without losing the core signal. This technical pivot transforms the AI content system from a volatile experiment into a reliable engine for career and business growth.

How the Input Layer Works

The efficiency of an AI content system is decided before the first prompt is ever executed. The input layer functions as the system’s “Source of Truth”: it ingests a small amount of high-signal data from a primary technical document, audit transcript, or proprietary dataset. Centralizing this data removes the need for repetitive context-setting, a common cause of prompt bloat and hallucination.

MECE Data Structuring

To transform raw information into a scalable asset, the AI content system must categorize inputs using a MECE (Mutually Exclusive, Collectively Exhaustive) framework. This ensures that every piece of data is utilized without redundancy:

- Facts: Objective data points, industry statistics, and verified technical specifications.

- Insights: The “so-what” factor—expert analysis and strategic conclusions derived from the facts.

- Unique IP: Proprietary frameworks (e.g., your specific 80/20 models), “Proof of Work” signals, and brand-specific terminology.
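One way to make the three categories concrete is a small container that files each item under exactly one category and reports violations. This is a sketch under assumptions: the class name `SourceOfTruth`, the category keys, and the two checks are hypothetical, and an empty category is only a rough proxy for "collectively exhaustive."

```python
from dataclasses import dataclass, field

# Hypothetical category keys mirroring Facts, Insights, and Unique IP.
CATEGORIES = ("fact", "insight", "unique_ip")


@dataclass
class SourceOfTruth:
    """Holds curated items, each intended to sit under exactly one category."""

    items: dict[str, list[str]] = field(
        default_factory=lambda: {c: [] for c in CATEGORIES}
    )

    def add(self, category: str, text: str) -> None:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        self.items[category].append(text.strip())

    def overlaps(self) -> set[str]:
        """Items filed under more than one category (breaks mutual exclusivity)."""
        seen: set[str] = set()
        dupes: set[str] = set()
        for texts in self.items.values():
            for text in set(texts):
                (dupes if text in seen else seen).add(text)
        return dupes

    def missing(self) -> list[str]:
        """Categories with no items yet (a rough exhaustiveness check)."""
        return [c for c in CATEGORIES if not self.items[c]]
```

Running `overlaps()` and `missing()` before any prompt executes gives a cheap gate: the chain starts only when both come back empty.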

Centralizing the Database

Rather than feeding raw text directly into an LLM, a high-leverage AI content system utilizes structured databases like Airtable or Google Sheets as a headless CMS.

- Avoids Prompt Bloat: By referencing specific cells or records via API, you provide the AI with targeted context rather than overwhelming it with a 5,000-word “info dump.”

- Drives Output Variance: Because the input is structured, the processing layer can pull the same data to generate a technical article and a high-signal social thread simultaneously, ensuring 80% of the quality remains consistent across all channels.
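The "targeted context" idea can be sketched without touching any API. In this illustration a plain dictionary stands in for one Airtable or Google Sheets record; the function name `build_context`, the field names, and the `max_chars` limit are assumptions made for the example.

```python
def build_context(
    record: dict[str, str], fields: list[str], max_chars: int = 1200
) -> str:
    """Assemble a prompt context from selected fields of one record.

    Only the named fields are included, so unrelated columns never reach
    the model. A hard character limit guards against prompt bloat.
    """
    parts = []
    for name in fields:
        value = record.get(name, "").strip()
        if value:  # skip fields that are absent or empty
            parts.append(f"{name}: {value}")
    context = "\n".join(parts)
    if len(context) > max_chars:
        raise ValueError(f"context is {len(context)} chars; limit is {max_chars}")
    return context
```

Failing loudly when the limit is exceeded, rather than truncating silently, pushes the fix back to the input layer where the curation happens.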

Technical Advantage

This architectural constraint ensures that the AI content system remains anchored to your specific expertise. When you control the input layer with this level of rigor, you move from “generating content” to “orchestrating authority.”

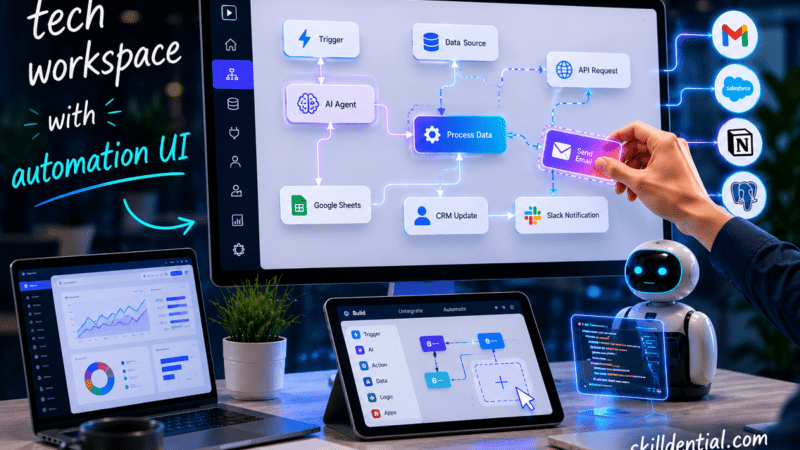

The Processing Layer: Orchestrating the Logic Gate

The processing layer is the engine of the AI content system. It functions as a unified logic gate, transforming structured inputs into high-leverage outputs. By utilizing prompt chaining or no-code automation platforms like Make or Zapier, you eliminate the “black box” of single-prompt generation and replace it with a transparent, modular pipeline.

Three-Step Modular Workflow

To achieve industrial-scale output without sacrificing technical depth, the AI content system follows a sequential chain:

Step 1: Node Extraction

Instead of asking the AI to “write an article,” the first node in the chain is instructed to extract specific “insight nodes” from your structured database. For example, if the input is an audit transcript, Node A identifies five unique strategic pivots. This ensures the AI content system is working with granular, verified components rather than vague concepts.

Step 2: Stylistic Injection

Genericism is a failure of the system, not the model. In this step, the system applies a dedicated “Voice Layer”: a system prompt built from exactly five high-performing brand samples. By separating the “what” (Step 1) from the “how” (Step 2), the AI content system can apply consistent branding across diverse topics without drifting into AI-typical prose.
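A minimal sketch of that separation, assuming the voice layer is a system prompt assembled from the brand samples. The function names and prompt wording are hypothetical, and the role/content message list follows a shape many chat APIs accept; adjust it to whatever your provider expects.

```python
def build_voice_prompt(samples: list[str], required: int = 5) -> str:
    """Build the voice-layer system prompt from a fixed number of samples."""
    if len(samples) != required:
        raise ValueError(f"expected {required} samples, got {len(samples)}")
    numbered = "\n\n".join(
        f"Sample {i}:\n{s.strip()}" for i, s in enumerate(samples, 1)
    )
    return (
        "Match the tone, rhythm, and vocabulary of the samples below. "
        "Do not copy their content.\n\n" + numbered
    )


def style_messages(voice_prompt: str, insight_nodes: list[str]) -> list[dict[str, str]]:
    """Keep the 'how' in the system message and the 'what' in the user message."""
    points = "\n".join(f"- {n}" for n in insight_nodes)
    return [
        {"role": "system", "content": voice_prompt},
        {"role": "user", "content": "Write up these points:\n" + points},
    ]
```

Because the voice prompt is built once and reused, the same five samples govern every topic, which is what keeps the style constant while the insight nodes change.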

Step 3: Multi-Format Execution

The final step in the processing layer is the multi-format expansion. Because the data is already node-based and styled, the system can concurrently push to different API endpoints:

- Blog Node: Expands insight nodes into a structured technical article.

- Social Node: Compresses insight nodes into high-signal threads.

- Email Node: Distills the article into a concise, actionable newsletter.
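The concurrent expansion can be sketched with a thread pool. This is an illustration under assumptions: `FORMAT_PROMPTS`, `fan_out`, and the instruction wording are hypothetical, the `llm` argument is any function that maps a prompt to text, and, as a simplification, the email format here works from the insight nodes directly rather than from the finished article.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical per-format instructions; one entry per output node.
FORMAT_PROMPTS = {
    "blog": "Expand these insight nodes into a structured technical article:",
    "social": "Compress these insight nodes into a short thread:",
    "email": "Distill these insight nodes into a concise newsletter:",
}


def fan_out(llm: Callable[[str], str], nodes: list[str]) -> dict[str, str]:
    """Send the same insight nodes to every format prompt in parallel."""
    body = "\n".join(f"- {n}" for n in nodes)
    with ThreadPoolExecutor(max_workers=len(FORMAT_PROMPTS)) as pool:
        futures = {
            name: pool.submit(llm, f"{instruction}\n{body}")
            for name, instruction in FORMAT_PROMPTS.items()
        }
        # result() blocks until each call finishes and re-raises its errors.
        return {name: future.result() for name, future in futures.items()}
```

Threads suit this step because each call spends most of its time waiting on a network response; adding a format means adding one dictionary entry.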

The Scaling Multiplier

This modular approach yields a massive efficiency gain. A single 1,000-word technical transcript can be atomized into five distinct assets in under 10 minutes. By controlling each link in the chain, the AI content system ensures that every output maintains the original “Proof of Work” signal, effectively scaling your authority while minimizing the manual effort typically required to fix generic AI outputs.

How the Review Layer Ensures Rigor

The final component of a high-leverage AI content system is the Review Layer. By implementing human-in-the-loop (HITL) checkpoints strategically, you prevent the system from drifting into genericism without creating a manual bottleneck. This layer is designed to consume only 20% of the total workflow time, shifting the human role from “writer” to “editor-in-chief.”

Strategic Checkpoints

To maintain maximum signal, the AI content system requires two specific intervention points:

- IP Fidelity Scan: Occurring immediately post-processing, this check ensures the output adheres to the “Source of Truth” and correctly applies proprietary frameworks.

- Technical Depth Polish: A final verification to ensure the content meets industry-standard rigor and “Proof of Work” requirements before distribution.

Using tools like custom checklists or Grammarly Enterprise allows teams to flag the “AI footprint” (repetitive syntax or vague adjectives) and eliminate it systematically. This ensures the AI content system delivers high-signal content that scales without compromise.
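A custom checklist of this kind can start as a few lines of code. The sketch below flags two of the signals named above, repeated sentence openers and vague adjectives; the word list, the threshold, and the function name `footprint_report` are assumptions for illustration, not a validated detector.

```python
import re
from collections import Counter

# Hypothetical starter list; replace with the words your own reviews flag.
VAGUE_WORDS = {"robust", "seamless", "cutting-edge", "powerful", "innovative"}


def footprint_report(text: str, opener_threshold: int = 2) -> dict[str, list[str]]:
    """Report repeated sentence openers and vague adjectives in a draft."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    openers = Counter(s.split()[0].lower() for s in sentences)
    repeated = sorted(w for w, n in openers.items() if n > opener_threshold)
    words = re.findall(r"[a-z][a-z-]*", text.lower())
    vague = sorted(set(words) & VAGUE_WORDS)
    return {"repeated_openers": repeated, "vague_words": vague}
```

A report like this does not replace the human checkpoint; it shortens it by pointing the reviewer at the passages most likely to need attention.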

Evidence from Skilldential Audits

In audits conducted via Skilldential.com, we analyzed the workflows of solopreneurs and lean technical teams. Before optimization, these users averaged 40+ hours per week on manual rewrites of generic AI outputs.

By implementing the 80/20 layered AI content system, these teams achieved:

- 300% Output Velocity: Moving from sporadic posting to consistent, multi-channel distribution.

- 75% Quality Uplift: Measured by engagement metrics and lead quality, driven by the injection of unique IP.

System Comparison: Legacy vs. Layered

The following table breaks down the technical and strategic differences between standard AI usage and a structured AI content system.

| Factor | Single-Prompt Systems | 80/20 Layered Systems |
| --- | --- | --- |
| Input Quality | Unstructured, multi-source | Single source of truth (e.g., Airtable) |
| Output Formats | 1 (e.g., article only) | 5+ (threads, newsletters, posts) |
| Scalability | Manual fixes cap at 5x/week | 20x/week automated |
| Quality Risk | 70% genericism rate | <10% with HITL |
| Setup Time | 2 hours/prompt | 4 hours once, infinite scale |

Implementing this AI content system allows founders and technical professionals to transition from “content creators” to “system architects,” ensuring their career growth is backed by a scalable, high-leverage engine.

Building Your No-Code AI Content System

A high-leverage AI content system is increasingly built using an “Agentic No-Code Stack.” In 2026, the transition from simple “If This, Then That” rules to autonomous AI agents allows founders to build sophisticated content engines that reason through data rather than just moving it.

The 2026 Industry-Standard Stack

To minimize custom code and maximize “build once, scale forever” utility, the following stack is recommended:

- Airtable (The Source of Truth): Acts as the system’s “brain.” With built-in AI Field Agents and “Omni” conversational building, Airtable now categorizes, researches, and enriches technical data directly within your database records.

- Make or Zapier (The Orchestration Layer): Handles the complex multi-step logic. While Zapier is ideal for simple app connections, Make remains the choice for visual, high-logic prompt chaining.

- OpenAI/Anthropic (The Intelligence Layer): The LLMs provide the raw processing power for your chained prompts.

- Slack/Email (The HITL Checkpoint): Automated notifications trigger human review before any content goes live.

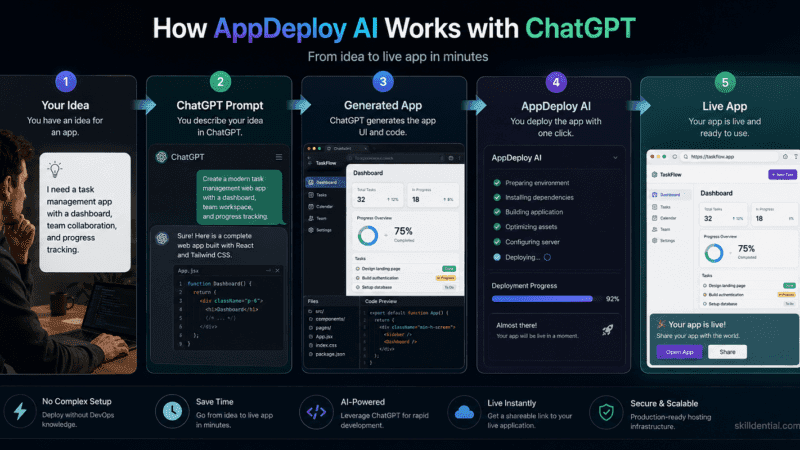

The Automated Workflow Architecture

The following sequence transforms a single technical input into a multi-channel asset engine:

- Trigger: A new row is added to Airtable (e.g., a “Career Audit” transcript).

- Modular Extraction: An Airtable Field Agent or Make module extracts MECE nodes (Facts, Insights, IP).

- Prompt Chain Execution:

  - Node 1: Generates a high-signal technical blog post.

  - Node 2: Converts the blog into a 10-post social thread.

  - Node 3: Summarizes the core “80/20 fix” for a newsletter.

- Review Loop: The AI content system sends a Slack notification with draft links for human-in-the-loop (HITL) approval.

- Multi-Channel Publish: Upon approval, the system pushes the content to WordPress, LinkedIn, and Beehiiv.
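The review loop and publish step amount to an approval gate: nothing ships unless a human has marked it approved. The sketch below models only that gate. `Draft`, `Status`, `review`, and `publish_approved` are hypothetical names, and the publisher callables are placeholders for whatever pushes content to each channel in your stack.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    channel: str
    body: str
    status: Status = Status.PENDING


def review(draft: Draft, approved: bool) -> Draft:
    """Record the human reviewer's decision on a draft."""
    draft.status = Status.APPROVED if approved else Status.REJECTED
    return draft


def publish_approved(
    drafts: list[Draft], publishers: dict[str, Callable[[str], None]]
) -> list[str]:
    """Publish only approved drafts; pending and rejected ones are skipped."""
    published = []
    for draft in drafts:
        if draft.status is not Status.APPROVED:
            continue
        publishers[draft.channel](draft.body)
        published.append(draft.channel)
    return published
```

Defaulting every draft to `PENDING` means a missed notification results in nothing being published, which is the safer failure mode for an automated pipeline.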

Strategic ROI

Founders implementing this specific no-code AI content system report significant competitive advantages:

- 5x ROI in 30 Days: By eliminating the $10k+ costs of custom-coded internal tools.

- Compressed Payback Periods: Payback on automation investment has dropped from 18 months to 5–10 months due to pre-built AI connectors.

- Operational Leverage: Lean teams are achieving a 1.7x to 3x increase in output velocity, handling 100x the content volume without additional headcount.

This architectural shift ensures that your AI content system is not just a tool for writing, but a scalable infrastructure for long-term career and business growth.

What defines an AI content system?

An AI content system is a modular architecture that automates content production through integrated workflows rather than isolated, ad-hoc prompting. Unlike standard AI usage, which relies on unpredictable outputs, a system utilizes chained logic and structured databases to ensure scalability. The key traits are modularity (separating tasks) and proprietary IP injection (using your unique expertise as the foundation).

Why do single prompts fail in AI content systems?

Single prompts fail because they attempt to handle research, structuring, and stylistic formatting in one “black box” operation, which inevitably leads to genericism and hallucination.

This approach amplifies input flaws rather than filtering them. Technical research from the MIT Initiative on the Digital Economy indicates that while model upgrades contribute to performance, the primary driver of high-signal output is how users structure their data and refine their interactions, rather than the model’s raw power.

What is the 80/20 rule in AI content systems?

The 80/20 rule (Pareto Principle) states that 80% of content quality is derived from the 20% of effort spent on data structuring and curation. In a high-leverage AI content system, success is built on a specific sequence:

- 20% Effort: Curating a single “Source of Truth” (e.g., an audit transcript or technical doc).

- 80% Result: Automated processing that converts that source into multiple high-fidelity formats.

How does human-in-the-loop (HITL) fit AI content systems?

The human-in-the-loop (HITL) process acts as the final gate for technical rigor and brand voice. In a functional AI content system, human intervention is restricted to two strategic checkpoints, one immediately after processing and one just before distribution, to avoid creating a manual bottleneck. For maximum scalability, this review should occupy no more than 20% of the total workflow time.

Can no-code tools replace custom AI content systems?

Yes. For founders and lean marketing teams, no-code platforms like Make or Zapier serve as effective orchestration layers. These tools allow you to chain different AI models together, moving data from a structured input (like Airtable) through various “logic gates” to produce MECE (Mutually Exclusive, Collectively Exhaustive) outputs without needing a custom software engineering team.

In Conclusion

The transition from manual content creation to an automated AI content system is a shift from being a writer to being a systems architect. By applying high-leverage frameworks like the Pareto Principle and MECE, technical founders and career strategists can decouple their time from their output volume.

- The 80/20 Leverage Point: Focus your efforts on the 20% of the workflow that dictates quality—data curation. A single, high-signal source of truth is the only way to avoid the “quality wall.”

- Modular Dominance: Layered systems (Input-Processing-Review) consistently outperform single-prompt attempts by isolating errors and allowing for technical precision.

- The Build Once, Scale Forever Advantage: Modern no-code stacks (Airtable, Make, Zapier) allow lean teams to build industrial-grade AI content systems without custom software engineering.

- The Rigor Guardrail: Human-in-the-loop (HITL) review remains the essential filter to inject unique IP and “Proof of Work,” preventing your brand from disappearing into digital noise.

Next Action: The 30-Day Velocity Pilot

To implement your own AI content system, audit one high-frequency workflow today:

- Select a Source: Pick a recent technical transcript or internal audit.

- Architect the Layers: Map your input to an Airtable row and set up a 3-step prompt chain in Zapier or Make.

- Benchmark Success: Measure your output velocity and engagement metrics over the next 30 days.

By treating content as an engineering problem rather than a creative chore, you build a high-leverage asset that scales your influence and career indefinitely.