Top 9 AI Orchestration Platforms to Automate Your Workflow

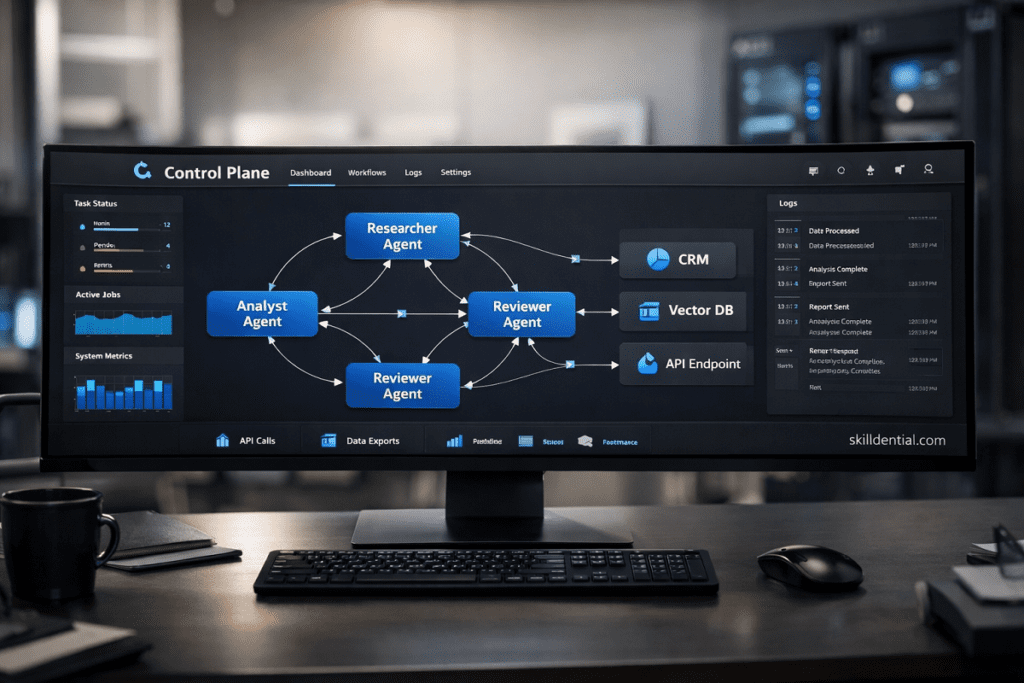

An AI orchestration platform is the critical infrastructure layer that coordinates models, data pipelines, and external tools into a unified, reliable system. Unlike standalone chatbots, an AI orchestration platform centralizes prompt management, long-term memory, and tool-calling logic to eliminate fragmented automations and manual scripting.

These platforms provide the control plane for monitoring performance, managing API costs, and enforcing security policies across diverse agentic workflows. An AI orchestration platform is an effective way to scale repeatable, multi-step processes that require consistency and technical auditability.

In the 2026 enterprise landscape, value has shifted from individual model capabilities to the coordination of the entire AI stack.

What is an AI orchestration platform and why does it matter in 2026?

An AI orchestration platform is the technical control plane that coordinates models, tools, data, and human steps into a single, trustworthy system. In 2026, the competitive advantage has shifted. Because most organizations have access to frontier models with similar reasoning capabilities, the primary differentiator is no longer the model itself.

The value lies in how effectively an AI orchestration platform integrates these components into a cohesive workflow. In practical terms, an AI orchestration platform manages the movement of requests through models, specialized agents, vector stores, and APIs while preserving critical state and memory. This is why orchestration is frequently described as the conductor of the enterprise AI stack.

It ensures that data, decisions, and actions flow in a deterministic order with built-in governance and observability. For the 2026 professional, the core objective has shifted from selecting the best LLM to designing high-leverage systems where multiple agents and humans collaborate with minimal errors and predictable latency.

How does an AI orchestration platform actually work?

An AI orchestration platform works by defining and executing workflows that connect models, tools, and data stores, typically structured as Directed Acyclic Graphs (DAGs) or State Machines. Unlike simple automation, an AI orchestration platform maintains a persistent control plane to track state across multiple steps, manage asynchronous failures, and provide “Human-in-the-Loop” (HITL) interfaces for high-stakes decision points.
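The execution model described above can be sketched as a small state machine in plain Python. This is a minimal illustration, not any platform's API: the `StateMachine` and `WorkflowState` classes, the step names, and the 10,000 approval threshold are all hypothetical. Each step returns the name of the next step, and a step can pause the run for human approval, which is the HITL pattern mentioned above.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class WorkflowState:
    """Shared state persisted across steps (hypothetical schema)."""
    data: dict = field(default_factory=dict)
    history: List[str] = field(default_factory=list)  # executed step names, for auditing
    awaiting_approval: bool = False


# A step reads/updates the state and returns the next step's name (None = finished).
Step = Callable[[WorkflowState], Optional[str]]


class StateMachine:
    def __init__(self, steps: Dict[str, Step], start: str):
        self.steps = steps
        self.start = start

    def run(self, state: WorkflowState, resume_from: Optional[str] = None) -> Optional[str]:
        """Run until the workflow finishes (returns None) or a step requests
        human approval (returns the step to resume from)."""
        current = resume_from or self.start
        while current is not None:
            next_step = self.steps[current](state)
            state.history.append(current)
            if state.awaiting_approval:
                return next_step
            current = next_step
        return None


def extract(state: WorkflowState) -> Optional[str]:
    state.data["amount"] = 12_000  # stand-in for a data-extraction agent
    return "review"


def review(state: WorkflowState) -> Optional[str]:
    # Conditional routing: high-value items require a human decision (HITL gate).
    if state.data["amount"] > 10_000 and not state.data.get("approved"):
        state.awaiting_approval = True
    return "publish"


def publish(state: WorkflowState) -> Optional[str]:
    state.data["published"] = True
    return None


machine = StateMachine({"extract": extract, "review": review, "publish": publish}, start="extract")
state = WorkflowState()
resume_at = machine.run(state)          # pauses after "review"
state.data["approved"] = True           # a human approves the pending item
state.awaiting_approval = False
machine.run(state, resume_from=resume_at)
print(state.history)  # ['extract', 'review', 'publish']
```

Because the state object carries both the data and the step history, the run can pause indefinitely at the approval gate and resume exactly where it stopped.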

Core Architectural Components

- Workflow Engine: The central logic layer that routes tasks between agents and models based on conditional state. It ensures that the output of one agent (e.g., a data extractor) is correctly formatted and passed as the input for the next (e.g., a technical analyst).

- System Connectors: Pre-built integration layers to vector databases, enterprise APIs, and legacy software. These allow the AI orchestration platform to read from and write to operational data sources without custom middleware.

- State and Context Management: A dedicated layer that persists the “memory” of long-running processes. This ensures that an agent remains consistent across hours or days of execution by retrieving historical context from structured stores or embeddings.

- Governance and Observability Layer: A centralized audit log that records prompts, model outputs, token costs, and user interactions. This layer is essential for maintaining compliance and performing root-cause analysis when a multi-agent workflow deviates from its objective.
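At its core, a governance layer is a stream of structured records. This sketch assumes a hypothetical `AuditRecord` schema and made-up per-token prices (`PRICE_PER_1K_TOKENS`); real platforms define their own fields and bill at provider-specific rates. It shows how logging prompts, outputs, identities, and token counts per step turns per-run cost and root-cause analysis into simple queries.

```python
import json
from dataclasses import asdict, dataclass
from typing import List

# Hypothetical prices per 1K tokens; real rates vary by provider and model.
PRICE_PER_1K_TOKENS = {"large-model": 0.01, "small-model": 0.001}


@dataclass
class AuditRecord:
    run_id: str
    step: str
    user_id: str   # identity accountable for the automated action
    model: str
    prompt: str
    output: str
    tokens: int

    @property
    def cost_usd(self) -> float:
        return self.tokens / 1000 * PRICE_PER_1K_TOKENS[self.model]


class AuditLog:
    def __init__(self) -> None:
        self.records: List[AuditRecord] = []

    def record(self, rec: AuditRecord) -> None:
        self.records.append(rec)

    def total_cost(self, run_id: str) -> float:
        return sum(r.cost_usd for r in self.records if r.run_id == run_id)

    def steps_for(self, run_id: str) -> List[str]:
        """Ordered step trail for root-cause analysis of a single run."""
        return [r.step for r in self.records if r.run_id == run_id]

    def export_jsonl(self) -> str:
        # One JSON object per line, a common format for shipping to a log store.
        return "\n".join(json.dumps(asdict(r)) for r in self.records)


log = AuditLog()
log.record(AuditRecord("run-1", "extract", "alice", "small-model", "Extract fields...", '{"score": 80}', 2000))
log.record(AuditRecord("run-1", "analyze", "alice", "large-model", "Analyze trends...", "Trend: up", 1500))
print(round(log.total_cost("run-1"), 4))  # 0.017
```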

By using this architecture, a professional can coordinate specialized agents to function as a unified team. For example, a “Researcher Agent” can fetch and clean raw data, an “Analyst Agent” can identify trends, and a “Writer Agent” can generate a final report, all while the AI orchestration platform enforces technical guardrails and cost limits at every stage.
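The Researcher → Analyst → Writer hand-off can be sketched as a sequential pipeline with a spend limit checked after every stage. The agent functions are hypothetical stand-ins that return fixed strings and costs; in a real deployment each would call a model or tool, and the cost would come from reported token usage.

```python
from typing import Callable, List, Tuple

# An agent takes the previous stage's output and returns (output, cost_in_usd).
Agent = Callable[[str], Tuple[str, float]]


class BudgetExceeded(Exception):
    """Raised when a run exceeds its cost guardrail."""


def run_pipeline(agents: List[Tuple[str, Agent]], task: str, budget_usd: float) -> str:
    spent = 0.0
    payload = task
    for name, agent in agents:
        output, cost = agent(payload)
        spent += cost
        if spent > budget_usd:
            # Cost guardrail: stop before any further stages run once the budget is exceeded.
            raise BudgetExceeded(f"{name} raised spend to ${spent:.2f} (limit ${budget_usd:.2f})")
        if not output.strip():
            # Minimal output check before handing off to the next agent.
            raise ValueError(f"{name} returned empty output")
        payload = output
    return payload


# Hypothetical stand-in agents; real ones would call models or tools.
def researcher(task: str) -> Tuple[str, float]:
    return f"raw data for: {task}", 0.05


def analyst(data: str) -> Tuple[str, float]:
    return f"trends from ({data})", 0.20


def writer(analysis: str) -> Tuple[str, float]:
    return f"Report: {analysis}", 0.10


pipeline = [("researcher", researcher), ("analyst", analyst), ("writer", writer)]
print(run_pipeline(pipeline, "Q3 churn", budget_usd=1.00))
# Report: trends from (raw data for: Q3 churn)
```

With `budget_usd=0.30`, the same pipeline stops at the writer stage (cumulative spend $0.35) instead of producing a report.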

The Three Strategic Tiers of AI Orchestration Platforms

For selection clarity, the top 9 platforms are segmented into three functional tiers that are mutually exclusive and collectively exhaustive (MECE) for most use cases. This categorization reduces tool fatigue and aligns selection with your team’s technical depth.

Tier 1: Pro-Code AI Orchestration Frameworks

These are code-first frameworks designed for engineers building deeply integrated, production-grade systems where logic must be version-controlled and highly customizable.

- LangGraph (Python/JS): A widely adopted framework for stateful, cyclic orchestration. It uses an explicit graph abstraction where nodes represent agents or tools and edges define the state transition logic. It is optimized for complex, multi-step workflows requiring durable execution and “time-travel” debugging.

- Microsoft Agent Framework (successor to AutoGen and Semantic Kernel): Reaching Release Candidate status in early 2026, this is the unified evolution of Microsoft’s agentic tools. It combines AutoGen’s conversational multi-agent patterns with Semantic Kernel’s enterprise-grade type safety and session management.

- CrewAI: A role-based orchestration framework that abstracts the complexity of multi-agent communication. It is ideal for teams building “virtual departments” where agents are assigned specific personas, goals, and backstories to collaborate autonomously.

Tier 2: Visual Low-Code AI Workflow Builders

These platforms prioritize visual design and rapid iteration. They provide significant leverage to Operations and Product teams without requiring heavy backend engineering.

- n8n: A powerful visual builder that bridges the gap between traditional automation and agentic AI. It supports over 400 integrations and enables self-hosted deployments, making it a favorite among technical teams prioritizing data privacy and custom JavaScript nodes.

- Vellum: An enterprise-focused platform designed for building, evaluating, and governing AI agents. It excels at “Prompt-to-Build” workflows and provides built-in quantitative evaluation metrics to track agent accuracy and cost before deployment.

- MindStudio: A platform focused on “AI-native” application development. It handles the underlying complexities of token management, long-term memory, and model routing by design, allowing users to build complex agents through a unified visual interface.

Tier 3: Ecosystem-Native Agent Platforms

These are orchestration capabilities embedded within cloud hyperscalers. They offer the strongest governance, identity management, and data controls for organizations already locked into a specific cloud provider.

- Azure AI Foundry Agent Service: Officially reaching General Availability in March 2026, this is a redesigned API and runtime for enterprise-grade agents. It integrates natively with Microsoft 365, SharePoint, and Entra ID, providing a secure “Control Plane” for observable and governed agent workflows.

- Amazon Bedrock AgentCore: A managed AWS service that utilizes “Custom Orchestration” logic (such as ReWOO or ReAct patterns) to execute multi-step tasks. It is the go-to for AWS-native teams requiring high scalability and deep integration with S3, Lambda, and Bedrock Guardrails.

- Google Vertex AI Agent Builder: A Google-native toolset for low-code agent creation. It leverages Google’s specialized Gemini models and provides tight integration with BigQuery and Google Search, optimized for data-heavy enterprise automation.

Tier 1 offers maximum flexibility, Tier 2 offers maximum speed, and Tier 3 offers maximum compliance. Most organizations starting their AI journey in 2026 find the 80/20 balance in Tier 2 for internal tools, then move to Tier 1 for core product features.

How should different roles choose an AI orchestration platform?

Selecting an AI orchestration platform is a high-leverage decision that should be based on your team’s technical depth and the specific “moat” you are building. The following MECE matrix maps the three tiers to functional roles and their primary pain points.

| Role / Objective | Recommended Tier | Representative Platforms | Core Strategic Leverage |
| --- | --- | --- | --- |
| Technical Founder / CTO | Tier 1: Pro-Code | LangGraph, Microsoft Agent Framework, CrewAI | Fine-grained control of state machines, custom graph-level routing, and native integration into existing CI/CD pipelines. |
| Product Manager | Tier 2: Low-Code | Vellum, MindStudio, n8n | Rapid prototyping, real-time evaluation of model accuracy, and built-in cost-monitoring dashboards for unit economics. |
| RevOps / BizOps | Tier 2 → 3 Hybrid | n8n, Zapier Central, Vellum | High-velocity automation using pre-built connectors to CRM/ERP systems while maintaining a visual logic flow for non-engineers. |
| Enterprise IT Leader | Tier 3: Ecosystem | Azure AI Foundry, AWS Bedrock AgentCore | Centralized governance, native IAM (Identity and Access Management) integration, and audit-ready logs for SOC 2 compliance. |
| Security & Compliance | Tier 3 (or Self-Hosted) | Bedrock Agents, Self-hosted n8n/LangGraph | Strict control over data residency, VPC (Virtual Private Cloud) deployment options, and automated policy enforcement. |

Strategic Implementation Note

This framework prevents “tool fatigue” by forcing a selection based on Governance and Technical Depth first. Once a tier is selected, the specific platform choice should be dictated by your existing cloud ecosystem (e.g., choosing Azure AI Foundry if you are a Microsoft shop) and your team’s existing skill sets.

- Choose Tier 1 if the orchestration logic is the product.

- Choose Tier 2 if the orchestration logic supports the product or business operation.

- Choose Tier 3 if the orchestration logic must adhere to strict enterprise guardrails.

By following this 80/20 approach, organizations can avoid the “Frankenstein” architecture of disconnected scripts and move toward a unified AI orchestration platform that scales with their complexity.

How does an AI orchestration platform reduce cycle time and error rates?

An AI orchestration platform reduces cycle time by automating complex, multi-step sequences that previously required manual handoffs. It simultaneously lowers error rates through the use of structured state and automated verification loops.

For instance, utilizing the custom orchestration features within Amazon Bedrock AgentCore allows engineers to define explicit verification steps before an agent produces a final output, catching logic errors early in the execution flow.
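The general pattern of verifying an agent's output before accepting it can be sketched in plain Python. This does not use any AWS API; the JSON schema (`account`, `score`), the `generate` callable, and the retry count are assumptions for illustration. Failed checks are fed back into the next attempt so the model can self-correct, and the run fails loudly rather than emitting unverified output.

```python
import json
from typing import Callable, List

REQUIRED_FIELDS = {"account", "score"}  # hypothetical output schema


def verify(raw: str) -> List[str]:
    """Return a list of problems; an empty list means the output passed."""
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(parsed, dict):
        return ["output is not a JSON object"]
    problems = [f"missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(parsed))]
    score = parsed.get("score")
    if score is not None and not (isinstance(score, (int, float)) and 0 <= score <= 100):
        problems.append("score must be a number from 0 to 100")
    return problems


def generate_with_verification(generate: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Call the model, verify its output, and retry with feedback on failure."""
    feedback = ""
    problems: List[str] = []
    for _ in range(max_attempts):
        raw = generate(prompt + feedback)
        problems = verify(raw)
        if not problems:
            return json.loads(raw)
        # Feed concrete errors back so the next attempt can self-correct.
        feedback = "\nFix these issues: " + "; ".join(problems)
    raise RuntimeError(f"output failed verification after {max_attempts} attempts: {problems}")


# Stand-in model that gets the schema wrong once, then right.
responses = iter(['{"account": "Acme"}', '{"account": "Acme", "score": 87}'])
result = generate_with_verification(lambda p: next(responses), "Score this lead as JSON")
print(result)  # {'account': 'Acme', 'score': 87}
```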

Real-World Performance Metrics

The impact of a unified AI orchestration platform is most visible in high-volume operational workflows:

- Lead Triage and Sales Operations: In technical career audits at Skilldential, RevOps teams frequently spend hours on manual lead triage, switching between CRMs, email clients, and scoring spreadsheets. Implementing an orchestrated, multi-agent workflow (one agent normalizes raw data, a second scores the leads, and a third drafts outreach sequences) reduced manual triage time by approximately 40%. This systematic approach surfaces high-value accounts earlier in the sales cycle.

- Routing Accuracy: When combined with ecosystem-native logging, these orchestrated workflows reduced misrouted leads by an estimated 30% due to the consistent application of logic rules that do not suffer from human fatigue.

- Enterprise Supply Chain and Onboarding: Multi-agent orchestration within the Azure AI Foundry Agent Service is optimized for long-running, stateful processes. By maintaining context across multiple days or weeks and coordinating specialized agents, organizations have reported forecast accuracy reaching the 95% range for structured tasks. This is achieved by cross-referencing multiple “Expert Agents” over a shared state, ensuring that data inconsistencies are flagged before they impact the final forecast.
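The lead triage workflow described above (normalize, score, route) can be sketched with deterministic rules, which is what makes the routing repeatable. The field names, scoring thresholds, and queue names are hypothetical, and the outreach-drafting agent is omitted because it would call a model. In practice the scoring step might itself be a model call, with deterministic routing rules wrapped around it.

```python
from dataclasses import dataclass

FREE_EMAIL_DOMAINS = ("@gmail.com", "@yahoo.com")  # illustrative, not exhaustive


@dataclass
class Lead:
    email: str
    company: str
    employees: int
    region: str


def normalize(raw: dict) -> Lead:
    """Agent 1 stand-in: clean raw CRM/form data into one consistent shape."""
    return Lead(
        email=raw["email"].strip().lower(),
        company=raw["company"].strip(),
        employees=int(str(raw.get("employees", "0")).replace(",", "")),
        region=raw.get("region", "unknown").strip().upper(),
    )


def score(lead: Lead) -> int:
    """Agent 2 stand-in: hypothetical scoring rules."""
    points = 0
    if lead.employees >= 1000:
        points += 50
    elif lead.employees >= 100:
        points += 25
    if not lead.email.endswith(FREE_EMAIL_DOMAINS):
        points += 20  # business email domain
    return points


def route(lead: Lead, points: int) -> str:
    """Deterministic routing: identical inputs always land in the same queue."""
    if points >= 60:
        return f"enterprise-{lead.region}"
    if points >= 30:
        return "mid-market"
    return "nurture"


lead = normalize({"email": " CTO@Acme.io ", "company": "Acme ", "employees": "1,200", "region": "emea"})
points = score(lead)
print(lead.email, points, route(lead, points))  # cto@acme.io 70 enterprise-EMEA
```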

The 80/20 of Automation Value

The primary leverage of an AI orchestration platform is not just speed; it is the transition from the probabilistic outputs of raw LLM calls to systems whose control flow is deterministic. By enforcing a “Control Plane” over the AI agents, the platform can handle the bulk of routine decision-making (the “80%”) autonomously while keeping every step auditable.

AI Orchestration Platforms vs. Traditional Automation (Zapier/Make)

Traditional automation tools like Zapier or Make are optimized for event-driven, deterministic workflows. They are highly effective for moving data between APIs when the logic is linear. However, these tools were not designed for deep reasoning, long context windows, or multi-agent collaboration.

The Technical Inflection Point

As users attempt to chain multiple LLM calls within legacy automation tools, they encounter three primary systemic failures:

- State and Context Fragmentation: Traditional tools treat each step as an isolated task. An AI orchestration platform manages context windows, recursive chunking, and persistent state centrally. This prevents “token truncation,” a common failure in legacy tools when processing long documents or multi-threaded conversations.

- Economic Inefficiency: Task-based pricing models in Zapier or Make become prohibitively expensive for iterative AI flows. An AI orchestration platform is architected for AI-heavy workloads, often optimizing model routing to reduce costs by using smaller models for sub-tasks.

- Linear Logic vs. Agentic Loops: Legacy tools struggle with cyclic logic, where an agent must go back a step to correct an error. AI orchestration platforms use state machines and cyclic graphs (rather than strictly linear or acyclic flows) to allow for autonomous self-correction and multi-agent “brainstorming.”
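One way an orchestration layer avoids truncating long documents is to split them into overlapping windows before sending them to a model. This minimal sketch uses simple sliding-window chunking (not the recursive chunking mentioned above) and treats whitespace-separated words as a stand-in for tokens; a real pipeline would count tokens with the target model's tokenizer.

```python
from typing import List


def chunk_text(text: str, max_tokens: int, overlap: int) -> List[str]:
    """Split text into overlapping windows of at most max_tokens "tokens".

    Whitespace-separated words stand in for tokens here; a real pipeline
    would count with the target model's tokenizer.
    """
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be >= 0 and smaller than max_tokens")
    words = text.split()
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        if start + max_tokens >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


doc = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_text(doc, max_tokens=4, overlap=1):
    print(chunk)
# w0 w1 w2 w3
# w3 w4 w5 w6
# w6 w7 w8 w9
```

The overlap means text near each boundary appears in two adjacent chunks, trading a few duplicated tokens for less context lost at the cut points.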

When to Transition to an AI Orchestration Platform

For teams currently stretching Zapier or Make with individual GPT modules, the transition to a dedicated AI orchestration platform is necessary when:

- Coordination Complexity: You require multi-agent collaboration or sophisticated “Human-in-the-Loop” (HITL) approval gates.

- Data Volume: You frequently hit token limits or experience degraded output quality due to poor context management over large datasets.

- Governance Requirements: You need centralized observability over prompts, latency, and costs to meet enterprise audit standards.

The 80/20 Comparison

| Feature | Traditional Automation (Zapier/Make) | AI Orchestration Platform |
| --- | --- | --- |
| Logic Type | Deterministic (Linear) | Probabilistic (Iterative/Agentic) |
| State Management | Stateless (Task-to-Task) | Stateful (Persistent Memory) |
| Primary Strength | 6,000+ API Integrations | Reasoning, Tool-Calling, & Handoffs |
| Failure Handling | Simple Retries | Self-Correction & Reasoning Loops |

This comparison highlights that the AI orchestration platform is not a replacement for Zapier, but a superior “Control Plane” for intelligence. In a professional 2026 stack, Zapier acts as the “hands” (connectivity), while the orchestration platform acts as the “brain” (logic).

What governance, security, and audit features should enterprises demand?

Enterprise-grade AI orchestration platform adoption must align with established IT security frameworks. In 2026, the shift from “chatbots” to “autonomous agents” has forced a move toward Agentic Identity and Just-in-Time (JIT) Authorization. A robust platform is no longer just a workflow builder; it is a specialized security and compliance layer.

Core Governance and Audit Capabilities

- Audit-Ready Traceability: Platforms must provide a full execution log including the raw prompt, the specific retrieved context (RAG), the tool-call arguments, and the model’s final output. These logs should be mapped to unique user identities to ensure accountability for every automated action.

- Agent Identity and IAM Integration: Every agent within the AI orchestration platform should have a machine identity tied to enterprise directory services (e.g., Entra ID or Okta). This allows for granular, role-based access control (RBAC) where an agent only has the permissions necessary to perform its specific task, adhering to the principle of least privilege.

- Data Isolation and Residency: High-leverage platforms provide Virtual Private Cloud (VPC) deployment options. This ensures that sensitive data, such as internal financial records or customer PII, remains within approved geographic regions or dedicated infrastructure, supporting compliance with GDPR and the EU AI Act.

- Automated Red-Teaming and Evaluation: In 2026, leading platforms include continuous monitoring for prompt injection and model drift. These “Evaluation Gates” automatically pause a workflow if the agent’s output violates safety policies or deviates from its deterministic guardrails.
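Least-privilege, just-in-time authorization for agent identities can be sketched as short-lived grants checked against a role-to-tool mapping. The role names, tool names, and `Authorizer` class are hypothetical; in production the roles would come from a directory service such as Entra ID or Okta, and grants would be enforced by the platform's IAM layer rather than application code.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional, Set, Tuple

# Hypothetical role-to-tool mapping; in production this comes from the IAM/directory layer.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "lead-scorer": {"crm.read"},
    "outreach-writer": {"crm.read", "email.draft"},
}


@dataclass
class AgentIdentity:
    agent_id: str
    roles: Set[str]


@dataclass
class Authorizer:
    # (agent_id, tool) -> expiry timestamp of a just-in-time grant
    grants: Dict[Tuple[str, str], float] = field(default_factory=dict)

    def grant_jit(self, agent: AgentIdentity, tool: str, ttl_seconds: float,
                  now: Optional[float] = None) -> bool:
        """Issue a short-lived grant only if one of the agent's roles allows the tool."""
        now = time.time() if now is None else now
        allowed = set().union(*(ROLE_PERMISSIONS.get(r, set()) for r in agent.roles))
        if tool not in allowed:
            return False  # least privilege: never grant outside the role's scope
        self.grants[(agent.agent_id, tool)] = now + ttl_seconds
        return True

    def is_authorized(self, agent: AgentIdentity, tool: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        expiry = self.grants.get((agent.agent_id, tool))
        return expiry is not None and now < expiry


scorer = AgentIdentity("agent-42", {"lead-scorer"})
auth = Authorizer()
print(auth.grant_jit(scorer, "email.draft", ttl_seconds=60, now=0))  # False
print(auth.grant_jit(scorer, "crm.read", ttl_seconds=60, now=0))     # True
print(auth.is_authorized(scorer, "crm.read", now=30))                # True
print(auth.is_authorized(scorer, "crm.read", now=90))                # False (grant expired)
```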

Regulatory Alignment: The 2026 Standard

For regulated sectors, an AI orchestration platform should facilitate alignment with the NIST AI Risk Management Framework (AI RMF) and the ISO/IEC 42001 standard. The NIST AI RMF organizes AI risk management into four core functions: Govern, Map, Measure, and Manage.

- Govern: Establish clear ownership for every agentic workflow.

- Map: Identify where the AI system interacts with sensitive third-party APIs.

- Measure: Track quantitative metrics like hallucination rates and latency.

- Manage: Implement “kill switches” that allow human operators to instantly revoke an agent’s tool access if anomalous behavior is detected.
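A kill switch fed by the Measure function might look like the sketch below: it tracks each agent's tool-call rate in a sliding window and revokes access when the rate exceeds a threshold, until a human operator restores it. The `KillSwitch` class, the call-rate signal, and the thresholds are illustrative assumptions; real anomaly detection would draw on richer metrics.

```python
from collections import deque
from typing import Deque, Dict, Set


class KillSwitch:
    """Revoke an agent's tool access when its call rate exceeds a threshold.

    The call-rate signal and thresholds are illustrative assumptions.
    """

    def __init__(self, max_calls: int, window_seconds: float) -> None:
        self.max_calls = max_calls
        self.window = window_seconds
        self.calls: Dict[str, Deque[float]] = {}
        self.revoked: Set[str] = set()

    def record_call(self, agent_id: str, now: float) -> bool:
        """Log a tool call; return False if the agent's access is revoked."""
        if agent_id in self.revoked:
            return False
        q = self.calls.setdefault(agent_id, deque())
        q.append(now)
        while q and q[0] <= now - self.window:
            q.popleft()  # keep only calls inside the sliding window
        if len(q) > self.max_calls:
            self.revoked.add(agent_id)  # anomaly: cut off tool access immediately
            return False
        return True

    def restore(self, agent_id: str) -> None:
        """A human operator re-enables access after reviewing the incident."""
        self.revoked.discard(agent_id)
        self.calls.pop(agent_id, None)


switch = KillSwitch(max_calls=3, window_seconds=10)
print([switch.record_call("agent-7", t) for t in (0, 1, 2, 3)])  # [True, True, True, False]
print(switch.record_call("agent-7", 100))  # False: stays revoked until a human restores it
switch.restore("agent-7")
print(switch.record_call("agent-7", 100))  # True
```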

What is an AI orchestration platform?

An AI orchestration platform is the central control plane that coordinates models, tools, data pipelines, and human-in-the-loop steps into a unified system. Unlike isolated scripts, it manages state, logic routing, and security policies across multi-step processes to ensure high-leverage automation.

How is AI orchestration different from standard automation tools?

Standard automation tools (like Zapier or Make) are built for deterministic, “If-This-Then-That” actions. An AI orchestration platform is designed for probabilistic, reasoning-heavy workflows. It manages the long-term memory, context windowing, and self-correction loops required for autonomous agents to function reliably.

When is a dedicated AI orchestration platform necessary?

Transition to a dedicated platform when your workflows involve multi-step reasoning, multiple specialized agents, or long-form context that exceeds simple API triggers. If you require centralized monitoring of API costs, latency, and output accuracy across an entire department, a professional AI orchestration platform is the required infrastructure.

Can an AI orchestration platform support models from different providers?

Yes. Most leading platforms are model-agnostic. They allow you to route specific sub-tasks to the most efficient model (e.g., using GPT-4o for complex reasoning and a smaller Llama model for basic data extraction). This strategy prevents vendor lock-in and optimizes the unit economics of your AI stack.
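Routing sub-tasks to the cheapest capable model can be sketched as a lookup over a model catalogue. The model names, complexity levels, and per-1K-token prices below are placeholders, not real vendor pricing; the point is the selection rule (the cheapest model whose capability covers the task) and how quickly the savings compound at volume.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_1k_tokens: float
    max_complexity: int  # hardest task level (1-3) this model is trusted with


# Placeholder catalogue: names and prices are illustrative, not real price sheets.
MODELS: List[ModelOption] = [
    ModelOption("small-open-model", 0.0002, max_complexity=1),
    ModelOption("mid-model", 0.002, max_complexity=2),
    ModelOption("frontier-model", 0.01, max_complexity=3),
]

TASK_COMPLEXITY: Dict[str, int] = {"extract_fields": 1, "classify_ticket": 2, "draft_strategy_memo": 3}


def pick_model(task: str) -> ModelOption:
    """Choose the cheapest model whose capability covers the task."""
    needed = TASK_COMPLEXITY[task]
    eligible = [m for m in MODELS if m.max_complexity >= needed]
    return min(eligible, key=lambda m: m.usd_per_1k_tokens)


def batch_cost(model: ModelOption, calls: int, tokens_per_call: int) -> float:
    return calls * tokens_per_call / 1000 * model.usd_per_1k_tokens


print(pick_model("extract_fields").name)       # small-open-model
print(pick_model("draft_strategy_memo").name)  # frontier-model
routed = batch_cost(pick_model("extract_fields"), calls=1000, tokens_per_call=2000)
frontier_only = batch_cost(MODELS[-1], calls=1000, tokens_per_call=2000)
print(f"${routed:.2f} vs ${frontier_only:.2f}")  # $0.40 vs $20.00
```

With these placeholder prices, 1,000 extraction calls at 2,000 tokens each cost about $0.40 on the small model versus $20.00 if every call went to the frontier model.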

How does AI orchestration facilitate compliance and audits?

An AI orchestration platform provides a centralized “Paper Trail” for every autonomous decision. By logging the exact prompt, retrieved data, and model output, these platforms help satisfy regulatory requirements for traceability and risk management. This is critical for industries subject to the EU AI Act or aligning with NIST frameworks.

In Conclusion

In 2026, an AI orchestration platform is the essential control plane that transforms fragmented models, tools, and data into a reliable, compliant system. This architecture shifts the focus from model selection to system coordination, ensuring that autonomous agents operate within strict technical and ethical guardrails.

Final Strategic Takeaways

- Tier-Based Selection: The most effective strategy is to categorize your needs into one of three tiers: Pro-Code, Low-Code, or Ecosystem-Native. Align your choice with your specific role, governance requirements, and technical depth to avoid tool fatigue.

- 80/20 Operational ROI: An AI orchestration platform delivers maximum leverage by targeting the 20% of workflow handoffs that cause 80% of errors. It achieves this through persistent state management, automated verification steps, and centralized performance monitoring.

- Actionable Implementation: To move from theory to practice, map your top three AI use cases (e.g., lead triage, ticket routing, or FP&A forecasting) into explicit workflows. Evaluate one candidate platform from each relevant tier in a 4–6 week pilot.

Success in the current landscape is measured by cycle time, error rates, and unit economics. Before committing to long-term adoption, instrument your pilot with clear metrics to verify that your chosen AI orchestration platform provides a measurable reduction in manual overhead and a predictable path to scale.
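Instrumenting a pilot can be as simple as recording per-item cycle time and error counts for a baseline period and for the pilot period, then comparing them. The numbers below are made-up placeholders that show the arithmetic, not measured results.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class PeriodMetrics:
    cycle_minutes: List[float]  # intake-to-completion time per work item
    errors: int                 # items that were misrouted or needed rework
    items: int

    @property
    def median_cycle(self) -> float:
        return median(self.cycle_minutes)

    @property
    def error_rate(self) -> float:
        return self.errors / self.items


def pct_change(before: float, after: float) -> float:
    return (after - before) / before * 100


# Made-up placeholder numbers to show the arithmetic, not measured results.
baseline = PeriodMetrics(cycle_minutes=[30, 45, 40, 50, 35], errors=12, items=100)
pilot = PeriodMetrics(cycle_minutes=[20, 25, 30, 22, 28], errors=7, items=100)

print(f"median cycle time: {pct_change(baseline.median_cycle, pilot.median_cycle):+.1f}%")  # -37.5%
print(f"error rate: {pct_change(baseline.error_rate, pilot.error_rate):+.1f}%")             # -41.7%
```

The median is used rather than the mean so that a few unusually slow items do not dominate the comparison; for a fair read, compare periods with similar volume and work mix.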