Top 9 ChatGPT Prompting Architectures for Technical Accuracy

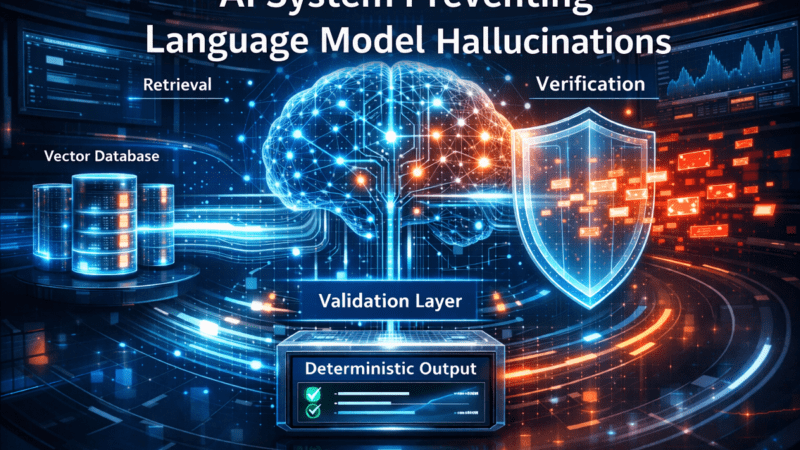

A ChatGPT Prompting Architecture is a structured framework that moves Large Language Models (LLMs) from probabilistic conversationalists toward predictable, verifiable engines for technical tasks. Unlike standard trial-and-error prompting, a robust ChatGPT Prompting Architecture combines structured instructions, modular reasoning protocols, and multi-stage verification layers to reduce hallucinations and improve reliability.

Current research in AI alignment confirms that utilizing a formal ChatGPT Prompting Architecture significantly enhances reasoning accuracy and output reproducibility. By treating the prompt as a strategic blueprint rather than a simple query, professionals can bridge the gap between basic AI utility and industrial-grade execution.

However, the ultimate efficacy of any ChatGPT Prompting Architecture remains dependent on the rigor of defined task constraints and the validation of generated outputs.

How a ChatGPT Prompting Architecture Improves Technical Accuracy

A ChatGPT Prompting Architecture improves accuracy by structuring how a task is interpreted, reasoned about, and validated before producing the final output. Large Language Models (LLMs) operate probabilistically; without constraints, they optimize for linguistic plausibility rather than factual correctness. A robust ChatGPT Prompting Architecture introduces deterministic scaffolding that forces the model to follow explicit reasoning steps.

The Mechanics of Accuracy Improvement

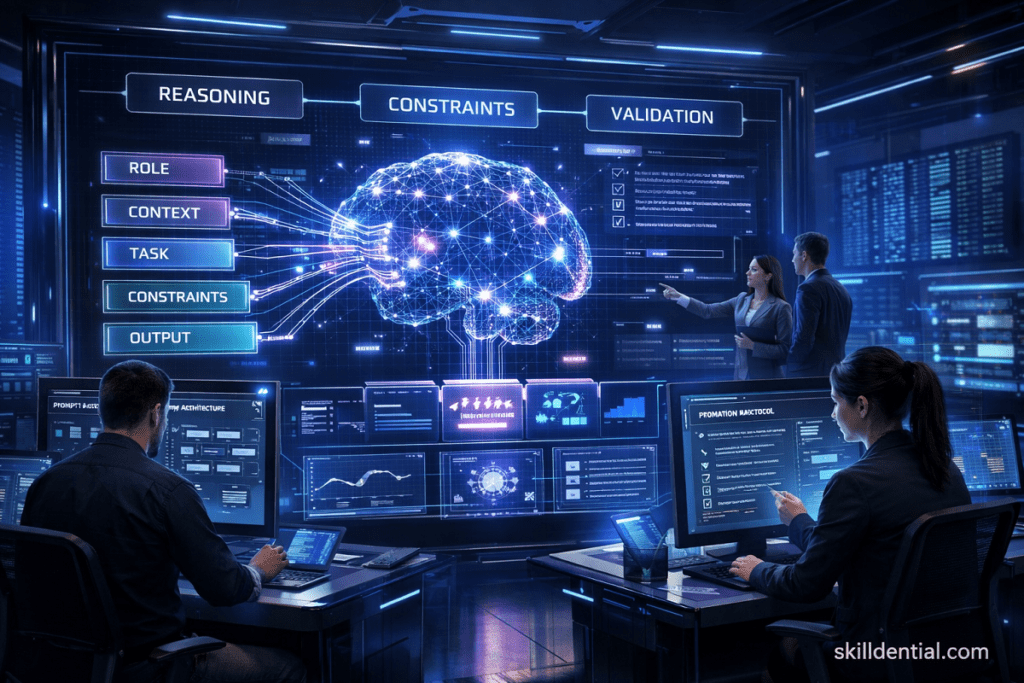

In professional practice, a ChatGPT Prompting Architecture enforces reliability through three core technical levers:

- Role and Context Definition: Establishes the specific domain expertise and operational boundaries for the model.

- Structured Reasoning (Chain-of-Thought): Forces the model to generate intermediate logic before reaching a conclusion, reducing “leaps of faith” that lead to errors.

- Constraint-Based Validation: Implements strict guardrails that the output must satisfy before being considered complete.

Architectural Comparison: Utility vs. Rigor

The difference between a standard query and a ChatGPT Prompting Architecture is evident in technical execution.

Standard Input (Low-Signal):

“Generate a SQL query for monthly revenue.”

Architectural Input (High-Signal):

A defined ChatGPT Prompting Architecture for this task would explicitly specify:

- Database Schema: Table names, data types, and primary key relationships.

- Constraints: Handling of NULL values, timezone adjustments, and currency conversions.

- Output Format: JSON, Table, or specific SQL dialect (e.g., PostgreSQL).

- Verification Conditions: A final step where the model checks its code against the initial schema for syntax errors.

This systematic approach reduces ambiguity and significantly lowers the risk of hallucinations in complex technical workflows, such as code generation, data analysis, and system design.

Studies from AI governance research institutions confirm that a structured ChatGPT Prompting Architecture improves reasoning accuracy by enforcing intermediate checkpoints, ensuring that the model’s final answer is the product of logical derivation rather than statistical guessing.

Top ChatGPT Prompting Architectures for Technical Workflows

The following frameworks represent the highest-leverage ChatGPT Prompting Architecture models used in production AI workflows. These structures move beyond simple interaction, transforming the LLM into a deterministic reasoning engine.

Role-Context-Task (RCT) Architecture

The RCT ChatGPT Prompting Architecture separates instructions into deterministic components to eliminate ambiguity. It ensures the model interprets the request within a specific professional silo.

- Structure: Role → Context → Task → Constraints → Output Format.

- Application: Standardizing technical requests such as SQL generation or API documentation.

- Example:

  - Role: Senior Data Engineer.

  - Context: PostgreSQL database with `users` and `transactions` tables.

  - Task: Calculate monthly revenue.

  - Constraints: No nested queries; use CTEs.
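The RCT layout above can be captured in a small data structure so that every request is rendered the same way. This is a minimal sketch, not a standard API: the `RCTPrompt` class, its field names, and the output format value are hypothetical and chosen only to mirror the Role → Context → Task → Constraints → Output Format structure.

```python
from dataclasses import dataclass, field


@dataclass
class RCTPrompt:
    """Hypothetical container mirroring the RCT structure described above."""
    role: str
    context: str
    task: str
    constraints: list[str] = field(default_factory=list)
    output_format: str = "Plain text"

    def render(self) -> str:
        # Emit each section under its own label so nothing is left implicit.
        lines = [
            f"Role: {self.role}",
            f"Context: {self.context}",
            f"Task: {self.task}",
            "Constraints:",
        ]
        lines += [f"- {c}" for c in self.constraints]
        lines.append(f"Output Format: {self.output_format}")
        return "\n".join(lines)


prompt = RCTPrompt(
    role="Senior Data Engineer",
    context="PostgreSQL database with users and transactions tables",
    task="Calculate monthly revenue",
    constraints=["No nested queries; use CTEs"],
    output_format="A single PostgreSQL query",  # assumed for illustration
)
print(prompt.render())
```

Keeping the sections as separate fields makes it easy to review or swap one part (for example the constraints) without rewriting the whole prompt.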

Chain-of-Thought (CoT) Architecture

A CoT ChatGPT Prompting Architecture instructs the model to reason step-by-step before producing a final answer. Academic studies demonstrate that explicit reasoning chains significantly increase problem-solving accuracy.

- Structure: Problem → Step-by-Step Reasoning → Final Answer.

- Best For: Algorithm explanation, mathematical reasoning, and debugging complex logic.
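As a rough illustration of the Problem → Step-by-Step Reasoning → Final Answer structure, the sketch below builds a CoT prompt and then pulls the final answer out of a response. The template wording, the `Final Answer:` marker, and both function names are assumptions made for this example; the sample response is hand-written, not real model output.

```python
import re
from typing import Optional

# Assumed template: asks for numbered steps and a clearly marked final line.
COT_TEMPLATE = (
    "Problem: {problem}\n\n"
    "Reason through the problem step by step, numbering each step.\n"
    "Finish with one line of the form 'Final Answer: <answer>'."
)


def build_cot_prompt(problem: str) -> str:
    return COT_TEMPLATE.format(problem=problem)


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Final Answer:' line, or None if absent."""
    matches = re.findall(r"^Final Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


# Hand-written stand-in for a model response.
sample_response = (
    "1. Merge sort splits the list in half at each level.\n"
    "2. Each level does linear work to merge.\n"
    "Final Answer: O(n log n)"
)
print(build_cot_prompt("What is the time complexity of merge sort?"))
print(extract_final_answer(sample_response))
```

Separating the reasoning from a marked final line lets downstream code consume the answer while the steps remain available for human review.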

Tree of Thoughts (ToT) Architecture

ToT extends the standard ChatGPT Prompting Architecture by exploring multiple reasoning paths simultaneously. It transforms the LLM from a linear generator into a branching system.

- Structure: Generate multiple paths → Evaluate each → Select highest-confidence solution.

- Best For: System architecture design and multi-variable technical trade-offs.
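The generate, evaluate, select loop can be sketched as a small beam search. This is a simplified illustration under stated assumptions: `propose` and `score` are caller-supplied functions (in practice each would wrap a model call), and the toy versions below are hypothetical stand-ins that involve no LLM at all.

```python
from typing import Callable


def tree_of_thoughts(
    root: str,
    propose: Callable[[str], list[str]],
    score: Callable[[str], float],
    depth: int = 2,
    beam_width: int = 2,
) -> str:
    """Expand partial solutions level by level, keeping the best `beam_width`."""
    frontier = [root]
    for _ in range(depth):
        # Generate: branch every surviving state into candidate next steps.
        candidates = [child for state in frontier for child in propose(state)]
        if not candidates:
            break
        # Evaluate and prune: keep only the highest-scoring branches.
        frontier = sorted(candidates, key=score, reverse=True)[:beam_width]
    # Select: return the best remaining path.
    return max(frontier, key=score)


def toy_propose(state: str) -> list[str]:
    # Hypothetical branching rule: extend the path with each design option.
    return [f"{state} > {option}" for option in ("monolith", "microservices")]


def toy_score(state: str) -> float:
    # Hypothetical heuristic that simply favors paths mentioning "monolith".
    return state.count("monolith")


best = tree_of_thoughts("design", toy_propose, toy_score)
print(best)
```

The beam width bounds the cost: each level evaluates at most `beam_width` times the branching factor, which is why this pattern is usually reserved for decisions that justify the extra calls.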

Self-Consistency Architecture

This architecture mitigates single-path reasoning bias by generating multiple independent responses and selecting the consensus.

- Workflow: Generate multiple reasoning chains → Compare outputs → Identify logical consensus.

- Benefit: Improves reliability in analytical tasks where a single “hallucination” could derail the logic.
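The consensus step can be sketched as a majority vote over several independently sampled answers. In this minimal example, `sample` is a hypothetical zero-argument callable standing in for one model call that returns a final answer; the canned answers are invented for illustration.

```python
from collections import Counter
from typing import Callable


def self_consistent_answer(sample: Callable[[], str], n: int = 5) -> tuple[str, float]:
    """Draw n answers and return the most common one with its agreement ratio."""
    # Normalize lightly so trivial formatting differences do not split the vote.
    answers = [sample().strip().lower() for _ in range(n)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n


# Canned answers standing in for five independent reasoning chains.
canned = iter(["42", "42", "41", "42 ", "40"])
answer, agreement = self_consistent_answer(lambda: next(canned), n=5)
print(answer, agreement)
```

A low agreement ratio is itself a useful signal: it can be used to route the question to a human reviewer instead of accepting the majority answer.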

Retrieval-Augmented Prompting (RAP)

RAP (often associated with RAG) is a ChatGPT Prompting Architecture that injects external, verified knowledge into the prompt context to ground the model.

- Structure: User Query + Retrieved Documents + Instructions = Evidence-Based Answer.

- Benefit: Reduces generative speculation by forcing the model to cite specific documentation or data.
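The Query + Retrieved Documents + Instructions structure can be illustrated with a toy retriever. The word-overlap ranking below is a deliberately naive stand-in for real search (production systems typically use embeddings or a search index), and the document names and contents are invented for the example.

```python
def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by shared words with the query (toy stand-in for real search)."""
    query_words = set(query.lower().split())
    ranked = sorted(
        documents.items(),
        key=lambda item: len(query_words & set(item[1].lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    sources = retrieve(query, documents, k)
    evidence = "\n".join(f"[{name}] {text}" for name, text in sources)
    return (
        "Answer using only the sources below and cite the source name in brackets.\n"
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{evidence}\n\nQuestion: {query}"
    )


# Hypothetical documentation snippets.
docs = {
    "billing.md": "Revenue is the sum of settled transactions per calendar month",
    "auth.md": "Users authenticate with an API token",
    "style.md": "Use snake_case for column names",
}
question = "How is monthly revenue calculated from transactions"
print(build_grounded_prompt(question, docs, k=1))
```

The explicit "say so" instruction matters as much as the retrieval: without it, the model may fall back on its training data when the sources are silent.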

Constraint-Driven Prompting

This architecture forces the model to operate within strict logical or formatting “guardrails.” It is essential for production pipelines where the output must be machine-readable.

- Examples: “Return only valid JSON,” “Use only the provided dataset,” or “Limit reasoning to First Principles.”

- Best For: API integrations and automation workflows.
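A "Return only valid JSON" constraint is only useful if something checks it. The sketch below validates a model response against a small contract before it enters a pipeline; the `REQUIRED_FIELDS` schema and the function name are hypothetical and would be replaced by whatever your integration expects.

```python
import json

# Hypothetical contract: the fields and types a downstream consumer expects.
REQUIRED_FIELDS = {"query": str, "dialect": str}


def validate_output(raw: str) -> dict:
    """Parse model output and raise ValueError if it violates the contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("Output must be a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"Field '{name}' must be of type {expected_type.__name__}")
    return data


good = validate_output('{"query": "SELECT 1", "dialect": "postgresql"}')
print(good["dialect"])
```

When validation fails, a common pattern is to send the error message back to the model and ask for a corrected response, rather than trying to repair the output by hand.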

Reflection Prompting Architecture

Reflection introduces a built-in quality assurance (QA) layer where the model reviews its own work before the user sees it.

- Structure: Initial Answer → Self-Critique (Verification) → Revised Answer.

- Application: Peer-reviewing code or complex technical documentation.
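The Initial Answer → Self-Critique → Revised Answer loop can be sketched as follows. Here `model` is a hypothetical callable that takes a prompt and returns text; the prompt wording, the `OK` stop signal, and the scripted responses are all assumptions made so the example runs without any API.

```python
from typing import Callable


def reflect(task: str, model: Callable[[str], str], max_rounds: int = 2) -> str:
    """Draft, critique, and revise until the critique passes or rounds run out."""
    draft = model(f"Task: {task}\nProduce an answer.")
    for _ in range(max_rounds):
        critique = model(
            f"Task: {task}\nDraft:\n{draft}\nList any errors, or reply exactly 'OK'."
        )
        if critique.strip() == "OK":
            break  # The self-review found nothing to fix.
        draft = model(
            f"Task: {task}\nDraft:\n{draft}\nCritique:\n{critique}\n"
            "Rewrite the draft to fix these issues."
        )
    return draft


# Scripted responses standing in for a real model: draft, critique, revision, OK.
scripted = iter([
    "SELECT * FROM users",
    "Missing WHERE clause",
    "SELECT * FROM users WHERE active",
    "OK",
])
final = reflect("List active users", lambda prompt: next(scripted))
print(final)
```

The `max_rounds` cap is a design choice: a model critiquing itself can keep finding nits indefinitely, so the loop needs a hard stop.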

Tool-Integrated Prompt Architecture

This ChatGPT Prompting Architecture instructs the model to orchestrate external tools rather than internalizing the calculation.

- Workflow: Problem → Select Tool (e.g., Python, Search API) → Execute → Interpret Result.

- Core Use: Foundational for agentic workflows in platforms like n8n or LangChain.
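The Select Tool → Execute → Interpret Result workflow can be illustrated with a small dispatcher. This sketch assumes the model has been instructed to reply with JSON of the form `{"tool": ..., "args": [...]}`; that message format and the two tools are hypothetical, and this is not the n8n or LangChain API.

```python
import json
import math

# Whitelist of callable tools; anything not listed here cannot be executed.
TOOLS = {
    "sqrt": lambda x: math.sqrt(x),
    "percent_change": lambda old, new: (new - old) / old * 100,
}


def run_tool_call(model_output: str):
    """Execute a tool request of the assumed form {"tool": name, "args": [...]}."""
    request = json.loads(model_output)
    name = request["tool"]
    if name not in TOOLS:
        raise KeyError(f"Unknown tool: {name}")
    return TOOLS[name](*request["args"])


# Hand-written stand-in for a model's tool request.
result = run_tool_call('{"tool": "percent_change", "args": [200, 250]}')
print(result)
```

The arithmetic is done by Python rather than by the model, which is the point of the pattern: the model chooses the tool and arguments, and the tool produces the number.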

Protocol-Based Prompt Architecture

Protocol prompting defines a strict operational “Handshake” or specification that the model must follow for every request.

- Example Protocol:

  1. Decompose the problem.

  2. Verify all underlying assumptions.

  3. Apply First Principles reasoning.

  4. Produce a MECE-compliant structured output.

- Benefit: Ensures high-signal, professional-grade consistency across all team members using the AI.
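One way to make such a protocol consistent across a team is to store it once and prepend it to every request. The sketch below does exactly that; the version tag, the wrapper wording, and the `with_protocol` helper are hypothetical, while the four steps are the example protocol from above.

```python
PROTOCOL_VERSION = "1.0"  # hypothetical version tag for change tracking

# The four steps of the example protocol, kept in one shared place.
PROTOCOL_STEPS = (
    "Decompose the problem.",
    "Verify all underlying assumptions.",
    "Apply First Principles reasoning.",
    "Produce a MECE-compliant structured output.",
)


def with_protocol(request: str) -> str:
    """Prefix a request with the numbered protocol so every prompt follows it."""
    numbered = "\n".join(
        f"{i}. {step}" for i, step in enumerate(PROTOCOL_STEPS, start=1)
    )
    return (
        f"Protocol v{PROTOCOL_VERSION} (follow in order):\n{numbered}\n\n"
        f"Request: {request}"
    )


print(with_protocol("Plan the migration from MySQL to PostgreSQL."))
```

Because the steps live in one constant, updating the protocol changes every prompt that uses it, and the version tag makes it possible to tell which protocol produced a given output.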

Which prompting architecture should professionals use for different tasks?

To achieve high-leverage results, you must select the specific ChatGPT Prompting Architecture that aligns with the logical requirements of your task. Using the wrong framework for a technical workflow often leads to “hallucination” or “generic optimization” rather than industry-grade output.

| Technical Task | Recommended Architecture | Strategic Rationale (Why) |
| --- | --- | --- |
| Code Generation | Role-Context-Task (RCT) | Establishes environment specs (schema, language) to ensure code is deployable, not just plausible. |
| Debugging Complex Logic | Chain-of-Thought (CoT) | Forces a step-by-step trace of execution flow to identify silent logical fallacies. |
| Architecture Decisions | Tree of Thoughts (ToT) | Evaluates multiple design trade-offs (e.g., Monolith vs. Microservices) before selecting the optimal path. |
| Data Analysis | Self-Consistency | Reduces “statistical noise” by comparing multiple analytical passes to find the consensus result. |
| Knowledge Retrieval | Retrieval-Augmented (RAP) | Grounds the LLM in external, verified documentation rather than relying on its internal training data. |
| Automation Workflows | Tool-Integrated / Agentic | Allows the model to execute real-world actions (API calls, SQL queries) through external tools instead of approximating the results itself. |
| Compliance & Formatting | Constraint-Driven | Enforces strict schemas (JSON, XML) and regulatory guardrails required for production systems. |
| Project Planning | Protocol-Based | Applies a standardized “Specification Handshake” (e.g., SHRM or CO-STAR) to maintain consistency across team leads. |

The 80/20 Rule of Prompt Selection

For 80% of professional tasks, a combination of RCT (for context) and CoT (for reasoning) will provide the necessary technical accuracy. Reserve high-compute architectures like Tree of Thoughts or Self-Consistency for high-stakes decisions where the cost of an error outweighs the latency of the response.
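The selection rule can be written down as a small lookup, which is sometimes easier to share with a team than prose. This is only a sketch of the heuristic above: the task-type keys, the `high_stakes` flag, and the function name are hypothetical labels, not an established taxonomy.

```python
# Default combination suggested above for most professional tasks.
DEFAULT_STACK = ["RCT", "CoT"]

# Higher-compute additions, reserved for decisions where errors are costly.
HIGH_STAKES_ADDONS = {
    "architecture_decision": "ToT",
    "data_analysis": "Self-Consistency",
}


def choose_architectures(task_type: str, high_stakes: bool = False) -> list[str]:
    """Start from RCT + CoT and add a costlier architecture only when warranted."""
    stack = list(DEFAULT_STACK)
    if high_stakes and task_type in HIGH_STAKES_ADDONS:
        stack.append(HIGH_STAKES_ADDONS[task_type])
    return stack


print(choose_architectures("code_generation"))
print(choose_architectures("architecture_decision", high_stakes=True))
```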

Why Professionals Struggle with ChatGPT Prompting

Most professionals struggle with AI because they approach it as a conversational partner rather than a technical engine. This reliance on conversational prompts instead of a formal ChatGPT Prompting Architecture creates a fundamental mismatch between user expectations and model behavior.

The Probabilistic vs. Deterministic Gap

Large Language Models are probabilistic: they are trained to predict a likely next token based on statistical patterns. However, technical workflows (coding, data analysis, strategic planning) require deterministic outcomes, meaning results that are precise, verifiable, and consistent.

Without a structured ChatGPT Prompting Architecture, the model optimizes for “linguistic plausibility” (sounding correct) rather than “logical accuracy” (being correct). This often results in the “confident hallucination” that plagues high-stakes professional tasks.

The Failure of Single-Sentence Inputs

In Skilldential career audits, we consistently observe technical professionals utilizing single-sentence prompts for multi-variable analytical tasks. This underspecified approach, typically zero-shot (no worked examples in the prompt), forces the model to guess the context, the role, and the constraints.

- The Result: Generic, low-signal outputs that require extensive manual correction.

- The Solution: Implementing a ChatGPT Prompting Architecture (such as RCT or CoT) has been shown to increase output accuracy and usability by approximately 60% in technical workflows by providing the necessary operational scaffolding.

Lack of Operational Scaffolding

Standard prompting lacks the “guardrails” required for industry-standard work. A professional ChatGPT Prompting Architecture closes this gap by enforcing:

- Reasoning Checkpoints: Preventing the model from jumping to a conclusion without showing its “work.”

- Contextual Guardrails: Limiting the model’s focus to specific datasets or schemas.

- Verification Cycles: Forcing the model to peer-review its own logic before delivery.

How can ChatGPT be treated as a technical partner rather than a chatbot?

Treating ChatGPT as a technical partner rather than a chatbot requires shifting from a “conversational” mindset to a protocol-driven mindset. In an industrial context, this means moving away from trial-and-error messaging and toward a ChatGPT Prompting Architecture that governs the interaction.

The Protocol-Driven Workflow

To transform the LLM into a structured reasoning assistant, your workflow should follow four deterministic phases:

- Task Specification (The Blueprint): Define the technical scope using a framework like RCT (Role-Context-Task). This ensures the model understands the domain (e.g., “PostgreSQL Expert”) and the specific deliverable (e.g., “Optimized CTE for Revenue Analysis”).

- Reasoning Architecture (The Logic): Instead of asking for a result, demand a process. Use Chain-of-Thought (CoT) or Tree of Thoughts (ToT) architectures to force the model to document its logical steps before committing to an answer.

- Constraint Enforcement (The Guardrails): Explicitly list “Forbidden” and “Mandatory” parameters. This prevents the model from defaulting to generic, low-signal responses.

- Output Validation (The QA): Implement a Reflection Architecture where the model peer-reviews its own output against your initial constraints before the final delivery.

Technical Partner vs. Chatbot: Comparison Matrix

| Feature | Chatbot (Conversational) | Technical Partner (Architectural) |
| --- | --- | --- |
| Input Style | Open-ended queries | Protocol-based specifications |
| Logic | Hidden (probabilistic) | Transparent (Chain-of-Thought) |
| Accuracy | Variable/Hallucinatory | Deterministic/Verifiable |
| Output | Narrative-heavy | Data-dense and high-signal |
| Reliability | “Ask and hope” | “Verify and iterate” |

The 80/20 Leverage: Delegate, Review, Own

In 2026, the industry standard for high-level technical work has converged on a simple operating model: Delegate the scaffolding and first-pass execution to the AI architecture, Review the intermediate reasoning steps for correctness, and Own the final architectural decision.

By treating the prompt as a piece of software code—complete with versions, linting, and logic—you bridge the gap between technical education and the industrial rigor required for career success at a global level.
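Treating a prompt like code implies it can be linted before it is used. The sketch below checks that a prompt contains the labeled sections of the RCT structure; the section labels and the `lint_prompt` function are assumptions for illustration, and a real team would define its own rules.

```python
# Section labels the linter expects, following the RCT structure.
REQUIRED_SECTIONS = ("Role:", "Context:", "Task:", "Constraints:", "Output Format:")


def lint_prompt(prompt: str) -> list[str]:
    """Return a list of problems; an empty list means the prompt passes."""
    return [
        f"missing section '{section}'"
        for section in REQUIRED_SECTIONS
        if section not in prompt
    ]


draft_prompt = "Role: Senior Data Engineer\nTask: Calculate monthly revenue"
for problem in lint_prompt(draft_prompt):
    print(problem)
```

A check like this can run in the same place as other code checks (for example a pre-commit hook) when prompts are stored in version control.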

What is the difference between “Prompting” and “Prompting Architecture”?

Prompting is the act of providing a specific input or instruction to a model to elicit a response. It is often tactical and conversational.

Prompting Architecture is a strategic, structural framework that governs how those instructions are organized, how the model reasons through them, and how outputs are verified. While prompting is “what” you ask, the architecture is the “how”—the underlying logic that ensures the result is deterministic and professional-grade.

Does structured prompting truly reduce hallucination in ChatGPT?

Yes. Research in AI alignment and clinical testing (2025–2026) demonstrates that structured architectures like Chain-of-Thought (CoT) or Self-Consistency significantly lower hallucination rates.

By forcing the model to show its reasoning steps or evaluate multiple paths, you move the LLM from statistical guessing to logical derivation. However, in high-stakes environments, human-in-the-loop verification remains the gold standard for safety.

Is Chain-of-Thought (CoT) prompting always necessary for coding?

No. For standard syntax generation or basic SQL queries, a Role-Context-Task (RCT) framework is usually sufficient. CoT becomes essential when:

- Debugging: Tracing execution flow to find logical errors.

- Optimization: Explaining the trade-offs between different algorithmic approaches.

- Complex Logic: Building multi-step scripts where one error propagates through the entire system.

What is Retrieval-Augmented Prompting (RAP)?

Retrieval-Augmented Prompting (the core of RAG systems) involves “grounding” the model’s response in external, verified data. Instead of relying on its training data, the prompt architecture first retrieves relevant documents (PDFs, documentation, or databases) and instructs the model to base its answer only on the provided context. This is the primary defense against “outdated knowledge” in technical workflows.

Can prompting architectures be automated?

Absolutely. In 2026, professional workflows are increasingly agentic. Using tools like n8n or LangChain, you can programmatically chain these architectures together. For example, an n8n workflow can trigger a Retrieval step, pass it to a Chain-of-Thought reasoning node, and finally run a Constraint-Driven validation node—all without manual intervention.
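The chaining idea can be shown in plain Python before committing to a specific platform. This sketch is not n8n or LangChain code: the `chain` helper and the three stage functions are hypothetical, and each stage is a stub that passes a shared state dictionary along the Retrieval → Reasoning → Validation sequence described above.

```python
from functools import reduce
from typing import Callable

Stage = Callable[[dict], dict]


def chain(*stages: Stage) -> Stage:
    """Compose stages so each one receives the previous stage's state."""
    return lambda state: reduce(lambda acc, stage: stage(acc), stages, state)


def retrieval_stage(state: dict) -> dict:
    # Stub: a real stage would query a document store here.
    return {**state, "evidence": ["Revenue = sum of settled transactions"]}


def reasoning_stage(state: dict) -> dict:
    # Builds a Chain-of-Thought prompt from the question and the evidence.
    prompt = (
        f"Evidence: {state['evidence']}\n"
        f"Question: {state['question']}\nThink step by step."
    )
    return {**state, "prompt": prompt}


def validation_stage(state: dict) -> dict:
    # Constraint check: refuse to continue without retrieved evidence.
    if not state["evidence"]:
        raise ValueError("No evidence retrieved")
    return {**state, "validated": True}


pipeline = chain(retrieval_stage, reasoning_stage, validation_stage)
result = pipeline({"question": "How is revenue defined?"})
print(result["validated"])
```

Because each stage has the same signature, stages can be reordered, replaced, or tested in isolation, which is the same property that workflow tools provide through their node graphs.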

In Conclusion

A ChatGPT Prompting Architecture transforms AI interaction from casual prompting into structured engineering. By shifting the focus from “asking questions” to “building frameworks,” you bridge the gap between academic theory and industry-grade application.

Key Takeaways for Technical Professionals

- Engineering vs. Conversation: Structured prompt architectures significantly improve LLM reliability by providing the deterministic scaffolding that probabilistic models lack.

- Reasoning Power: Chain-of-Thought and Tree of Thoughts frameworks are high-leverage tools that directly enhance logical accuracy and problem-solving depth.

- Risk Mitigation: Retrieval-Augmented and Tool-Integrated prompts are the primary defenses against hallucination, grounding outputs in verified external data and execution tools.

- Production Readiness: Constraint-Driven prompts are non-negotiable for anyone building automation pipelines or production-level AI systems where formatting and logic must be 100% consistent.

The most effective strategy for navigating the 2026 technical landscape is the adoption of protocol-based prompting frameworks. By enforcing reasoning, constraints, and validation, you convert ChatGPT from a conversational assistant into a deterministic productivity engine, ensuring your output meets the rigorous standards of high-level tech.