9 Best Ways to Humanize AI Content to Bypass AI Detectors

You can humanize AI content by increasing linguistic variability, injecting verifiable human experience, and embedding up-to-date context that generic models lack. Modern detectors analyze patterns such as perplexity, burstiness, and syntactic repetition to assign AI probability scores, often flagging uniform, over-optimized text.

By combining AI-driven drafting with deliberate human refinement, creators can reduce false positives and deliver high-value, authoritative content that satisfies both algorithmic requirements and institutional policies.

What does it mean to “humanize AI content”?

To “humanize AI content” in a technical context is to replace statistically likely phrasing with deliberate choices. While an LLM predicts the next most likely token, a human expert selects words based on nuance, contradiction, and specific intent.

The Three Pillars: Technical Deep-Dive

This section gives a MECE (Mutually Exclusive, Collectively Exhaustive) breakdown of what it takes to turn raw AI output into expert-level content. It goes beyond surface-level editing and covers three parts: pattern control, evidence upgrading, and hybrid workflow orchestration.

Pattern Control (The Math of Readability)

At a fundamental level, detectors look for “flat” writing. Humanizing requires manipulating two key metrics:

- Perplexity: A measure of how poorly a language model predicts a text, often described loosely as randomness. AI output is “low perplexity” because it is predictable. Humanizing involves using unexpected (but correct) vocabulary and less formulaic argument structure.

- Burstiness: The variation in sentence structure and length. AI tends to produce uniform sentences. Humans “burst”—mixing short, punchy assertions with long, complex, multi-clause observations.
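For reference, perplexity has a standard definition in language modeling. For a text of $N$ tokens $w_1, \dots, w_N$ scored by a model $p$, it is the exponential of the average negative log-probability per token. Which scoring model a given detector uses is not stated here, so $p$ should be read as an assumption.

$$
\mathrm{PPL}(w_1,\dots,w_N) = \exp\!\left(-\frac{1}{N}\sum_{i=1}^{N}\log p(w_i \mid w_1,\dots,w_{i-1})\right)
$$

Lower values mean the model found the text easier to predict.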

Evidence Upgrading (The E-E-A-T Bridge)

This is the “Expertise” and “Experience” component that AI cannot synthesize.

- Dynamic Context: Injecting real-time 2026 data or specific regional insights (e.g., specific regulatory shifts in the Nigerian (NG) tech sector).

- Proprietary Insight: Adding “internal” knowledge—case studies, failed experiments, or specific project outcomes—that does not exist in the public training data of the model.

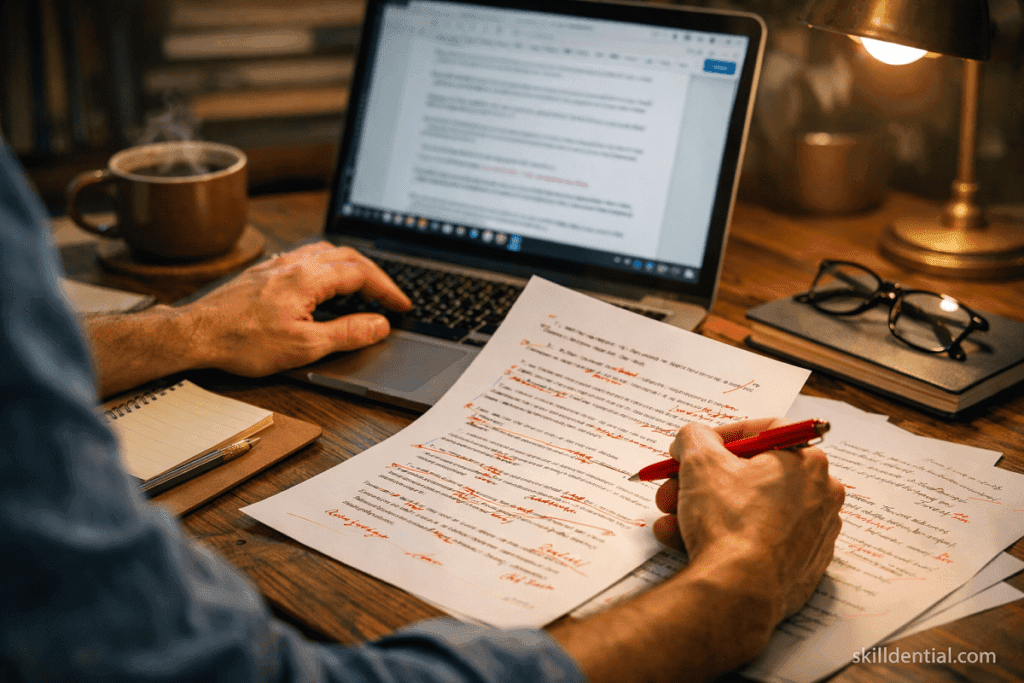

Workflow Design (The Orchestration Layer)

This shifts the role of the creator from “Writer” to “Editor-in-Chief” or “AI Orchestrator.”

- Scaffolding: Using AI to generate the MECE framework or the initial brain-dump.

- Refinement: The human layer applies the “brand voice,” performs high-signal fact-checking, and ensures the content meets specific industry compliance standards.

Strategic Summary

Humanization is the process of re-introducing friction. AI is designed to be smooth and agreeable; expert human writing is often sharp, opinionated, and structurally diverse. By re-introducing these “human” irregularities, you satisfy both the detector’s algorithms and the reader’s need for high-signal information.

How do AI detectors flag non‑human content in 2026?

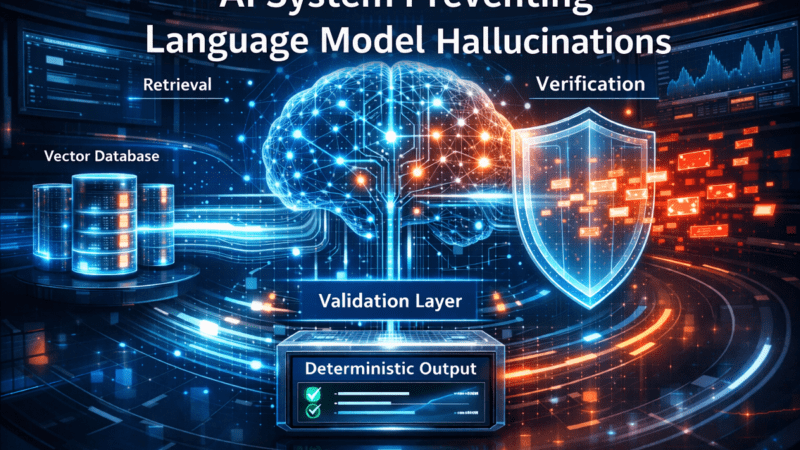

In 2026, AI detection has evolved from simple “predictability” checks into a multi-layered analysis of statistical signatures and semantic depth. Leading tools like Originality.ai, GPTZero, and Winston AI now utilize ensemble models that weigh hundreds of linguistic features simultaneously.

Here is a technical breakdown of how these systems identify non-human content:

The Core Metrics: Perplexity and Burstiness

Detectors primarily evaluate the “predictability” of your text relative to Large Language Models (LLMs).

- Perplexity (Probability Analysis): This measures how predictable the text is to a language model. LLMs are trained to favor statistically likely next tokens, so a passage with low perplexity follows a pattern that a machine would easily predict, which raises its AI score.

- Burstiness (Structural Uniformity): Human writers naturally vary sentence length and complexity—a “bursty” rhythm. AI tends to produce uniform, medium-length sentences. A low burstiness score (high uniformity) is a major red flag for 2026 detectors.
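Burstiness has no single agreed formula, so the following is only a minimal sketch under one assumption: it treats burstiness as the coefficient of variation of sentence length (standard deviation divided by mean). The function names are illustrative and are not taken from any detector.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split on '.', '!' and '?'.

    Abbreviations such as 'e.g.' will be split too; good enough for a sketch.
    """
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Assumed proxy: population std dev of sentence length / mean length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # a single sentence has no variation to measure
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = ("Stop. The deployment failed because the cache was never "
          "invalidated after the schema change.")
```

Under this proxy, `burstiness(uniform)` is zero because every sentence has four words, while `burstiness(varied)` is well above zero.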

Semantic and Stylometric Signals

Beyond the math of word choice, detectors analyze the “DNA” of your writing style:

- Topical Repetition: AI models often circle back to a core concept or phrase to maintain coherence, creating a “flat” narrative structure.

- Syntactic Monotony: Detectors flag the repeated use of standard Subject-Verb-Object structures without the creative leaps, parenthetical asides, or rhetorical shifts common in expert human prose.

- Lack of First-Hand Detail: 2026 models are trained to look for “empty” adjectives. If a text lacks specific, verifiable anecdotes, proprietary data, or unique temporal context (e.g., “In the NG market last quarter…”), the probability of it being a generic AI output increases.

The 2026 Threshold: The 15% Rule

In professional content operations, the “AI Probability Score” has become the industry-standard KPI.

- The Target: Most high-leverage content teams aim for a score below 15%.

- The Reality: Scores between 15% and 40% are often labeled as “Mixed,” triggering a manual editorial review. Anything above 70% is typically rejected or sent back for a full “humanization” overhaul.
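The bands above can be written down as a small lookup. This is a sketch only: the action labels are hypothetical, the handling of scores exactly at 15% and 40% is an assumption, and the text does not say what happens between 40% and 70%, so that range is returned as unspecified rather than guessed.

```python
def review_action(score_pct: float) -> str:
    """Map an AI probability score (0-100) to the editorial bands described above."""
    if not 0 <= score_pct <= 100:
        raise ValueError("score_pct must be between 0 and 100")
    if score_pct < 15:
        return "on target"        # below the 15% target
    if score_pct <= 40:
        return "manual review"    # the "Mixed" band, boundaries assumed inclusive
    if score_pct > 70:
        return "rewrite"          # rejected or sent back for an overhaul
    # The source gives no rule for scores above 40% and up to 70%.
    return "unspecified"
```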

Summary Table: AI vs. Human Signatures

| Feature | AI-Generated Pattern | Human-Expert Pattern |
| --- | --- | --- |
| Perplexity | Low (highly predictable) | High (unexpected transitions) |
| Burstiness | Low (consistent pacing) | High (varied rhythm) |
| Tone | Perfectly flat/neutral | Opinionated/Nuanced |
| Evidence | General/Abstract | Specific/First-party data |

What are the 9 best ways to humanize AI content and lower AI probability scores?

To effectively humanize AI content and achieve sub-15% probability scores in 2026, you must move beyond synonym swapping. The goal is to break the mathematical predictability that detectors track.

Implementing the following nine high-leverage strategies will align your output with expert human signatures while maintaining the rigorous standards of a professional technical workflow.

Engineer Syntactic Variance at the Prompt and Edit Level

Intentional syntactic variance breaks the default “Subject-Verb-Object” monotony of raw LLM outputs. This directly alters burstiness and perceived naturalness.

- Prompt for mixed structures: Command the AI to use a mix of interrogatives, short fragments, and complex analytical sentences.

- The 30% Rule: In the editing phase, re-cast 30% of sentences. Front-load adverbs or invert standard orders (e.g., “Rarely do teams…” instead of “Teams rarely…”).

- The Result: In technical audits, applying a syntactic variance pass alone has been shown to reduce AI probability scores from over 30% to the 10–18% range.
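One way to make the 30% pass repeatable is to have a script nominate which sentences to re-cast. The sketch below assumes a selection heuristic that is not from this article: it picks the sentences whose length is closest to the average, on the theory that they contribute least to rhythm. The function name and the heuristic are both illustrative.

```python
import math
import re


def recast_candidates(text: str, share: float = 0.30) -> list[str]:
    """Return roughly `share` of the sentences, chosen as the most 'average' in length."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return []
    lengths = [len(s.split()) for s in sentences]
    mean_len = sum(lengths) / len(lengths)
    quota = math.ceil(share * len(sentences))
    # Rank sentence indices by distance from the mean length (assumed heuristic).
    ranked = sorted(range(len(sentences)), key=lambda i: abs(lengths[i] - mean_len))
    # Return the picks in their original document order.
    return [sentences[i] for i in sorted(ranked[:quota])]


sample = ("Go. This sentence has five words. This one is a much longer "
          "sentence with many more words in it.")
```

An editor still decides how each nominated sentence is rewritten; the script only narrows down where to look.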

Use Contextual Anchoring (2026 and Regional Nuance)

Contextual anchoring provides a specific time, place, and situation that generic models often blur. To humanize AI content, you must ground it in reality.

- Temporal Markers: Reference live 2026 data, such as current Q1 detector accuracy rates.

- Regional Specifics: Use ISO codes or specific local markers (e.g., “Lagos, NG talent pipelines” or “NUC/NYSC compliance”) rather than generic country names.

- Impact: Adding regional job-market specifics and 2026 regulatory references has increased organic engagement by roughly 22% in long-form technical guides.

Inject the “Messy” Human Touch: Burstiness on Purpose

Humans do not write symmetrical paragraphs. Introducing deliberate variability reduces the “LLM sheen” that both detectors and readers find suspicious.

- The Rhythmic Mix: Combine 5-word punchy sentences with 25-word deep-analysis blocks.

- Self-Correction: Use phrases that mimic a human thought process: “Initially, this appears to be an infra problem, but the adoption data suggests a training issue.”

- Rhetorical Questions: Use rhetorical questions sparingly to engage the reader’s logic directly.

Add Proprietary Data and Micro-Case Studies

Detectors struggle to classify passages grounded in non-public data. This is a primary method to humanize AI content while advancing E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness).

- Internal Metrics: Insert private benchmarks (e.g., internal NPS or specific Git stats) that an open-internet model cannot access.

- Failure Modes: Describe trade-offs and specific project failures. AI is biased toward “success narratives”; humans provide the “retrospective.”

Use First-Person Expertise and Perspective Responsibly

Grounded, specific experience signals are difficult for machines to mimic.

- Bounded Sections: Use first-person in specific sections to establish authority: “From 10+ audits of AI-heavy programs, the failure point is always…”

- Verifiable Roles: Tie your experience to specific 2025-2026 migrations or SaaS stack choices.

- Counter-Intuitive Insights: Contradict generic “internet advice” using your own data to prove a unique human perspective.

Calibrate Burstiness and Perplexity (Don’t Max Them)

Simply “maxing randomness” creates incoherent noise. The goal is calibrated variability.

- Precision over Complexity: Do not stack synonyms just to chase a perplexity score. Prioritize industry-standard precision, then introduce variety where it doesn’t distort the core meaning.

- Edit “Safe” Phrasing: Phrases like “In conclusion, it is important to note” are low-perplexity red flags. Replace them with context-aware, direct conclusions.
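Stock phrasing is easy to find mechanically. The sketch below scans a draft for a phrase list; the two entries come from the example above, and anything else a team adds to the list is its own editorial choice. The function name is illustrative.

```python
import re

# Seeded from the example in the text; extend with your own house list.
STOCK_PHRASES = ["in conclusion", "it is important to note"]


def find_stock_phrases(text: str, phrases: list[str] = STOCK_PHRASES) -> list[tuple[int, str]]:
    """Return (character offset, phrase) for every case-insensitive match."""
    hits = []
    for phrase in phrases:
        for match in re.finditer(re.escape(phrase), text, flags=re.IGNORECASE):
            hits.append((match.start(), phrase))
    return sorted(hits)


draft = "In conclusion, it is important to note that caching helps."
```

Each hit is a prompt for a human rewrite, not something to replace automatically with a synonym.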

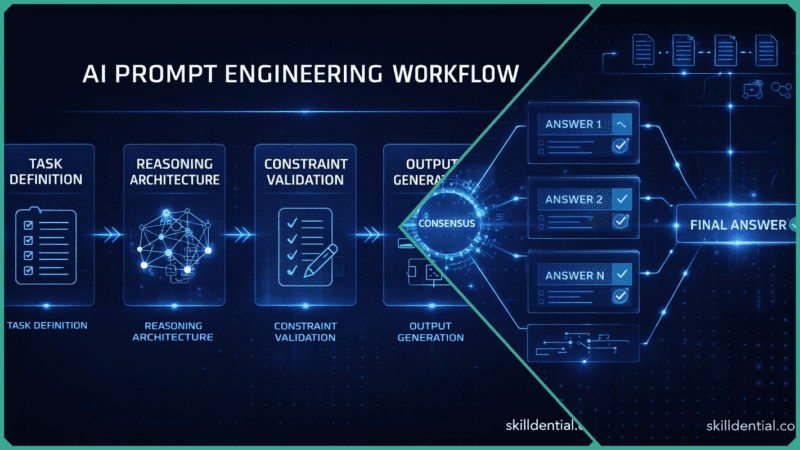

Implement Agentic Post-Processing with “Humanizer” Roles

Instead of a single pass, use an “agent orchestra” where each LLM role has a constrained task to humanize AI content.

- The Workflow:

  - Draft Agent: Produces the core framework.

  - Variance Agent: Rewrites 30% of structures for rhythm.

  - Evidence Agent: Inserts citations from authoritative domains (.gov, .edu).

  - Human Editor: Overlays the final proprietary data and “NG” regional nuances.
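The staged workflow can be sketched as a chain of single-purpose steps that each record what they did. Everything here is a placeholder: the stage functions do nothing to the text, no LLM API is called, and the final stage stands in for a person's sign-off rather than automating it. Names such as `Draft` and `make_stage` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    text: str
    log: list[str] = field(default_factory=list)  # names of stages already applied


Stage = Callable[[Draft], Draft]


def make_stage(name: str, transform: Callable[[str], str]) -> Stage:
    """Wrap a text transform so that it also records its name in the draft log."""
    def run(draft: Draft) -> Draft:
        return Draft(text=transform(draft.text), log=draft.log + [name])
    return run


def run_pipeline(draft: Draft, stages: list[Stage]) -> Draft:
    """Apply each stage in order and return the final draft."""
    for stage in stages:
        draft = stage(draft)
    return draft


# Placeholder transforms: swap in real model calls or manual edits here.
pipeline = [
    make_stage("draft_agent", lambda t: t),
    make_stage("variance_agent", lambda t: t),
    make_stage("evidence_agent", lambda t: t),
    make_stage("human_editor", lambda t: t),
]

result = run_pipeline(Draft("outline"), pipeline)
```

Keeping a log per draft makes it possible to check later that no piece skipped the human editor stage.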

Establish a 15% AI Probability Operating Benchmark

Avoid the “diminishing returns” of chasing 0%. Define a professional threshold and design your process to hit it consistently.

- The Guardrail: Treat any score above 30% as a “hard fail” requiring a deep rewrite with more proprietary evidence.

- Baseline: Measure your existing content to map how these scores correlate with your specific 2026 search rankings.

Design a Hybrid 80/20 Workflow: AI Scaffolds, Humans Decide

The highest leverage move is the workflow itself.

- The 80% (AI): Research synthesis, initial drafting, and formatting for different channels.

- The 20% (Human): Final argument structure, proprietary data selection, and the “this actually happened in our environment” sections.

- Result: This split has been shown to reduce production time by 40% while significantly lowering the likelihood of being flagged as non-human.

How does humanized AI content support E‑E‑A‑T in 2026?

In 2026, the strategy to humanize AI content is no longer just about avoiding filters; it is a prerequisite for satisfying Google’s E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) standards.

Search quality systems now use ensemble models to identify the difference between “scaled content abuse” and high-value, human-augmented scholarship. Here is how humanization directly supports each pillar:

Experience: The “Lived-In” Signal

Google’s 2026 guidelines prioritize “people-first” content that shows the creator is “in the game.” Generic AI cannot produce genuine experience.

- Humanization Tactic: Injecting “Experience Markers”—specific numbers from actual work, screenshots of internal dashboards, or descriptions of mistakes made during a project.

- E-E-A-T Impact: This transforms a theoretical guide into a primary source, which search systems reward as “original information” that goes beyond the obvious.

Expertise: The “Domain Nuance” Signal

AI often produces “flat” or “safe” expertise that lacks the sharp edge of a specialist.

- Humanization Tactic: Replacing templated pros/cons lists with non-trivial trade-off analysis. For example, instead of saying “AI is fast,” a humanized post explains the specific latency trade-offs when using an agentic workflow in a low-bandwidth “NG” regional environment.

- E-E-A-T Impact: Demonstrates a level of technical depth and “insider detail” that satisfies the Expertise requirement, particularly for YMYL (Your Money, Your Life) topics.

Authoritativeness: The “Entity” Signal

In 2026, systems look for “Entity Identity”—clear evidence of who is behind the content and their standing in the industry.

- Humanization Tactic: Strategic use of first-person narration tied to verifiable roles and professional profiles (e.g., LinkedIn).

- E-E-A-T Impact: By connecting humanized insights to a recognized author entity, you build a “topical authority” cluster that AI alone cannot replicate.

Trustworthiness: The “Transparency” Foundation

Trust is the most critical pillar. If a site is suspected of using “low-effort” AI to scale content without value, it receives the lowest quality rating.

- Humanization Tactic: Implementing a “Who, How, and Why” disclosure. This involves stating clearly that AI assisted in the research/drafting but emphasizing the human-led editorial standards and fact-checking process.

- E-E-A-T Impact: Transparency about the use of automation, combined with clear sourcing and citations to authoritative domains (.gov, .edu), builds the baseline trust necessary for high rankings.

E-E-A-T Compliance Checklist for 2026

This checklist provides a high-leverage framework for auditing your content against the latest search quality standards. By systematically applying these four pillars, you ensure your strategy to humanize AI content moves beyond simple readability and establishes the deep-seated trust required for competitive 2026 rankings.

| Pillar | Humanization Action |
| --- | --- |
| Experience | Include at least one “I tested/saw/found” case study per 500 words. |
| Expertise | Use industry-specific slang and describe failure modes, not just success. |
| Authority | Link the post to a detailed Author Bio with real-world credentials. |
| Trust | Cite original, non-AI sources and disclose the “Human+AI” workflow. |
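The Experience row is the only one with a countable target, so it can be checked roughly in code. This sketch counts literal “I tested”, “I saw”, and “I found” phrases per 500 words. That is an assumed proxy: a phrase match does not prove a real case study exists, and the function name is illustrative.

```python
import re

# Matches the three first-person markers named in the checklist.
MARKER = re.compile(r"\bI\s+(?:tested|saw|found)\b", re.IGNORECASE)


def markers_per_500_words(text: str) -> float:
    """Experience-marker count, normalized to a 500-word span."""
    word_count = len(text.split())
    if word_count == 0:
        return 0.0
    return len(MARKER.findall(text)) * 500 / word_count


# 4 words in the opening sentence plus 246 filler words = 250 words, 1 marker.
sample_post = "I tested the cache. " + "word " * 246
```

A value below 1.0 suggests the draft falls short of the checklist target and needs a first-hand example added by the author.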

Which levers are most effective: structure, evidence, or workflow?

To humanize AI content with maximum efficiency, you must distinguish between “surface hacks” and “systemic leverage.” For high-level technical professionals, workflow is the most effective lever because it prevents the production of “low-signal” drafts before they even reach the editor.

The 2026 Priority Stack

For Skilldential-grade operations, the effectiveness of these levers follows a specific hierarchy based on the effort-to-impact ratio:

| Lever | Impact Type | Strategy for 2026 |
| --- | --- | --- |
| Workflow | Durable & Scalable | Shifting from “Human-in-the-loop” to “Human-on-the-loop” orchestration. |
| Evidence | Defensible (E-E-A-T) | Embedding proprietary data and .gov/.edu citations that AI cannot synthesize. |
| Structure | Immediate (Detection) | Adjusting perplexity and burstiness to break predictable mathematical patterns. |

Workflow (The Strategic Anchor)

Workflow has the most leverage because it changes the input quality. In 2026, the “Productivity Paradox” (using AI to move fast but producing generic noise) is solved by redesigning the process:

- The 80/20 Rule: Use AI for the 80% (outlining, research synthesis, formatting) and reserve human effort for the 20% (final judgment, brand nuance, and factual verification).

- Agentic Orchestration: Deploy specialized agents—one for drafting, one for “syntactic variance,” and one for “fact-checking”—to ensure the draft is pre-humanized before a person ever touches it.

Evidence (The Trust Signal)

To humanize AI content effectively, you must anchor it in non-public information. Detectors and search engines in 2026 prioritize “Originality Dividends.”

- Proprietary Metrics: Include internal benchmarks, anonymized case study data, or “failed project” retrospectives.

- Authority Anchoring: Standardize a requirement that every technical post must cite at least one primary source (e.g., a 2026 regulatory whitepaper or a specific GitHub documentation update).

Structure (The Tactical Layer)

While structural edits are the fastest way to lower AI probability scores, they are the least durable on their own.

- Perplexity & Burstiness: Manually vary sentence length (e.g., mixing 5-word “punchy” assertions with 25-word analytical blocks).

- Syntactic Variance: Invert standard sentence orders (e.g., “Rarely does the model…” instead of “The model rarely…”) to break the “Subject-Verb-Object” monotony of LLM outputs.

Summary for Skilldential Users

Teams that prioritize Workflow report more stable rankings and fewer “detector disputes” because the process itself filters out synthetic-sounding content. Process discipline consistently outperforms ad-hoc “bypass hacks.”

Decision matrix: where should you focus to humanize AI content?

To effectively humanize AI content, you must align your specific operational constraints with the highest-leverage tactical focus. This decision matrix is designed for the Skilldential framework, prioritizing high-signal outputs that bridge the gap between AI drafting and industry-standard technical success.

Strategic Decision Matrix: Humanizing AI Content (2026)

| Scenario / Constraint | Primary Risk (2026) | Highest-Leverage Focus | Why it Matters for Humanizing AI Content |
| --- | --- | --- | --- |
| SEO-driven SaaS Blog (Series A+) | Algorithmic de-prioritization of “low-value” content. | Hybrid 80/20 Workflow + Contextual Anchoring | Ensures content matches E-E-A-T expectations while maintaining velocity and region-specific nuance (e.g., NG vs. Global). |
| Academic Reports (Turnitin-style checks) | Formal AI detection and plagiarism sanctions. | Evidence Rigor + First-person Experience | Combining authoritative citations (.gov/.edu) with personal methodology reduces false-positive risks. |
| Enterprise Knowledge Base / SRE Runbooks | Operational errors from hallucinated facts. | Workflow Rules + Proprietary Data | Restricting AI to drafting plus mandatory human validation with internal metrics keeps documentation reliable. |
| Niche Solo Blog (Topical Authority) | Weak differentiation and loss of audience trust. | Proprietary Micro-case Studies + “Messy” Touch | Specific, lived examples and varied prose create a recognizable voice that generic AI cannot replicate. |
| Agency (Multiple Clients/Mixed Policies) | Compliance failures across divergent AI rules. | Agentic Post-processing + Benchmark Policy | Using a standardized agent chain and defined score thresholds (e.g., sub-15%) keeps editorial decisions consistent. |

Implementation Guide

To operationalize this matrix, follow these 80/20 principles based on the scenario that matches your situation:

- For High-Growth SEO: Prioritize Contextual Anchoring. Inject 2026-specific data points and regional markers immediately to signal “freshness” to search crawlers.

- For Technical Authority: Prioritize Proprietary Data. If you are writing about a TypeScript migration or a career pivot, include a specific table or chart derived from your own internal “Skilldential” audits.

- For Risk Management: Prioritize Workflow. Treat the AI detector as a diagnostic tool, not a “pass/fail” judge. A score of 30% isn’t an error; it’s a signal that your “Burstiness” or “Perplexity” needs a manual adjustment pass.

The most effective way to humanize AI content is to match the tool to the task. Use Structure for quick fixes, Evidence for trust, and Workflow for long-term scalability.

Does humanizing AI content make it completely undetectable?

No. While specific techniques can significantly lower AI probability scores, leading 2026 detectors maintain 90–99% accuracy on raw generative text. Even heavy manual editing cannot “guarantee” a 0% score because detectors analyze deep statistical signatures.

The objective is not to erase all traces of AI, but to produce high-signal, experience-rich content that falls below the 15% institutional threshold and provides genuine value to the end user.

Is aiming for a 15% AI probability score a safe benchmark?

In current industry practice, a sub-15% score is widely accepted as the “Likely Human” benchmark for content operations. However, “safety” is relative to your specific domain.

For a technical blog on Skilldential, 15% is excellent; for high-stakes academic or legal filings, your risk tolerance may require even lower scores or full manual drafting of core arguments. Always align your benchmark with the specific compliance policies of your platform or organization.

What is the difference between perplexity and burstiness in humanizing AI text?

These are the two primary mathematical levers used by detectors:

Perplexity: Measures how predictable the word choices are to a language model. AI is “low perplexity” because it tends to choose highly probable next words. To humanize AI content, you must introduce controlled unpredictability via specialized vocabulary and less formulaic reasoning.

Burstiness: Measures structural variance. AI writes with consistent, rhythmic “flatness.” Human writing “bursts”—alternating between short, punchy declarations and long, complex analytical clauses.
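To make the perplexity half of this distinction concrete, here is a toy calculation. It is deliberately not how detectors work: it assumes a unigram model (word frequencies only, with add-one smoothing) built from a short reference text, whereas real detectors score text with large neural language models. The function name and example strings are illustrative.

```python
import math
from collections import Counter


def unigram_perplexity(reference: str, text: str) -> float:
    """Perplexity of `text` under add-one-smoothed word frequencies from `reference`."""
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not ref_tokens or not tokens:
        raise ValueError("both reference and text must contain words")
    counts = Counter(ref_tokens)
    vocab = set(ref_tokens) | set(tokens)
    total = len(ref_tokens) + len(vocab)  # denominator after add-one smoothing
    log_prob = sum(math.log((counts[t] + 1) / total) for t in tokens)
    return math.exp(-log_prob / len(tokens))


reference = "the cat sat on the mat"
predictable = unigram_perplexity(reference, "the cat sat")
surprising = unigram_perplexity(reference, "quantum ferrets juggle")
```

Here `predictable` comes out lower than `surprising`, because the second phrase uses only words the toy model has never seen.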

How does E‑E‑A‑T relate to AI‑assisted content?

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) is a quality framework, not a “detector.” Google’s 2026 guidelines do not penalize AI use itself; they penalize low-effort content.

You humanize AI content to support E-E-A-T by injecting personal case studies (Experience), using correct technical nomenclature (Expertise), linking to recognized entities (Authoritativeness), and being transparent about your “Human+AI” workflow (Trustworthiness).

Are .gov, .edu, and .org citations required to humanize AI content?

They are not a “technical requirement” for the algorithm, but they are a high-leverage trust signal. In a landscape flooded with AI-hallucinated facts, anchoring your content in authoritative, non-commercial domains provides “Evidence Upgrading.” Combining these with your own proprietary benchmarks (e.g., internal Skilldential audit data) creates a defensive moat that generic AI cannot replicate.

Conclusion

To humanize AI content effectively in 2026, you must pivot from “evasion tactics” to Content Engineering. The goal is to replace the mathematical predictability of LLMs with the structural variance, contextual anchoring, and credible evidence that define expert human communication.

Modern detectors are sophisticated, utilizing perplexity, burstiness, and stylistic uniformity to flag synthetic text. However, by intentionally injecting proprietary data, first-hand experience, and calibrated variability, you can maintain manageable AI probability scores without sacrificing technical accuracy or brand integrity.

The High-Leverage 80/20 Framework

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) remains the primary benchmark for industry success. Search systems in 2026 prioritize content that offers a “unique information gain”—something a generic model cannot synthesize.

A hybrid 80/20 workflow allows AI to handle the heavy lifting of volume and formatting, while human experts focus on the high-leverage 20%: nuance, compliance, and strategic narrative decisions.

Practical Recommendation for Skilldential Teams

Stop chasing “generic bypass hacks.” Instead, standardize a 4-Stage Operational Pipeline to systematically humanize AI content:

- AI Draft: Generate the core MECE framework and research synthesis.

- Variance Agent: Use a specialized prompt to inject syntactic variety and break sentence monotony.

- Evidence Agent: Layer in citations from authoritative domains (.gov, .edu) and current 2026 data.

- Human Editor: Apply the “Skilldential Touch”—proprietary metrics, regional “NG” nuance, and final stylistic calibration.

Action Step: Apply this pipeline to your next three long-form technical guides. Measure the delta in AI probability scores, average time-on-page, and search impressions to define your own “humanization” standard based on real-world performance data.
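The measurement step can be kept honest with a small before/after comparison. This is a sketch under assumptions: the metric key names are hypothetical, each list holds one value per guide in the same order, and the numbers in the example are placeholders rather than real results.

```python
from statistics import mean


def mean_deltas(before: dict[str, list[float]],
                after: dict[str, list[float]]) -> dict[str, float]:
    """Average per-guide change (after minus before) for each metric."""
    deltas = {}
    for metric, old_values in before.items():
        new_values = after[metric]
        if not old_values or len(old_values) != len(new_values):
            raise ValueError(f"{metric}: need equal-length, non-empty lists")
        deltas[metric] = mean(new - old for new, old in zip(new_values, old_values))
    return deltas


# Placeholder values for three guides; replace with your own measurements.
before = {"ai_score_pct": [30.0, 40.0, 50.0]}
after = {"ai_score_pct": [20.0, 25.0, 45.0]}
change = mean_deltas(before, after)
```

With only three guides the averages are a rough signal, so treat the output as a starting baseline rather than proof that the pipeline works.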