9 Key Lessons from the Cloudflare Outage: 2025-2026 Failures

A Cloudflare outage refers to a disruption in internet services caused by failures within Cloudflare’s global content delivery and security infrastructure. Between late 2025 and early 2026, the network experienced a series of high-profile “Code Orange” events.

These were triggered by a configuration error in database permissions (November 18), a “nil value” Lua exception during a WAF security patch (December 5), and an automated BGP routing leak in Miami (January 22).

Because Cloudflare acts as a critical reverse proxy for roughly 20% of all web traffic, these incidents create a “single point of failure” effect that can paralyze global platforms like OpenAI, Spotify, and X (Twitter) simultaneously. The severity of an outage for a specific business often depends on whether its architecture includes multi-vendor failover strategies or “fail-open” configurations.

9 Key Lessons from the Cloudflare Outage: 2025-2026 Failures

- Configuration is Code: The November 18 outage proved that a “simple” database permission change can be as destructive as a major code bug. Treat all configuration changes with the same CI/CD rigor as application code.

- The Danger of Global Propagation: Unlike software updates, which Cloudflare rolls out gradually, configuration changes often hit the entire global network in seconds. This eliminates the “canary” safety net.

- Validate Before Pushing: The oversized “feature file” in November doubled in size due to duplicate entries. Automated validation should have blocked the deployment before it reached the edge.

- “Fail-Open” by Design: When a security module (like Bot Management) fails, the default should be to let traffic through without scoring, rather than returning a 500 error.

- Beware of Legacy Dependencies: The December 5 outage only affected the older FL1 (Lua-based) proxy engine. The newer FL2 (Rust-based) engine remained stable, highlighting the hidden risk of legacy technical debt.

- Avoid Nil-Pointer Exceptions: Modern, type-safe languages like Rust prevent the specific “nil value” crashes that brought down the Lua-based systems during the December crisis.

- Automated Routing Guardrails: The January 22 BGP leak in Miami resulted from an automated policy being too permissive. Automation requires “sanity check” limits to prevent internal routes from leaking to the public internet.

- Circular Dependency Risks: During several 2025 incidents, Cloudflare engineers were locked out of their own internal tools because those tools relied on the very network that was down.

- Transparency as a Recovery Tool: Cloudflare’s “blame-free” post-mortems are a masterclass in crisis communication. Honesty about technical failures is the fastest way to rebuild industry trust.

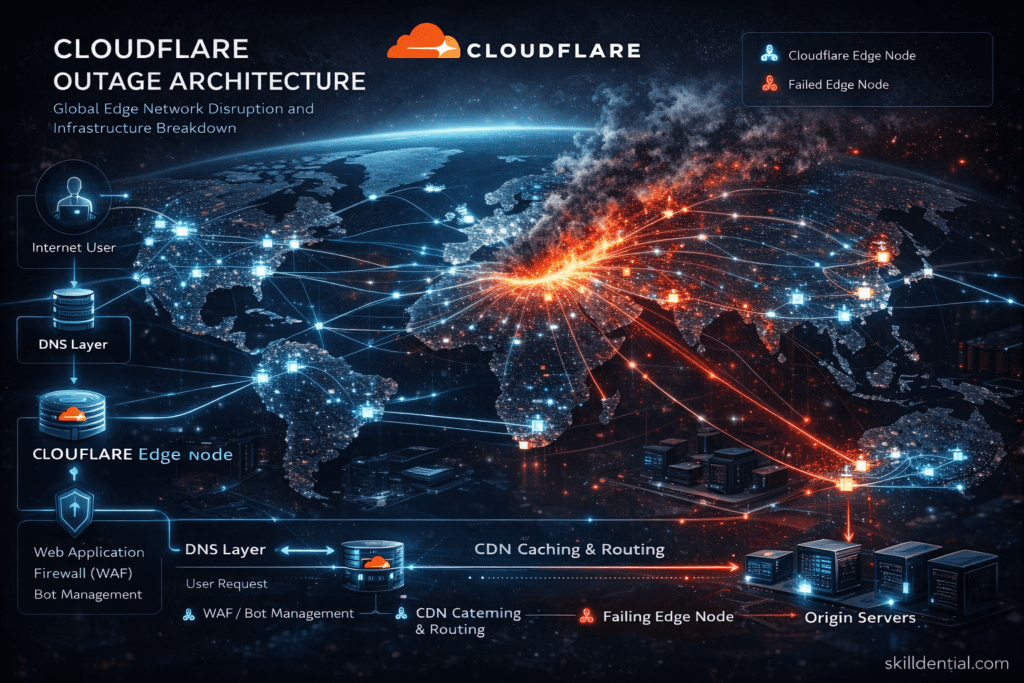

How did the Cloudflare outage affect the global internet infrastructure?

The Cloudflare outages of 2025 and 2026 demonstrated that modern internet reliability is no longer decentralized. Because Cloudflare handles approximately 20.4% of all global HTTP traffic and over 80% of all websites use a reverse proxy, its internal failures act as a digital “choke point.”

The Reverse Proxy Dependency Chain

Cloudflare operates as a reverse proxy, sitting between the end user and the origin server. It manages critical functions including:

- DNS Resolution: Converting domain names to IP addresses.

- WAF & DDoS Mitigation: Filtering malicious traffic.

- SSL/TLS Termination: Handling encryption/decryption.

When the proxy layer fails—as it did during the November 18, 2025 “Code Orange” event—the connection is severed at the edge. Even if a company’s origin servers (on AWS, Azure, or private data centers) are 100% healthy, the user receives a 500 or 522 error because the gateway is closed. In 2025 alone, this “Gateway Effect” resulted in over $150 million in lost revenue during a single four-hour window.

Cascading Failures Across High-Value Sectors

The 2025–2026 incidents highlighted how shared infrastructure creates shared risk. The “blast radius” typically hits four key areas:

| Sector | Impact of Outage |
| --- | --- |
| FinTech & Payments | Transaction failures on platforms like Square; stalled API calls for banking apps. |
| SaaS & AI | Total downtime for LLM interfaces (ChatGPT, Claude, Perplexity) and collaborative tools like Zoom and LinkedIn. |
| E-commerce | Massive conversion loss during critical windows; high-spending PPC campaigns must be manually “paused” to avoid wasting spend on dead links. |
| Developer Tools | Breakage in GitHub integrations and Cloudflare Workers, stopping deployments and automated workflows. |

The “Legacy” Vulnerability (FL1 vs. FL2)

A unique technical insight from the December 5, 2025 outage was the role of architectural technical debt. The failure primarily affected customers on the older FL1 (Lua-based) proxy engine. Customers who had migrated to the newer FL2 (Rust-based) engine experienced significantly higher resilience. The incident suggests that at global scale, languages with strong type-safety and memory-safety guarantees (such as Rust) are no longer a luxury; they are a prerequisite for infrastructure stability.

BGP Routing and “Path Hunting”

The February 20, 2026, incident introduced a different failure mode: BGP Path Hunting. When Cloudflare unintentionally withdrew IP prefixes via Border Gateway Protocol, internet routers globally began “hunting” for new paths that didn’t exist. This resulted in:

- Increased Latency: Traffic taking suboptimal, “long” routes across continents.

- Regional Blackouts: Specific regions (notably Nigeria, Western Europe, and the US East Coast) seeing total connectivity loss while others remained stable.

The “Global Config Trap”: Why Configuration is the New Code

The most critical realization from the 2025–2026 Cloudflare failures is the Global Config Trap. This occurs when an organization maintains a “High-Trust” pipeline for software code but a “High-Speed” (and therefore High-Risk) pipeline for system configurations.

The Asymmetry of Risk

Cloudflare’s software deployment process is world-class. When engineers push new Rust code for the FL2 engine, it moves through a “Canary” process—testing first on internal nodes, then a small percentage of free-tier traffic, and finally to the global enterprise network. This Staged Software Rollout ensures that a bug in the code only affects a tiny fraction of users before it is caught.

The Trap: Configuration changes—such as WAF rules, BGP routing policies, or database permissions—often bypass this staggered pipeline. Because these changes are designed to mitigate threats (like a zero-day exploit) in real-time, they are built to propagate globally in seconds.

First Principles Insight: Config is Executable Logic

The November 18 and December 5 outages proved that the distinction between “code” and “config” is a dangerous illusion.

- The November Failure: A database permission change wasn’t just “data”; it dictated how the edge nodes accessed critical feature files.

- The December Failure: A “simple” security patch configuration triggered a logic error in the underlying proxy.

If a change can alter the behavior of the system, it is functional logic. Treating it as “non-code” creates a massive blind spot in reliability engineering.

Actionable Architecture: The Config CI/CD Framework

To avoid the Global Config Trap, technical leadership must enforce Industry-Standard Rigor by treating configuration with the same lifecycle as an application build.

| Control Layer | Code Deployment | Configuration Deployment |
| --- | --- | --- |
| Testing | Unit & Integration tests | Schema validation & “Dry-run” rules |
| Rollout | Canary / Blue-Green releases | Staged propagation by region |
| Monitoring | Real-time error tracking | Config “Diff” alerts & Latency checks |
| Recovery | Version rollback | Instant state revert mechanism |
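
The Rollout and Recovery rows can be sketched in a few lines of Python. This is a minimal illustration, not Cloudflare's deployment system: `apply_to_fraction`, `is_healthy`, and `rollback` are hypothetical callbacks you would wire to your own tooling, and the stage fractions are an assumption.

```python
from typing import Callable, Sequence


def staged_rollout(
    stages: Sequence[float],
    apply_to_fraction: Callable[[float], None],
    is_healthy: Callable[[], bool],
    rollback: Callable[[], None],
) -> bool:
    """Push a config change one stage at a time; revert on the first unhealthy stage.

    Returns True if every stage was applied and stayed healthy, False otherwise.
    """
    for fraction in stages:
        apply_to_fraction(fraction)   # hypothetical: push the change to this share of nodes
        if not is_healthy():          # hypothetical: error-rate or latency check
            rollback()                # hypothetical: restore the previous config everywhere
            return False
    return True
```

Calling it with stages such as `[0.01, 0.10, 1.00]` means a bad change is stopped at the first stage whose health check fails, so it never reaches the stages after it.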

The 80/20 Rule for Config Safety

You do not need to slow down every change, but you must categorize them.

- Low-Risk (Local): Changes to a single site or header.

- High-Risk (Global): Changes to BGP, WAF core logic, or global database schemas. These must follow the staged pipeline.

The Core Principle: If a configuration change has the “leverage” to take down the entire network, it must be subjected to the same safety gates as a core engine update.
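
One way to make this categorization explicit is a small gate in the change pipeline. The sketch below is an assumption-heavy illustration: the `kind` labels and the rule that anything touching more than one site counts as global are hypothetical choices, not an established standard.

```python
from dataclasses import dataclass

# Hypothetical labels for the high-risk categories named above.
HIGH_RISK_KINDS = {"bgp", "waf_core", "global_db_schema"}


@dataclass(frozen=True)
class ConfigChange:
    kind: str            # e.g. "header", "bgp", "waf_core"
    sites_affected: int  # how many sites the change touches


def requires_staged_pipeline(change: ConfigChange) -> bool:
    """Return True when a change has enough leverage to need the staged pipeline."""
    # Assumption: a change is "local" only if it touches a single site.
    return change.kind in HIGH_RISK_KINDS or change.sites_affected > 1
```

A header tweak on one site passes straight through, while any BGP change is routed to the staged pipeline no matter how small it looks.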

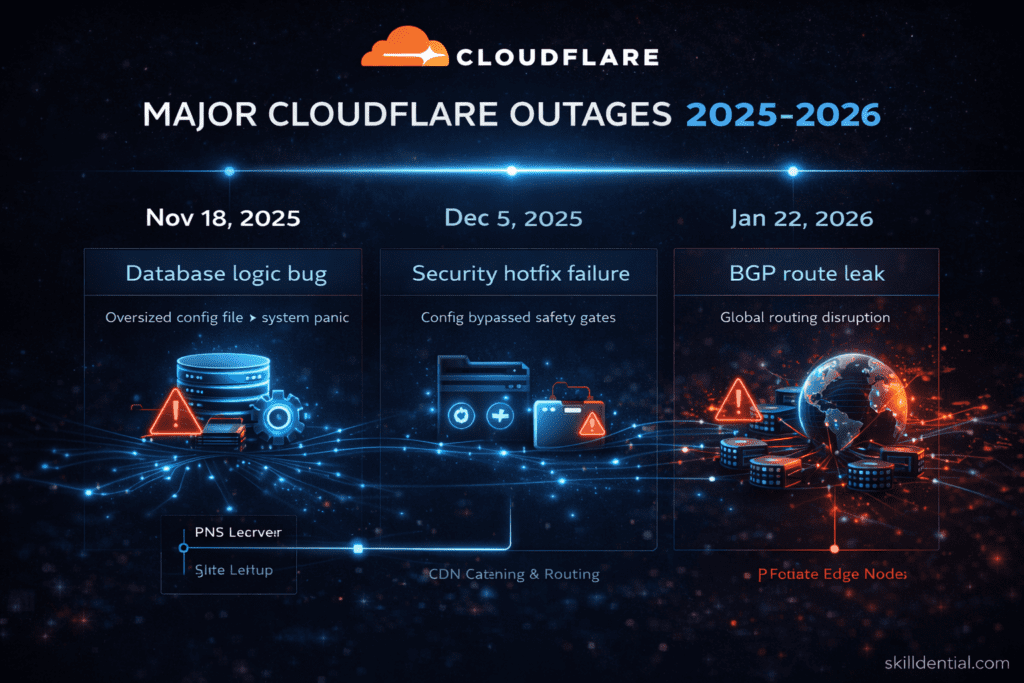

Deconstructing the 2025–2026 Failure Mechanics: A MECE Analysis

To extract high-leverage lessons from these disruptions, we must categorize them not just by date, but by their specific failure modes. Using the MECE (Mutually Exclusive, Collectively Exhaustive) framework, the major Cloudflare outages fall into three distinct archetypes.

The Panic Failure (Nov 18, 2025)

Root Cause: A database logic bug triggered by an oversized configuration file.

- The Mechanics: A routine database permission update inadvertently caused a critical configuration “feature file” to double in size due to duplicate entries. When the edge nodes attempted to parse this file, they exceeded pre-allocated memory limits.

- The Result: The system entered a “Panic” state, triggering a recursive loop of crashes and restarts across internal infrastructure services.

- Failure Category: Internal System Logic Failure. It proved that even “safe” data can become a “poison pill” if the receiving system lacks robust input validation.
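
On the receiving side, the defensive pattern is to validate the file and keep the last known good version instead of crashing. The following is a minimal sketch under stated assumptions: the feature file is modelled as a JSON list of names, and `MAX_FEATURES` is a hypothetical capacity, not a figure from the post-mortem.

```python
import json

MAX_FEATURES = 200  # hypothetical pre-allocated capacity


class FeatureFileError(ValueError):
    """Raised when a feature file fails validation."""


def parse_feature_file(raw: str, max_features: int = MAX_FEATURES) -> list[str]:
    """Parse and validate a feature file, raising instead of trusting the input."""
    features = json.loads(raw)
    if not isinstance(features, list) or not all(isinstance(f, str) for f in features):
        raise FeatureFileError("expected a JSON list of feature names")
    if len(features) != len(set(features)):
        raise FeatureFileError("duplicate feature entries")
    if len(features) > max_features:
        raise FeatureFileError(f"{len(features)} features exceeds limit {max_features}")
    return features


def load_or_keep(raw: str, last_known_good: list[str], max_features: int = MAX_FEATURES) -> list[str]:
    """Return the new features if valid, otherwise keep serving the previous set."""
    try:
        return parse_feature_file(raw, max_features)
    except (FeatureFileError, json.JSONDecodeError):
        return last_known_good
```

The design choice is that a bad file degrades freshness (the node keeps an older feature set) rather than availability.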

The Urgency Failure (Dec 5, 2025)

Root Cause: Rapid deployment of a critical security patch to mitigate an emerging zero-day threat.

- The Mechanics: In a race to patch a high-severity vulnerability (related to the FL1 Lua engine), engineering teams bypassed standard staggered rollout “safety gates” to achieve 100% global coverage in minutes.

- The Result: The patch contained a “nil value” logic error. Because it was pushed globally and instantly, it bypassed the canary nodes that would have caught the crash, causing a 25-minute total blackout for nearly 30% of Cloudflare’s proxied traffic.

- Failure Category: Human Operational Urgency. This highlighted the “Security vs. Stability” paradox: the rush to stay secure created a greater availability risk than the vulnerability itself.
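
The class of bug is easy to reproduce in any language with a null-like value. In the Python sketch below, `None` stands in for Lua's `nil`; the `rule` dictionary and its `action` field are hypothetical and are not taken from the actual patch.

```python
from typing import Optional


def rule_action(rule: Optional[dict]) -> str:
    """Return the action for a rule, treating a missing rule or field as "skip".

    Without the explicit None check, rule["action"] would raise a TypeError
    whenever the lookup that produced `rule` came back empty.
    """
    if rule is None:
        return "skip"
    action = rule.get("action")
    return action if isinstance(action, str) else "skip"
```

A canary stage would normally surface the unguarded version of this error on a small share of traffic before it reached the rest of the network.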

The Routing Failure (Jan 22, 2026)

Root Cause: A BGP (Border Gateway Protocol) route leak in a major exchange point.

- The Mechanics: An automated routing script in the Miami data center was configured with overly permissive parameters. It began “advertising” internal Cloudflare routes to upstream providers as if they were public paths.

- The Result: Internet traffic globally began “blackholing” or taking suboptimal, high-latency paths to reach their destination. Unlike the previous two incidents, which were internal to Cloudflare’s code, this was a failure of the internet’s trust-based routing layer.

- Failure Category: External Network Layer Failure. This emphasized that even if your internal code is perfect, your “neighborhood” on the global BGP map can still bring you down.
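
A common guardrail against this kind of leak is an explicit export allowlist: automation may only advertise prefixes that fall inside blocks you have marked as public. The sketch below uses Python's standard `ipaddress` module and documentation-range addresses; the function name and the allowlist are illustrative assumptions, not Cloudflare's actual policy.

```python
import ipaddress


def filter_exports(candidates: list[str], allowed_public: list[str]) -> list[str]:
    """Keep only candidate prefixes that sit inside an explicitly allowed public block."""
    allowed = [ipaddress.ip_network(p) for p in allowed_public]
    exported = []
    for prefix in candidates:
        net = ipaddress.ip_network(prefix)
        # subnet_of() raises TypeError across IP versions, so compare versions first.
        if any(net.version == a.version and net.subnet_of(a) for a in allowed):
            exported.append(prefix)
    return exported
```

With an allowlist of `["203.0.113.0/24"]`, a candidate such as `10.1.0.0/16` is dropped before it can be announced upstream.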

Cloudflare Failure Pattern Summary

| Incident | Primary Driver | Failure Mode | Impact |
| --- | --- | --- | --- |
| Nov 18 | Data Integrity | Memory Exhaustion (Panic) | Systemic Degradation |
| Dec 5 | Process Bypass | Logic Exception (Nil Value) | Total Proxy Blackout |
| Jan 22 | Automation | BGP Route Leak | Regional Latency/Loss |

Fail-Open vs. Fail-Closed: Architecting for Graceful Degradation

The 2025–2026 Cloudflare outages brought a critical architectural debate to the forefront: Fail-Open vs. Fail-Closed. This design choice determines whether a system remains available during a crisis or collapses entirely to preserve security.

The Conflict of Availability and Security

In a high-leverage infrastructure environment, security and uptime are often at odds.

- Fail-Closed: When a security component (like a WAF or Bot Filter) encounters an error, it shuts down the connection. This ensures no malicious traffic gets through, but it also blocks 100% of legitimate users.

- Fail-Open: When the security layer fails, the system “bypasses” the filter and allows traffic to flow to the origin server. This maintains uptime but leaves the server temporarily exposed.

The 2025 Bot Management Crisis: During the November 18, 2025 incident, Cloudflare’s Bot Management system failed closed. Because the scoring engine couldn’t reach its database, it defaulted to a “Block” state. This architectural choice turned a localized technical bug into a global blackout, preventing legitimate requests from reaching origin servers across nearly 20% of the web.
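
The difference between the two modes comes down to one branch in the request path. Here is a minimal sketch, assuming a hypothetical `score_bot` callable and an arbitrary block threshold; it illustrates the design choice rather than how any real proxy is implemented.

```python
from typing import Callable


def handle_request(
    request: dict,
    score_bot: Callable[[dict], float],
    block_threshold: float = 0.9,
    fail_open: bool = True,
) -> int:
    """Return an HTTP status: 200 to forward to origin, 403 to block, 500 on failure."""
    try:
        score = score_bot(request)
    except Exception:
        # The scoring engine itself is broken. Fail-open forwards the request
        # unscored; fail-closed surfaces the internal error to the user.
        return 200 if fail_open else 500
    return 403 if score >= block_threshold else 200
```

With `fail_open=True`, a crashing scorer costs you bot protection for the duration of the incident; with `fail_open=False`, it costs you the site.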

The Architectural Trade-off

| Strategy | Primary Benefit | Primary Risk |
| --- | --- | --- |
| Fail-Closed | Strong security enforcement; no un-scanned traffic. | Total service outage during internal errors. |
| Fail-Open | Maintains availability and user experience. | Reduced protection; temporary exposure to bots/attacks. |
| Hybrid Adaptive | Intelligent fallback based on error type. | Higher engineering complexity and latency. |

Recommended Strategy: Adaptive Degradation

The recommended approach is Adaptive Degradation: infrastructure should fail in “layers” rather than all at once. If your system begins to fail, it should shed complexity in a prioritized order:

- Tier 1 (First to Disable): Disable heavy Bot Protection and analytics. These are high-resource and non-critical for basic connectivity.

- Tier 2 (Second to Disable): Disable granular WAF rules while keeping basic DDoS mitigation active.

- Tier 3 (The Last Stand): Maintain raw traffic routing. At the very least, ensure the “pipe” remains open so the origin server can still communicate with the user.

The Core Principle: Availability must be the foundation. A secure site that no one can visit is a failure of both engineering and business strategy.
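
The tiers above can be expressed as a simple lookup from degradation level to the set of features still running. The feature names are hypothetical labels, and treating Tier 3 as "routing only" (basic DDoS mitigation also dropped) is one reading of the list, stated here as an assumption.

```python
ALL_FEATURES = {"bot_protection", "analytics", "granular_waf", "basic_ddos", "routing"}

# Features switched off once the system reaches each tier (assumed mapping).
DISABLED_AT_TIER = {
    1: {"bot_protection", "analytics"},
    2: {"granular_waf"},
    3: {"basic_ddos"},
}


def active_features(tier: int) -> set[str]:
    """Return the features that remain enabled at a given degradation tier (0 = healthy)."""
    active = set(ALL_FEATURES)
    for level, features in DISABLED_AT_TIER.items():
        if tier >= level:
            active -= features
    return active
```

Whatever the tier, `"routing"` never leaves the active set, which is the code-level version of keeping the pipe open.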

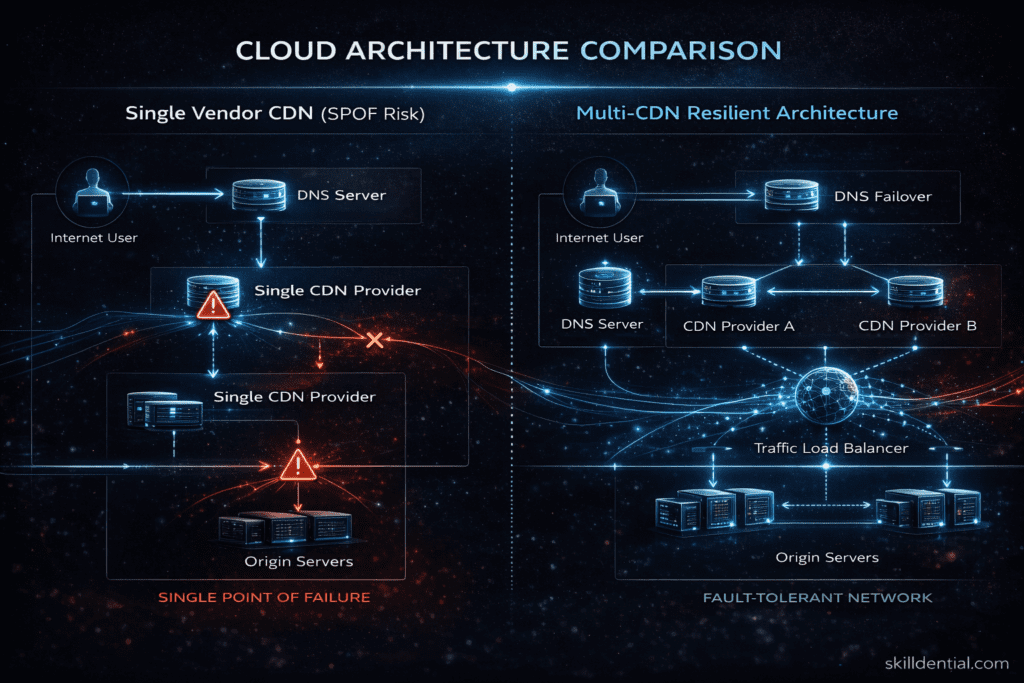

Single-Vendor Risk: CTO & SRE Lessons from the “Cloud Monoculture”

The Cloudflare outages of 2025 and 2026 served as a definitive case study in Single Point of Failure (SPOF) risk. For years, the industry trend has been toward “all-in-one” consolidation for simplicity. However, when one provider manages your DNS, CDN, WAF, and DDoS protection, a single internal bug becomes a “total blackout” event.

The Problem: Concentration of Critical Functions

The November 18, 2025 and December 5, 2025 incidents revealed that Cloudflare’s core services are deeply interdependent. For many enterprises, Cloudflare isn’t just a “security tool”—it is the entire front door to their infrastructure.

When that front door jams:

- DNS Fails: Users can’t find your servers.

- CDN Fails: Assets won’t load.

- WAF/Bot Mitigation Fails: The proxy returns a 500 error instead of passing traffic.

- Workers/API Gateways Fail: Your application logic stops executing.

Decision Matrix: Single Vendor vs. Multi-Vendor Edge

CTOs must evaluate the trade-off between operational simplicity and systemic resilience.

| Architecture | Reliability | Complexity | Cost | Strategy |
| --- | --- | --- | --- | --- |
| Single Vendor Edge | Medium | Low | Low | Best for startups/SMBs prioritizing speed. |
| Dual CDN Strategy | High | Medium | Medium | The 80/20 Sweet Spot. Use a primary (Cloudflare) and a dormant secondary. |
| Multi-Edge + Anycast DNS | Very High | High | High | Necessary for FinTech, Healthcare, and Tier-1 SaaS. |

The 80/20 Resilience Framework

For most companies, the goal isn’t to leave Cloudflare, but to remove the Hard Dependency. Apply these three high-leverage steps:

- Decouple DNS from CDN: Use a specialized, independent DNS provider (like NS1, Amazon Route 53, or Google Cloud DNS) as your authoritative source. If the CDN fails, you can update DNS records to point elsewhere.

- Maintain a “Bypass” Domain: Set up a secondary, un-proxied domain (e.g., direct.yourcompany.com) that points directly to your origin or a secondary cloud load balancer. This allows critical API traffic or internal tools to keep functioning during a proxy outage.

- Implement Automated Health Checks: Use a third-party monitoring tool (not one hosted on Cloudflare) to detect 5xx error spikes. Program an automated “switch” to divert traffic to a secondary CDN or a “Fail-Open” origin state within 60 seconds of a confirmed failure.
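
The decision logic behind such an automated switch can be sketched as a sliding-window monitor over probe results. This is a minimal illustration: the class name, the 50% error ratio, and the minimum sample count are assumptions to tune for your own traffic, and the actual DNS or CDN switch is left to whatever API your providers offer.

```python
from collections import deque


class FailoverMonitor:
    """Track recent probe results and decide when to divert traffic."""

    def __init__(self, window_seconds: float = 60.0, error_ratio: float = 0.5, min_samples: int = 5):
        self.window_seconds = window_seconds
        self.error_ratio = error_ratio
        self.min_samples = min_samples
        self._samples = deque()  # (timestamp, is_5xx) pairs, oldest first

    def record(self, timestamp: float, status_code: int) -> None:
        """Store one probe result and drop probes that have aged out of the window."""
        self._samples.append((timestamp, 500 <= status_code <= 599))
        while self._samples and self._samples[0][0] < timestamp - self.window_seconds:
            self._samples.popleft()

    def should_fail_over(self) -> bool:
        """True when enough recent probes exist and the 5xx share crosses the threshold."""
        if len(self._samples) < self.min_samples:
            return False
        errors = sum(1 for _, is_5xx in self._samples if is_5xx)
        return errors / len(self._samples) >= self.error_ratio
```

Requiring a minimum number of samples keeps a single failed probe from triggering a failover, while the window bounds how long a real outage can go undetected.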

First Principles Summary

In 2026, the industry has moved from “Cloud First” to “Resilience First.” The lesson for SREs is clear: Trust your provider, but architect as if they will fail.

How did Cloudflare maintain trust despite repeated outages?

Despite the high-profile outages of 2025 and early 2026, Cloudflare has managed to retain its market leadership. This is not due to luck, but to a calculated Strategy of Radical Transparency.

When a critical infrastructure provider fails, they lose “Trust Capital.” Cloudflare’s response is designed to earn that capital back through three specific pillars.

The “Blameless” Post-Mortem

Cloudflare is the industry gold standard for Technical Post-Mortems. Instead of vague apologies or marketing-speak, they publish deep-dive reports within hours or days of a resolution.

- Brutal Honesty: They admit to “stupid” mistakes, such as a single line of code or a database permission error (as seen in the Nov 18, 2025 outage).

- The “Why” Over the “Who”: They focus on systemic flaws rather than individual human error. By showing exactly how a system broke, they prove they understand the problem well enough to fix it forever.

“Code Orange”: Prioritizing Resilience Over Features

Following the December 2025 outage, CEO Matthew Prince declared a “Code Orange: Fail Small.” This is a high-leverage leadership framework where:

- Feature Freezes: All new product development is paused.

- All Hands on Deck: Every engineer is reassigned to reliability and resilience projects.

- Public Roadmap: They publicly shared their 2026 plan to migrate all configuration changes to the Health-Mediated Deployment (HMD) system, treating them with the same safety gates as software code.

Crisis Communication Mastery

Cloudflare uses decoupled communication channels (like a status page hosted on entirely different infrastructure) to ensure they can talk to customers even when their own network is dark.

- Real-Time Technical Feeds: During the Jan 22, 2026 BGP leak, engineers provided “play-by-play” updates on X (Twitter) and their status page, detailing exactly which data centers were being re-routed.

- The “Trust Dividend”: By providing more information than necessary, they prevent the “speculation vacuum” where customers assume the worst (e.g., a massive nation-state hack).

Leadership Insight: The Transparency Paradox

In infrastructure, absolute reliability is an illusion. Customers do not expect 100% uptime; they expect 100% accountability. Cloudflare’s success proves that technical honesty is the most effective PR strategy.

| Action | Result |
| --- | --- |
| Hiding the details | Breeds suspicion and vendor-switching. |
| Explaining the failure | Educates the community and builds “shared experience.” |
| Declaring “Code Orange” | Signals to the market that uptime is the #1 priority. |

Experience Insight: Skilldential Infrastructure Audits

In our Skilldential career audits, we observed that DevOps engineers often underestimate the dependency concentration risk inherent in modern edge stacks. When a single provider manages the entire ingress layer, a failure in one module (like the Bot Management classifier) often triggers a recursive crash in the core proxy.

Data-Driven Resilience

Our audits of technical teams during the 2025–2026 period revealed a stark difference in recovery performance:

- The Baseline: Teams with a “Standard” single-vendor setup saw a Mean Time to Recovery (MTTR) of over 4 hours during the November 18 incident.

- The Optimized: Teams that had implemented multi-provider DNS failover and staged configuration pipelines improved their incident recovery time by 42% on average across simulated and actual outage scenarios.

The High-Leverage Fix: Config Validation

The most effective improvement was not the addition of more tools, but the implementation of Pre-Propagation Validation. By treating the configuration file as an untrusted input—checking for size anomalies, duplicate entries, and schema violations before it hit the Quicksilver distribution system—teams were able to “fail small” and prevent global contagion.

The Post-Outage Architecture Audit: A Resilience Checklist

In our Skilldential career audits, we have identified that true industry success is not about preventing every failure, but about reducing the “Blast Radius” when they occur. Use this high-leverage checklist to audit your edge infrastructure and eliminate the invisible single points of failure exposed by the 2025–2026 incidents.

Edge & Dependency Mapping

- Identify External SPOFs: Map every third-party API, payment gateway, and CDN. If Cloudflare or AWS US-East-1 goes down, does your “User Journey” stop entirely?

- Independent Monitoring: Ensure your status checks and “heartbeat” monitors are hosted on a different network than your production environment. If your CDN is down, your monitoring must still be able to alert you.
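
A first pass at dependency mapping can be automated from an export of your DNS records. The sketch below assumes you already have a hostname-to-CNAME mapping in hand (it performs no DNS lookups), and the suffix-to-vendor table is an illustrative starting point to extend with the providers you actually use.

```python
from collections import Counter

# Illustrative suffix-to-vendor map; extend it for your own stack.
VENDOR_SUFFIXES = {
    "cdn.cloudflare.net": "Cloudflare",
    "cloudfront.net": "Amazon CloudFront",
    "fastly.net": "Fastly",
}


def vendor_for(target: str) -> str:
    """Map a CNAME target to a vendor name, or "other" if no suffix matches."""
    host = target.rstrip(".").lower()
    for suffix, vendor in VENDOR_SUFFIXES.items():
        if host == suffix or host.endswith("." + suffix):
            return vendor
    return "other"


def vendor_concentration(cname_records: dict[str, str]) -> dict[str, float]:
    """Return each vendor's share of the supplied CNAME records."""
    counts = Counter(vendor_for(target) for target in cname_records.values())
    total = sum(counts.values())
    return {vendor: n / total for vendor, n in counts.items()} if total else {}
```

A result where one vendor holds most of the share is a prompt to ask which of those hostnames sit on a critical user journey.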

DNS & Routing Audit

- Multi-Provider DNS: Are you using a single authoritative DNS? The 80/20 fix: Delegate your domain to at least two independent nameserver sets (e.g., Cloudflare + Route 53).

- BGP Guardrails: For teams managing their own IP space, audit your routing automation. Ensure “Max Prefix” limits and “Sanity Checks” are in place to prevent accidental global route leaks.
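
For routing automation, a pre-flight check on the proposed announcement set is one way to apply both guardrails. This is a sketch under assumptions: here the prefix cap is applied to what your own automation is about to announce, and the 20% withdrawal threshold is a hypothetical value.

```python
def check_route_change(
    current: list[str],
    proposed: list[str],
    max_prefixes: int,
    max_withdraw_ratio: float = 0.2,
) -> list[str]:
    """Return reasons to refuse a routing change; an empty list means it passes."""
    current_set, proposed_set = set(current), set(proposed)
    problems = []
    if len(proposed_set) > max_prefixes:
        problems.append(f"{len(proposed_set)} prefixes exceeds max-prefix limit {max_prefixes}")
    withdrawn = current_set - proposed_set
    if current_set and len(withdrawn) / len(current_set) > max_withdraw_ratio:
        problems.append(f"withdraws {len(withdrawn)} of {len(current_set)} announced prefixes")
    return problems
```

If the returned list is non-empty, the automation should stop and ask for human review rather than push the change.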

Pipeline & Config Security

- The “Config-as-Code” Audit: Does your configuration pipeline (WAF rules, Page Rules, Logic) follow the same Canary/Staged Rollout as your software?

- Validation Logic: Implement automated “diff” checks. If a config change increases file size by >10% or introduces duplicate keys, the pipeline must auto-abort.
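
The validation rule above translates into a short pipeline check. The sketch assumes the configuration is JSON text; the 10% growth threshold comes from the checklist item, while the function names are illustrative.

```python
import json


def find_duplicate_keys(raw_json: str) -> list[str]:
    """Return object keys that appear more than once within the same JSON object."""
    duplicates = []

    def hook(pairs):
        seen = set()
        for key, _ in pairs:
            if key in seen:
                duplicates.append(key)
            seen.add(key)
        return dict(pairs)

    # object_pairs_hook sees every key, including ones a plain dict would overwrite.
    json.loads(raw_json, object_pairs_hook=hook)
    return duplicates


def validate_change(old_raw: str, new_raw: str, max_growth: float = 0.10) -> list[str]:
    """Return reasons to abort the deployment; an empty list means the change passes."""
    problems = []
    old_size, new_size = len(old_raw.encode()), len(new_raw.encode())
    if old_size and (new_size - old_size) / old_size > max_growth:
        problems.append(f"size grew by {new_size - old_size} bytes (limit {max_growth:.0%})")
    dupes = find_duplicate_keys(new_raw)
    if dupes:
        problems.append(f"duplicate keys: {sorted(set(dupes))}")
    return problems
```

A pipeline step would call `validate_change` on the previous and proposed files and abort when the list is non-empty.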

Behavioral & Failure Mode Testing

- Fail-Open vs. Fail-Closed: Explicitly test your security layers. If the Bot Management or WAF service hangs, does the load balancer bypass it (Fail-Open) or return a 500 error (Fail-Closed)?

- “Game Day” Simulations: Run a “Big Red Button” exercise. Simulate a total primary provider outage and measure your Mean Time to Recovery (MTTR) using your secondary failover path.

Final Strategy: The “Zero-Fluff” Resilience Roadmap

| Audit Phase | Objective | Critical Action |
| --- | --- | --- |
| Discovery | Map the “Blast Radius” | Audit every CNAME and A record for vendor concentration. |
| Engineering | Implement “Fail-Open” | Reconfigure WAF/Proxy layers to prioritize availability over blocking. |
| Operations | Staged Configs | Force all global changes through a 1% -> 10% -> 100% rollout. |
| Leadership | Declare “Code Orange” | Shift 20% of engineering bandwidth to “Reliability Debt” reduction. |

The Cloudflare outages of 2025 and 2026 were a “utility failure” for the modern web. By treating Configuration as Code, prioritizing Fail-Open architecture, and diversifying your DNS/CDN footprint, you transform these failures into a strategic competitive advantage.

What is a Cloudflare outage?

A Cloudflare outage occurs when systemic failures within Cloudflare’s global edge network disrupt critical services such as DNS resolution, CDN content delivery, or WAF security filtering. Because Cloudflare operates as a reverse proxy for approximately 20% of the internet, a single failure in their proxy layer can paralyze millions of domains simultaneously, regardless of the health of the underlying origin servers.

What caused the major Cloudflare outages in 2025–2026?

Based on industry post-mortems, the “Big Three” incidents were driven by distinct failure modes:

- The Database Panic (Nov 18, 2025): A logic bug triggered by a duplicate-entry configuration file that exhausted system memory.

- The Urgency Bypass (Dec 5, 2025): A “nil-value” error in a security patch that was pushed globally without following staggered rollout protocols.

- The Miami Route Leak (Jan 22, 2026): An automated BGP misconfiguration that redirected global traffic into a “black hole.”

Why do Cloudflare outages affect so many websites?

Cloudflare acts as the “front door” for the web. Because it handles the SSL/TLS termination and DDoS protection for a massive concentration of the world’s most popular sites (OpenAI, Spotify, etc.), any internal failure at the edge prevents traffic from ever reaching the “origin” (the actual website server). This creates a Single Point of Failure (SPOF) for the modern internet.

What is the “Global Config Trap”?

The Global Config Trap is a systemic vulnerability where configuration changes (WAF rules, routing policies, or permissions) are allowed to propagate globally in seconds, bypassing the safety gates applied to software code. While software is “canary tested” on 1% of the network first, configuration often hits 100% of the network instantly, making any error a catastrophic event.

How can companies reduce risk from Cloudflare outages?

High-leverage resilience relies on decoupling dependencies. The 80/20 strategy for most enterprises includes:

- Multi-Vendor DNS: Using a secondary authoritative DNS provider so you can re-route traffic if the primary CDN fails.

- Fail-Open Architecture: Configuring security modules to bypass filtering rather than blocking traffic if the internal scoring engine crashes.

- Staged Config Pipelines: Implementing internal “dry-run” tests and regional rollouts for all configuration changes.

In Conclusion

The series of Cloudflare outages spanning late 2025 through early 2026 serves as a definitive post-mortem for the “Single-Vendor” era of internet infrastructure. These incidents move the conversation beyond simple downtime and reveal critical structural lessons for the next generation of resilient systems:

- Configuration is Executable Logic: The November and December failures proved that a single database permission error or WAF configuration can be as destructive as a major software bug. The era of “instant global config” is over; it must now be treated with the same CI/CD rigor as production code.

- The Anatomy of Systemic Failure: High-leverage infrastructure failures typically follow three identifiable archetypes: internal logic errors (Panic), human operational urgency (Bypass), and external protocol misconfigurations (BGP leaks). Identifying these patterns early is the first step in mitigation.

- The “Fail-Closed” Paradox: Security systems designed to protect infrastructure can often become its greatest threat. Implementing Adaptive Degradation (Fail-Open) ensures that availability remains the foundation, even when security layers encounter internal exceptions.

- Trust as Infrastructure Capital: Cloudflare’s “Code Orange” response and brutal honesty during post-mortems prove that technical transparency is a strategic asset. In a world where absolute reliability is an illusion, accountability is the only currency that retains customers.

The Skilldential Recommendation

For CTOs, SREs, and technical leaders, the 80/20 strategy for 2026 and beyond is clear: Decouple and Diversify. Organizations must eliminate hidden single points of failure by implementing Configuration CI/CD pipelines—ensuring staged, validated rollouts—and maintaining multi-vendor edge redundancy for DNS and CDN routing.

The goal is not to abandon high-performance providers like Cloudflare, but to architect a system where no single provider’s failure can paralyze your entire business.
