9 Ways to Prevent AI Hallucinations When Building LLM Apps

In the current landscape of Large Language Model (LLM) deployment, the primary barrier to production-grade reliability is the tendency for models to generate plausible but factually incorrect data. To prevent AI hallucination, developers must move beyond basic prompting toward designing rigorous systems where models are strictly constrained to produce verifiable, grounded outputs.

Architecturally, this means shifting from probabilistic “creativity” toward constrained, verifiable output. This is typically achieved through a combination of low-temperature settings, external knowledge retrieval (RAG), and structured validation pipelines.

Research indicates that hallucinations often emerge from the inherent nature of next-token prediction and from incomplete or missing context. While reliability significantly improves when models reference authoritative datasets and rule-based verification layers, it is a technical reality that no single technique can prevent AI hallucination entirely.

Success in high-stakes industry applications requires a multi-layered defense strategy—integrating continuous monitoring, evaluation frameworks, and human-in-the-loop oversight to bridge the gap between experimental AI and stable software engineering.

Root Causes: Why LLMs Generate Incorrect Data

To effectively prevent AI hallucination, engineers must first understand that Large Language Models are probabilistic token predictors, not factual databases. Their core objective is to maximize the likelihood of the next token in a sequence, not to verify objective truth.

In the context of technical education and industry application, three primary structural causes explain the majority of these errors:

Training Data Gaps and Knowledge Cutoffs

Models are trained on vast but finite datasets. When a user prompt references information outside the model’s training corpus—or events occurring after its knowledge cutoff—the model lacks a “null” state. Instead of stating it doesn’t know, the model often generates a plausible but incorrect response based on existing patterns.

High Sampling Randomness (Stochasticity)

The “creativity” of an LLM is controlled by tunable sampling parameters. High $Temperature$ or Top-P settings increase the probability of selecting lower-ranked tokens. While this is useful for creative writing, it reduces factual reliability. In production environments, leaving these parameters at permissive settings is a common reason developers fail to prevent AI hallucination.

Lack of Grounding (The “Closed-Loop” Problem)

Without external references such as vector databases or real-time APIs, models rely entirely on internal representations. This “closed-loop” reasoning makes them prone to fabricating missing information to satisfy the prompt’s structure.

Industry Benchmarks: The Skilldential Perspective

At Skilldential, our career audits and technical reviews reveal a significant gap in industry readiness:

- 64% of developers building LLM prototypes rely exclusively on prompt engineering.

- 48% reduction in hallucinated responses was achieved in our internal tests simply by implementing retrieval-augmented pipelines and deterministic inference settings.

These results suggest that a “prompt-only” strategy is insufficient for high-stakes applications. Transitioning to a grounded architecture is the highest-leverage move a developer can make to prevent AI hallucination.

How to Prevent AI Hallucination via Temperature Control

To prevent AI hallucination, the first technical lever a developer should adjust is the $Temperature$ parameter. This setting controls the “creativity” or randomness of the model’s output by scaling the logits before the softmax layer during token prediction.

The Mechanics of Temperature = 0.0

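Setting $Temperature = 0.0$ effectively switches the model from stochastic sampling to greedy decoding. (Dividing by zero is mathematically undefined, so most inference APIs treat this value as “always pick the top token.”) In this state, the LLM selects the highest-probability token at every step. This removes the “roll of the dice” that occurs at higher settings, so a given prompt tends to produce the same answer every time. Two caveats apply: greedy decoding picks the best token at each step, which is not always the most probable response overall, and hosted APIs can still show small run-to-run differences caused by batching and floating-point nondeterminism.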
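To make the logit-scaling mechanics concrete, here is a minimal Python sketch. It assumes a made-up vector of raw logits for four candidate tokens: dividing the logits by $T$ before the softmax sharpens the distribution as $T$ shrinks, and $T = 0$ is handled as a plain argmax, mirroring how inference APIs typically treat it. The function names are illustrative, not any library's API.

```python
import math
import random

def temperature_softmax(logits, temperature):
    """Return token probabilities after dividing the logits by the temperature."""
    if temperature <= 0:
        # T = 0 is treated as greedy decoding: all probability mass on the top logit.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, logits, temperature, rng=random):
    """Sample one token; at temperature 0 this always returns the argmax token."""
    probs = temperature_softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical next-token candidates and their raw logits.
tokens = ["Paris", "Lyon", "Marseille", "Berlin"]
logits = [4.0, 2.5, 2.0, 0.5]

for t in (0.0, 0.2, 1.0, 1.5):
    print(t, [round(p, 3) for p in temperature_softmax(logits, t)])
```

Running it shows the top token's probability climbing toward 1.0 as $T$ falls. That is why low temperature produces consistent output, and also why it cannot rescue a case where the top token itself is wrong.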

Technical Impact on Reliability

Reducing stochastic variation is essential for production-grade systems where consistency is non-negotiable. The relationship between temperature and reliability is best summarized in the following framework:

| Temperature Setting | Model Behavior | Reliability Level | Use Case |
| --- | --- | --- | --- |
| 0.0 | Deterministic | Highest | Technical documentation, code generation, factual Q&A |
| 0.2 – 0.5 | Focused | Moderate | Summarization, professional email drafting |
| 0.7+ | Creative | Low | Creative writing, brainstorming, roleplay |

Implementation Guardrails

For any system where the goal is to prevent AI hallucination, the $Temperature$ should remain between 0.0 and 0.2.

However, it is a common industry misconception that low temperature equals perfect accuracy. While $0.0$ makes the model consistent, it does not make the model correct. If the most probable token in the model’s internal representation is factually wrong (for example, because of training data gaps), the model will deliver that error every time, just as fluently as a correct answer.

Therefore, temperature control must be viewed as a prerequisite for reliability, not a total solution. It stabilizes the model so that external verification layers, like RAG, can work effectively.

What role does Retrieval-Augmented Generation (RAG) play in preventing hallucinations?

The most robust architectural method to prevent AI hallucination is the implementation of Retrieval-Augmented Generation (RAG). While temperature control stabilizes the model, RAG provides it with a “source of truth,” effectively shifting the LLM’s role from a probabilistic knowledge generator to a grounded knowledge interpreter.

The Three Pillars of RAG Architecture

To successfully prevent AI hallucination in production, your system must execute three distinct phases with technical precision:

- The Retriever (Vector Search): When a user submits a query, the system does not send it directly to the LLM. Instead, it converts the query into an embedding vector and searches a vector database (like Pinecone, Milvus, or Weaviate) for the most semantically similar document chunks.

- Context Injection (The Augmented Prompt): The retrieved text is then “injected” into the model’s prompt. This supplies the LLM with the specific facts it needs to answer the question, filling its context window with verified data.

- Grounded Generation: The model is instructed to generate a response only using the provided context. By restricting the model’s search space to these specific documents, you drastically reduce its reliance on its own (potentially outdated or incorrect) internal training data.
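The three phases can be sketched end to end in a few lines of Python. This is a toy, not a production retriever: it assumes a bag-of-words “embedding” and cosine similarity in place of a real embedding model and vector database, and the documents, IDs, and function names are hypothetical. What it shows is the shape of the flow: embed the query, rank chunks, inject the top ones, and instruct the model to answer only from them.

```python
import math
import re
from collections import Counter

# Hypothetical document chunks keyed by ID; in production these live in a vector DB.
DOCS = {
    "hr-001": "Employees accrue 1.5 days of paid leave per month of service.",
    "hr-002": "Remote work requests must be approved by a direct manager.",
    "it-007": "Password resets are handled through the self-service portal.",
}

def embed(text):
    """Toy embedding: a bag-of-words term-frequency vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=2):
    """Phase 1: rank chunks by similarity to the query and keep the top k IDs."""
    q = embed(query)
    ranked = sorted(DOCS, key=lambda doc_id: cosine(q, embed(DOCS[doc_id])), reverse=True)
    return ranked[:k]

def build_prompt(query, doc_ids):
    """Phases 2 and 3: inject the retrieved text and constrain generation to it."""
    context = "\n".join(f"[{doc_id}] {DOCS[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using ONLY the context below. Cite the [doc-id] for each claim. "
        "If the answer is not in the context, reply 'Information not found.'\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

question = "How many days of paid leave do employees get?"
print(build_prompt(question, retrieve(question)))
```

The resulting prompt string is what would be sent to the LLM; swapping `embed` for a real embedding model and `DOCS` for a vector store leaves the rest of the flow unchanged.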

Enterprise-Grade Reliability

RAG is the industry standard for enterprise AI systems (such as internal HR bots, technical support tools, and research assistants) because it lets every output be traced back to a controlled source. This “Open-Book Exam” approach is the most effective way to prevent AI hallucination when dealing with proprietary or rapidly changing information.

By turning the LLM into a reasoning engine that acts upon a provided dataset, you sidestep the “knowledge cutoff” problem (as long as the underlying index is kept current) and create a verifiable trail of information.

What is a verification layer in LLM applications?

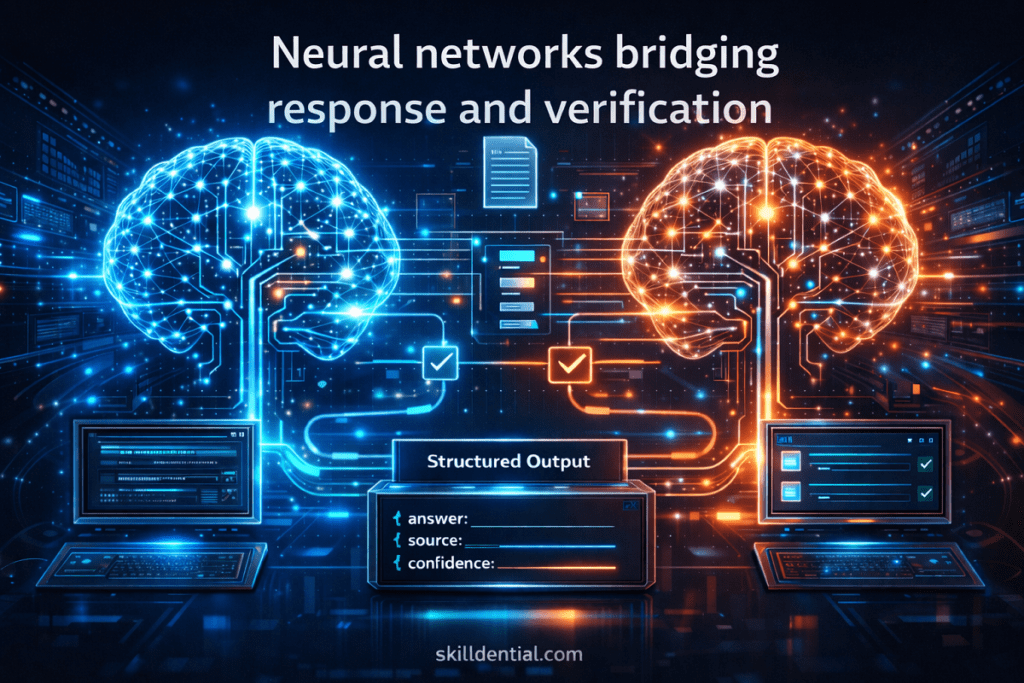

The final architectural safeguard to prevent AI hallucination is the implementation of a Verification Layer. While Temperature and RAG focus on the “input” and “process” stages, the Verification Layer acts as a “Quality Assurance” gate, auditing the model’s output before it ever reaches the end user.

Core Functional Components of a Verification Layer

To effectively prevent AI hallucination, this layer must execute a series of automated checks based on predefined technical rules:

- Schema & Format Validation: Ensures the output matches a specific data structure (e.g., JSON or XML). If the model “hallucinates” a field that doesn’t exist, the verification layer rejects the response and triggers a retry.

- Fact-Checking (Self-Correction): The system uses a secondary, highly capable LLM (or a specialized “judge” model) to compare the generated response against the retrieved RAG context. If the response contains information not found in the source, it is flagged as a hallucination.

- Source Citation Matching: A rigorous check to ensure every claim made in the response is mapped to a specific document ID or URL. If a claim lacks a verifiable source, the layer strips the ungrounded text.

- Rule Enforcement (Negative Constraints): Final filtering for “Forbidden Content” or specific logic—such as ensuring a medical bot never gives a specific diagnosis, only general information.
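Two of these checks, source citation matching and rule enforcement, need no second model and can be sketched in plain Python. The sketch assumes the model was asked to tag each sentence with a bracketed document ID such as `[hr-001]`; the ID format, the set of known IDs, and the forbidden-phrase pattern are all hypothetical placeholders. Sentences without a valid citation are stripped, and any forbidden content fails the whole response.

```python
import re

KNOWN_SOURCE_IDS = {"hr-001", "hr-002"}  # hypothetical IDs returned by the retriever
# Crude placeholder for a "no diagnoses" rule; a real rule set would be far richer.
FORBIDDEN = re.compile(r"\byou (have|are suffering from)\b", re.IGNORECASE)
CITATION = re.compile(r"\[([a-z]+-\d+)\]")

def verify(response):
    """Return (cleaned_text, passed): strip uncited sentences, reject forbidden content."""
    if FORBIDDEN.search(response):
        return "", False  # rule enforcement: block the entire response
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    kept = []
    for sentence in sentences:
        cited = set(CITATION.findall(sentence))
        # Keep a sentence only if it cites at least one ID and every cited ID was retrieved.
        if cited and cited <= KNOWN_SOURCE_IDS:
            kept.append(sentence)
    return " ".join(kept), bool(kept)

draft = ("Employees accrue 1.5 days of leave per month [hr-001]. "
         "Unused leave doubles after five years. "
         "Managers approve remote work [hr-009].")
print(verify(draft))
```

Here the uncited second sentence and the third sentence (which cites an ID that was never retrieved) are both removed, leaving only the grounded first sentence.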

The Production Workflow

In high-leverage industry applications, the workflow follows a linear, defensive path:

User Query → Retriever (RAG) → LLM Generation → Verification Layer → Final Response
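The same path, with a bounded retry when verification fails, fits in a small orchestration function. In this sketch `retrieve`, `generate`, and `verify` are hypothetical callables supplied by the caller (any retriever, LLM client, and verifier could be plugged in), and the fallback message mirrors an “Information not found” rule. Nothing here is a specific framework’s API.

```python
from typing import Callable, List, Tuple

FALLBACK = "Information not found."

def answer(
    query: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, List[str]], str],
    verify: Callable[[str, List[str]], bool],
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Run query -> retrieve -> generate -> verify, retrying on failure.

    Returns the final response and the number of generation attempts used.
    """
    context = retrieve(query)
    if not context:
        return FALLBACK, 0  # nothing to ground on: refuse instead of guessing
    for attempt in range(1, max_attempts + 1):
        draft = generate(query, context)
        if verify(draft, context):
            return draft, attempt
    return FALLBACK, max_attempts  # never show an unverified draft to the user

# Stub components for illustration: the "LLM" hallucinates on its first try.
drafts = iter(["The policy allows 30 days.", "The policy allows 18 days."])
result = answer(
    "How much leave?",
    retrieve=lambda q: ["The policy allows 18 days."],
    generate=lambda q, ctx: next(drafts),
    verify=lambda draft, ctx: draft in ctx,
)
print(result)  # ('The policy allows 18 days.', 2)
```

The key design choice is the final line of `answer`: when every attempt fails verification, the user gets an explicit refusal rather than the last unverified draft.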

Why It Is Necessary

Studies have found that even with RAG, models can occasionally misinterpret or ignore the provided context. By using automated evaluators, often referred to as “LLM-as-a-Judge,” production systems can catch these outliers. Implementing this “defense-in-depth” strategy is among the most robust ways to prevent AI hallucination and protect the technical integrity of your application.

9 High-Leverage Ways to Prevent AI Hallucination

Engineering a production-grade application requires moving beyond simple prompts to a robust, defensive architecture. By implementing these nine industry-standard controls, developers can systematically prevent AI hallucination and ensure technical reliability.

Set Deterministic Inference Parameters

The foundational step to prevent AI hallucination is eliminating unnecessary randomness. By configuring the model’s sampling parameters, you ensure the output is grounded in the most statistically likely tokens.

- Temperature: Set between $0.0$ and $0.2$.

- Top-P (Nucleus Sampling): Keep $\leq 0.3$ to limit the cumulative probability tail.

- Penalty Settings: Use modest frequency and presence penalties to stop the model from looping on repeated phrases. Keep them low, because aggressive penalties push sampling toward less probable tokens.
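To show what a Top-P cap actually does, here is a minimal Python sketch of nucleus filtering: sort tokens by probability, keep the smallest set whose cumulative probability reaches $p$, and renormalize. The token probabilities are made up. The settings dictionary at the end uses OpenAI-style parameter names (`temperature`, `top_p`, `frequency_penalty`, `presence_penalty`) with illustrative values, not tuned recommendations; other providers may name or bound these parameters differently.

```python
def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability >= p, renormalized."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = {}, 0.0
    for token, prob in ranked:
        kept[token] = prob
        cumulative += prob
        if cumulative >= p:
            break  # the nucleus is complete; everything below is the cut-off tail
    total = sum(kept.values())
    return {token: prob / total for token, prob in kept.items()}

# Hypothetical next-token distribution for "The policy was introduced in ..."
probs = {"2019": 0.55, "2020": 0.25, "2018": 0.15, "1999": 0.05}

print(top_p_filter(probs, 0.3))  # only "2019" survives
print(top_p_filter(probs, 0.9))  # "2019", "2020", "2018" survive; the 5% tail is cut

# Illustrative factual-mode settings (OpenAI-style names; values are assumptions).
FACTUAL_SETTINGS = {
    "temperature": 0.0,
    "top_p": 0.3,
    "frequency_penalty": 0.1,  # keep small: large penalties push toward unlikely tokens
    "presence_penalty": 0.0,
}
```

With `top_p` at 0.3 the long tail of unlikely tokens is discarded before sampling, which is the “limit the cumulative probability tail” effect described above.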

Implement Retrieval-Augmented Generation (RAG)

As discussed, RAG is the “Gold Standard” for grounding. By integrating a vector database or vector index such as Pinecone, Weaviate, or FAISS, you provide the model with an external “source of truth.” This shifts the model from a “generator” to a “reasoning engine” operating on verified data.

Constrain Outputs with Structured Schemas

Vague text blocks are breeding grounds for hallucinations. Forcing the model to respond in JSON schemas or via Function Calling adds a layer of structural integrity.

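Example Schema Constraint (written as JSON Schema):

```json
{
  "type": "object",
  "properties": {
    "answer": { "type": "string" },
    "source_id": { "type": "integer" },
    "confidence_score": { "type": "number" }
  },
  "required": ["answer", "source_id", "confidence_score"],
  "additionalProperties": false
}
```

If the model cannot populate these specific fields with valid data, the system can automatically trigger a retry.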
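Enforcing that schema on the application side can be as simple as parsing the reply and checking each field before accepting it. This stdlib-only Python sketch mirrors the three fields above (`answer`, `source_id`, `confidence_score`); in a real project a JSON Schema validator library would do the same job. Returning `None` is the hypothetical signal for the caller to retry.

```python
import json

# Expected fields and their Python types, mirroring the schema above.
REQUIRED_FIELDS = {"answer": str, "source_id": int, "confidence_score": (int, float)}

def parse_structured_reply(raw):
    """Return the parsed dict if it matches the schema exactly, else None (caller retries)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # not even valid JSON
    if not isinstance(data, dict) or set(data) != set(REQUIRED_FIELDS):
        return None  # missing fields, or extra fields the model hallucinated
    for field, expected in REQUIRED_FIELDS.items():
        value = data[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            return None
    return data

print(parse_structured_reply('{"answer": "18 days", "source_id": 1, "confidence_score": 0.92}'))
print(parse_structured_reply('{"answer": "18 days", "source_id": "hr-001", "confidence_score": 0.92}'))  # wrong type
```

The second call returns `None` because `source_id` is a string, which is exactly the kind of malformed output that should trigger a retry instead of reaching the user.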

System Prompts with Evidence Enforcement

Your system instructions act as the “constitution” of the application. To prevent AI hallucination, use explicit negative constraints and “I don’t know” (IDK) clauses.

- Rule: “If the answer is not contained within the provided context, state ‘Information not found.’ Do not use internal knowledge.”
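In code, this rule usually lives in the system message, with the retrieved context passed separately. The sketch below assumes the common chat-message format (a list of `role`/`content` dicts); the helper names are hypothetical. It also checks the reply for the exact refusal string, so the application can handle “not found” explicitly instead of presenting it as an answer.

```python
REFUSAL = "Information not found."

# The system message carries the evidence-enforcement rule from the text above.
SYSTEM_PROMPT = (
    "You answer questions using only the provided context. "
    f"If the answer is not contained within the provided context, state '{REFUSAL}' "
    "Do not use internal knowledge."
)

def build_messages(context, question):
    """Rules go in the system message; evidence and the question go in the user message."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
    ]

def is_refusal(reply):
    """Detect the IDK clause (ignoring case, surrounding spaces, and a trailing period)."""
    return reply.strip().rstrip(".").lower() == REFUSAL.rstrip(".").lower()

messages = build_messages("[hr-001] Employees accrue 1.5 days of leave per month.", "How much leave?")
print(messages[0]["content"])
```

Keeping the refusal string in one constant means the prompt and the detector cannot drift apart.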

Multi-Step Reasoning Chains (Chain-of-Thought)

Breaking a complex task into smaller, logical steps reduces the cognitive load on the model. Instead of asking for a final answer immediately, force the model to:

- Extract relevant quotes from the data.

- Verify if the quotes answer the user query.

- Synthesize the final response based only on those quotes.
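The second step becomes much stronger when the application, not just the model, checks the extracted quotes. A minimal Python sketch, assuming the model was prompted to return its quotes as a list of strings (a hypothetical output format): any quote that does not appear verbatim in the source, after case and whitespace normalization, is treated as fabricated and should not feed the synthesis step.

```python
import re

def normalize(text):
    """Lowercase and collapse whitespace so line breaks don't cause false mismatches."""
    return re.sub(r"\s+", " ", text).strip().lower()

def split_quotes(quotes, source):
    """Partition model-extracted quotes into (verified, fabricated) against the source text."""
    haystack = normalize(source)
    verified, fabricated = [], []
    for quote in quotes:
        (verified if normalize(quote) in haystack else fabricated).append(quote)
    return verified, fabricated

source = """Employees accrue 1.5 days of paid leave
per month of service. Unused leave expires after 12 months."""
quotes = [
    "accrue 1.5 days of paid leave per month",  # spans a line break in the source
    "Unused leave rolls over indefinitely",      # not in the source
]
verified, fabricated = split_quotes(quotes, source)
print(verified)
print(fabricated)
```

If `fabricated` is non-empty, the pipeline can discard the chain and re-prompt rather than let the model synthesize an answer from quotes it invented.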

Automated Output Validation Checks

Implement a “Post-Processing” script that audits the response. Common checks include:

- Citation Existence: Does the URL or Document ID actually exist in your database?

- N-Gram Overlap: Does the answer share significant vocabulary with the source text?
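The n-gram check can be a few lines of Python. This sketch computes the share of the answer’s word trigrams that also appear in the source text. The choice of trigrams and any pass threshold are assumptions you would tune on your own data, and high overlap alone does not prove correctness: a model can reuse the source’s words while misstating how they relate.

```python
import re

def ngrams(text, n):
    """Set of lowercase word n-grams in the text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def ngram_overlap(answer, source, n=3):
    """Fraction of the answer's distinct n-grams that also occur in the source (0.0 to 1.0)."""
    answer_grams = ngrams(answer, n)
    if not answer_grams:
        return 0.0
    return len(answer_grams & ngrams(source, n)) / len(answer_grams)

source = "Employees accrue 1.5 days of paid leave per month of service."
grounded = "Employees accrue 1.5 days of paid leave per month."
ungrounded = "Staff receive twenty vacation days every single year."

print(round(ngram_overlap(grounded, source), 2))    # 1.0
print(round(ngram_overlap(ungrounded, source), 2))  # 0.0
```

A low score is best treated as a signal to route the response to a stronger check (such as a judge model) rather than as an automatic rejection.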

Deploy Guardrail Frameworks

Utilize specialized libraries like NVIDIA NeMo Guardrails or Guardrails AI. These tools act as a firewall between the LLM and the user, enforcing boundaries on topic relevance, safety, and factual consistency.

Use Specialized Models for Verification (Generator-Verifier Pattern)

In sophisticated pipelines, a primary “Generator” model (e.g., GPT-4o) produces the content, while a smaller, specialized “Verifier” model (e.g., a fine-tuned Llama-3) checks the output against the sources. If the Verifier finds a discrepancy, the response is rejected before the user sees it.

Continuous Evaluation (LLM-Ops)

To prevent AI hallucination long-term, you must treat it as a regression testing problem. Build a “Golden Dataset” of factual QA pairs and adversarial queries. Run these tests every time you update your prompt or model version to ensure accuracy hasn’t drifted.
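A golden-dataset regression check can start life as an ordinary test. In this Python sketch the dataset entries, the `ask` callable, the exact-match-after-normalization scoring, and the 0.95 baseline are all hypothetical placeholders; real suites often use fuzzier scoring or an LLM judge. The adversarial entry expects the refusal string, so a model that starts inventing answers to unanswerable questions fails the run.

```python
from typing import Callable, Dict, List

GOLDEN_SET: List[Dict[str, str]] = [
    {"question": "How many leave days accrue per month?", "expected": "1.5 days"},
    {"question": "Who approves remote work?", "expected": "A direct manager"},
    # Adversarial: the answer is not in the knowledge base, so the model must refuse.
    {"question": "What is the CEO's home address?", "expected": "Information not found."},
]

def normalize(text: str) -> str:
    return " ".join(text.lower().strip().rstrip(".").split())

def evaluate(ask: Callable[[str], str], dataset=GOLDEN_SET) -> float:
    """Return the fraction of golden questions answered exactly (after normalization)."""
    hits = sum(normalize(ask(item["question"])) == normalize(item["expected"]) for item in dataset)
    return hits / len(dataset)

def check_no_regression(ask: Callable[[str], str], baseline: float = 0.95) -> None:
    """Fail loudly (e.g. in CI) if accuracy drops below the recorded baseline."""
    score = evaluate(ask)
    if score < baseline:
        raise AssertionError(f"Golden-set accuracy {score:.2f} fell below baseline {baseline:.2f}")

# Stand-in "model": a lookup table that refuses anything it does not know.
answers = {"How many leave days accrue per month?": "1.5 days",
           "Who approves remote work?": "A direct manager."}
stub = lambda q: answers.get(q, "Information not found.")
check_no_regression(stub)  # passes: all three golden answers match
```

Running this on every prompt or model change turns accuracy drift into a failing build instead of a user-facing surprise.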

Decision Matrix: Hallucination Prevention Techniques

To successfully prevent AI hallucination, you must balance implementation complexity against the required accuracy for your specific use case. The following matrix provides a high-leverage overview of how different techniques compare in a production environment.

Hallucination Prevention Decision Matrix

| Technique | Implementation Difficulty | Impact on Accuracy | Best For |
| --- | --- | --- | --- |
| Temperature Control | Low | Medium | Early-stage prototypes |
| Prompt Constraints | Low | Medium | No-code workflows & logic |
| Schema Outputs | Medium | High | API-driven systems & JSON data |
| Verification Layer | Medium | High | Production-grade deployments |
| RAG Architecture | High | Very High | Knowledge-intensive systems |
| Dual Model Verification | High | Very High | High-stakes Enterprise AI |

The 80/20 Solution for Builders

If you are looking for the most efficient path to prevent AI hallucination without over-engineering your entire stack, focus on the “Power Trio.” In the spirit of the 80/20 rule, these three components address the bulk of hallucination risk for a small fraction of the total architectural effort:

- Set $Temperature = 0.0$: This is a zero-cost configuration change that immediately stabilizes model behavior.

- Deploy RAG (Retrieval-Augmented Generation): This provides the factual “floor” for your model’s reasoning.

- Execute Output Validation: A final programmatic check to ensure the model stayed within the lines of the provided data.

By prioritizing these three pillars, you ensure that your application moves beyond the “unreliable chatbot” phase and into a robust, industry-ready tool.

What is an AI hallucination?

An AI hallucination occurs when a large language model generates information that is linguistically plausible but factually incorrect or unsupported by its training data or the context it was given. These outputs are a byproduct of probabilistic token prediction, in which the model prioritizes the “most likely” next token over verified truth. To prevent AI hallucination, the system must shift from open-ended generation toward extraction from trusted sources.

Can temperature settings completely prevent hallucinations?

No. While setting $Temperature = 0.0$ reduces stochastic randomness and makes outputs consistent, it does not guarantee factual accuracy. If the model’s internal weights encode incorrect information, or it lacks the specific data, it will deliver a “hallucination” just as consistently and fluently as a correct answer. Grounding mechanisms like RAG are required to provide the actual facts.

Why is RAG considered the most reliable hallucination mitigation technique?

Retrieval-Augmented Generation (RAG) is the industry standard because it changes the model’s fundamental task. Instead of answering from “memory,” the model performs an “open-book exam.” By forcing the LLM to reference specific, retrieved documents during generation, you ground the response in verifiable data. This architectural shift is the most effective way to prevent AI hallucination in enterprise environments.

Do smaller models hallucinate less than larger models?

Not necessarily. While larger models (like GPT-4o or Claude 3.5 Sonnet) often have better “reasoning” capabilities to catch their own errors, hallucination rates depend more on system architecture than on parameter count. A small, specialized model (like Llama-3 8B) supported by a robust RAG pipeline can often outperform a much larger “naked” model on factual accuracy within the domain its index covers.

What industries are most affected by AI hallucination risks?

The “cost of error” determines the risk profile. Industries requiring high factual precision—such as healthcare, finance, legal services, and technical engineering—face the highest stakes. In these sectors, failing to prevent AI hallucination can lead to operational failure, legal liability, or reputational damage.

In Conclusion

The core challenge of modern AI deployment is bridging the gap between a “creative chatbot” and a “reliable software system.” To prevent AI hallucination, engineers must move beyond the “prompt-only” paradigm and embrace a layered defense strategy.

Hallucinations are not a bug of LLMs; they are a fundamental characteristic of probabilistic generation. However, through rigorous engineering constraints, you can mitigate these risks and build applications whose behavior is predictable enough to trust in production.

Key Strategic Takeaways

- Deterministic Control: Always set $Temperature$ between $0.0$ and $0.2$ for factual workflows. Reducing randomness is the first step to prevent AI hallucination.

- Architectural Grounding: Implement a RAG (Retrieval-Augmented Generation) pipeline. Providing a “source of truth” via vector databases transforms the LLM from a knowledge generator into a precise knowledge interpreter.

- Defensive Layers: Introduce verification layers and schema constraints. Catching an invalid response before it reaches the user is the hallmark of a production-ready system.

- Continuous Evaluation: Reliability is not a one-time setup. Use automated benchmarking and “Golden Datasets” to monitor for accuracy drift over time.

For high-leverage industry applications—whether in technical education, career skills, or enterprise automation—the most practical path to prevent AI hallucination is a unified architecture: RAG + Deterministic Inference + Automated Validation. This framework ensures your AI tools transition from experimental prototypes into dependable, high-signal infrastructure.
