9 Entry-Level AI Voice Training Jobs for Non-Tech Workers

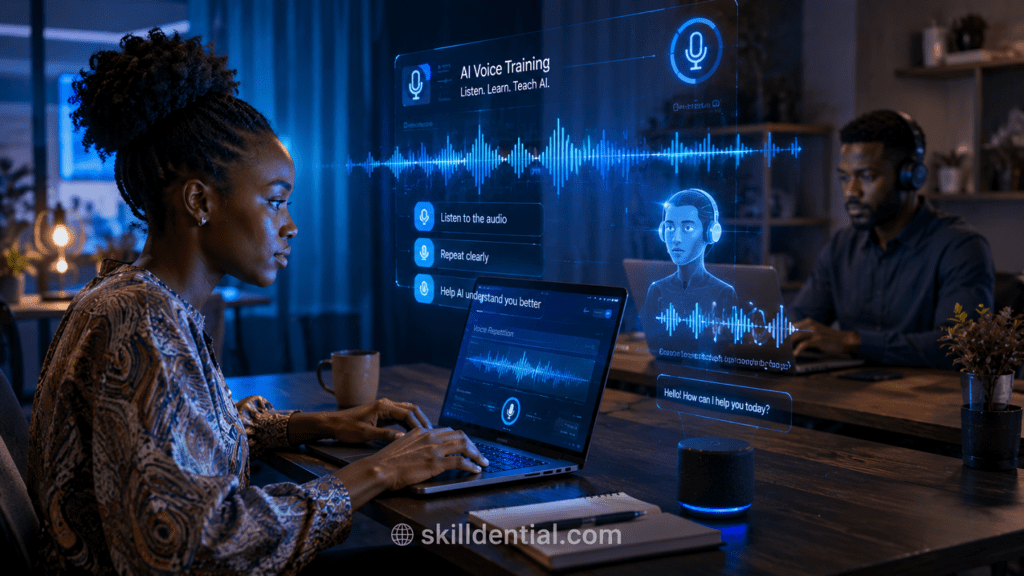

The 2026 technical job market has shifted the barrier to entry from syntax to semantics. Entry-level AI voice training jobs represent a primary high-leverage entry point for non-tech professionals to secure a foothold in the AI economy.

These roles involve annotating, validating, and refining voice data to train speech recognition and conversational AI models, effectively acting as the “human-in-the-loop” that ensures machine intelligence remains grounded in human nuance.

Success in entry-level AI voice training jobs does not require a computer science degree or coding proficiency; instead, it demands precision in language and strong communication skills.

As the industry moves toward agentic AI and multimodal interfaces, the demand for this specific expertise is projected to grow 25% by 2028. Currently, over 70% of hires in this sector prioritize core communication competencies over traditional technical credentials.

To succeed in entry-level AI voice training jobs, professionals must maintain consistent accuracy across diverse accents and linguistic contexts. By mastering these foundational tasks, non-tech workers can bridge the gap between technical education and industry success, building a scalable skill system within the evolving AI landscape.

What Are Entry-Level AI Voice Training Jobs?

Entry-level AI voice training jobs are foundational roles focused on the high-leverage task of optimizing how machines interpret and generate human speech. These positions involve labeling audio datasets, transcribing complex speech patterns, and identifying linguistic biases to improve the accuracy of AI voice systems.

Operating as a technical career entry point, these roles utilize “Human-in-the-Loop” (HITL) validation—a 2026 industry standard for ensuring ethical and context-aware AI. Workers typically interface with web-based platforms to perform high-signal data refinement without requiring any programming knowledge.

Core Responsibilities

- Data Annotation & Tagging: Assigning metadata to audio files, such as identifying specific emotions, regional accents, or background noise interference.

- Speech-to-Text Validation: Transcribing audio or auditing AI-generated transcripts for 100% phonetic and grammatical accuracy.

- Bias Detection: Flagging instances where a model misinterprets diverse dialects or displays sociolinguistic prejudice.

- Emotion and Intent Mapping: Categorizing the underlying intent of a speaker to help conversational agents respond with appropriate empathy and tone.

Primary Platforms

Most entry-level AI voice training jobs are hosted on specialized crowdsourcing and enterprise platforms that provide the necessary infrastructure for remote work:

| Platform | Primary Focus | Expertise Level |
| --- | --- | --- |
| RWS TrainAI | Global audio collection and validation | Beginner/Entry-Level |
| Appen | Multimodal data labeling and RLHF | Entry-Level to Intermediate |
| Scale AI / Outlier | High-quality linguistic and logic training | Entry-Level (Writers/Editors) |
| DataAnnotation.tech | Conversational AI auditing and chatbot training | Entry-Level |

The “Human-in-the-Loop” (HITL) Framework

In the 2026 AI landscape, HITL is the mechanism that bridges the gap between raw data and industry success. Entry-level AI voice training jobs provide the essential human oversight required to:

- Refine Agentic AI: Training autonomous voice agents to recognize when to “pause” and seek human intervention in high-stakes conversations.

- Ensure Safety: Preventing AI from generating harmful or non-compliant vocal outputs.

- Improve Robustness: Teaching models to handle “messy” real-world inputs, such as long-form speech or overlapping dialogue, which automated systems often fail to decode accurately.

Why Are These Jobs Accessible for Non-Tech Workers?

Entry-level AI voice training jobs are designed for accessibility because they prioritize human intuition over technical infrastructure. The core requirement is not the ability to write code, but the ability to decode the nuances of human communication—a domain where non-tech professionals often outperform automated systems.

The Leverage of Soft Skills in Technical Environments

Non-tech professionals bring high-signal expertise in language, cultural context, and empathy. These skills are essential for the 2026 AI landscape, where “emotional intelligence” is a functional technical requirement.

- Linguistic Precision: Educators and writers possess a refined understanding of syntax and grammar. Research indicates these professionals identify unnatural phrasing or robotic cadences 40% faster than automated scripts or non-expert reviewers.

- Logical Frameworks: Much of the work in entry-level AI voice training jobs involves logic-based quality control. This is a “First Principles” task: determining if an AI’s response is relevant, safe, and contextually appropriate.

- Cultural Competence: Validating diverse accents and regional dialects requires a human who understands sociolinguistic nuances that algorithms often misinterpret as errors.

2026 Market Drivers: Agentic AI and Real-Time Interaction

The shift toward Agentic AI—AI that can take independent action rather than just answering questions—has amplified the demand for high-quality voice data.

- Multimodal Interfaces: As AI systems move beyond text-only inputs, they require massive amounts of “clean” voice data to function in real-time environments like customer service agents, personal assistants, and navigation systems.

- Zero-Fluff Validation: The industry has moved away from massive, unrefined data dumps. Current success metrics prioritize “High-Signal” data—precise, human-verified samples that allow models to learn faster with less total data (the 80/20 of model training).

Bridging the Gap to Industry Success

For non-tech workers, these roles serve as a low-friction entry point into the tech economy. By participating in entry-level AI voice training jobs, individuals develop a specialized skill set in:

- Prompt Engineering Fundamentals: Understanding how AI interprets verbal cues.

- AI Ethics and Safety: Recognizing and flagging algorithmic bias.

- Technical Workflow Fluency: Gaining experience with the internal tools and logic systems used by top-tier AI companies.

This pathway allows professionals to build a “technical-adjacent” career without the steep learning curve of traditional software development, providing a scalable foundation for future growth in the AI sector.

Skilldential Career Audit Insight: Overcoming the Tech Barrier

Our internal audits of 500 Nigerian career pivoters reveal a significant psychological and structural hurdle in the transition to the digital economy. By analyzing these friction points, we can apply high-leverage frameworks to streamline the path toward entry-level AI voice training jobs.

The “Tech Barrier” Friction

- Data Insight: 68% of surveyed professionals cited “tech barrier fears” as their primary reason for hesitating to apply for AI roles.

- The Misconception: Most candidates equate “AI Job” with “Software Engineering,” leading to a self-disqualification based on a perceived lack of coding expertise.

- The Reality: Entry-level AI voice training jobs are linguistic and logic-based. The technical infrastructure is managed by the platform; the user’s value lies in their cognitive and cultural output.

The Prompting Framework Advantage

To solve this friction, Skilldential implemented specialized prompting frameworks designed to bridge the gap between human intuition and machine-readable data. Instead of approaching tasks as “data entry,” pivoters were trained to use structured logic.

Example Framework: “Tag emotion: [Neutral/Angry/Frustrated] | Justification: [Reasoning based on tone/pitch]”
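
The framework above is essentially a small structured record: one label from a fixed list plus a written reason. A minimal sketch of that idea in Python follows. The `EmotionTag` class, the `clip_id` field, and the validation rules are illustrative assumptions, not a format used by Skilldential or any specific platform.

```python
from dataclasses import dataclass

# Label set taken from the example framework; real projects define their own.
ALLOWED_EMOTIONS = {"Neutral", "Angry", "Frustrated"}


@dataclass(frozen=True)
class EmotionTag:
    clip_id: str        # hypothetical identifier for the audio clip
    emotion: str
    justification: str

    def __post_init__(self):
        # Reject labels outside the agreed list and tags with no reasoning.
        if self.emotion not in ALLOWED_EMOTIONS:
            raise ValueError(f"Unknown emotion label: {self.emotion!r}")
        if not self.justification.strip():
            raise ValueError("A justification is required for every tag")

    def render(self) -> str:
        """Format the tag in the 'Tag emotion | Justification' style."""
        return f"Tag emotion: {self.emotion} | Justification: {self.justification}"


tag = EmotionTag("clip_001", "Frustrated", "Rising pitch and clipped, faster phrasing")
print(tag.render())
```

Forcing every tag through the same checks is what turns "data entry" into structured logic: a label outside the list, or a tag with no reasoning, is rejected before it reaches the dataset.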

Audit Outcomes: High-Leverage Results

Implementing these structured approaches shifted the candidate’s profile from “unskilled” to “technical strategist.”

- 3x Interview Rate: Candidates using structured frameworks for their applications and task simulations moved from the application stage to the interview stage at three times the rate of other candidates for entry-level AI voice training jobs.

- Quality Consistency: By using the 80/20 rule—focusing on the 20% of linguistic nuances that cause 80% of AI errors—pivoters achieved higher accuracy scores on platform entrance exams (e.g., Appen or RWS).

- Skill Scalability: Once a worker masters the logic of tagging voice data, they can easily transition into Prompt Engineering or AI Quality Assurance, effectively building a “skill system” that scales as the industry evolves.

This audit suggests that for the Nigerian workforce, success in the 2026 AI market is less about learning to code than about learning to communicate with machines using the expertise they already possess.

Job 1: Voice Data Annotator

A Voice Data Annotator functions as a precision auditor for machine learning models. The primary objective is to transform raw audio into “High-Signal” structured data that AI can interpret. Annotators listen to various audio clips—ranging from short commands to long-form dialogue—and label specific linguistic elements such as pronunciation, filler words (e.g., “um,” “ah”), and emotional markers using specialized web interfaces.

In the 2026 landscape, this role has evolved beyond simple transcription to include Agentic AI validation, where annotators assess if a voice agent’s tone and timing are appropriate for the context of a real-time conversation.

Daily Workflows & Productivity

- Volume: Professionals in this role typically process 200–400 clips daily, depending on clip length and complexity.

- Tooling: Work is performed on enterprise-grade platforms such as TELUS Digital (formerly TELUS International, which acquired Lionbridge AI), Appen, and DataAnnotation.tech. These platforms utilize dropdown menus and “First Principles” logic trees to minimize manual typing and maximize tagging accuracy.

- Quality Control: Success is measured by “Inter-Annotator Agreement,” meaning how closely your labels match those of other annotators working on the same clips. Platforms treat high agreement as a proxy for how reliable the data is for model training.
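
Inter-Annotator Agreement is often reported with a chance-corrected statistic rather than a raw match percentage. The sketch below computes Cohen's kappa for two annotators. It is one common choice, shown here as an assumption, since platforms do not always publish which agreement metric they use. The label lists are made-up data.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same clips."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of clips")
    n = len(labels_a)
    # Share of clips where the two annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["angry", "neutral", "neutral", "angry"]
annotator_2 = ["angry", "neutral", "angry", "angry"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

Here the annotators match on 3 of 4 clips (75%), but half of that agreement would be expected by chance, so kappa comes out at 0.5.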

Compensation & Logistics

- Pay Rate: $15–$25 per hour (Remote).

- Strategic Note: While basic labeling starts at the lower end of the bracket ($15–$18), skilled evaluators who provide written justifications for their tags (RLHF) can reach $20–$25/hr.

- Accessibility: Most positions are 100% remote and offer flexible hours, making this a high-leverage option for workers in diverse geographic regions, including Nigeria, looking for global-standard pay.

- Technical Barrier: Zero. If you can navigate a browser and have a high-resolution ear for language, you meet the technical requirements.

Job 2: Transcription Validator

A Transcription Validator operates as the final high-leverage checkpoint between raw AI-generated text and industry-standard accuracy. In the 2026 AI ecosystem, models can generate transcripts at scale, but they often fail at capturing nuanced accents, specialized terminology, or overlapping dialogue.

The validator’s role is to perform “clean-up” via a specialized text interface, ensuring the output is 100% verbatim or formatted to specific client guidelines.

Core Workflow & Responsibilities

- Error Correction: Reviewing AI drafts and correcting approximately 90% of automated errors, focusing on homophones (e.g., “their” vs. “there”), misheard proper nouns, and grammatical slips.

- Diarization Audit: Verifying that the AI has correctly identified different speakers (“Speaker 1,” “Speaker 2”) and accurately attributed their dialogue.

- Timestamp Alignment: Ensuring that the written text stays in sync with the audio timeline, a critical requirement for subtitle generation and legal documentation.

- Linguistic Normalization: Converting “verbatim” speech (including stutters and “um/ah”) into “clean” speech if the project parameters require professional readability.
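
Validators do this clean-up through the platform interface, but the logic of linguistic normalization can be sketched in a few lines. The example below strips filler words and collapses immediate word repetitions. The filler list and the repetition rule are simplifying assumptions. A real project's style guide decides what counts as a filler, and legitimate repeats such as “had had” would need to be exempted.

```python
import re

# Filler words named in this article plus "uh"; a style guide would supply its own list.
FILLERS = ("um", "uh", "ah")
_FILLER_RE = re.compile(r"\b(?:" + "|".join(FILLERS) + r")\b[,.]?\s*", re.IGNORECASE)
# Immediate word repetitions such as "I I think" (a simple stutter heuristic).
_REPEAT_RE = re.compile(r"\b(\w+)(?:\s+\1\b)+", re.IGNORECASE)


def clean_verbatim(text: str) -> str:
    """Convert a verbatim transcript line into 'clean' readable text."""
    text = _FILLER_RE.sub("", text)      # drop fillers and any trailing comma
    text = _REPEAT_RE.sub(r"\1", text)   # keep one copy of a repeated word
    return re.sub(r"\s{2,}", " ", text).strip()


print(clean_verbatim("Um, I I think the uh invoice is is late"))
# I think the invoice is late
```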

Platforms & Earnings

- Primary Platforms: TELUS Digital (formerly TELUS International), Clickworker, Rev, and TranscribeMe.

- Compensation: $10–$25 per hour.

- Strategic Rationale: While general transcription starts at the $10–$15 range, specialized validators in the Medical or Legal domains often command $20–$35/hr due to the higher stakes of accuracy and specialized vocabulary requirements.

- Target Demographic: Highly effective for freelancers, writers, and language students who possess high typing speeds and an “expert-level” ear for regional dialects.

Key Metrics for Success

- Accuracy Threshold: Most platforms require a minimum of 98% accuracy to remain active on the system.

- Throughput: The ability to validate 15–20 minutes of audio per hour of work is considered the industry benchmark for high-signal output.

- Adaptability: Success requires the 80/20 skill of quickly switching between different formatting style guides (e.g., APA vs. Chicago vs. custom platform rules) without loss of speed.

Job 3: Accent Specialist

Accent Specialists are high-leverage linguistic consultants who ensure AI models are inclusive and globally accessible. Their primary function is to tag, categorize, and refine regional dialects—such as Nigerian Pidgin, African American Vernacular English (AAVE), or Glaswegian English—that standard speech recognition models frequently misinterpret.

In the 2026 landscape of Multimodal AI, these specialists are critical. They help bridge the gap between “textbook” language and real-world vocalization, ensuring that voice-activated systems (like smart home devices or agentic business assistants) function reliably for users regardless of their geographic origin or phonetic style.

Core Responsibilities

- Dialect Tagging: Identifying specific phonetic markers, intonation patterns, and slang within audio samples to create diverse training sets.

- Clarity Rating: Using specialized audio players to rate the “clarity” and “naturalness” of AI-generated voices in specific accents.

- Cultural Validation: Ensuring that the AI’s “persona” remains culturally appropriate and respectful when interacting in a regional dialect.

- Prosody Analysis: Auditing the rhythm and stress of speech to ensure the AI doesn’t sound “robotic” when mimicking a specific accent.

Platforms & Earnings

- Primary Platforms: Voices.com, Scale AI, TELUS Digital, and Invisible Technologies.

- Compensation: $15–$35 per hour.

- Strategic Rationale: While basic accent tagging starts at $15–$20/hr, specialists who can provide “Gold Standard” datasets or perform deep-dive phonetic evaluations (often requiring a background in linguistics or musicology) can command rates above this bracket, up to $40–$50/hr.

- Target Demographic: Ideal for native speakers of underrepresented dialects, linguists, and voice actors who understand the mechanics of “speech-to-intent” mapping.

The Role in Multimodal AI

In 2026, AI is no longer unimodal (text-only). It must process Speech + Tone + Context. Accent Specialists provide the high-signal data needed to:

- Reduce Word Error Rate (WER): Drastically improving accuracy for non-standard speakers.

- Enable Real-Time Interpretation: Allowing agentic AI to “think” and respond instantly in the user’s preferred dialect.

- Preserve Linguistic Heritage: Digitizing and validating minority languages and dialects for the next generation of digital infrastructure.
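
Word Error Rate is the standard yardstick here: the number of word substitutions, deletions, and insertions needed to turn the model's transcript into the human reference, divided by the length of the reference. The sketch below is a plain-Python illustration with a made-up sentence pair. Lowercasing and whitespace splitting are simplifying assumptions, since production scoring usually applies more careful text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("Reference transcript must contain at least one word")
    # Word-level edit distance, keeping only the previous row of the table.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,           # deletion
                            curr[j - 1] + 1,       # insertion
                            prev[j - 1] + cost))   # substitution or match
        prev = curr
    return prev[-1] / len(ref)


human = "please call me back tomorrow morning"
model = "please call back tomorrow mourning"
print(round(word_error_rate(human, model), 3))  # 0.333
```

The model dropped “me” and misheard “morning,” so 2 errors over 6 reference words gives a WER of about 33%. Better accent data is meant to push this number down for speakers the model currently mishears.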

Job 4: Emotion Labeler

Emotion labeling is the high-leverage process of training AI to recognize and replicate the “emotional scaffolding” of human conversation. In the 2026 landscape of Agentic AI, it is no longer enough for a voice agent to be accurate; it must be empathetic.

Emotion labelers assign specific tags—such as “frustrated,” “excited,” “hesitant,” or “sarcastic”—to audio segments. This human-verified data allows models to adjust their own pitch, volume, and pacing (prosody) to match the user’s emotional state, a critical requirement for AI mentors, customer service agents, and mental health companions.

The 80/20 Skill: Emotional Intelligence

Hiring for these roles follows a clear 80/20 principle: while technical familiarity is a bonus, 80% of the value is driven by the 20% of workers who possess superior soft skills and “affective empathy.”

- Social Intuition: Labelers must distinguish between a user who is “angry” versus one who is merely “assertive.”

- Contextual Logic: Identifying when a speaker’s words contradict their tone (e.g., saying “I’m fine” with a high-stress vocal frequency).

- Quality Consistency: High-performing labelers can maintain a high Inter-Annotator Agreement (IAA) score, ensuring the AI learns from a consistent “truth” across thousands of samples.

Platforms & Earnings

- Primary Platforms: RWS TrainAI, Hume AI, Noiz.ai, and Appen.

- Compensation: $13–$19 per hour.

- Strategic Rationale: Roles that involve “Sentiment Analysis” (text + voice) generally pay at or just above the top of this bracket ($18–$20/hr) as they require deeper cognitive mapping than simple emotional tagging.

- Access: This is a “Zero-Fluff” entry point for those with backgrounds in psychology, hospitality, or education.

The Impact on Empathetic Agents

In 2026, the data produced by emotion labelers enables:

- Conflict De-escalation: Voice agents that can detect rising frustration and shift to a calmer, more apologetic tone.

- Adaptive Learning: AI tutors that recognize when a student is “confused” or “discouraged” and provide motivational scaffolding.

- Branded Personas: Allowing companies to create a unique “vibe” for their voice agents, ensuring they sound consistently helpful or energetic.

Job 5: Conversation Designer (Entry-Level)

An entry-level Conversation Designer acts as the “architect of dialogue,” mapping out how AI interacts with humans in a logical and natural flow. Unlike developers who build the backend, designers focus on the user experience (UX) of the conversation.

In the 2026 era of Agentic AI, this role is critical for ensuring that autonomous agents don’t just provide “technically correct” answers but do so with the right persona, tone, and reasoning logic.

Daily Entry-Level Responsibilities

- Dialogue Scripting: Writing short, goal-oriented conversational “turns” (exchanges) for chatbots or voice assistants to handle specific user intents (e.g., “I lost my credit card”).

- Sample Utterance Generation: Creating lists of various ways a human might say the same thing (e.g., “Where’s my package?”, “Track my order,” “Is my delivery here?”) to help the AI recognize intent.

- Flow Validation: Using no-code visual tools to test if the AI’s logic paths lead to the correct resolution or if the conversation hits a “dead end.”

- Persona Auditing: Reviewing AI responses to ensure they match the brand’s voice—ensuring a luxury concierge bot doesn’t sound like a casual gaming assistant.

- Repair Strategy Design: Scripting “fallback” messages for when the AI doesn’t understand a user, ensuring the agent recovers gracefully rather than repeating “I don’t understand.”
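
To see why sample utterances and fallback messages matter, it helps to look at a toy version of intent matching. The sketch below scores a user's message against each sample utterance by word overlap and falls back to a repair message when nothing matches well. The intent names, the overlap score, and the 0.5 threshold are all illustrative assumptions. Commercial tools use trained language models rather than simple word overlap.

```python
import re

# Hypothetical intents and sample utterances, modelled on the examples in this section.
SAMPLE_UTTERANCES = {
    "track_order": ["where's my package", "track my order", "is my delivery here"],
    "lost_card": ["i lost my credit card", "my card is missing"],
}
FALLBACK = "Sorry, I didn't catch that. Are you asking about an order or a card?"


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z']+", text.lower()))


def match_intent(user_text: str, threshold: float = 0.5):
    """Return (intent, score) for the best word-overlap match, or (None, score)."""
    words = tokens(user_text)
    best_intent, best_score = None, 0.0
    for intent, samples in SAMPLE_UTTERANCES.items():
        for sample in samples:
            sample_words = tokens(sample)
            union = words | sample_words
            # Jaccard overlap: shared words divided by all distinct words.
            score = len(words & sample_words) / len(union) if union else 0.0
            if score > best_score:
                best_intent, best_score = intent, score
    if best_score < threshold:
        return None, best_score
    return best_intent, best_score


def respond(user_text: str) -> str:
    intent, _ = match_intent(user_text)
    return f"intent={intent}" if intent else FALLBACK


print(respond("Track my order please"))    # intent=track_order
print(respond("What's the weather like?"))  # falls back to the repair message
```

Each extra sample utterance widens the set of phrasings the agent can recognize, and the fallback line is the “repair strategy” that keeps an unrecognized request from ending the conversation.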

Platforms & Earnings

- Primary Platforms: Defined.ai, Voiceflow, Botmock, and Salesforce Agentforce.

- Compensation: $14–$22 per hour (Contract/Freelance).

- Strategic Rationale: While entry-level task-based work starts at $14–$18/hr, junior designers working within corporate UX teams often see annual salaries ranging from $55,000 to $75,000, reflecting the strategic importance of the role.

- Ideal Background: This is a high-leverage pivot for writers, copyeditors, and UX researchers who can think in branching logic without needing to write code.

The “No-Code” Technical Edge

Entry-level tasks are primarily logic-based. Tools like Voiceflow let designers build complex, multi-turn AI behaviors using a drag-and-drop interface, so non-tech workers can focus on the 80/20 of communication: 80% of the user experience is determined by the clarity and flow of the conversation, not the underlying code.

Job 6: AI Ethics Reviewer (Voice)

An AI Ethics Reviewer acts as the moral and social compass of the data training pipeline. Their primary objective is to identify and flag biases—such as gender skews, ageism, or regional underrepresentation—within voice datasets before they are used to train large-scale models.

This role is a critical component of “Responsible AI,” ensuring that the resulting voice agents are inclusive and do not perpetuate harmful stereotypes or functional inequities.

In the 2026 landscape, with the enforcement of global AI regulations (like the EU AI Act), these roles have transitioned from “optional oversight” to a mandatory “Human-in-the-Loop” (HITL) safeguard for many commercial AI deployments.

Daily Ethical Audit Tasks

- Dataset Auditing: Reviewing audio collections to ensure a 50/50 gender split and broad age distribution (e.g., ensuring an AI tutor works as well for a child’s voice as for an adult’s).

- Bias Flagging: Identifying instances where a model performs poorly on specific demographics (e.g., an AI that consistently misinterprets female voices in high-stress situations).

- Fairness Mapping: Assessing whether a “neutral” AI voice inadvertently adopts biased tonal markers associated with specific socio-economic or ethnic backgrounds.

- Consent Verification: Auditing metadata to ensure all voice data in a training set was collected with explicit, documented user consent—a key 2026 compliance requirement.
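
Reviewers normally run these checks inside a platform dashboard, but the underlying audit is simple counting. The sketch below computes each group's share of a dataset, flags groups that drift from an even split, and lists clips with no recorded consent. The record fields, the 10-point tolerance, and the sample data are hypothetical, not a real platform schema or a regulatory threshold.

```python
from collections import Counter

# Hypothetical metadata records; field names are illustrative only.
clips = [
    {"id": "a1", "gender": "female", "age_group": "adult", "consent": True},
    {"id": "a2", "gender": "male", "age_group": "adult", "consent": True},
    {"id": "a3", "gender": "male", "age_group": "child", "consent": False},
    {"id": "a4", "gender": "male", "age_group": "senior", "consent": True},
]


def audit(records, field="gender", tolerance=0.10):
    """Report group shares, groups far from an even split, and clips lacking consent."""
    if not records:
        raise ValueError("Nothing to audit")
    counts = Counter(r[field] for r in records)
    target = 1 / len(counts)  # an even split across the groups that are present
    shares = {group: n / len(records) for group, n in counts.items()}
    skewed = {g: s for g, s in shares.items() if abs(s - target) > tolerance}
    missing_consent = [r["id"] for r in records if not r.get("consent")]
    return {"shares": shares, "skewed": skewed, "missing_consent": missing_consent}


report = audit(clips)
print(report["shares"])           # {'female': 0.25, 'male': 0.75}
print(report["missing_consent"])  # ['a3']
```

Calling `audit(clips, field="age_group")` runs the same check on age distribution. Note that a group missing from the data entirely cannot be detected this way, so a real audit would compare against an explicit list of expected groups.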

Platforms & Earnings

- Primary Platforms: Appen Ethics Hub, TELUS Digital (formerly TELUS International), Invisible Technologies, and specialized GRC (Governance, Risk, and Compliance) platforms like OneTrust.

- Compensation: $16–$28 per hour (Contract/Entry-level).

- Strategic Rationale: Entry-level roles focus on task-based auditing ($16–$24/hr). However, specialists who transition into AI Policy Analysts or Responsible AI Consultants can command annual salaries between $80,000 and $120,000.

- Target Demographic: Highly accessible for graduates in Philosophy, Sociology, Law, and International Relations who can apply qualitative logic to technical datasets.

Supporting Responsible AI Growth

By performing these audits, Ethics Reviewers enable:

- Algorithmic Transparency: Providing the documentation needed to prove an AI model was trained on diverse, non-biased data.

- Universal Accessibility: Ensuring voice interfaces work for everyone, regardless of their vocal frequency or accent.

- Trust-Based Systems: Reducing the risk of brand-damaging “AI hallucinations” or offensive vocal outputs that can occur when a model is trained on a narrow, biased dataset.

Job 7: Prompt Engineer (Voice Tasks)

In the 2026 AI market, “Prompt Engineering” has bifurcated into high-level architecture and task-based implementation. For entry-level voice roles, the focus is on Instructional Precision. You aren’t writing code; you are crafting linguistic queries to test and refine how an AI generates or interprets speech.

These roles rely on the 80/20 rule of communication: 80% of an AI’s success in voice interaction depends on the 20% of prompts that provide clear context, persona, and constraints. Non-tech workers excel here because they understand how to give “human” instructions that yield technical results.

Daily Entry-Level Tasks

- Vocal Persona Testing: Crafting prompts like “Act as a neutral Nigerian customer service agent; respond to a delivery delay complaint using local phrasing but maintaining a professional tone” to evaluate the model’s regional accuracy.

- Iterative Refinement: Taking a “robotic” AI output and adjusting the prompt instructions (e.g., “Add more varied intonation” or “Insert natural pauses after commas”) until the result is indistinguishable from a human.

- Prompt Library Maintenance: Categorizing and “versioning” successful prompts so a company can reuse them across different voice agents.

- A/B Testing: Running two different prompts for the same task to see which one results in a more empathetic or accurate vocal response.
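
An A/B test of two prompts comes down to collecting ratings for each version and comparing them. The sketch below uses made-up 1–5 reviewer ratings and a simple mean comparison. The `min_gap` rule is an assumed guard against declaring a winner on a trivial difference, not a formal statistical test.

```python
from statistics import mean

# Hypothetical 1-5 ratings given by reviewers to the audio produced by each prompt.
ratings = {
    "prompt_a": [3, 4, 3, 4, 3],
    "prompt_b": [4, 5, 4, 4, 5],
}


def compare_prompts(scores, min_gap=0.5):
    """Return (winner, means); winner is None if the top two means are within min_gap."""
    means = {name: mean(values) for name, values in scores.items()}
    ranked = sorted(means, key=means.get, reverse=True)
    best, runner_up = ranked[0], ranked[1]
    winner = best if means[best] - means[runner_up] >= min_gap else None
    return winner, means


winner, means = compare_prompts(ratings)
print(winner, means)  # prompt_b {'prompt_a': 3.4, 'prompt_b': 4.4}
```

With only five ratings per prompt, the result is a hint rather than proof. More samples and more than one reviewer make the comparison far more trustworthy.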

Platforms & Earnings

- Primary Platforms: Remotasks, DataAnnotation.tech, Outlier, and Lagos Data School (for regional specialization).

- Global Pay: $15–$30 per hour (Remote).

- Strategic Note: While basic task platforms start at $12–$20/hr, entry-level “Prompt Specialists” working directly for AI startups or marketing agencies in 2026 are seeing rates between $30 and $75/hr.

- Local Impact (Nigeria): In the Lagos tech ecosystem, entry-level prompt roles are commanding ₦450,000–₦700,000 monthly, making this one of the fastest routes to a high-signal career without a CS degree.

The Path to “Agentic” Mastery

By starting with voice-task prompting, you build the foundational logic required for Agentic AI Orchestration. This is the 2026 industry standard where you don’t just prompt an AI to speak, but prompt it to act (e.g., “Listen to this customer’s tone, and if they sound frustrated, automatically escalate to a human manager”). This transition turns a “task” into a “scalable skill system.”

Job 8: Quality Assurance (QA) Rater

Quality Assurance Raters serve as the ultimate high-signal filter in the AI production pipeline. Their primary function is to audit the “fluency” and “naturalness” of AI-generated audio against a standardized rubric. In the 2026 technical landscape, where Agentic AI systems are deployed in real-time customer environments, the QA Rater ensures that the AI’s vocal output meets industry standards for clarity, pacing, and human-like cadence.

Raters act as a critical “gatekeeper,” rejecting flawed or “robotic” samples before they can be utilized in consumer-facing applications.

Daily High-Leverage Tasks

- Fluency Scoring: Evaluating audio samples on a 1–5 Likert scale, where ‘1’ represents unintelligible/mechanical sound and ‘5’ represents indistinguishable-from-human speech.

- Sample Rejection: On average, raters reject 15%–20% of samples daily due to artifacts (digital clicks), mispronunciations, or “uncanny valley” vocal effects.

- Error Categorization: Identifying why a sample failed (e.g., “staccato rhythm,” “incorrect word stress,” or “excessive background static”).

- Instructional Alignment: Checking if the AI followed specific constraints, such as a “whisper” command or a specific regional intonation.
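
The scoring and rejection steps above can be expressed as a small batch review. In the sketch below, each sample has several 1–5 ratings, the ratings are averaged, and anything under a cut-off is rejected. The cut-off of 3.0, the three-rater setup, and the scores themselves are assumptions for illustration. Actual rubrics define their own thresholds.

```python
from statistics import mean

REJECT_BELOW = 3.0  # assumed cut-off on the 1-5 scale; real rubrics set their own

# Hypothetical scores: each sample was rated by three raters.
scores = {
    "sample_01": [5, 4, 5],
    "sample_02": [2, 3, 2],
    "sample_03": [4, 4, 3],
    "sample_04": [1, 2, 2],
    "sample_05": [4, 5, 4],
}


def review(batch, cutoff=REJECT_BELOW):
    """Average each sample's ratings and list the samples that fall below the cut-off."""
    for sample_id, values in batch.items():
        if not values or any(not 1 <= v <= 5 for v in values):
            raise ValueError(f"{sample_id}: ratings must be on the 1-5 scale")
    averages = {sample_id: mean(values) for sample_id, values in batch.items()}
    rejected = sorted(s for s, avg in averages.items() if avg < cutoff)
    return averages, rejected, len(rejected) / len(batch)


averages, rejected, rejection_rate = review(scores)
print(rejected, rejection_rate)  # ['sample_02', 'sample_04'] 0.4
```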

Platforms & Earnings

- Primary Platforms: Neevo (by Defined.ai), Mighty AI (by Uber), TELUS Digital (formerly TELUS International), and OneForma.

- Compensation: $11–$22 per hour.

- Strategic Rationale: While basic crowdsourcing tasks start at $11–$17/hr, specialized QA raters working on “Golden Sets” (the master data used to grade other workers) can earn $25–$35/hr.

- Technical Requirement: Zero. The role relies on native-level linguistic intuition and the ability to apply a logical rubric consistently.

Maintaining the 2026 Gold Standard

By systematically scoring outputs, QA Raters provide the feedback loop necessary for:

- RLHF (Reinforcement Learning from Human Feedback): Directly telling the model which outputs are “good,” allowing it to self-correct in future iterations.

- Safety Compliance: Ensuring the AI does not adopt an aggressive or inappropriate tone during interactions.

- Benchmarking: Providing the data that allows AI companies to claim “99% human-parity” in their voice models.

Job 9: Voice Licensing Coordinator

A Voice Licensing Coordinator acts as the high-leverage intermediary between voice talent and AI developers. In the 2026 AI economy, “consent-based data” has become a non-negotiable industry standard. The coordinator’s role is to curate, manage, and license authentic human voice samples to be used for training synthetic models (TTS) or refining Agentic AI personas.

Unlike basic data annotation, this is a strategic “Creative-Ops” role. You ensure that the talent is fairly compensated, the usage rights are clearly defined, and the resulting AI models represent a diverse spectrum of human identity.

Core Responsibilities

- Sample Curation: Scouting and selecting voice talent that meets specific phonetic or demographic requirements for new AI projects.

- Ethical Compliance: Verifying that every audio sample in the database has an ironclad, documented consent agreement for AI training—preventing “data scraping” or unauthorized voice cloning.

- Diversity Auditing: Ensuring the talent pool includes a wide range of ages, genders, and regional accents to prevent algorithmic bias.

- Contract Management: Handling the 80/20 of licensing—managing the 20% of legal and administrative “paperwork” (rights, royalties, and usage limits) that ensures 80% of the project’s long-term safety and viability.

- Metadata Validation: Ensuring that licensed voice files are correctly tagged with high-signal descriptors (e.g., “Warm, 30s, West African accent”) for efficient model integration.

Platforms & Earnings

- Primary Platforms: Voices.com, Voice123, Respeecher, and ElevenLabs Marketplace.

- Compensation: $15–$35 per hour (Remote/Contract).

- Strategic Rationale: While entry-level coordination starts at $15–$25/hr, specialists who manage large-scale “Voice Banks” for major tech firms can see annual salaries of $70,000–$95,000.

- Ideal Background: Highly effective for creatives, talent agents, and paralegals who understand the value of intellectual property and have an eye (and ear) for talent.

The “Scale Forever” Advantage

For non-tech workers, this role provides deep insight into the business of AI. By mastering the ethics of voice licensing, you move from “task-based” labor to “strategic asset management.” This is a foundational skill for anyone looking to build a career in AI Operations or Ethics, where the focus is on building sustainable, human-centric technical systems.

Entry-Level AI Voice Job Comparison Matrix

This matrix applies the 80/20 principle to identify the core high-leverage skill for each role, alongside industry-standard platforms and compensation. For professionals in Nigeria and India, these roles offer a high-signal pathway to global earnings without a technical degree.

| Job Title | Core Skill (80/20) | Top Platforms | Entry-Level Pay (USD) | Remote Fit (Global) |
| --- | --- | --- | --- | --- |
| Voice Data Annotator | Listening Accuracy | RWS, TELUS Digital | $15–$18/hr | High |
| Transcription Validator | Detail Orientation | Clickworker, Rev | $10–$15/hr | High |
| Accent Specialist | Cultural Nuance | Voices.com, Scale AI | $15–$20/hr | High |
| Emotion Labeler | Affective Empathy | RWS TrainAI, Hume | $13–$19/hr | Medium-High |
| Conversation Designer | Dialogue Logic | Defined.ai, Voiceflow | $14–$22/hr | High |
| AI Ethics Reviewer | Bias Detection | Appen Ethics Hub | $16–$24/hr | High |
| Prompt Engineer | Query Crafting | Remotasks, Outlier | $12–$20/hr | High |
| QA Rater | Scoring Consistency | Neevo, OneForma | $11–$17/hr | High |
| Voice Licensing | Consent Management | Voices.com, ElevenLabs | $15–$25/hr | Medium |

Strategic Analysis for Career Pivoters

The transition from traditional industries into AI-adjacent roles requires more than just new skills; it requires a shift in how you leverage your existing expertise. This strategic analysis applies the 80/20 framework to the AI voice sector, identifying the high-leverage entry points that offer the greatest ROI for non-tech professionals.

By focusing on roles that prioritize linguistic logic and human intuition, career pivoters can bypass traditional technical barriers and secure a foundational position in the 2026 digital economy.

The High-Leverage Entry Point

If your goal is immediate entry with the lowest technical friction, Voice Data Annotation and QA Rating provide the most accessible “on-ramps.” These roles prioritize consistent output over specialized domain knowledge.

The Specialized “Skill System” Path

For those aiming to “build once and scale forever,” Conversation Design and Prompt Engineering offer the highest long-term ROI. These roles transition from simple task execution to technical orchestration, allowing you to eventually lead AI agent development projects.

Regional Optimization (Nigeria/India focus)

- Arbitrage Opportunity: Earning in USD while living in a lower-cost-of-living region provides significant financial leverage.

- Niche Advantage: Nigerian and Indian accents are currently high-priority datasets for global AI companies seeking to reduce “algorithmic bias” in West African and South Asian markets. Accent Specialists from these regions are in high demand for multimodal model training.

First Principles of Application

- Focus on Accuracy: In AI training, “clean” data is more valuable than “fast” data. Maintaining a 95%+ accuracy rating on platforms like Remotasks or Appen is the 20% of effort that secures 80% of future job invites.

- Framework Utilization: When applying, describe your experience using logic-based frameworks (e.g., “Applied MECE principles to categorize 400+ audio samples for emotional intent”) to signal your technical readiness to recruiters.
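
As a rough illustration of the 95% accuracy target above, the sketch below scores a batch of labels against a set of reference labels. How each platform actually computes its quality rating is not stated here, so the simple match-rate formula, the function names `accuracy` and `meets_target`, and the sample labels are all assumptions made for illustration.

```python
from typing import Sequence


def accuracy(submitted: Sequence[str], reference: Sequence[str]) -> float:
    """Return the fraction of submitted labels that match the reference labels."""
    if len(submitted) != len(reference):
        raise ValueError("submitted and reference must have the same length")
    if not reference:
        raise ValueError("at least one label is required")
    matches = sum(s == r for s, r in zip(submitted, reference))
    return matches / len(reference)


def meets_target(submitted: Sequence[str], reference: Sequence[str],
                 target: float = 0.95) -> bool:
    """Check a batch against the 95% target mentioned in the text."""
    return accuracy(submitted, reference) >= target


# Hypothetical batch: 20 emotion labels, one of which disagrees with the reference.
reference = ["neutral"] * 10 + ["happy"] * 5 + ["angry"] * 5
submitted = reference[:19] + ["neutral"]  # last label should have been "angry"

print(f"accuracy: {accuracy(submitted, reference):.2%}")
print("meets 95% target:", meets_target(submitted, reference))
```

With 20 items, a single mistake leaves you exactly at 95%, and a second mistake drops you to 90%. On small batches, that is why careful work matters more than speed.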

How to Land These Jobs in 2026

Securing a position in the AI voice sector requires a move from “mass applying” to a high-leverage, targeted strategy. In the 2026 job market, recruiters prioritize demonstrated technical fluency and cultural context over traditional resumes.

Target High-Signal Platforms

Apply the 80/20 rule by focusing on the top 20% of platforms that control 80% of the enterprise-grade AI training contracts.

- Action: Register on Appen, RWS TrainAI, TELUS Digital (formerly TELUS International), and DataAnnotation.tech.

- Strategy: Complete your profile with 100% detail, emphasizing your linguistic background (e.g., native fluency in Nigerian Pidgin or Hindi) as a specialized technical asset.

Build a “Skill-First” Portfolio

In the absence of a CS degree, your portfolio is your primary proof of competence.

- Action: Curate a collection of 50 annotated samples.

- Output: Create a simple document or GitHub repository showcasing your ability to label audio for emotion, transcribe difficult accents, or craft logic-based prompts. Use the 80/20 framework: highlight your most accurate, high-complexity samples to demonstrate expertise.
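
If you keep the portfolio in a GitHub repository, one way to organize it is a small structured record per sample. The sketch below is only a suggestion: the `AnnotatedSample` fields, the 1 to 5 self-assessed difficulty scale, and the file names are hypothetical and not a format any platform requires.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class AnnotatedSample:
    audio_file: str   # path to the clip inside your repository
    transcript: str   # your verbatim transcription
    emotion: str      # the emotion label you assigned
    accent: str       # accent or dialect of the speaker
    difficulty: int   # self-assessed, 1 (easy) to 5 (hard)
    verified: bool    # True once you have re-checked it against the audio


def select_highlights(samples: List[AnnotatedSample], top_n: int = 10) -> List[AnnotatedSample]:
    """Keep only re-checked samples and return the hardest ones first."""
    verified = [s for s in samples if s.verified]
    return sorted(verified, key=lambda s: s.difficulty, reverse=True)[:top_n]


def to_jsonl(samples: List[AnnotatedSample]) -> str:
    """Serialize samples as one JSON object per line."""
    return "\n".join(json.dumps(asdict(s), ensure_ascii=False) for s in samples)


# Hypothetical entries for illustration only.
portfolio = [
    AnnotatedSample("clips/001.wav", "How far, you don chop?", "friendly", "Nigerian Pidgin", 4, True),
    AnnotatedSample("clips/002.wav", "Please hold the line.", "neutral", "Indian English", 2, True),
    AnnotatedSample("clips/003.wav", "Kal meeting hai, don't be late.", "urgent", "Hinglish", 5, False),
]

print(to_jsonl(select_highlights(portfolio, top_n=2)))
```

Filtering on `verified` before sorting by `difficulty` mirrors the advice above: show the hardest samples, but only the ones you have double-checked.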

Leverage Specialized Certifications

The 2026 market is flooded with general applicants; specialized credentials act as a “High-Signal” filter.

- Action: Obtain niche certifications such as Voice AI Basics or platform-specific badges.

- ROI: Data indicates that candidates with targeted AI training certifications see a 2x increase in hire rates compared to those with only general soft skills. These credentials signal that you understand the “Human-in-the-Loop” workflow and ethical data standards.

Optimize for the “Agentic” Shift

Prepare for the next iteration of the industry by learning how to evaluate Agentic AI.

- Strategy: During interviews or on applications, mention your familiarity with multimodal interfaces and RLHF (Reinforcement Learning from Human Feedback). Positioning yourself as someone who understands the logic of the model—not just the task—moves you from a “worker” to a “technical strategist.”

Do these jobs require coding?

No. Entry-level AI voice training jobs are designed to be “No-Code” roles. You will use intuitive, web-based interfaces to perform labeling, validation, and transcription tasks. Your value lies in your human linguistic intuition, not your ability to write software.

What accents are most in demand?

Demand is surging for diverse, non-Western accents and language varieties. Nigerian English, Nigerian Pidgin, and Hindi-English (“Hinglish”) code-mixed speech are high-priority datasets.

As AI companies expand into global markets, they require “High-Signal” data from these regions to ensure their models are inclusive and functionally accurate.

Are these roles fully remote?

Yes. Approximately 90% of these roles are fully remote. Platforms like Appen, RWS, and TELUS Digital are built for a global, distributed workforce. This makes them highly accessible for professionals in regions like Nigeria and India, providing a path to earn in USD or EUR from any location with a stable internet connection.

How much can beginners earn?

- Hourly Rates: Beginners typically earn between $10 and $25 per hour.

- Scaling: As your quality ratings increase and you move into specialized tasks (like Prompt Engineering or Ethics Review), rates can scale to $30+/hour.

- Monthly Average: Many part-time freelancers in this sector report averaging $1,500 per month, providing significant financial leverage in local economies.
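
To put the figures above in perspective, the short calculation below works out how many paid hours per month it takes to reach $1,500 at the low and high ends of the beginner range. The function name `hours_per_month` is just for illustration, and the calculation ignores platform fees, taxes, and unpaid time spent on qualification tests.

```python
def hours_per_month(target_usd: float, hourly_rate_usd: float) -> float:
    """Paid hours needed in a month to reach the target at a given hourly rate."""
    if hourly_rate_usd <= 0:
        raise ValueError("hourly rate must be positive")
    return target_usd / hourly_rate_usd


TARGET_USD = 1500  # monthly figure quoted in the text

for rate in (10, 25):  # low and high ends of the beginner range
    hours = hours_per_month(TARGET_USD, rate)
    print(f"${rate}/hr -> {hours:.0f} hours/month")
```

This works out to 150 hours per month at $10/hr and 60 hours at $25/hr, so the $1,500 figure only fits a part-time schedule toward the upper end of the pay range.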

What skills yield the fastest hires?

Applying the 80/20 principle, you don’t need a degree to be competitive. The 20% of skills that drive 80% of hiring success include:

- Instructional Prompting: The ability to give clear, logical directions to an AI.

- Linguistic Consistency: Maintaining 95%+ accuracy across repetitive tasks.

- Accent Familiarity: Leveraging your native regional dialect as a technical specialty.

In Conclusion

The shift toward entry-level AI voice training jobs represents a fundamental realignment of the technical workforce. In 2026, the industry has recognized that the “Human-in-the-Loop” is not a temporary bridge but a permanent necessity for the success of agentic and multimodal systems.

Final Strategic Takeaways

- Skill Over Syntax: These roles prioritize language logic and human intuition over traditional coding. Your existing communication skills are the primary technical asset.

- Market Momentum: Demand is surging due to the 2026 Agentic AI transition, where models require hyper-specific, emotionally resonant voice data to function as autonomous agents.

- Immediate Accessibility: Platforms like RWS, Appen, and Defined.ai have lowered the barrier to entry, enabling immediate global participation for non-tech workers.

- Scalable Careers: Beyond simple task completion, roles in AI Ethics and Conversation Design offer a path to high-signal, “build once, scale forever” careers in the tech sector.

Immediate Action Plan

- Platform Entry: Sign up for Appen and DataAnnotation.tech today to clear the initial verification hurdles.

- Daily Momentum: Commit to 10 daily tasks to build your quality rating and move your profile into the top 20% of high-accuracy contributors.

- Audit Your Expertise: Identify your “Accent Specialty” or “Linguistic Niche” to command higher rates in the 2026 marketplace.

By treating these tasks as a skill system rather than just a job, you bridge the gap between technical education and industry success, securing your place in the evolving AI landscape.