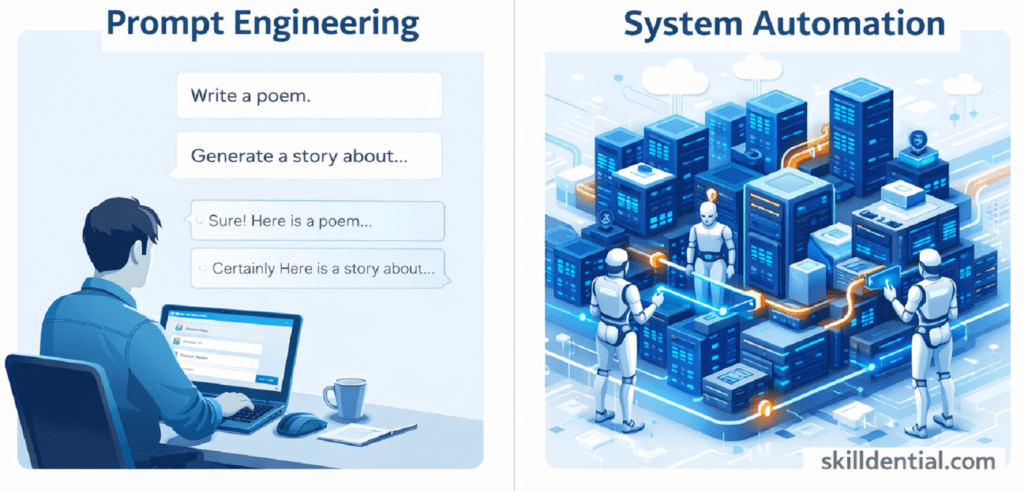

Prompt Engineering vs. System Automation: Scalability Gap

System automation integrates AI models into scalable frameworks to execute tasks independently, managing data processing, decision-making, and complex workflows without manual intervention. By utilizing components such as RAG (Retrieval-Augmented Generation) for context retrieval, API orchestration for operation chaining, and LLM-as-a-judge for automated evaluation, it bridges the gap between probabilistic outputs and deterministic requirements.

Unlike manual prompting, system automation ensures reliability across high volumes through rigorous state management and caching. This approach requires production-grade infrastructure to mitigate nondeterminism and optimize operational costs effectively.

What Is Prompt Engineering?

Prompt engineering is the iterative process of crafting inputs to guide Large Language Model (LLM) outputs for specific tasks. While this approach excels during the discovery and prototyping phase, it inevitably hits scalability limits due to context instability and a lack of generalizability. Manual refinements are insufficient under high throughput, as static prompts cannot address race conditions, handle state consistency, or adapt to the inherent drift in probabilistic models.

The 80/20 Limit of Prompting

- Discovery Power: Excellent for identifying the “path of least resistance” for a model to understand a specific domain.

- The Scalability Wall: Because prompts rely on linguistic heuristics, they lack the deterministic guardrails required for autonomous industry applications.

Technical Contrast: Heuristics vs. Infrastructure

| Feature | Prompt Engineering (Heuristic) | System Automation (Infrastructure) |
| --- | --- | --- |
| Logic Gate | Linguistic Nuance | Code-based Validation |
| State | Stateless/Single-turn | Stateful/Persistent |
| Error Handling | Manual “Retry” | Automated Circuit Breakers |
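To make the "Automated Circuit Breakers" entry concrete, here is a minimal sketch of the pattern in plain Python. The class name, thresholds, and the injectable `clock` parameter are illustrative assumptions, not part of any specific library.

```python
import time


class CircuitBreaker:
    """Stops calling a failing dependency until a cool-down period has passed."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # assumed threshold, tune per service
        self.reset_after = reset_after    # cool-down in seconds (assumption)
        self.clock = clock                # injectable so the logic is testable
        self.failures = 0
        self.opened_at = None             # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise RuntimeError("circuit open: skipping call")
            # Cool-down elapsed: allow one trial call (half-open state).
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success closes the circuit fully
        return result
```

Wrapping each model or API call in `breaker.call(...)` means a failing provider is skipped automatically instead of being retried by hand.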

In a high-leverage workflow, prompt engineering should be viewed as the R&D cost required to define the logic that system automation will eventually codify. Relying on prompting alone in production accrues technical debt that leads to the “Scalability Gap.”

Why Does Prompt Engineering Fail at Scale?

Manual prompt engineering breaks beyond the prototype stage due to model nondeterminism, retrieval failures, and distributed state issues. In high-throughput environments, the linguistic nuances that work for a single query fail to account for the variance inherent in Large Language Models.

Data from Skilldential career audits confirms this “Scalability Wall”: AI leads experienced a 40% output variance in high-volume tasks when relying solely on refined phrasing. By transitioning from manual prompts to automated RAG pipelines, that variance was reduced by 75%.

Key Failure Points at Scale

- Model Nondeterminism: The probabilistic nature of LLMs means the same prompt can yield divergent results across thousands of iterations, leading to inconsistent user experiences.

- Retrieval Failures: Without automated context injection, prompts often lack the necessary real-time data to maintain accuracy, resulting in hallucinations.

- State Consistency: Prompts cannot maintain a persistent memory or “state” across distributed systems, making multi-step workflows impossible to manage manually.

The Engineering Pivot

Reliability in production demands engineering over phrasing. While a prompt defines the “what,” System automation builds the “how,” providing the deterministic guardrails (such as validation layers and automated retries) that linguistic tweaks cannot provide.
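As a rough illustration of a validation layer with automated retries, the sketch below checks that a model reply is valid JSON with an allowed label and retries otherwise. The `call_model` callable, the `label` field, and its allowed values are hypothetical placeholders for whatever your task requires.

```python
import json

ALLOWED_LABELS = {"approve", "reject"}  # hypothetical schema for this example


def validate(raw):
    """Return the parsed payload if it has the expected shape, else raise ValueError."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if data.get("label") not in ALLOWED_LABELS:
        raise ValueError("label must be 'approve' or 'reject'")
    return data


def generate_with_retries(call_model, prompt, max_attempts=3):
    """Call the model until its output passes validation or attempts run out."""
    last_error = None
    for _ in range(max_attempts):
        raw = call_model(prompt)
        try:
            return validate(raw)
        except ValueError as exc:
            last_error = exc  # keep the reason for the final error message
    raise RuntimeError(f"no valid output after {max_attempts} attempts") from last_error
```

The prompt still defines what is being asked; the code decides whether an answer is acceptable.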

Prompt Engineering vs. System Automation

The “Scalability Gap” is best understood by analyzing how these two approaches handle high-leverage technical constraints. While prompt engineering identifies the logic, System automation codifies it into a production-grade asset.

Technical Comparison Matrix

| Aspect | Prompt Engineering | System Automation |
| --- | --- | --- |
| Scalability | Human-limited; fails >100 tasks/day | Handles 10k+ via orchestration |
| Determinism | Probabilistic; sensitive to phrasing | Enforced via caching/state stores |
| Cost Control | Token budgeting only | Dynamic optimization |
| Use Case | Prototyping/discovery | Production pipelines |

Strategic Breakdown

- Scalability: Manual prompting scales linearly with headcount, creating a bottleneck. System automation scales with infrastructure instead: throughput grows by adding compute while the human effort required grows far more slowly, allowing a single architect to manage massive throughput.

- Determinism: Phrasing is an unstable foundation for high-volume tasks. Automation utilizes state stores (like Redis or Postgres) and caching so that once a “correct” result is found for a given input, it is reused reliably instead of being regenerated, avoiding the run-to-run variation that sampling at a nonzero temperature introduces.

- Cost Control: Beyond simple token counting, automation allows for dynamic optimization: cheaper models (like GPT-4o-mini or Gemini Flash) handle routing and validation, and larger models are reserved for complex reasoning steps.
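The caching and routing ideas above can be sketched together in a few lines. This is a simplified illustration: the in-memory dictionary stands in for a store such as Redis, and `cheap_model`, `strong_model`, and `needs_reasoning` are hypothetical callables you would supply.

```python
import hashlib


class CachedRouter:
    """Routes each prompt to a cheap or strong model and caches the answer."""

    def __init__(self, cheap_model, strong_model, needs_reasoning):
        self.cheap_model = cheap_model
        self.strong_model = strong_model
        self.needs_reasoning = needs_reasoning  # hypothetical routing rule
        self.cache = {}  # stand-in for Redis/Postgres in this sketch

    def run(self, prompt):
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            # Identical input: reuse the stored answer, no model call at all.
            return self.cache[key]
        model = self.strong_model if self.needs_reasoning(prompt) else self.cheap_model
        answer = model(prompt)
        self.cache[key] = answer
        return answer
```

Repeated inputs return identical outputs by construction, and the expensive model is only paid for when the routing rule asks for it.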

The 80/20 Rule of Implementation

In the Skilldential framework, 80% of your initial time is spent in Prompt Engineering to discover the model’s boundaries. However, 80% of your long-term value is derived from the System Automation that removes the human from the loop.

How Does the Automation Stack Work?

The automation stack functions as a deterministic wrapper around probabilistic models. To achieve industrial-grade reliability, the architecture layers four distinct technical stages:

Data Ingestion & RAG Retrieval

Automation begins by replacing static prompts with dynamic context. Ingestion pipelines process raw data, while Retrieval-Augmented Generation (RAG) identifies the most relevant passages by comparing vector embeddings and injects only that text into the model’s context window. This reduces hallucination by grounding the generation in verifiable facts.
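A toy version of the retrieval step might look like the following. The word-count `embed` function is a deliberate simplification standing in for a real embedding model, and all function names here are illustrative.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real pipeline would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Inject only the retrieved passages into the prompt text."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

The model only ever sees the few passages that `retrieve` selects, which is what keeps the prompt small and grounded.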

LLM Generation & API Orchestration

Rather than a single “chat,” API orchestration (using frameworks like LangGraph or Semantic Kernel) chains multiple operations. This allows the system to execute branching logic—where the output of one model informs the task of the next—enabling complex, multi-step decision-making without human oversight.
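The branching idea can be shown without any framework. The sketch below is plain Python, not the LangGraph or Semantic Kernel API; the step names and the keyword-based `classify` function are hypothetical stand-ins for model calls.

```python
def classify(state):
    # Hypothetical stand-in for a small routing model.
    text = state["message"].lower()
    state["route"] = "refund" if "refund" in text else "general"
    return state


def handle_refund(state):
    state["reply"] = "Forwarding to the refunds workflow."
    return state


def handle_general(state):
    state["reply"] = "Forwarding to general support."
    return state


STEPS = {"classify": classify, "refund": handle_refund, "general": handle_general}

# Each transition inspects the state and names the next step (None ends the run).
TRANSITIONS = {
    "classify": lambda state: state["route"],
    "refund": lambda state: None,
    "general": lambda state: None,
}


def run_pipeline(message):
    """Run steps in sequence, letting each step's output pick the next one."""
    state = {"message": message, "trace": []}
    step = "classify"
    while step is not None:
        state = STEPS[step](state)
        state["trace"].append(step)
        step = TRANSITIONS[step](state)
    return state
```

The `trace` list records which path was taken, which is useful later when debugging a misrouted request.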

Evaluation: LLM-as-a-Judge

Quality control is automated via LLM-as-a-judge. This system uses a highly capable model (e.g., Gemini 1.5 Pro) to score the outputs of smaller, faster production models.

- Pointwise Scoring: Evaluating a single output against a rubric (e.g., accuracy, tone).

- Pairwise Comparison: Comparing two potential outputs to select the highest-leverage response.
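Both scoring modes can be sketched as thin wrappers around a judge call. Here `judge` is any callable that takes a prompt string and returns the judge model's text reply; the prompt wording and the 1 to 5 scale are assumptions for illustration.

```python
def pointwise_score(judge, output, rubric):
    """Ask the judge for a 1-5 score and reject anything outside that range."""
    reply = judge(
        f"Rubric: {rubric}\nOutput: {output}\n"
        "Reply with a single integer from 1 to 5."
    )
    score = int(reply.strip())  # raises ValueError if the reply is not an integer
    if not 1 <= score <= 5:
        raise ValueError(f"score out of range: {score}")
    return score


def pairwise_pick(judge, candidate_a, candidate_b, rubric):
    """Ask the judge which candidate is better and return that candidate."""
    reply = judge(
        f"Rubric: {rubric}\nA: {candidate_a}\nB: {candidate_b}\n"
        "Reply with A or B."
    ).strip().upper()
    if reply not in {"A", "B"}:
        raise ValueError(f"unexpected judge reply: {reply!r}")
    return candidate_a if reply == "A" else candidate_b
```

Parsing the judge's reply strictly matters: a judge is itself a probabilistic model, so its output needs the same validation as any other.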

Deployment & Monitoring (MLOps)

The final layer utilizes MLOps tools like MLflow to deploy these chains. Continuous monitoring tracks model drift, latency, and token costs in real-time. This infrastructure ensures that if a “Scalability Gap” appears, it is identified by data triggers rather than user complaints.
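A data trigger of this kind can be as simple as a rolling window with thresholds. The sketch below is independent of MLflow or any other tool; the class name, window size, and threshold values are illustrative assumptions.

```python
from collections import deque


class RollingMonitor:
    """Tracks recent requests and reports which thresholds are breached."""

    def __init__(self, window=100, max_failure_rate=0.1, max_mean_latency=2.0):
        self.records = deque(maxlen=window)  # oldest records drop off automatically
        self.max_failure_rate = max_failure_rate
        self.max_mean_latency = max_mean_latency  # seconds

    def record(self, latency_s, tokens, ok):
        self.records.append((latency_s, tokens, ok))

    def tokens_in_window(self):
        return sum(tokens for _, tokens, _ in self.records)

    def alerts(self):
        """Return the names of the metrics currently above their thresholds."""
        if not self.records:
            return []
        n = len(self.records)
        failure_rate = sum(1 for _, _, ok in self.records if not ok) / n
        mean_latency = sum(latency for latency, _, _ in self.records) / n
        triggered = []
        if failure_rate > self.max_failure_rate:
            triggered.append("failure_rate")
        if mean_latency > self.max_mean_latency:
            triggered.append("latency")
        return triggered
```

In practice the result of `alerts()` would feed a pager or dashboard, so a regression surfaces from the data before users report it.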

The 80/20 Technical Stack

- Orchestration: 20% of the code (The logic chain).

- Evaluation: 80% of the reliability (The automated guardrails).

What Components Build System Automation?

System automation shifts the focus from “what” the AI says to “how” the AI is governed. To bridge the gap from hobbyist prompting to professional infrastructure, a production-grade stack requires four core architectural pillars.

Contextual Memory: RAG and Vector Databases

While a prompt is limited by a static context window, system automation uses Retrieval-Augmented Generation (RAG) to pull real-time, external knowledge.

- Vector Databases: Tools like Pinecone, Weaviate, or Milvus store data as numerical embeddings. This allows the system to perform semantic searches across millions of documents, injecting only the most relevant passages into the model at runtime.

- Hybrid Search: Modern stacks combine vector similarity with traditional keyword search (BM25) to ensure precision for technical terms and serial numbers.
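One common way to merge a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The source does not say which fusion method a given stack uses, so treat this as one possible approach; the document IDs below are made up.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document earns 1 / (k + rank) from every list it appears in;
    k=60 is a conventional default, not a tuned value.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for a query that contains a serial number.
keyword_ranking = ["sn-4471", "faq", "manual"]   # e.g. from BM25
vector_ranking = ["manual", "sn-4471", "blog"]   # e.g. from embedding similarity
fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
```

Documents that rank well in both lists rise to the top, so an exact serial-number match is not drowned out by loosely related semantic hits.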

The Logic Engine: Orchestration Frameworks

Orchestration is the “manager” of the AI workflow. Instead of a single prompt, frameworks like LangGraph, CrewAI, or Semantic Kernel chain multiple tasks together.

- Multi-Agent Workflows: Specialized agents (e.g., a “Researcher” agent and a “Writer” agent) collaborate, passing data back and forth to solve complex problems that a single prompt would fail to process.

- State Management: Orchestration ensures the system remembers progress across multi-step tasks, preventing the “memory loss” common in long-form manual prompting.
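State management can be illustrated with a checkpoint file: each completed step is persisted so that a crashed run resumes where it stopped. This is a minimal sketch using a local JSON file; a production system would more likely use a database, and the step names are hypothetical.

```python
import json
from pathlib import Path


def run_with_checkpoints(steps, state_path, initial):
    """Run (name, function) steps in order, skipping any already completed.

    State is written to disk after every step, so a later call with the
    same state_path resumes instead of starting over.
    """
    path = Path(state_path)
    if path.exists():
        state = json.loads(path.read_text())
    else:
        state = {"done": [], "data": initial}
    for name, fn in steps:
        if name in state["done"]:
            continue  # finished in a previous run
        state["data"] = fn(state["data"])
        state["done"].append(name)
        path.write_text(json.dumps(state))
    return state
```

Because the data must round-trip through JSON, each step should return plain lists, dictionaries, strings, or numbers.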

Quality Control: LLM-as-a-Judge

In professional systems, “vibe checks” are replaced by automated metrics. LLM-as-a-judge uses a superior model (like Gemini 1.5 Pro) to evaluate the output of production models based on a strict rubric.

- Pointwise Scoring: Assigning a numerical value to an output’s accuracy or safety.

- Pairwise Comparison: Choosing the better of two candidate responses to optimize the system over time.

Scalable Infrastructure: Cloud-Native MLOps

To handle 10,000+ tasks daily, the system must live on cloud-native infrastructure.

- Serverless Scaling: Utilizing platforms like AWS Lambda or Google Cloud Run to spin up compute power only when needed, controlling costs dynamically.

- Monitoring (Observability): Tools like MLflow or LangSmith track every “trace” of a conversation. This allows architects to debug exactly where a retrieval failed or a model hallucinated, providing the high-signal data needed for continuous improvement.

Strategic Insight: If Prompt Engineering is the art of discovery, these components represent the science of scale. Transitioning to this stack is the definitive move for any technical professional seeking industry success in 2026.

How to Transition to System Automation?

Transitioning from manual prompting to a production-grade system requires a structured, phased approach. To achieve 80/20 efficiency, operations strategists must move from linguistic experimentation to architectural deployment.

Prototype with RAG

Do not start with complex agents. Begin by grounding your current “best” prompts in a Retrieval-Augmented Generation (RAG) pipeline. Replace static instructions with dynamic data injection from a vector database. This immediately stabilizes outputs and reduces the need for manual prompt tweaking.

Orchestrate via FastAPI and LangChain

Move the logic out of the chat interface and into code. Use FastAPI to create a scalable backend and LangChain or LangGraph to manage the workflow logic.

- Stateful Logic: Design your system to remember where it is in a multi-step process.

- Asynchronous Execution: Ensure the system can handle multiple requests simultaneously without blocking resources.
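For the asynchronous part, a small standard-library sketch shows the core idea: run many requests concurrently while capping how many are in flight. The `handler` coroutine and the concurrency limit are assumptions; inside a FastAPI app the same pattern would sit behind an `async def` endpoint.

```python
import asyncio


async def process_all(handler, requests, max_concurrency=5):
    """Process requests concurrently, with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(request):
        async with semaphore:  # wait here if too many calls are running
            return await handler(request)

    # gather returns results in the same order as the inputs.
    return await asyncio.gather(*(guarded(r) for r in requests))


async def fake_handler(request):
    """Hypothetical stand-in for an awaitable model or API call."""
    await asyncio.sleep(0.01)
    return request.upper()
```

Calling `asyncio.run(process_all(fake_handler, ["a", "b", "c"], max_concurrency=2))` processes all three inputs without ever running more than two at once.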

Implement Automated Evaluation

Replace human review with LLM-as-a-judge. Create a testing suite that automatically scores your system’s outputs against a gold-standard dataset. This provides the high-signal feedback loop necessary to iterate without manual intervention.
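A testing suite along these lines could be sketched as follows. The `system` and `judge` callables, the gold-set layout, and the pass thresholds are all assumptions chosen for illustration; `judge` is expected to return a numeric score for an output given the expected answer.

```python
def evaluate(system, judge, gold_set, pass_score=4, min_pass_rate=0.9):
    """Score the system on every gold case and decide whether it may ship."""
    if not gold_set:
        raise ValueError("gold_set must contain at least one case")
    results = []
    for case in gold_set:
        output = system(case["input"])
        score = judge(output, case["expected"])
        results.append({
            "input": case["input"],
            "output": output,
            "score": score,
            "passed": score >= pass_score,
        })
    pass_rate = sum(r["passed"] for r in results) / len(results)
    return {
        "pass_rate": pass_rate,
        "release_ok": pass_rate >= min_pass_rate,
        "results": results,
    }
```

Running this on every change turns evaluation into a regression test: a prompt or model swap that lowers the pass rate is caught before deployment.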

Audit and Optimize for Bottlenecks

Analyze your pipeline to identify where latency or costs are spiking.

- 80/20 Cost Control: Use high-parameter models for reasoning and switch to smaller, faster models (like Gemini 1.5 Flash) for routing and summarization tasks.

Deploy on Kubeflow

For true industry success, the stack must be portable and scalable. Deploying via Kubeflow on a Kubernetes cluster allows you to manage the entire machine learning lifecycle—from data preparation to model serving—with production-grade monitoring and automated scaling.

Strategic Outcome

By following this transition, you move from a task-based hobbyist to a systems-based architect. The result is a robust, autonomous framework that maintains 99% reliability regardless of task volume.

What defines system automation in AI?

System automation is the deployment of AI models within autonomous pipelines for independent task execution. Unlike traditional Rule-Based Automation, AI system automation processes unstructured data via deep learning mechanisms, allowing it to adapt to patterns rather than following a rigid “if-this-then-that” script. It bridges the gap between probabilistic model outputs and deterministic business requirements.

When does prompt engineering become insufficient?

Prompt engineering hits its “Scalability Wall” when task volume exceeds a human’s ability to monitor for variance. It fails at scale due to model nondeterminism (inconsistent outputs for the same input) and distributed state issues. While optimal for low-volume discovery and R&D, manual prompting cannot handle the race conditions or state consistency required for high-leverage industry applications.

What is RAG in automation stacks?

Retrieval-Augmented Generation (RAG) is the architectural bridge between an LLM and external data. It involves two stages:

- Indexing: Converting proprietary data into vector embeddings.

- Retrieval: Querying that data at runtime to provide the LLM with relevant context.

This reduces hallucinations by keeping the system grounded in verifiable facts, and caching frequent queries helps it scale.

How does LLM-as-a-judge work?

LLM-as-a-judge replaces manual human “vibe checks” with automated, metric-based evaluation. A highly capable model (the “Judge”) assesses production model outputs using specific rubrics.

- Pointwise: Scoring a single response on a scale (e.g., 1–5 for accuracy).

- Pairwise: Comparing two candidate responses and selecting the superior one.

This enables scalable, objective quality control within a deployment pipeline.

What MLOps practices support automation?

Professional-grade automation requires rigorous versioning and monitoring tools.

- MLflow: Used for experiment tracking and model versioning to ensure reproducibility.

- Kubeflow: Facilitates deployment on cloud-native infrastructure, managing the lifecycle from data prep to serving.

- Integration: These tools integrate with frameworks like PyTorch and TensorFlow. Combined with continuous monitoring, this lets the pipeline trigger automated retries or alerts when it detects drift or failure.

In Conclusion

The transition from Prompt Engineering to System Automation marks the shift from experimental AI use to an industrial-grade application. While prompt engineering is the optimal tool for discovery and identifying the “path of least resistance” for a model, it cannot bridge the Scalability Gap alone.

True reliability at scale requires a deterministic infrastructure: a stack composed of RAG for contextual grounding, API orchestration for complex workflows, and LLM-as-a-judge for automated quality metrics. By enforcing these through MLOps frameworks, you move beyond the fragility of manual phrasing into the robustness of persistent systems.

80/20 Action Plan

- Audit Current Pipelines: Identify high-volume manual prompting tasks that suffer from >20% output variance.

- Prototype RAG: Transition your most effective prompts into a RAG-based workflow to ground outputs in proprietary data.

- Automate Logic: Use orchestration to remove the human-in-the-loop, targeting the automation of 80% of your manual prompting load.
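The audit step needs a working definition of "output variance," which the article does not give. One simple assumption is the share of repeated runs that disagree with the most common output; the sketch below uses that definition, and the task names are made up.

```python
from collections import Counter


def output_variance(outputs):
    """Fraction of runs that differ from the most common output (assumed metric)."""
    if not outputs:
        raise ValueError("need at least one output")
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return 1 - most_common_count / len(outputs)


def flag_unstable(task_outputs, threshold=0.20):
    """Return the names of tasks whose variance exceeds the threshold."""
    return sorted(
        name
        for name, outputs in task_outputs.items()
        if output_variance(outputs) > threshold
    )


# Hypothetical audit: the same prompt was run several times per task.
audit = {
    "ticket_tagging": ["billing"] * 9 + ["shipping"],
    "summaries": ["x", "y", "x", "z"],
}
unstable = flag_unstable(audit)
```

Exact-match comparison suits classification-style outputs; for free-form text you would likely need a similarity measure or a judge model instead.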

By architecting systems rather than just writing prompts, you align with the high-leverage frameworks necessary for industry success in the AI era.
