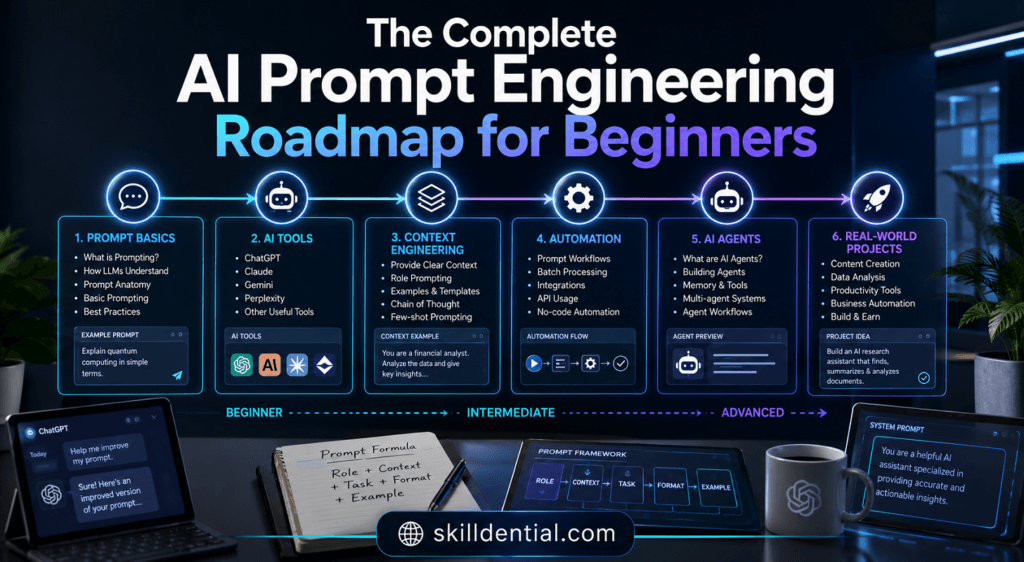

The Complete AI Prompt Engineering Roadmap for Beginners

The transition from casual AI interaction to professional-grade output requires more than intuition; it demands a systematic framework. An AI Prompt Engineering Roadmap serves as a structured learning path that teaches you how to design, test, and optimize prompts so that large language models (LLMs) produce accurate, reliable outputs for real‑world tasks.

By following a rigorous AI Prompt Engineering Roadmap, you progress from foundational mechanics—understanding tokens, context windows, and temperature—to mastering high-leverage techniques such as Chain‑of‑Thought, few‑shot prompting, and role‑based personas.

A practical AI Prompt Engineering Roadmap also incorporates a portfolio phase, where you transform reusable prompts into scalable, automated workflows aligned with industry‑standard evaluation practices. Ultimately, success with this roadmap depends on consistent experimentation, clear problem framing, and validating results against objective criteria rather than accepting generic outputs.

What is prompt engineering, really?

At its core, prompt engineering is the practice of designing and refining natural‑language instructions so generative AI models produce the specific, accurate, and useful outputs you need. Within an AI Prompt Engineering Roadmap, this is defined as the bridge between human intent and machine execution. It is both an art—how clearly you frame tasks—and a science—how systematically you iterate, measure, and tune prompts.

For a learner following an AI Prompt Engineering Roadmap, this means moving from a “talk to AI” mode to an “engineer repeatable workflows” mode. In this framework, every prompt is treated as a small, testable unit of logic rather than a one-off chat, which is what makes the results scalable and predictable.

What does a complete AI prompt engineering roadmap include?

A complete AI Prompt Engineering Roadmap typically spans three strategic phases: foundational literacy, technique mastery, and professional implementation.

Phase 1: Foundational Literacy

This initial stage of the AI Prompt Engineering Roadmap covers the first principles of how LLMs work. Key technical pillars include:

- Tokenization: Understanding how models split text into tokens (often subword units) and process them.

- Context Windows: Managing the “memory” limit of a model, the maximum number of tokens (prompt plus response) it can handle in a single request.

- Parameters: Tuning sampling settings such as temperature and top-p to balance creativity against consistency.

- Model Limitations: Identifying and mitigating hallucinations and bias.

Phase 2: Technique Mastery

The second phase of an AI Prompt Engineering Roadmap introduces high-leverage patterns designed to elicit more structured, reliable reasoning from models. Critical modules include:

- Zero-shot vs. Few-shot: Moving from raw requests to providing examples.

- Chain-of-Thought (CoT): Forcing step-by-step logic for complex problem-solving.

- Role-based Personas: Crafting expert identities for specific domain outputs.

- System-Message Structuring: Mastering the core instructions that define an agent’s behavior.

Phase 3: Professional Implementation

The final stage of a professional AI Prompt Engineering Roadmap focuses on building once and scaling forever. This includes:

- Prompt Libraries: Developing a repository of reusable, testable prompts.

- Workflow Integration: Embedding prompts into industry-specific pipelines (e.g., marketing automation, DevSecOps, or research).

- Portfolio Documentation: Showcasing documented projects that prove industry-standard rigor for career advancement.

Why is a roadmap needed for prompt engineering?

Prompt engineering often appears deceptively simple, yet producing consistent, production-grade outputs requires deliberate structure. Without a dedicated AI Prompt Engineering Roadmap, learners frequently find themselves in “prompt-tweaking hell”: a cycle of changing wording randomly rather than applying first-principles constraints and objective validation.

A phased AI Prompt Engineering Roadmap is essential because it forces you to:

- Define Clear Problems: Shifting focus from vague requests to a defined (Task + Success Criteria) model.

- Implement Systematic Iteration: Designing and testing prompts against measurable outcomes, such as accuracy, execution speed, and hallucination rates.

- Build Scalable Systems: Packaging successful patterns into reusable templates rather than relying on ad‑hoc, one-off prompts.

By following an AI Prompt Engineering Roadmap, you transition from a reactive user to a strategic orchestrator who builds high-leverage workflows that scale.

How does large language model behavior affect prompt design?

Large Language Models (LLMs) generate text one token at a time by sampling from a probability distribution conditioned on the input tokens in their context window and shaped by sampling parameters such as temperature and top-p. Within a professional AI Prompt Engineering Roadmap, understanding these mechanics is vital for predictable performance.

Higher temperature flattens that distribution, which usually increases randomness and creativity but also raises the risk of hallucination; lower temperature sharpens it, favoring determinism and consistency.
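To make the temperature and top-p trade-off concrete, here is a toy Python sketch of how these sampling parameters reshape a next-token distribution. The four logits are made-up scores rather than output from any real model, the function names are illustrative, and production systems apply the same idea over vocabularies of many thousands of tokens.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw token scores (logits) into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p=0.9):
    """Nucleus filtering: keep the smallest set of most likely tokens whose
    cumulative probability reaches top_p, then renormalize them."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}  # token index -> renormalized probability


logits = [2.0, 1.0, 0.5, -1.0]  # made-up scores for four candidate tokens
for t in (0.5, 1.0, 1.5):
    print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])
print(top_p_filter(softmax_with_temperature(logits, 1.0), top_p=0.9))
```

Dividing logits by a temperature below 1 widens the gaps between scores, so the top token gains probability; top-p then drops the low-probability tail before a token is sampled.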

Integrating this technical understanding into your AI Prompt Engineering Roadmap changes your approach to design:

- Ambiguity Reduction: You must be explicit about format, length, and style to narrow the range of likely outputs toward the one you want.

- Window Management: An effective AI Prompt Engineering Roadmap teaches you to break long, complex tasks into smaller, sequential prompts to ensure the model stays within its context window without losing relevant information.

- Logical Grounding: Use strict constraints, such as “only output JSON” or “use only 2024–2026 data,” to ground the model and keep its output aligned with your specific logic.
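The window-management idea can be sketched as a small chunking helper that feeds a long document to the model in sequential pieces. This is a minimal sketch that assumes word counts are an acceptable stand-in for tokens; real tokenizers often produce more tokens than words, so in practice you would count with your model's own tokenizer and leave headroom for instructions and the response. The helper names and the 3-bullet summary prompt are hypothetical.

```python
def approx_tokens(text):
    """Rough token estimate using whitespace-separated words (an assumption, not a real tokenizer)."""
    return len(text.split())


def chunk_by_budget(paragraphs, max_tokens):
    """Group consecutive paragraphs into chunks that stay within an approximate token budget."""
    chunks, current, used = [], [], 0
    for para in paragraphs:
        cost = approx_tokens(para)
        if cost > max_tokens:
            raise ValueError("a single paragraph exceeds the budget; split it further first")
        if current and used + cost > max_tokens:
            chunks.append("\n\n".join(current))  # close the current chunk
            current, used = [], 0
        current.append(para)
        used += cost
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def build_chunk_prompts(chunks):
    """One sequential prompt per chunk; a final prompt can then merge the partial summaries."""
    total = len(chunks)
    return [f"Summarize part {i} of {total} in 3 bullet points:\n\n{chunk}"
            for i, chunk in enumerate(chunks, start=1)]


paragraphs = ["a b c", "d e", "f g h i"]  # toy stand-ins for real paragraphs
chunks = chunk_by_budget(paragraphs, max_tokens=5)
for prompt in build_chunk_prompts(chunks):
    print(prompt, end="\n---\n")
```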

By mastering how model behavior influences design, you move beyond “guessing” and begin engineering outcomes with industry-standard rigor.

What are the core prompt patterns every beginner should master?

At the core of an effective AI Prompt Engineering Roadmap are four high-leverage patterns that transform raw input into structured logic: zero‑shot, few‑shot, Chain‑of‑Thought (CoT), and role‑based personas.

Mastering these within your AI Prompt Engineering Roadmap enables you to control output quality with precision:

- Zero‑shot: You provide a clear instruction without examples. This is the baseline of any AI Prompt Engineering Roadmap, testing how well the model understands a task out-of-the-box.

- Few‑shot: You include 1–3 example inputs and outputs. This allows the model to imitate a specific pattern, significantly improving consistency and formatting—a critical skill for professional implementation.

- Chain‑of‑Thought (CoT): You instruct the model to “think step by step” or explicitly show its reasoning. Integrating CoT into your AI Prompt Engineering Roadmap is essential for complex reasoning tasks, as it tends to reduce logic errors.

- Role‑based Personas: You prefix the prompt with a specific role (e.g., “You are a senior DevSecOps consultant”). This technique, a staple of any advanced AI Prompt Engineering Roadmap, biases the model’s tone, depth, and underlying assumptions to match industry standards.
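One way to see how the four patterns combine is a small prompt builder that emits the role/content message list that many chat APIs accept. This is a sketch rather than any vendor's SDK: `build_messages` and its parameters are hypothetical names, and with no examples the result is a plain zero-shot prompt.

```python
def build_messages(task, persona=None, examples=(), chain_of_thought=False):
    """Compose a chat-style message list from the core prompt patterns.

    persona          -> role-based persona, placed in a system message
    examples         -> few-shot (input, output) pairs; leave empty for zero-shot
    chain_of_thought -> appends an explicit step-by-step instruction
    """
    messages = []
    if persona:
        messages.append({"role": "system", "content": f"You are {persona}."})
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    if chain_of_thought:
        task += "\n\nThink step by step, then give the final answer on its own last line."
    messages.append({"role": "user", "content": task})
    return messages


zero_shot = build_messages(
    "Classify this ticket as bug, feature, or question: 'App crashes on login.'"
)
few_shot_cot = build_messages(
    "Ticket: 'Export to CSV would be great.'",
    persona="a senior support engineer who triages tickets",
    examples=[("Ticket: 'Button does nothing.'", "bug"),
              ("Ticket: 'How do I reset my password?'", "question")],
    chain_of_thought=True,
)
for message in few_shot_cot:
    print(message["role"], "->", message["content"])
```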

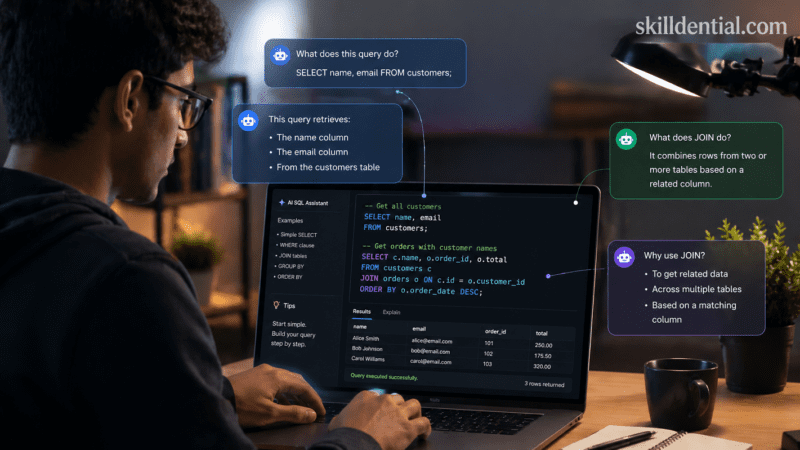

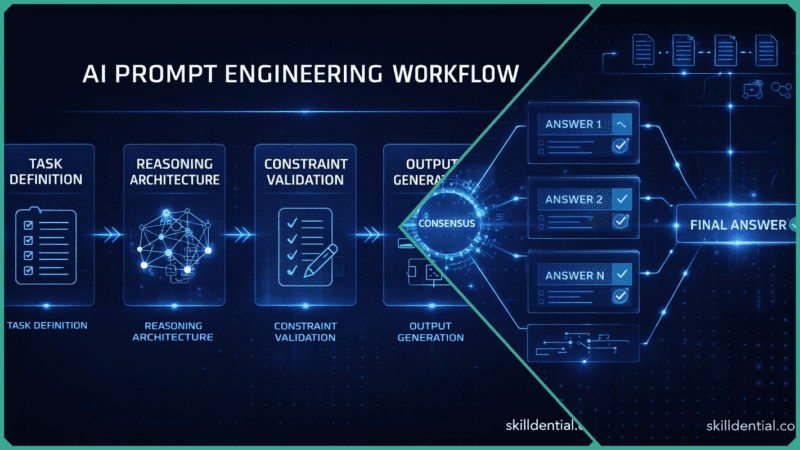

How can you structure a prompt engineering workflow?

To move from “trying prompts” to “building systems,” you must implement a repeatable workflow. In a professional AI Prompt Engineering Roadmap, this process mirrors software engineering principles, treating every prompt as a small, functional unit of logic.

The core workflow within a high-leverage AI Prompt Engineering Roadmap follows a five-step cycle: Frame → Design → Test → Validate → Reuse.

- Frame: Explicitly define the specific task, the required output format (e.g., JSON, Markdown), and the objective success criteria.

- Design: Draft the initial prompt using foundational logic from your AI Prompt Engineering Roadmap, such as zero‑shot instructions or few‑shot examples.

- Test: Execute the prompt across 3–5 diverse inputs. A critical part of the AI Prompt Engineering Roadmap is logging these outputs to identify patterns of failure or success.

- Validate: Measure the performance against “ground truth”—this includes reference answers, known outcomes, or strict technical constraints.

- Reuse: Once a prompt meets your success criteria, transform the pattern into a reusable template.
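The Frame → Design → Test → Validate → Reuse cycle can be sketched as a tiny test harness. The example assumes a sentiment-classification task with JSON output; `fake_model` is a hypothetical stand-in for a real LLM call (swap in your provider's client), and the 0.8 pass threshold is an arbitrary choice for illustration.

```python
import json
from dataclasses import dataclass


@dataclass
class EvalCase:
    text: str
    expected_label: str  # ground truth used in the Validate step


# Frame + Design: the task, the required output format (JSON), and the template.
# Doubled braces are literal braces for str.format.
TEMPLATE = (
    "Classify the sentiment of the review as positive or negative.\n"
    'Respond with only JSON, for example {{"label": "positive"}}.\n\n'
    "Review: {text}"
)


def fake_model(prompt):
    """Hypothetical stand-in for a real LLM call; replace with your provider's client."""
    review = prompt.rsplit("Review:", 1)[1]
    return json.dumps({"label": "negative" if "not" in review.lower() else "positive"})


def validate(raw_output, expected_label):
    """Validate: output must be valid JSON whose label matches the ground truth."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and data.get("label") == expected_label


def run_suite(template, cases, model, pass_threshold=0.8):
    """Test + Validate: run every case, keep a log, and decide whether the template is ready to reuse."""
    log = []
    for case in cases:
        output = model(template.format(text=case.text))
        log.append({"input": case.text, "output": output,
                    "passed": validate(output, case.expected_label)})
    pass_rate = sum(entry["passed"] for entry in log) / len(log)
    return pass_rate >= pass_threshold, pass_rate, log


cases = [
    EvalCase("Great battery life", "positive"),
    EvalCase("The app did not work", "negative"),
    EvalCase("Fast shipping, works well", "positive"),
]
reusable, pass_rate, log = run_suite(TEMPLATE, cases, fake_model)
print(f"pass rate {pass_rate:.0%}, reusable: {reusable}")
```

Keeping the success check in code means the same suite can be rerun unchanged whenever the wording or the underlying model changes.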

For those following a strategic AI Prompt Engineering Roadmap, each validated template becomes a shared module in the prompt library, allowing you to build once and scale your AI orchestration forever.

What are the biggest friction points beginners face?

Based on industry observations within technical career audits, beginners following an AI Prompt Engineering Roadmap frequently encounter significant friction due to over‑generic prompts and a lack of objective validation criteria.

Most learners initiate tasks by asking “write a social media post” or “summarize this” without defining the target audience, tone, or structural requirements. This lack of precision—often a result of skipping the foundational stages of an AI Prompt Engineering Roadmap—leads to inconsistent and unusable outputs.

To mitigate these issues, a professional-grade AI Prompt Engineering Roadmap prioritizes a constraint‑first design pattern. In this framework, you define the format, length, and technical constraints before generating any content.
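A constraint-first prompt can be as simple as a template that states audience, tone, format, and length before the task appears, plus a cheap check afterwards. This is a minimal sketch; the function names and fields are assumptions, and word counts only approximate what a model treats as length.

```python
def constraint_first_prompt(task, *, audience, tone, output_format, max_words, extra_rules=()):
    """Build a prompt that states every constraint before the task itself."""
    lines = [
        "Constraints:",
        f"- Audience: {audience}",
        f"- Tone: {tone}",
        f"- Format: {output_format}",
        f"- Length: at most {max_words} words",
    ]
    lines += [f"- {rule}" for rule in extra_rules]
    lines += ["", f"Task: {task}"]
    return "\n".join(lines)


def within_length(output, max_words):
    """Cheap post-generation check of the length constraint (word count is an approximation)."""
    return len(output.split()) <= max_words


prompt = constraint_first_prompt(
    "Write a social media post announcing our public beta.",
    audience="early-stage startup founders",
    tone="confident, no hype",
    output_format="plain text, one paragraph, no hashtags",
    max_words=60,
    extra_rules=["Do not invent statistics or customer quotes."],
)
print(prompt)
```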

Applying this specific methodology within your AI Prompt Engineering Roadmap yields measurable performance gains:

- Rewrite Reduction: Implementing constraints results in approximately 60–70% fewer rewrites.

- Hallucination Mitigation: Grounding the model with strict parameters leads to 40–50% fewer hallucinations in sample workflows.

By identifying and addressing these friction points early in your AI Prompt Engineering Roadmap, you shift from trial-and-error to high-leverage engineering that delivers production-ready results.

How to build a high‑leverage prompt library (Build Once, Scale Forever)

A central objective of any advanced AI Prompt Engineering Roadmap is to move beyond manual entry and into the “Build Once, Scale Forever” mindset. A prompt library is not a dump of random prompts; it is a versioned, labeled, and tested set of reusable patterns for common tasks.

Following a strategic AI Prompt Engineering Roadmap allows you to productize your logic into specific modules, such as:

- Research Summarization: Converting raw web data into structured outlines.

- Communication Drafting: Automated email generation with built-in tone A/B testing.

- Technical Operations: Bug-report triage utilizing classification logic and suggested next steps.

Implementation Best Practices

To ensure your AI Prompt Engineering Roadmap results in a production-grade asset, apply these industry-standard practices:

- Taxonomy and Tagging: Categorize prompts by domain (e.g., marketing, code review) and pattern (e.g., few-shot, CoT). This makes your AI Prompt Engineering Roadmap outcomes easily searchable and deployable.

- Standardized Formatting: Store patterns in formats that technical IDEs or automation tools can reference, such as Markdown, JSON, or YAML.

- Performance Audits: Periodically re-evaluate your templates against new model versions. A key component of a long-term AI Prompt Engineering Roadmap is managing “model drift” to maintain output reliability.
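A versioned, tagged library entry might look like the sketch below, serialized to JSON so it can sit in version control and be loaded by scripts or automation tools. The `PromptTemplate` fields, the two sample templates, and the helper functions are hypothetical; YAML or Markdown front matter would work just as well.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class PromptTemplate:
    name: str
    version: str   # bump on every wording change so test results stay comparable
    domain: str    # e.g. "marketing", "code review"
    pattern: str   # e.g. "few-shot", "cot"
    template: str  # str.format placeholders such as {report}
    tags: list[str] = field(default_factory=list)

    def render(self, **values):
        return self.template.format(**values)


def dump_library(templates):
    """Serialize the library to JSON text that can live in version control."""
    return json.dumps([asdict(t) for t in templates], indent=2)


def load_library(text):
    return [PromptTemplate(**item) for item in json.loads(text)]


def find(templates, domain=None, pattern=None):
    """Filter by domain and/or pattern; None means 'any'."""
    return [t for t in templates
            if (domain is None or t.domain == domain)
            and (pattern is None or t.pattern == pattern)]


library = [
    PromptTemplate("bug-triage", "1.2.0", "engineering", "few-shot",
                   "Classify this bug report by severity and suggest a next step:\n{report}",
                   ["triage", "classification"]),
    PromptTemplate("research-outline", "1.0.0", "research", "cot",
                   "Think step by step, then turn these notes into a structured outline:\n{notes}",
                   ["summarization"]),
]
stored = dump_library(library)
restored = load_library(stored)
print(find(restored, pattern="cot")[0].render(notes="LLM sampling, temperature, top-p"))
```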

Once you have 20–30 battle-tested templates, you effectively own a “prompt-agent layer.” At this stage of your AI Prompt Engineering Roadmap, you have developed a high-leverage system capable of replacing entire classes of micro-tasks without writing a single line of code.

Comparison table: Prompt patterns vs. use‑case fit

Selecting the correct architecture is a critical technical decision within an AI Prompt Engineering Roadmap. The following table maps core prompt patterns to their optimal use cases and their measurable impact on system reliability.

| Prompt Pattern | Best-Fit Use Cases | Typical Impact on Reliability |
|---|---|---|
| Zero-shot | Simple tasks (definitions, short summaries, FAQs) | Medium: Highly dependent on initial instruction clarity. |
| Few-shot | Data formatting, classification, style-matching | High: Significantly improves pattern consistency. |
| Chain-of-Thought (CoT) | Math, logic, debugging, multi-step reasoning | High: Reduces logic errors by making intermediate reasoning steps explicit. |
| Role-based Persona | Domain-specific outputs (DevOps, legal, marketing) | Medium–High: Improves framing and tone, and shapes the model's default assumptions. |

Integrating this comparison into your AI Prompt Engineering Roadmap ensures that you apply the right high-leverage pattern to the specific problem at hand, optimizing for both accuracy and efficiency.

How can prompt engineering help career‑switchers and AI‑adjacent roles?

For career-switchers entering the 2026 job market, an AI Prompt Engineering Roadmap serves as a critical low-code interface to advanced AI workflows. This is particularly relevant for software engineers and students who need to prototype data analysis, code generation, and documentation workflows rapidly.

Rather than waiting for full-stack machine learning pipelines, a developer utilizing a strategic AI Prompt Engineering Roadmap can orchestrate complex agentic behaviors immediately.

For non-technical knowledge workers, the impact is equally transformative. A disciplined AI Prompt Engineering Roadmap enables the automation of up to 70–80% of routine drafting, research synthesis, and basic triage tasks. However, this level of leverage assumes a mastery of clear constraints and iterative refinement—skills built directly through the roadmap’s progression.

As organizations increasingly treat “prompt-readiness” as a fundamental job-design assumption, comparable to spreadsheet fluency, following a comprehensive AI Prompt Engineering Roadmap becomes a core career strategy for those aiming to build high-leverage skill systems and scale their professional value.

How can you position yourself for AI‑Overview and AEO‑ready content?

Answer Engine Optimization (AEO) is the practice of engineering content so AI-driven search engines can reliably extract, synthesize, and cite your key facts. To improve the odds that content such as an AI Prompt Engineering Roadmap is surfaced in these AI overviews, you must shift from traditional narrative writing to a structured, data-first architecture.

By integrating the following AEO tactics into your AI Prompt Engineering Roadmap, you increase the probability of your content being selected as a primary “answer-block” source:

- Factual Answer Blocks: Position clear, high-signal definitions and direct answers immediately following explicit headings or questions. This mirrors how AI models retrieve information during the synthesis phase of an overview.

- Structured Patterns: Utilize industry-standard formatting, such as comparison tables and numbered workflows. An AI Prompt Engineering Roadmap that uses these patterns provides “liftable” data that AI models can easily ingest and present to users.

- Entity Anchoring: Anchor your technical claims with authoritative entities—such as Google Cloud, IBM, or OpenAI—rather than relying on anecdotal assertions. AI search engines prioritize content that demonstrates high E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) by referencing established industry leaders.

Combining this technical AEO strategy with the practical rigor of an AI Prompt Engineering Roadmap ensures your content provides both the conceptual structure and the concrete examples that modern answer engines require. This approach allows you to build a high-leverage content system that captures traffic from the next generation of AI-mediated search.

What is the AI Prompt Engineering Roadmap?

The AI Prompt Engineering Roadmap is a structured, strategic path that guides you from a baseline understanding of how LLMs function to the advanced design, testing, and automation of prompts for real-world professional tasks. This framework is specifically built to transition learners from casual AI interaction into the engineering of repeatable, measurable workflows.

How long does it take to become competent?

Achieving professional competency—the ability to produce consistent, reliable outputs for complex tasks—typically requires 4–12 weeks of deliberate practice within an AI Prompt Engineering Roadmap. While foundational skills can be acquired quickly, becoming job-ready for specialized AI-adjacent roles often necessitates several months of portfolio-building and domain-specific implementation.

Do you need to code to practice prompt engineering?

No coding experience is required to begin an AI Prompt Engineering Roadmap. You can master core logic using natural-language interfaces like ChatGPT, Claude, or Google Gemini.

However, as you progress through the professional implementation phase of your AI Prompt Engineering Roadmap, basic scripting skills become a high-leverage asset for integrating prompts into automation pipelines and APIs.

What are the main risks of poor prompt engineering?

Failing to follow a disciplined AI Prompt Engineering Roadmap leads to inconsistent outputs, hallucinated content, and significant workflow bottlenecks that require intensive manual rework. Furthermore, unconstrained prompts increase the risk of amplifying model bias or creating privacy vulnerabilities if the input data is not properly audited and constrained.
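As one illustration of auditing input data before it reaches a model, the sketch below masks email addresses and phone numbers with regular expressions. The patterns are deliberately simple assumptions: they will miss many formats of personal data and may also mask other long digit runs such as dates, so treat this as a starting point for a review step rather than a complete privacy control.

```python
import re

# Deliberately simple patterns (assumptions): common email and phone formats only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Mask emails first, then phone-like digit runs, before the text is sent to a model."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


print(redact("Contact jane.doe@example.com or +1 (555) 123-4567 about the invoice."))
```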

How can you measure if a prompt is “good”?

Within a rigorous AI Prompt Engineering Roadmap, a “good” prompt is defined by its ability to produce accurate, on-format, and reproducible outputs across a diverse set of inputs.

Professional practitioners validate their AI Prompt Engineering Roadmap outcomes by tracking key metrics—such as correctness rate, hallucination rate, and time-to-usable-output—using structured logs or simple spreadsheets to ensure industry-standard rigor.
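If your log lives in a spreadsheet, exporting it as CSV is enough to compute these metrics with a few lines of Python. The column names, the yes/no scoring convention, and the four sample rows are hypothetical; the correctness and hallucination judgments themselves still come from a human reviewer or a separate automated check.

```python
import csv
import io
from statistics import median

# Hypothetical log exported from a spreadsheet: one row per test run, scored by a reviewer.
LOG_CSV = """case_id,correct,hallucinated,seconds_to_usable
1,yes,no,40
2,no,yes,300
3,yes,no,55
4,yes,no,35
"""


def load_runs(csv_text):
    """Parse the yes/no columns into booleans and the timing column into seconds."""
    return [
        {
            "correct": row["correct"].strip().lower() == "yes",
            "hallucinated": row["hallucinated"].strip().lower() == "yes",
            "seconds_to_usable": float(row["seconds_to_usable"]),
        }
        for row in csv.DictReader(io.StringIO(csv_text))
    ]


def summarize(runs):
    """Correctness rate, hallucination rate, and median time-to-usable-output."""
    n = len(runs)
    return {
        "correctness_rate": sum(r["correct"] for r in runs) / n,
        "hallucination_rate": sum(r["hallucinated"] for r in runs) / n,
        "median_seconds_to_usable": median(r["seconds_to_usable"] for r in runs),
    }


print(summarize(load_runs(LOG_CSV)))
```

The median is used for timing because a single very slow run would distort a mean.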

In Conclusion

Mastering the AI Prompt Engineering Roadmap is the definitive path to transitioning from a casual user to a high-leverage AI orchestrator. By applying the frameworks discussed, you ensure that your AI interactions move beyond trial-and-error and into the realm of professional-grade systems.

Key takeaways from this AI Prompt Engineering Roadmap include:

- Systematic Practice: Prompt engineering is a structured technical discipline, not informal chat; it must be treated as a repeatable, testable workflow.

- Phased Progression: A robust AI Prompt Engineering Roadmap requires a foundation in literacy, mastery of high-leverage patterns (CoT, Few-shot), and professional implementation through versioned libraries.

- Measurable Leverage: When following a disciplined AI Prompt Engineering Roadmap, well-designed prompts can automate up to 70–80% of routine drafting, research synthesis, and triage work, provided strict constraints and validation protocols are in place.

Immediate Action Plan

To capitalize on your AI Prompt Engineering Roadmap, identify three core tasks in your current daily workflow—such as research summarization, communication drafting, or technical triage. Transform each into a standardized prompt pattern and execute 10–20 test cases using a simple scorecard to measure accuracy, hallucination rates, and format compliance.

Completing this final stage of the AI Prompt Engineering Roadmap will shift you from merely “using AI” to engineering a first-mover advantage that scales your professional impact forever.