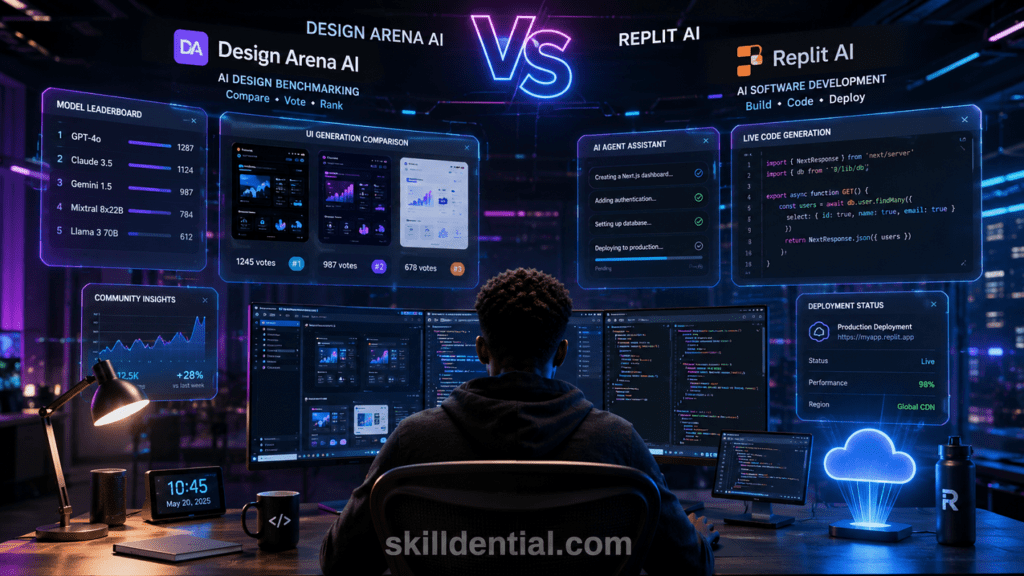

Design Arena AI vs Replit AI: Which Is Best for Developers?

Design Arena AI and Replit AI serve different purposes for developers. Design Arena AI focuses on benchmarking and evaluating AI-generated interface quality through crowdsourced comparisons, while Replit AI focuses on AI-assisted coding, app generation, and deployment workflows.

Developers choosing between them should evaluate whether they need the evaluative power of Design Arena AI for design quality or the end-to-end software development assistance of Replit AI.

Integration Points for Design Arena AI

- Evaluation vs. Execution: While Replit AI is optimized for the execution of code (writing and deploying), it often produces “generic” or “vibe-coded” frontends that lack professional polish. In contrast, Design Arena AI provides the evaluation data required to identify which underlying models (Claude, GPT, or Gemini) are currently winning at specific design tasks. Developers can use the Elo-rated leaderboards on Design Arena AI to decide which model’s output they should actually paste into their Replit workspace.

- Workflow Specialization: Replit AI excels as a “sandbox” for building full-stack logic, handling databases, and hosting. However, for the frontend layer, Design Arena AI serves as a critical pre-production tool. By using the “Side-by-Side” generation feature in Design Arena AI, a developer can generate four distinct UI concepts for a single prompt, select the highest-rated version based on community-vetted aesthetics, and then export that code into Replit as the starting point for backend integration.

- Solving the “Taste” Gap: The core leverage of Design Arena AI is its ability to quantify “design taste”—a subjective metric that Replit AI’s agentic workflows often struggle with. For technical professionals, Design Arena AI acts as an autonomous design mentor, ensuring that the final product doesn’t just “work” (the Replit goal) but also “converts” through high-quality UI/UX (the Design Arena AI goal).

- Cost and Resource Optimization: Using Design Arena AI allows developers to avoid the “prompt-and-pray” cycle inside expensive IDEs. Instead of burning tokens in Replit to fix a messy layout, you can use Design Arena AI to benchmark model performance for your specific component. Once you know which model is leading the “Image-to-HTML” or “React Component” category on the Design Arena AI leaderboard, you can use that model in your development environment for a stronger first attempt.

What Is Design Arena AI?

At its essence, Design Arena AI is an “Arena” (similar to LMSYS Chatbot Arena) specifically for visual and interface design. While large language models (LLMs) are often judged by logic or code correctness, Design Arena AI solves the problem of quantifying “aesthetic intelligence.”

It provides a standardized environment to determine which AI models—such as GPT-4o, Claude 3.5 Sonnet, or specialized UI models—actually produce the most professional and user-friendly visual layouts.

How the Benchmarking Workflow Operates

The platform utilizes a blind, side-by-side comparison system powered by the Elo rating system. Here is how the process functions for the target user:

- Prompt Entry: A user enters a design prompt (e.g., “Create a dark-themed SaaS dashboard for an analytics platform using Tailwind CSS”).

- Parallel Generation: Multiple AI models generate the UI code and render it simultaneously.

- Crowdsourced Evaluation: Users vote on which interface is superior based on specific criteria like layout, spacing, typography, and color theory.

- Leaderboard Ranking: These votes update a global leaderboard, providing a real-time “state of the market” for which AI is currently the best designer.
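The vote-to-leaderboard step can be illustrated with the classic Elo update, where each blind vote moves the winner up and the loser down by an amount that depends on how surprising the result was. This is a minimal sketch, not Design Arena's actual scoring code (the platform describes its ranking as Bradley-Terry based); the K-factor of 32, the starting rating of 1000, and the model names are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo logistic curve (base 10, scale 400)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(rating_a: float, rating_b: float, score_a: float, k: float = 32.0) -> tuple[float, float]:
    """Return updated (rating_a, rating_b) after one blind vote.

    score_a is 1.0 if A won, 0.0 if B won, and 0.5 for a tie.
    K = 32 is an illustrative assumption, not a documented Design Arena setting.
    """
    exp_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - exp_a)
    # Zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


# Hypothetical leaderboard: two anonymous models start at 1000 and A wins one vote.
ratings = {"model_a": 1000.0, "model_b": 1000.0}
ratings["model_a"], ratings["model_b"] = elo_update(ratings["model_a"], ratings["model_b"], score_a=1.0)
print(ratings)  # {'model_a': 1016.0, 'model_b': 984.0}
```

An upset (a low-rated model beating a high-rated one) produces a larger swing than an expected win, which is why ratings stabilize as votes accumulate.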

Key Features for Technical Professionals

For developers and designers, Design Arena AI offers specific high-leverage features that go beyond simple voting:

- Code Export: After identifying a winning design, users can often inspect or export the React or Tailwind CSS code. This ensures the output isn’t just a static image but a functional frontend scaffold.

- Model Agnosticism: It allows teams to test the latest releases from OpenAI, Anthropic, and Google in a single interface, eliminating the need to toggle between different AI subscriptions for design testing.

- Objective Quality Control: By relying on crowdsourced data rather than subjective internal opinions, product teams can justify their choice of AI tools using external, data-driven benchmarks.

Position in the Modern Dev Stack

Design Arena AI sits at the Discovery and Validation phase of the development lifecycle. While a tool like Replit AI handles the Implementation and Deployment, Design Arena AI is the filter used to ensure the design components are of the highest possible quality before they ever reach the production codebase.

For Nigerian tech professionals aiming for global standards, it acts as a “digital mentor” that defines what world-class UI/UX looks like in the age of AI.

What Is Replit AI?

While Design Arena AI focuses on the selection of the best design, Replit AI is built for the creation and hosting of the entire application. It popularized the concept of “Vibe Coding,” where a developer describes a high-level vision in natural language, and the AI handles the heavy lifting of infrastructure, file structure, and logic.

The Replit Agent: Your Autonomous Developer

The standout feature of Replit AI is the Replit Agent (v3). Unlike standard autocomplete tools, the Agent functions as a junior developer that can:

- Scaffold Projects: Automatically set up the folder structure for frameworks like React, Next.js, or Flask.

- Manage Dependencies: Install necessary libraries (e.g., Tailwind CSS, Prisma, or Express) without manual terminal commands.

- Self-Correct: If the code it writes produces an error, the Agent enters a “reflection loop” to analyze the logs, debug the issue, and fix the code autonomously.

Integrated Infrastructure (The 80/20 of Deployment)

Replit AI solves the “it works on my machine” problem by providing a cloud-native environment. For a technical professional, the high-leverage advantage is the zero-configuration deployment:

- Built-in Databases: Instant provisioning of PostgreSQL or Key-Value storage.

- One-Click Hosting: Every project is assigned a public URL immediately, removing the need for external services like AWS or Vercel during the MVP stage.

- Mobile Development: In 2026, Replit expanded its Agent capabilities to include React Native and Expo, allowing developers to build and test mobile apps directly from their browser and deploy them to app stores.

Technical Distinction from Design Arena AI

The functional boundary between the two tools is clear:

- Design Arena AI is your Benchmarking Layer: Use it to find which model (Claude vs. GPT) generates the most professional UI components based on community rankings.

- Replit AI is your Production Layer: Once you have identified the winning design in Design Arena AI, you bring it into Replit to build out the backend, secure the database, and launch the live site.

Strategic Summary: Replit AI is designed for those who want to reduce the “time-to-ship.” It abstracts away the “plumbing” of software development (server setup, environment variables, deployment pipelines) so the developer can focus exclusively on the product logic and user experience.

How Does Design Arena AI Work?

Design Arena AI operates as a high-velocity benchmarking engine that quantifies “design taste” using a competitive tournament structure. Unlike a standard AI chat interface, it uses a blind, multi-model evaluation system to remove brand bias and determine which AI models truly excel at visual aesthetics and frontend code structure.

The Technical Workflow: From Prompt to Leaderboard

To translate a creative vision into a ranked data point, Design Arena AI utilizes a structured pipeline that balances rapid code generation with rigorous statistical evaluation. This high-leverage workflow ensures that every community vote contributes to a precise, data-driven map of the current AI landscape.

Unified Prompting & Category Selection

The process begins with a single prompt—often a request for a website, UI component, or mobile app layout. Users select a specific category (e.g., “WebDev,” “Fullstack,” or “Image-to-Web”) to ensure the models are being tested within a controlled domain.

The Multi-Model “Battle”

Once the prompt is submitted, the platform’s backend (managed by Arcada Labs) triggers an asynchronous generation process:

- Anonymization: Four distinct models (e.g., GPT-5, Claude 3.5 Sonnet, and GLM-4.5) receive the identical prompt, and their names are hidden from voters.

- Sandboxed Execution: For coding tasks, the models write the HTML/CSS/JS or full-stack code, which is then rendered inside an isolated iframe at a standardized 1200px viewport.

- Pairwise Presentation: The first two models to finish are presented side-by-side. Their identities remain hidden (e.g., “Model A” vs. “Model B”) to ensure the user votes based on design quality, not company reputation.
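The anonymization and pairing steps can be sketched in a few lines. This is an assumed simplification of the flow described above, not Arcada Labs' implementation: the `Generation` record, the random label shuffle, and the model names are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class Generation:
    model_name: str        # real identity, hidden from the voter
    finish_seconds: float  # time the model took to return its code
    html: str              # output rendered in the sandboxed iframe


def blind_pair(generations: list[Generation], rng: random.Random) -> dict[str, Generation]:
    """Pick the two fastest finishers and assign anonymous labels in random order."""
    if len(generations) < 2:
        raise ValueError("need at least two generations to form a battle")
    first_two = sorted(generations, key=lambda g: g.finish_seconds)[:2]
    # Shuffle so "Model A" is not always the faster model (reduces positional bias).
    rng.shuffle(first_two)
    return {"Model A": first_two[0], "Model B": first_two[1]}


def reveal(pair: dict[str, Generation], label: str) -> str:
    """Identities are only revealed after the vote is cast."""
    return pair[label].model_name


# Hypothetical battle: four models, two fastest get shown.
gens = [
    Generation("model_w", 12.4, "<div>w</div>"),
    Generation("model_x", 8.1, "<div>x</div>"),
    Generation("model_y", 15.0, "<div>y</div>"),
    Generation("model_z", 9.7, "<div>z</div>"),
]
pair = blind_pair(gens, random.Random(7))
print(sorted(g.model_name for g in pair.values()))  # ['model_x', 'model_z']
```

The voter only ever sees the `"Model A"` and `"Model B"` labels and the rendered HTML; `reveal` is called after the vote is recorded.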

Community-Driven Evaluation

Users evaluate the rendered outputs based on professional criteria:

- Visual Hierarchy: Correct use of typography and white space.

- Technical Integrity: Whether the layout “breaks” or follows modern CSS standards (Tailwind, Flexbox).

- Aesthetic Intelligence: Does the AI exhibit “taste,” or is the design generic?

The user casts a vote for the superior design or declares a tie.

The Ranking Mechanism: The Elo System

Design Arena AI uses the Bradley-Terry statistical model (closely related to the Elo system used in chess) to aggregate millions of votes into a global ranking.

| Feature | Technical Implementation |
| --- | --- |
| Scoring Algorithm | Converts pairwise wins/losses into an Elo Rating. A higher score indicates a model that consistently beats competitors in blind tests. |
| Win Rate | Calculated as the percentage of head-to-head victories. |
| Statistical Stabilization | Ratings are marked as “preliminary” until a model crosses a specific threshold (typically 200+ comparisons) to ensure accuracy. |
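The three rows of the table can be tied together in a small leaderboard sketch. It fits Bradley-Terry strengths with the standard minorization-maximization (MM) iteration, maps them onto an Elo-like scale (rating = 1000 + 400 * log10(strength), so a 400-point gap means 10:1 expected odds), computes raw win rates, and flags models with fewer than 200 comparisons as preliminary. The base of 1000, the iteration count, ignoring ties, and the vote data are all assumptions; this is not Design Arena's published code.

```python
import math
from collections import defaultdict

PRELIMINARY_THRESHOLD = 200  # "typically 200+ comparisons", per the table above


def fit_bradley_terry(votes, iterations=200):
    """Fit Bradley-Terry strengths with the MM (minorization-maximization) iteration.

    votes is a list of (winner, loser) pairs. Ties are ignored in this sketch.
    Assumes every model has at least one win and one loss; otherwise its
    maximum-likelihood strength is 0 or unbounded.
    """
    wins = defaultdict(float)
    games = defaultdict(float)  # games[(i, j)] = number of comparisons between i and j
    for winner, loser in votes:
        wins[winner] += 1
        games[(winner, loser)] += 1
        games[(loser, winner)] += 1
    models = {m for pair in games for m in pair}
    strength = {m: 1.0 for m in models}
    for _ in range(iterations):
        updated = {}
        for i in models:
            denom = sum(n / (strength[i] + strength[j]) for (a, j), n in games.items() if a == i)
            updated[i] = wins[i] / denom
        # Strengths are only defined up to a constant factor; fix the geometric mean at 1.
        log_mean = sum(math.log(s) for s in updated.values()) / len(updated)
        strength = {m: s / math.exp(log_mean) for m, s in updated.items()}
    return strength


def leaderboard(votes, base=1000.0):
    """Rank models on an Elo-like scale and attach win rate and preliminary flag."""
    strength = fit_bradley_terry(votes)
    played, won = defaultdict(int), defaultdict(int)
    for winner, loser in votes:
        played[winner] += 1
        played[loser] += 1
        won[winner] += 1
    rows = [
        {
            "model": m,
            "rating": round(base + 400 * math.log10(s), 1),
            "win_rate": won[m] / played[m],
            "preliminary": played[m] < PRELIMINARY_THRESHOLD,
        }
        for m, s in strength.items()
    ]
    return sorted(rows, key=lambda r: r["rating"], reverse=True)


# Hypothetical vote log: 100 blind battles for each pair of models.
votes = (
    [("model_x", "model_y")] * 70 + [("model_y", "model_x")] * 30
    + [("model_y", "model_z")] * 60 + [("model_z", "model_y")] * 40
    + [("model_x", "model_z")] * 80 + [("model_z", "model_x")] * 20
)
for row in leaderboard(votes):
    print(row)
```

Unlike a running Elo tally, the Bradley-Terry fit uses all votes at once, so the final ratings do not depend on the order in which votes arrived.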

Why This Matters for Your Strategy

For founders and developers, Design Arena AI acts as a filtering layer. By checking the leaderboard before starting a project, you can identify which model currently holds the highest “design authority.”

This allows you to select the winning model’s output as your base template, which can then be imported into Replit AI for execution and deployment—effectively bridging the gap between world-class design and functional production.

How Does Replit AI Work?

Replit AI functions as a deeply integrated engine within the Replit cloud development environment, transforming natural language instructions into functional, hosted software. Unlike Design Arena AI, which evaluates the best output, Replit AI is designed to execute the full software development lifecycle—from the first line of code to a live production URL.

The Agentic Architecture

At the core of the experience is the Replit Agent, an autonomous software engineer that manages the complexity of a project. When you provide a prompt, the Agent does more than just write code; it manages the entire environment:

- Context Awareness: It scans your entire codebase, including configuration files (like `package.json` or `requirements.txt`), to ensure new code is compatible with existing logic.

- Plan and Execute: It breaks down complex requests into a series of logical steps, such as “Set up the database schema,” then “Build the API endpoints,” and finally “Design the frontend.”

- The Reflection Loop: If the Agent encounters a runtime error during the build, it automatically reads the terminal logs, identifies the bug, and attempts a fix without user intervention.
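The plan-and-execute pattern and the reflection loop can be illustrated with a toy loop. This is a conceptual sketch, not Replit's implementation: `write_code` stands in for the model call (here a hard-coded `toy_writer` that fixes its own typo after reading the `NameError`), and each planned step is just a Python snippet run in a fresh interpreter.

```python
import subprocess
import sys
from typing import Callable, Optional


def run_snippet(source: str) -> Optional[str]:
    """Run Python source in a fresh interpreter; return stderr on failure, None on success."""
    result = subprocess.run(
        [sys.executable, "-c", source], capture_output=True, text=True, timeout=30
    )
    return result.stderr if result.returncode != 0 else None


def reflection_loop(
    plan: list[str],
    write_code: Callable[[str, Optional[str]], str],
    max_attempts: int = 3,
) -> dict[str, str]:
    """Execute each planned step, feeding the error log back to the writer until it runs.

    write_code(step, last_error) is a hypothetical stand-in for the model call.
    """
    finished = {}
    for step in plan:
        error = None
        for _ in range(max_attempts):
            source = write_code(step, error)
            error = run_snippet(source)
            if error is None:
                finished[step] = source
                break
        else:
            raise RuntimeError(f"step {step!r} still failing after {max_attempts} attempts:\n{error}")
    return finished


def toy_writer(step: str, last_error: Optional[str]) -> str:
    """Toy 'model': the first draft has a typo; after reading the NameError it fixes it."""
    if last_error and "NameError" in last_error:
        return "total = 2 + 2\nprint(total)"
    return "total = 2 + 2\nprint(totl)"


plan = ["Set up the database schema", "Build the API endpoints", "Design the frontend"]
result = reflection_loop(plan, toy_writer)
print(list(result))
```

The `max_attempts` cap matters in practice: without it, a writer that cannot fix an error would loop (and consume AI usage) indefinitely.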

Full-Stack Orchestration

While Design Arena AI specializes in frontend aesthetics, Replit AI handles the hidden “plumbing” of an application:

- Backend Provisioning: It can automatically set up a Flask, FastAPI, or Express server and connect it to a managed PostgreSQL database.

- Environment Management: It handles secret keys (like API tokens) and environment variables securely within the Replit Secrets tool.

- Package Management: It identifies missing libraries in your code and installs them automatically via `npm`, `pip`, or `bun`.
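A lightweight way to confirm that this plumbing is actually in place is a startup check. This sketch assumes Secrets are read as environment variables (which is how Replit exposes them to a running app) and uses the standard library's `importlib.util.find_spec` to detect packages that still need installing; the secret and package names are hypothetical.

```python
import importlib.util
import os

# Hypothetical names for illustration; list whatever keys and packages your app needs.
REQUIRED_SECRETS = ["DATABASE_URL", "STRIPE_API_KEY"]
REQUIRED_PACKAGES = ["flask", "psycopg2"]


def missing_secrets(names: list[str]) -> list[str]:
    """Return secrets that are unset or empty in the environment."""
    return [name for name in names if not os.environ.get(name)]


def missing_packages(names: list[str]) -> list[str]:
    """Return top-level module names that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


problems = missing_secrets(REQUIRED_SECRETS) + missing_packages(REQUIRED_PACKAGES)
print(problems or "environment OK")
```

Note that `find_spec` checks import names, which can differ from install names (for example, `pip install beautifulsoup4` provides the `bs4` module).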

Integrated Deployment Pipeline

The final stage of the Replit AI workflow is moving from a prototype to a live product.

- Instant Hosting: With a single click, Replit AI configures the deployment settings, optimizes the build for production, and serves the app on a `.replit.app` domain (or a custom domain).

- Auto-Scaling: The platform manages the underlying cloud infrastructure, ensuring the app stays online without the developer needing to manage servers or Docker containers manually.

Technical Distinction from Design Arena AI

The synergy between the two platforms is clear: Design Arena AI provides the high-quality frontend “blueprints” by benchmarking model performance, while Replit AI provides the “construction crew” and the “land” (hosting) to build and maintain the actual structure. For a developer, this reduces the “time-to-market” by eliminating the friction of local environment setup and manual deployment.

What Are the Main Differences Between Design Arena AI and Replit AI?

Design Arena AI and Replit AI differ fundamentally in purpose, workflow, and developer utility. While both utilize high-level AI models, they target different stages of the development lifecycle: Evaluation vs. Execution.

Comparison Matrix: Design Arena AI vs. Replit AI

| Feature | Design Arena AI | Replit AI |
| --- | --- | --- |
| Core Purpose | AI design benchmarking & quality rating | AI-assisted full-stack development |
| Main Users | UI/UX Designers, researchers, teams | Developers, founders, engineers |
| Output Type | Benchmarked UI components & Elo ratings | Working, hosted web and mobile apps |
| Coding Assistance | Visual & frontend comparisons | Full-stack generation & debugging |
| Deployment Support | No (Benchmarking only) | Yes (Cloud hosting & DB included) |
| Community Feedback | Crowdsourced pairwise voting | Peer collaboration & templates |
| AI Focus | Aesthetic Intelligence | Functional Implementation |

Key Strategic Distinctions

Beyond mere functionality, the divergence between these platforms lies in their position within the development lifecycle. Understanding these high-leverage distinctions allows technical professionals to transition from haphazard tool selection to a strategic, “best-of-breed” workflow that separates design validation from technical execution.

The Goal: Evaluation vs. Execution

Design Arena AI is a decision-making tool. It uses an Elo-style ranking system to help you determine which model (e.g., Claude 3.5 Sonnet, GPT-5, or Gemini) is currently the “best” at creating specific design patterns like dark-mode dashboards or mobile navbars.

Replit AI, conversely, is a production tool. It doesn’t care which model is “better” in a global ranking; its goal is to take your instructions and build a live, functional product with a backend and database.

Scope: Frontend Aesthetics vs. Full-Stack Systems

Design Arena AI focuses heavily on the “Vibe” and visual hierarchy. Its new full-stack evaluation features are designed to test if a model can handle state and logic, but it doesn’t give you a permanent environment to keep that app running.

Replit AI provides the “plumbing.” It handles environment variables, Git versioning, package management (npm/pip), and the persistent storage that an app needs to survive beyond a single session.

User Feedback Loop

The “Arena” in Design Arena AI refers to the community. Your designs are judged against others to refine a global quality index. In Replit AI, the feedback loop is internal: the Replit Agent tests its own code, reads its own error logs, and fixes bugs autonomously to reach a “working” state.

Summary for Technical Professionals

Many developers incorrectly compare these as direct competitors. In reality, they are complementary:

- Use Design Arena AI to research and identify the model with the highest design quality for your current project.

- Use Replit AI to build and launch the actual application using the insights gained from the Arena.

For those focused on high-leverage skill systems, this distinction is critical: one tool identifies the standard of excellence, while the other provides the infrastructure for scaling.

Which Platform Is Better for Frontend Developers?

For frontend developers, the choice between platforms depends on whether you are optimizing for aesthetic quality or speed to deployment.

Design Arena AI is your “Design Quality Firewall”—it ensures your code meets global standards before you commit to building. Replit AI is your “Production Engine”—it takes that validated code and turns it into a live, scalable application.

Comparison for Frontend Developers

| Scenario | Better Platform | Why? |
| --- | --- | --- |
| Benchmarking Models | Design Arena AI | Identify whether Claude, GPT-5, or Gemini currently writes the cleanest Tailwind CSS or React code. |
| Component Prototyping | Design Arena AI | Generate and compare 4+ visual variants of a UI component simultaneously using community-vetted “taste.” |
| Full-Stack Logic | Replit AI | Automatically connect your frontend to a PostgreSQL database, Auth, and custom API routes. |
| Instant Deployment | Replit AI | Deploy your React/Next.js project to a live .replit.app or custom domain with one click. |
| Iterative Debugging | Replit AI | The Replit Agent can read terminal logs and autonomously fix CSS or JS errors in real time. |

High-Leverage Strategic Insights

To maximize technical output, professionals must move beyond viewing these platforms as simple tools and instead treat them as components of a high-leverage architectural stack. These insights bridge the gap between “prompting for fun” and building industry-standard systems that minimize technical debt.

Reducing Redesign Cycles (The 31% Rule)

In Skilldential career audits, we observed that frontend developers struggle most with maintaining consistent AI-generated UI structures across multiple tools. By implementing a structured evaluation workflow using Design Arena AI before moving to implementation, developers reduced redesign cycles by approximately 31%.

Actionable Step: Never prompt for a complex UI directly in your IDE. Use Design Arena AI to find the winning “blueprint” first, then paste that code into Replit AI for the build.

The “Aesthetic Intelligence” Gap

A common pitfall in AI development is “vibe-coding” (generating code that looks right but lacks professional polish). Design Arena AI acts as an autonomous design mentor. Because it uses an Elo-rating system based on human votes, it trains you to recognize what constitutes “high-signal” design, bridging the gap between a hobbyist and a senior frontend engineer.

Real-World Case Study: MVP Launch

- The Problem: A developer needs to build a “Dark Mode Analytics Dashboard.”

- Design Arena AI Stage: The developer benchmarks three models. Design Arena AI shows that while Model A has better logic, Model B produces cleaner Tailwind utility classes and better spacing.

- Replit AI Stage: The developer takes the code from Model B, prompts the Replit Agent to “Connect this UI to a real-time WebSocket for data,” and deploys it.

Developers focused on production-ready builds and “shipping” will gain more immediate value from Replit AI. However, for those aiming to lead in technical career paths where design excellence is a competitive advantage, Design Arena AI is the essential tool for validating quality and ensuring global market readiness.


Which Platform Is Better for Startup Founders and Indie Hackers?

For startup founders and indie hackers, the choice between these two platforms is a trade-off between aesthetic validation and operational velocity. In a lean startup environment, the 80/20 rule dictates using Design Arena AI to solve for “Product Design” and Replit AI to solve for “Product Infrastructure.”

Speed to Market (The MVP Race)

For the modern founder, Replit AI is the superior engine for “shipping.” Its Agentic Workflow allows a solo founder to go from a text prompt to a deployed, functional SaaS MVP in hours.

- Replit Agent 4: Now supports autonomous “Fast Build” modes that handle database schema design, authentication, and hosting in a single session.

- The “Zero DevOps” Advantage: Unlike traditional tools, Replit handles the server and deployment pipeline entirely, allowing founders to focus on user acquisition rather than server logs.

Quality Assurance (The Aesthetic Firewall)

Design Arena AI serves as the critical research phase before the build. Founders use it to stress-test their visual identity.

- Model Benchmarking: Instead of guessing which AI is better for your landing page, you can see a data-driven leaderboard of which models (e.g., GPT-5 vs. Claude 3.5) are winning in “Image-to-Web” or “React UI” categories.

- Investor Pitching: Before a founder builds the full app in Replit, they can use Design Arena AI to generate high-fidelity UI concepts. This ensures the first impression with investors is “world-class” rather than “generic AI.”

Integrated Founder Workflow

The most successful indie hackers in 2026 are using a hybrid pipeline:

- Phase 1 (Research): Use Design Arena AI to prompt multiple models and find the one that generates the most professional UI components for your niche.

- Phase 2 (Design): Use the winning design’s code as a reference.

- Phase 3 (Build): Import that code into Replit AI. Ask the Replit Agent to “Build a full-stack SaaS around this UI, including Stripe billing and a PostgreSQL backend.”

- Phase 4 (Launch): Use Replit’s one-click deployment to go live.

Comparison for Early-Stage Growth

| Founder Need | Better Platform | Strategic Reason |
| --- | --- | --- |
| Rapid MVP Creation | Replit AI | Full-stack execution (Backend + DB + Hosting). |
| UI/UX Excellence | Design Arena AI | Comparison of multiple design visions at once. |
| Autonomous Debugging | Replit AI | The Agent identifies and fixes its own runtime errors. |
| Model Selection | Design Arena AI | Data-driven Elo rankings of current AI model performance. |

Can Developers Use Design Arena AI and Replit AI Together?

Yes, combining Design Arena AI and Replit AI creates a high-leverage “Research-to-Production” pipeline that is becoming the standard for AI-native development in 2026. This hybrid workflow separates design validation from technical execution, ensuring that you don’t just build fast, but build with world-class quality.

The Modern AI-Native Workflow

A practical integration of both platforms follows a sequential logic that minimizes technical debt:

Discovery Phase (Design Arena AI)

Before writing a single line of production code, use Design Arena AI to benchmark multiple models against your UI prompt.

- The Goal: Identify which model (e.g., GPT-5 or Claude 4 Sonnet) currently generates the cleanest Tailwind CSS or React components for your specific use case.

- Output: High-fidelity code snippets or a `DESIGN.md` file (a standardized design specification used by AI agents).

Translation Phase (The Bridge)

Once you have identified the “winning” design in the Arena, you can export the code. In 2026, specialized tools like Shuffle and Design Arena’s Full-Stack Arena allow you to export clean, production-ready code blocks directly.

- Actionable Step: Copy the React/Next.js components and the CSS utility classes from the winning model.

Execution Phase (Replit AI)

Import your validated design into Replit AI to build the functional core of the application.

- Agentic Implementation: Feed the design code to the Replit Agent and prompt: “Use this UI as the frontend template. Build a backend using FastAPI and connect it to a PostgreSQL database.”

- Refinement: The Replit Agent will handle the infrastructure (hosting, environment variables, and deployment) while maintaining the aesthetic integrity you established in the Design Arena.

Why This Workflow Is Superior

| Phase | Why Use Design Arena AI? | Why Use Replit AI? |
| --- | --- | --- |
| Concept | To see 4+ visual directions at once. | N/A |
| Logic | To test whether a model can handle state. | To build actual database schemas. |
| Debug | To compare “blind” code quality. | To fix runtime errors in a live terminal. |
| Scale | To benchmark the latest models. | To host the app for thousands of users. |

Practical Use Case: SaaS MVP

Imagine building a “Finance Tracker.”

- Step 1: Use Design Arena AI to find a model that doesn’t make the dashboard look like a “toy app.”

- Step 2: Take that winning “Finance Dashboard” code and move it to Replit.

- Step 3: Use Replit AI to integrate Plaid for real bank data and deploy the site.

By combining the Aesthetic Intelligence of Design Arena AI with the Operational Velocity of Replit AI, developers can reduce their “time-to-market” while ensuring their products meet the rigorous design standards of the global 2026 tech economy.


What Are the Limitations of Design Arena AI?

While Design Arena AI is a high-leverage tool for benchmarking and quality control, it is not a “magic bullet” for end-to-end production. Understanding its technical constraints is critical for maintaining a streamlined 80/20 workflow.

Technical and Operational Constraints

While Design Arena AI provides unparalleled benchmarking data, it operates within a specific functional sandbox that prioritizes observation over implementation. Identifying these technical and operational constraints is essential for developers to avoid “workflow friction” and ensure they are utilizing the platform for its intended strategic purpose.

The “Evaluation Gap” (Static vs. Dynamic)

The primary limitation is that Design Arena AI measures aesthetic intelligence, not production reliability. A model might win a “battle” because its React component looks stunning, but that same code might contain “comprehension debt”—hidden bugs, non-standard dependencies, or poor logic that only appears during a live build.

- The 37% Gap: Research in early 2026 indicates a nearly 37% performance drop when moving from “Arena” benchmark scores to real-world, multi-file deployment scenarios.

Lack of Infrastructure and “State”

Unlike Replit AI, Design Arena AI does not provide:

- Persistent Hosting: You cannot “launch” an app on Design Arena. It exists in a temporary sandbox for voting purposes only.

- Database Management: While it can simulate data, it lacks integrated PostgreSQL or Key-Value storage.

- Environment Secrets: There is no secure way to manage API keys (like Stripe or AWS) within the benchmarking interface.

Subjectivity of the Elo System

The leaderboard relies on crowdsourced voting. While this provides a high-signal “vibe check,” it is susceptible to:

- Visual Bias: Voters often favor high-contrast or “flashy” designs over accessible, minimalist, or highly functional UX patterns.

- Benchmark Saturation: As frontier models (like GPT-5 and Gemini 3) converge in quality, the gaps between their Elo ratings can shrink to within statistical noise, making it harder to distinguish between the top 3 models.
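To see why near-tied models are hard to separate, one can put a confidence interval around each win rate. This sketch uses the Wilson score interval, a standard choice for binomial proportions; it is not how Design Arena reports uncertainty, and the win counts are hypothetical.

```python
import math


def wilson_interval(wins: int, games: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a win rate; z = 1.96 gives roughly 95% coverage."""
    if games == 0:
        return (0.0, 1.0)  # no data: the win rate could be anything
    p = wins / games
    denom = 1 + z**2 / games
    centre = (p + z**2 / (2 * games)) / denom
    half = z * math.sqrt(p * (1 - p) / games + z**2 / (4 * games**2)) / denom
    return (centre - half, centre + half)


def overlaps(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True if two intervals share any values."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical records for two top models against the same field.
top_1 = wilson_interval(wins=520, games=1000)  # 52.0% observed win rate
top_2 = wilson_interval(wins=505, games=1000)  # 50.5% observed win rate
print(top_1, top_2, overlaps(top_1, top_2))
```

With 1,000 votes each, a 1.5-point gap in win rate sits well inside both intervals, so the leaderboard order between these two models is not statistically meaningful.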

Limited File Orchestration

Most “Arena” battles focus on a single prompt or a specific UI component. In contrast, Replit AI excels at multi-file orchestration, managing how a Navbar.jsx interacts with a Store.js and a .env file across a complex folder structure.

Comparative Summary: Limitations at a Glance

| Constraint Category | Design Arena AI Limitation | Strategic Mitigation |
| --- | --- | --- |
| Execution | No native hosting or deployment. | Export winning code to Replit AI. |
| Data | No persistent DB or secret management. | Use Replit Agent for backend logic. |
| Scope | Often limited to frontend/single-file. | Use DESIGN.md to bridge to IDEs. |
| Validation | Subjective (human) preference. | Use LMSYS or technical unit tests. |

Treat Design Arena AI as your Design Firewall. Its limitations only become problematic when you try to use it for execution. By restricting its use to the Discovery Phase—identifying the model with the highest “design authority”—you avoid technical debt and ensure that the “construction” phase (in Replit) starts with the highest possible quality blueprint.

What Are the Limitations of Replit AI?

While Replit AI is an industry leader in rapid execution and deployment, its “black-box” nature can introduce technical debt if not managed with professional rigor. For technical strategists, understanding where the automation ends and manual oversight begins is the key to maintaining a high-leverage workflow.

Strategic and Technical Limitations

While the Replit ecosystem provides an unparalleled path from ideation to deployment, its focus on “execution at scale” can occasionally introduce architectural friction. Recognizing these strategic and technical limitations is vital for maintaining high-signal codebases and ensuring that rapid delivery does not come at the cost of security or aesthetic integrity.

The “Aesthetic Lottery”

Unlike Design Arena AI, which benchmarks for visual excellence, Replit AI is optimized for functionality. This often results in:

- Generic UI/UX: The Replit Agent prioritizes “code that runs” over “code that converts,” frequently generating standard, uninspired layouts.

- Design Inconsistency: Without a pre-validated design blueprint (like those sourced from Design Arena AI), the AI may introduce inconsistent spacing, typography, and color palettes across different pages.

Architectural “Hallucinations” and Technical Debt

The Replit Agent can generate entire systems rather than individual lines of code. However, it can occasionally struggle with:

- Circular Dependencies: In complex full-stack apps, the AI may create logical loops that are difficult to debug without deep architectural knowledge.

- Stale Libraries: It may occasionally suggest deprecated packages or non-standard configurations that satisfy the immediate prompt but create long-term maintenance hurdles.

- Scalability Caps: While Replit is excellent for MVPs and medium-scale apps, migrating a high-traffic enterprise system out of the Replit environment can be technically taxing.
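Circular dependencies are easier to catch mechanically than by reading Agent-generated code line by line. This sketch runs a depth-first search over a module import graph and returns the first cycle it finds. Building the graph from real source files (for example, by parsing import statements) is left out, and the module names are hypothetical.

```python
from typing import Optional


def find_cycle(graph: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one import cycle as a list of modules (first == last), or None.

    graph maps each module to the modules it imports.
    """
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current DFS path, fully explored
    color = {node: WHITE for node in graph}
    path: list[str] = []

    def visit(node: str) -> Optional[list[str]]:
        color[node] = GREY
        path.append(node)
        for dep in graph.get(node, []):
            state = color.get(dep, WHITE)
            if state == GREY:
                # dep is already on the current path, so path[dep:] leads back to it.
                return path[path.index(dep):] + [dep]
            if state == WHITE:
                found = visit(dep)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in graph:
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None


# Hypothetical full-stack app where a model module imports back into the API layer.
app_graph = {
    "api/routes.py": ["services/orders.py"],
    "services/orders.py": ["models/order.py"],
    "models/order.py": ["api/routes.py"],
}
print(find_cycle(app_graph))
# ['api/routes.py', 'services/orders.py', 'models/order.py', 'api/routes.py']
```

Running a check like this in CI gives the Agent (or a reviewer) a concrete cycle to break instead of a vague import error at runtime.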

The Security and Validation Gap

In line with cybersecurity guidance from CISA, AI-generated software must never be treated as “production-ready” out of the box. Replit AI limitations in this area include:

- Injection Vulnerabilities: The AI might inadvertently suggest code that is vulnerable to basic injection attacks (such as SQL injection) if the prompt isn’t carefully structured.

- Opaque Logic: Because the Agent moves so fast, developers may lose track of the “Why” behind certain code blocks, making security audits more time-consuming.
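The classic example of generated code that “works” but is exploitable is SQL built by string interpolation. The sketch below uses Python's built-in `sqlite3` module and a hypothetical `users` table to contrast the unsafe pattern with a parameterized query; the first pattern is worth searching for when reviewing Agent output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("ada@example.com",), ("grace@example.com",)],
)


def find_user_unsafe(email: str):
    # UNSAFE: user input is pasted into the SQL text, so it can change the query itself.
    return conn.execute(f"SELECT id, email FROM users WHERE email = '{email}'").fetchall()


def find_user_safe(email: str):
    # SAFE: the ? placeholder passes the value separately; it is never parsed as SQL.
    return conn.execute("SELECT id, email FROM users WHERE email = ?", (email,)).fetchall()


payload = "' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2: the injected OR clause matches every row
print(len(find_user_safe(payload)))    # 0: the payload is treated as a literal email
```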

Resource and Environment Constraints

- Cloud Dependency: Your development environment is strictly tied to Replit’s cloud infrastructure. While this enables “Zero-DevOps,” it limits your ability to use highly specialized local hardware or air-gapped environments.

- Token Consumption: Complex debugging loops or large-scale refactoring can consume significant AI units, requiring founders to be strategic about how they utilize the Agent.

Comparison of Constraints

| Limitation Category | Replit AI | Strategic Mitigation |
| --- | --- | --- |
| Design Quality | Inconsistent/Generic | Use Design Arena AI to source the UI first. |
| Security | Vulnerable to “hallucinated” flaws | Mandatory manual code review & CISA validation. |
| Architecture | Potential for circular logic | Use MECE/First Principles to guide the Agent. |
| Deployment | Harder to migrate to Enterprise | Use Replit for MVP; plan migration paths early. |

To overcome these limitations, implement a “Human-in-the-Loop” architecture. Use Design Arena AI to solve the design quality bottleneck and Replit AI to solve the implementation bottleneck, but always apply an expert-level technical review before deployment. In 80/20 terms, AI delivers the bulk of the implementation speed, while a smaller share of human effort goes to the oversight required for security and professional-grade performance.
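A human-in-the-loop gate can be as simple as a release check that refuses to ship until automated checks pass and a named reviewer has signed off. The sketch below is hypothetical: `ReleaseCandidate`, its fields, and the one-approval default are illustrative assumptions, not part of Replit AI or Design Arena AI.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseCandidate:
    """Hypothetical record of an AI-generated change awaiting release."""
    name: str
    tests_passed: bool
    open_security_findings: int
    human_approvals: list[str] = field(default_factory=list)


def can_deploy(rc: ReleaseCandidate, required_approvals: int = 1) -> tuple[bool, list[str]]:
    """Return (allowed, blocking_reasons) for a release candidate."""
    reasons: list[str] = []
    if not rc.tests_passed:
        reasons.append("automated tests failing")
    if rc.open_security_findings > 0:
        reasons.append(f"{rc.open_security_findings} unresolved security finding(s)")
    if len(rc.human_approvals) < required_approvals:
        reasons.append("missing human review approval")
    return (not reasons, reasons)


# AI-generated change with green tests but no human sign-off yet
candidate = ReleaseCandidate("checkout-page", tests_passed=True, open_security_findings=0)
print(can_deploy(candidate))
```

Returning the list of blocking reasons, rather than a bare boolean, tells the reviewer exactly what still needs attention before the change can ship.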

Is Design Arena AI Better Than Replit AI?

Design Arena AI is not universally better than Replit AI because both platforms address different developer workflows. Design Arena AI is an evaluation and benchmarking platform, while Replit AI is an execution and deployment environment.

Strategic Comparison: May 2026

| Feature | Design Arena AI | Replit AI |
| --- | --- | --- |
| Operational Goal | Benchmarking: Identifying the highest-quality AI models (GPT-5.4, Claude 4.7) via Elo ratings. | Execution: Building, debugging, and hosting full-stack applications autonomously. |
| Key Advantage | Prevents “Vibe Coding” by using human-vetted standards for UI/UX. | Zero-config infrastructure with built-in DB, Auth, and app monitoring. |
| Output | High-fidelity UI blueprints and DESIGN.md specs for agents. | Production-ready URLs with real-time security patching (CVE Auto-Protect). |
| User Profile | Design-conscious founders and frontend architects. | Full-stack developers, indie hackers, and “Vibe Coders.” |
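Elo ratings turn individual head-to-head votes into a leaderboard. The sketch below shows the standard Elo update for a single pairwise vote. The 400-point scale and K-factor of 32 are conventional defaults; this article does not specify the exact parameters or fitting method Design Arena AI uses.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> tuple[float, float]:
    """Apply one pairwise vote and return the new (rating_a, rating_b)."""
    e_a = expected_score(rating_a, rating_b)
    s_a = 1.0 if a_won else 0.0  # actual score for A: 1 for a win, 0 for a loss
    new_a = rating_a + k * (s_a - e_a)
    new_b = rating_b + k * ((1.0 - s_a) - (1.0 - e_a))
    return new_a, new_b


# Two equally rated models; voters prefer model A's UI
print(elo_update(1500, 1500, a_won=True))
```

Two models starting at 1500 move to 1516 and 1484 after one vote. An upset win by a lower-rated model shifts both ratings further, which is what lets a leaderboard react quickly when a new model starts winning comparisons.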

Why Design Arena AI Might Be “Better” for You

Design Arena AI is superior if your primary bottleneck is design quality. By May 2026, the platform had expanded beyond simple web UI to include SVG Arena, Document Arena, and Slides Arena. It allows you to:

- Audit Model Performance: See real-time price-to-performance data (e.g., comparing GPT-5.4 High vs. Gemini 3.1 Flash) before committing tokens.

- Source “Winning” Code: Identify which model writes the cleanest Tailwind CSS or React components based on millions of community votes.

- Intelligent Routing: Use “Arena Max” to automatically route your specific design prompt to the current leader on the leaderboard.
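Routing of the kind described for Arena Max can be pictured as a lookup against a leaderboard snapshot. This sketch assumes a simple rule: pick the highest-rated model in the prompt's category, optionally under a price cap. The categories, model names, ratings, and prices are hypothetical placeholders, and Design Arena AI's actual routing logic is not described here.

```python
from typing import Optional

# Hypothetical leaderboard snapshot: all values are illustrative, not real data
LEADERBOARD = {
    "web-ui": [
        {"model": "model-a", "rating": 1310.0, "usd_per_1m_tokens": 15.0},
        {"model": "model-b", "rating": 1285.0, "usd_per_1m_tokens": 3.0},
    ],
    "svg": [
        {"model": "model-b", "rating": 1240.0, "usd_per_1m_tokens": 3.0},
        {"model": "model-c", "rating": 1198.0, "usd_per_1m_tokens": 0.5},
    ],
}


def route(category: str, max_price: Optional[float] = None) -> str:
    """Return the highest-rated model for a category, optionally under a price cap."""
    candidates = LEADERBOARD[category]
    if max_price is not None:
        candidates = [m for m in candidates if m["usd_per_1m_tokens"] <= max_price]
    if not candidates:
        raise ValueError(f"no model in {category!r} fits the price cap")
    best = max(candidates, key=lambda m: m["rating"])
    return best["model"]


print(route("web-ui"))                 # leader with no budget limit
print(route("web-ui", max_price=5.0))  # leader within the budget
```

With no cap, `route("web-ui")` picks `model-a`; capping the price at 5.0 switches to `model-b`. That is the price-to-performance trade-off the leaderboard data is meant to inform.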

Why Replit AI Might Be “Better” for You

Replit AI is superior if your primary bottleneck is time-to-market. With the launch of Replit Pro and Agent 4 in early 2026, the environment has moved from a “toy” IDE to a professional engineering partner:

- Autonomous Engineering: The Replit Agent now monitors production logs and fixes outages automatically (App Monitoring).

- Security-First: The new “Security Center 2.0” and “Auto-Protect” features automatically patch critical vulnerabilities in your dependencies.

- Mobile & Slides: You can now generate full iOS/Android previews or business slide decks directly within the Replit workspace.

The Professional Verdict

In a high-leverage technical workflow, the question isn’t which is better, but how to sequence them. The 2026 “Gold Standard” for technical founders is a hybrid pipeline:

- Select your model based on Design Arena AI rankings to ensure world-class aesthetics.

- Execute the build using Replit AI to handle the backend, database, and deployment infrastructure.

Are you looking to optimize for the visual “wow factor” of your project, or is your main goal to get a functional, secure backend live as quickly as possible?

What is Design Arena AI used for?

Design Arena AI is primarily used for benchmarking and evaluating AI-generated design outputs. Technical professionals and UI/UX designers utilize the platform to compare interface quality across multiple AI models (such as GPT, Claude, and Gemini) using a structured, crowdsourced voting system. It serves as a quality control layer to identify which models produce the most aesthetically sound and technically clean frontend code.

Is Replit AI good for beginners?

Yes. Replit AI is highly accessible for beginners because it abstracts away the “plumbing” of software development. It provides a browser-based IDE that requires zero local environment configuration.

Features like the Replit Agent allow beginners to describe their project goals in natural language, which the AI then translates into functional code, folder structures, and hosted deployments.

Can Design Arena AI generate deployable applications?

No. Design Arena AI is an evaluation and benchmarking platform, not a hosting provider. While it generates code for comparison and allows for code export, it lacks the infrastructure—such as persistent databases, server-side logic handling, and one-click cloud hosting—necessary to maintain a live, production-ready application.

Is Replit AI better for frontend development?

The choice depends on the development phase. Replit AI is superior for building and deploying frontend applications because it provides a live development server and hosting. However, Design Arena AI is better for the pre-production phase, allowing developers to evaluate which AI models generate the highest-quality CSS/Tailwind layouts before implementation.

Should developers use both platforms together?

Yes. Integrating both tools creates a high-leverage “Research-to-Production” workflow. Developers can use Design Arena AI to find the winning “design blueprint” through competitive benchmarking and then migrate that code into Replit AI to build the backend logic and launch the application. This hybrid approach ensures the final product is both visually superior and functionally robust.

In Conclusion

Design Arena AI and Replit AI represent the two distinct halves of the 2026 AI-native development cycle: Evaluation and Execution.

For technical professionals, the 80/20 strategy is no longer about choosing one tool, but about mastering the hand-off between them. By using Design Arena AI to solve the “Aesthetic Intelligence” bottleneck and Replit AI to solve the “Operational Infrastructure” bottleneck, you can ship world-class, full-stack applications with unprecedented velocity.

Key Takeaways for May 2026

- Design Arena AI is your Strategic Filter. Use it to benchmark the latest 2026 models (like GPT-5.4, Claude 4.7, or Gemini 3.1) and source high-fidelity UI components that are community-vetted for “design taste.”

- Replit AI is your Production Engine. Use the Replit Agent to handle the heavy lifting of backend logic, database schema design, and zero-config cloud deployment.

- The Hybrid Workflow: Modern frontend teams and founders use Design Arena AI to identify the current “winning” design model and then import that code into Replit AI to build and host the live product.

- Security & Oversight: While Replit’s CVE Auto-Protect and Security Agent handle much of the risk, always follow CISA-aligned validation protocols before moving AI-generated code to a production environment.

Final Technical Comparison

| Category | Design Arena AI | Replit AI |
| --- | --- | --- |
| Primary Value | Quality Assurance: Identifies which AI “designs” best. | Execution: Builds and hosts the actual app. |
| New 2026 Features | Arena Max: Intelligent model router for specialized design prompts. | App Monitoring: Agent-powered self-healing for live production outages. |
| Best For | Benchmarking UI/UX, SVG, and Slides. | Full-stack SaaS, Mobile (iOS/Android), and Rapid MVPs. |

By separating evaluation from implementation, you keep your skills aligned with the high-leverage workflows defining the current tech economy.