11 Innovative Edge AI Use Cases Across Modern Industries

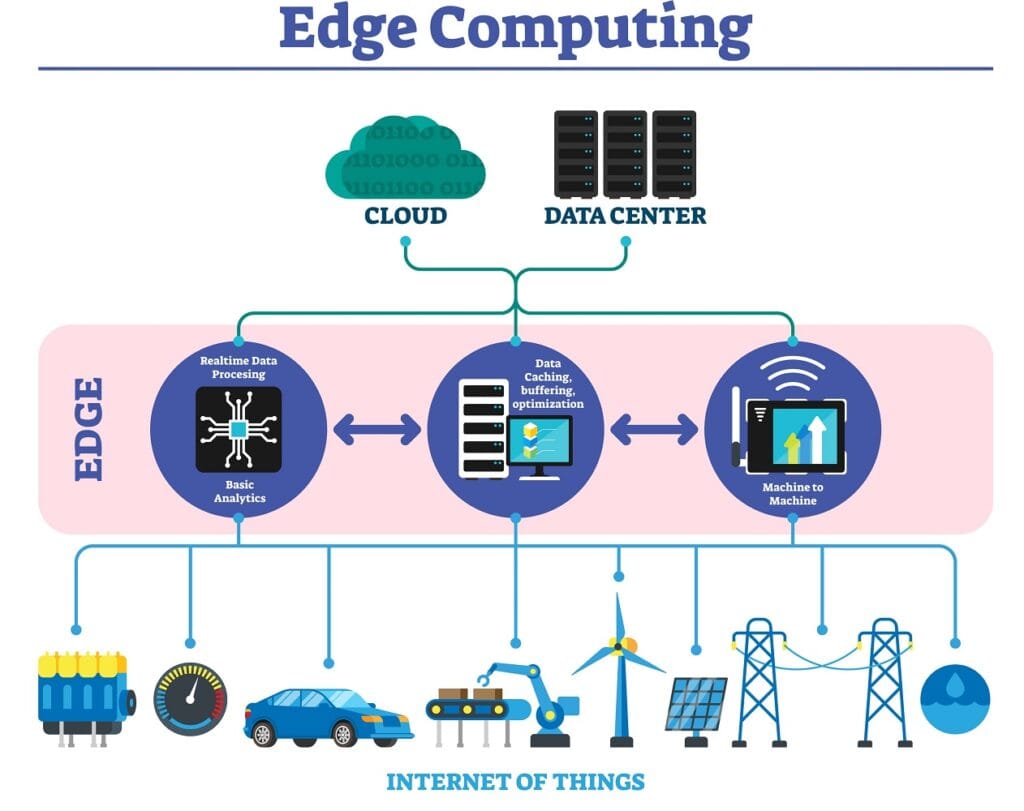

The paradigm of centralized intelligence is hitting a physical limit. While cloud-scale computing once defined the AI era, the transition to Edge AI Use Cases represents a critical shift toward decentralized, high-leverage systems.

By processing machine learning models directly on hardware—ranging from smartphones and industrial sensors to specialized IoT gateways—organizations can now bypass the inherent bottlenecks of remote data centers.

This localized approach is not merely a technical preference; it is a strategic necessity for high-performance applications. With well-optimized models on capable hardware, Edge AI Use Cases can achieve real-time inference with latencies under 10ms, eliminating the “round-trip” delay of the cloud.

Furthermore, this architecture can cut bandwidth costs by up to 90%, because raw data is filtered and analyzed at the source rather than streamed across expensive networks. The surge in Edge AI Use Cases is particularly transformative in bandwidth-constrained regions, such as Nigeria’s industrial and educational sectors, where infrastructure stability is variable.

However, achieving this level of efficiency requires a rigorous, first-principles approach to model optimization. Techniques such as quantization and pruning are no longer optional; they are the industry-standard requirements for deploying sophisticated intelligence onto resource-limited hardware.

Key Technical Pillars of Edge AI Use Cases

- Ultra-Low-Latency Inference: Critical for autonomous systems and real-time biometric security.

- Data Sovereignty: Enhancing privacy by ensuring sensitive information never leaves the local device.

- Infrastructure Resilience: Maintaining full operational capacity in offline or low-connectivity environments.

What Is Edge AI and Why Shift to Edge-First Logic?

Edge AI is the deployment of machine learning models directly onto local hardware—smartphones, sensors, or gateways—allowing for immediate data processing without a mandatory cloud round-trip. From a first-principles perspective, this shifts the “brain” of the system to where the data originates.

The Core Logic: Why “Edge-First”?

The shift to Edge-First logic is driven by three mechanical necessities: Latency, Privacy, and Cost.

- Latency (The Speed of Light Constraint): Cloud processing typically introduces a 100-500ms delay due to network hops. In high-stakes Edge AI Use Cases like autonomous navigation or industrial robotics, a 500ms delay is catastrophic. Edge AI reduces this to <10ms by utilizing on-device NPUs.

- Data Privacy (Sovereignty by Design): Processing biometrics, medical records, or proprietary industrial data locally reduces the attack surface. Sensitive data is never “in flight,” minimizing exposure to interception or cloud-side breaches.

- Operational Cost (Bandwidth Efficiency): Streaming 4K video to the cloud for analysis is expensive and inefficient. Edge-first logic dictates that devices should “analyze locally, report globally”—sending only the processed insights (e.g., “Defect Detected”) rather than raw data streams.
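The “analyze locally, report globally” pattern fits in a few lines of Python. This is a minimal illustration, not a production pipeline: `defect_score` is a hypothetical stand-in for an on-device model, and the 4K frame size and message schema are assumptions chosen only to make the bandwidth comparison concrete.

```python
import json
from dataclasses import dataclass, asdict

# One uncompressed 8-bit RGB 4K frame (3840 x 2160 pixels x 3 channels).
RAW_FRAME_BYTES = 3840 * 2160 * 3


@dataclass
class Insight:
    camera_id: str
    frame: int
    label: str
    score: float


def defect_score(frame_index: int) -> float:
    """Hypothetical stand-in for an on-device vision model.

    For illustration, every 250th frame is scored as defective."""
    return 0.97 if frame_index % 250 == 0 else 0.02


def analyze_locally(camera_id: str, num_frames: int, threshold: float = 0.5) -> list[Insight]:
    """Run inference on every frame on the device; keep only frames above the threshold."""
    insights = []
    for i in range(num_frames):
        score = defect_score(i)
        if score >= threshold:
            insights.append(Insight(camera_id, i, "Defect Detected", score))
    return insights


def report_globally(insights: list[Insight]) -> list[bytes]:
    """Serialize only the compact insights; raw frames never leave the device."""
    return [json.dumps(asdict(x)).encode("utf-8") for x in insights]


if __name__ == "__main__":
    n = 1000
    messages = report_globally(analyze_locally("line-3-cam", n))
    sent = sum(len(m) for m in messages)
    print(f"{len(messages)} messages, {sent} bytes sent vs {n * RAW_FRAME_BYTES} raw bytes")
```

Only a few hundred bytes of JSON leave the device, versus gigabytes of raw frames if every frame were streamed.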

The Infrastructure Variable: The Nigerian Context

For technical strategists in emerging markets like Nigeria, the shift to Edge AI Use Cases is a matter of uptime and resilience.

- 99.9% Uptime vs. 70%: In environments with intermittent power and variable 4G/5G stability, a cloud-dependent system is fundamentally fragile, with effective uptime closer to 70%. An Edge-First system can maintain up to 99.9% operational uptime because its core intelligence functions independently of the ISP.

- NPU Acceleration: Modern hardware (such as the MediaTek Dimensity or Qualcomm Snapdragon series prevalent in the Nigerian market) now includes dedicated NPUs. These are silicon-level components optimized specifically for the matrix multiplication required by AI, allowing for high-performance inference without draining battery or requiring massive cooling.

Technical Implementation: The Optimization Layer

Transitioning to Edge AI Use Cases requires specific technical maneuvers to fit “large” intelligence into “small” hardware:

| Technique | Function | High-Signal Outcome |
|---|---|---|
| Quantization | Converts FP32 weights to INT8. | 4x reduction in model size; significant speedup on NPUs. |
| Pruning | Removes redundant neural connections. | Lower memory footprint with minimal accuracy loss. |
| Knowledge Distillation | Trains a “student” model to mimic a “teacher” model. | Lightweight models capable of complex logic. |
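To make the quantization row concrete, here is a minimal NumPy sketch of symmetric, per-tensor INT8 weight quantization. It is one simple scheme among several (per-channel scales and asymmetric zero points are common in real toolchains such as TensorFlow Lite), and the toy weight matrix is an assumption for illustration.

```python
import numpy as np


def quantize_int8(w: np.ndarray) -> tuple[np.ndarray, float]:
    """Symmetric per-tensor INT8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = float(np.max(np.abs(w)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize_int8(q: np.ndarray, scale: float) -> np.ndarray:
    """Map INT8 codes back to approximate FP32 values."""
    return q.astype(np.float32) * scale


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.normal(0.0, 0.05, size=(256, 256)).astype(np.float32)  # a toy FP32 weight matrix
    q, scale = quantize_int8(w)
    print("size ratio:", w.nbytes / q.nbytes)  # 4 bytes per weight -> 1 byte per weight
    print("max abs error:", float(np.max(np.abs(w - dequantize_int8(q, scale)))))
```

The 4x size reduction follows directly from storing one byte per weight instead of four; the rounding error per weight is bounded by half the scale.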

By adopting this logic, organizations build systems that are not just faster, but structurally superior and more reliable in the face of infrastructure volatility.

The Imperative of Edge AI in High-Stakes Industries

For sectors where data is sensitive, or actions are time-sensitive, Edge AI Use Cases are not optional upgrades—they are fundamental requirements for regulatory compliance and physical safety.

Healthcare: Privacy and Real-Time Diagnostics

In healthcare, the transition to the edge is driven by the strict mandates of HIPAA and GDPR.

- Regulatory Privacy: Processing patient data locally ensures that sensitive Protected Health Information (PHI) never leaves the facility or device. This eliminates the risk of interception during transit to a central cloud.

- On-Device Monitoring: Wearable devices and bedside monitors use Edge AI Use Cases to detect anomalies like cardiac arrhythmias or seizures in real-time. A 500ms cloud delay could result in a missed window for life-saving intervention.

Automotive: The Sub-20ms Safety Constraint

The automotive industry operates in a “safety-critical” environment where latency equals distance.

- Safety-Critical Latency: At highway speeds, a car travels approximately 29 meters per second. A cloud-based inference delay of 200ms would mean the vehicle moves nearly 6 meters before deciding to brake. Edge AI Use Cases provide inference in under 20ms, allowing for near-instantaneous collision avoidance.

- V2X Communication: Vehicles must process sensor data from surrounding infrastructure and other cars locally to navigate complex intersections without relying on an external server that might suffer from signal drops.
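The arithmetic behind “latency equals distance” is easy to check directly. This sketch converts speed and inference latency into metres travelled; 104.4 km/h is used because it equals the 29 m/s figure above, and the latency values are illustrative. It counts only the distance covered before a decision exists, not the braking distance that follows.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres a vehicle covers while waiting latency_ms for an inference result."""
    speed_m_per_s = speed_kmh / 3.6  # 1 m/s = 3.6 km/h
    return speed_m_per_s * (latency_ms / 1000.0)


if __name__ == "__main__":
    for latency in (10, 20, 100, 200, 500):
        print(f"{latency:>4} ms at 104.4 km/h -> {distance_during_latency(104.4, latency):.2f} m")
```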

Manufacturing: The Zero-Defect Factory Floor

Manufacturing leverages Edge AI Use Cases to maintain high-speed production lines without the overhead of massive data streaming.

- Visual Inspection: High-speed cameras use computer vision to identify micro-defects in components. Processing this data at the edge allows for immediate rejection of faulty parts, preventing downstream waste.

- Predictive Maintenance: Vibration and heat sensors analyze equipment health locally, triggering shutdowns before catastrophic failures occur.

The Strategic Skill Stack for Industry Transition

As industries pivot toward these Edge AI Use Cases, the technical “Skill Stack” required for employment has shifted. Mastery of these systems is the primary bridge to industry success in the 2026 market.

| Technical Domain | Essential Skill | Industry Application |
|---|---|---|
| Model Efficiency | TensorFlow Lite Quantization | Converting large research models into lightweight INT8 binaries for mobile/IoT. |
| Silicon Optimization | NPU Optimization | Tailoring neural networks to specific hardware architectures (Qualcomm, ARM, NVIDIA) for maximum TOPS/Watt. |
| Orchestration | Agentic Edge Workflows | Designing AI agents that can operate autonomously in offline environments. |

Career ROI and Market Validation

Data from Skilldential audits indicates a clear correlation between specialized edge certification and employment velocity. Technical professionals who master the optimization of Edge AI Use Cases experience a 40% faster job match rate. This is driven by an acute shortage of engineers who can move beyond “Cloud-First” thinking to solve the physical-world constraints of latency and privacy.

11 Innovative Edge AI Use Cases Across Modern Industries

Each of the following Edge AI Use Cases prioritizes low-latency execution and high-privacy frameworks, the 80/20 levers of industry success.

Predictive Maintenance in Manufacturing

In the “build once, scale forever” framework, Predictive Maintenance stands as the ultimate 80/20 application for the industrial sector. By shifting from reactive (“fix when broken”) to proactive (“fix before failure”), organizations unlock massive operational leverage.

The Strategic Mechanics

Traditional maintenance schedules often lead to “over-maintenance” (wasting resources on healthy machines) or catastrophic unplanned downtime. Predictive Maintenance uses real-time telemetry—vibration, temperature, and acoustic signatures—to identify the exact moment a component begins to deviate from its “Gold Standard” operating state.

- Financial Impact: Unplanned downtime costs the average manufacturer an estimated $260,000 per hour. Edge-based predictive models can reduce this downtime by 35% to 50%, providing an immediate and measurable ROI.

- The 8ms Advantage: While cloud-based analysis takes seconds (risking further damage during the delay), Edge AI inference triggers alerts in under 8ms, allowing a Programmable Logic Controller (PLC) to slow or shut down a motor before a fault progresses.
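A minimal sketch of the on-device logic, assuming windows of accelerometer samples and a simple statistical baseline (RMS amplitude plus a z-score) rather than a trained neural model. The thresholds and the `simulated_window` data generator are hypothetical; in a real deployment the `True` result would be wired to whatever interface the PLC exposes.

```python
import math
import random


class VibrationMonitor:
    """Flags deviation from a 'Gold Standard' baseline learned during healthy operation.

    Assumption: the first `baseline_windows` windows come from a healthy machine.
    A trip is raised only after `consecutive` anomalous windows, to suppress one-off spikes."""

    def __init__(self, baseline_windows: int = 50, z_threshold: float = 4.0, consecutive: int = 3):
        self.baseline_windows = baseline_windows
        self.z_threshold = z_threshold
        self.consecutive = consecutive
        self._baseline = []
        self.mean = None  # set once the baseline has been learned
        self.std = 1.0
        self._hits = 0

    @staticmethod
    def rms(window: list[float]) -> float:
        return math.sqrt(sum(x * x for x in window) / len(window))

    def update(self, window: list[float]) -> bool:
        """Return True when the controller (e.g. a PLC) should slow or stop the motor."""
        value = self.rms(window)
        if self.mean is None:  # still learning the baseline
            self._baseline.append(value)
            if len(self._baseline) == self.baseline_windows:
                self.mean = sum(self._baseline) / len(self._baseline)
                var = sum((v - self.mean) ** 2 for v in self._baseline) / len(self._baseline)
                self.std = max(math.sqrt(var), 1e-9)
            return False
        z = (value - self.mean) / self.std
        self._hits = self._hits + 1 if z > self.z_threshold else 0
        return self._hits >= self.consecutive


def simulated_window(rng: random.Random, amplitude: float, n: int = 256) -> list[float]:
    """Hypothetical accelerometer window: a 16-sample-period vibration plus sensor noise."""
    return [amplitude * math.sin(2 * math.pi * k / 16) + rng.gauss(0.0, 0.05) for k in range(n)]
```

Feeding 100 healthy windows and then windows with 50% higher vibration amplitude produces no trips during healthy operation and a trip on the third abnormal window.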

The 2026 Skill Stack for Career Growth

To lead in this domain, you must bridge the gap between embedded hardware and high-level data science.

- TinyML: The art of shrinking deep learning models (via quantization and pruning) to run on microcontrollers with limited RAM.

- Federated Learning: A decentralized training approach where models are updated locally on the factory floor and only the model updates (not the raw sensor data) are sent to a central server, ensuring proprietary industrial data never leaves the facility.

- C++ & TensorFlow Lite Micro: The industry-standard language and framework for high-performance, low-power deployment on microcontrollers.

Implementation in High-Friction Environments (Nigeria)

Predictive maintenance is uniquely suited for the Nigerian manufacturing landscape due to its resilience against infrastructure volatility.

- Low-Power Hardware: Using a Raspberry Pi Zero or ESP32, a complete anomaly detection system can be deployed for less than $15 per machine.

- Power & Connectivity Resilience: Because the “intelligence” lives on the device, the system continues to monitor and protect assets even during total grid collapse or internet outages.

- Legacy Retrofitting: You don’t need “Smart Machines.” You can bolt sensors onto 40-year-old mechanical presses, digitizing legacy infrastructure with inexpensive, high-leverage tech.

Strategic Insight: For founders and strategists, the goal is not just to “monitor” but to create Agentic Maintenance Systems that autonomously generate work orders and schedule repairs during planned off-peak hours, maximizing asset availability.

Autonomous Vehicle Perception

In the quest for Level 4 autonomy (high automation without human intervention in geofenced areas), the Perception Stack is the most computationally expensive and safety-critical component. By 2026, the industry has shifted away from “Cloud-Assisted” driving to “Edge-Native” perception to satisfy the sub-20ms reaction time requirement.

The Critical Metric: The 20ms Deadline

At highway speeds (110 km/h), a vehicle travels roughly 30 centimeters every 10 milliseconds.

- The Cloud Failure: Standard 4G/5G round-trip latency (100ms+) would allow a vehicle to travel 3 meters before even “seeing” an obstacle.

- The Edge Solution: By processing sensor fusion—merging high-resolution Camera feeds with LiDAR point clouds—on-vehicle, the system achieves a <20ms latency. This allows for near-instantaneous path planning and emergency braking, providing a safety buffer that cloud systems cannot physically match.

The 2026 Skill Stack: From Training to Inference

To bridge technical education and industry success in AV engineering, you must move beyond training models to optimizing them for silicon.

- ONNX Runtime (ORT): A widely adopted “write once, run anywhere” inference engine. It allows developers to export models from PyTorch or TensorFlow to the ONNX format and execute them across heterogeneous hardware (GPUs, NPUs, FPGAs) through pluggable execution providers, with minimal overhead.

- Model Pruning (Structured & Unstructured): This involves removing 50%–90% of redundant neural network parameters. Structured pruning is particularly high-leverage for AVs because it removes entire “channels” or “filters,” producing smaller dense layers that run faster on NVIDIA or Qualcomm chips with little loss in detection accuracy, typically recovered through fine-tuning.

- CUDA & TensorRT: Deep-level optimization using NVIDIA’s parallel computing platform (CUDA) and inference optimizer (TensorRT). Expert-level work can require writing custom CUDA kernels or TensorRT plugins for specialized sensor fusion tasks that standard libraries don’t support efficiently.
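A minimal NumPy sketch of one common structured-pruning criterion, ranking filters by L1 norm, assuming plain convolution layers without biases or batch normalization (a real implementation must slice those too, and usually fine-tunes afterwards to recover accuracy). It shows why structured pruning yields real speedups: the surviving tensors are smaller and still dense.

```python
import numpy as np


def prune_conv_filters(w_conv: np.ndarray, w_next: np.ndarray, keep_ratio: float = 0.5):
    """L1-norm structured pruning of a convolution's output filters.

    w_conv: (out_ch, in_ch, kh, kw) weights of the layer being pruned.
    w_next: (next_out, out_ch, kh, kw) weights of the following layer.
    Dropping output filter i of w_conv also drops input channel i of w_next,
    so both tensors physically shrink (unlike unstructured pruning, which
    only zeroes individual weights)."""
    out_ch = w_conv.shape[0]
    n_keep = max(1, int(round(out_ch * keep_ratio)))
    l1 = np.abs(w_conv).reshape(out_ch, -1).sum(axis=1)  # importance score per filter
    keep = np.sort(np.argsort(l1)[-n_keep:])             # strongest filters, original order
    return w_conv[keep], w_next[:, keep], keep
```

Pruning a 64-filter layer at `keep_ratio=0.5` shrinks it to 32 filters and the next layer's input channels from 64 to 32, halving the multiply-accumulates in both.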

Industry Strategy: Level 4 and Beyond

While Level 5 (full autonomy everywhere) remains a long-term goal, Level 4 is currently scaling in 2026 via robotaxis and autonomous freight.

- Redundancy Systems: Level 4 requires “Fail-Operational” logic. If the primary Edge AI unit fails, a secondary, highly-pruned “Safety Model” must be able to bring the vehicle to a controlled stop using minimal compute.

- Agentic Perception: Modern stacks don’t just “detect” objects; they use Trajectory Prediction agents to anticipate where a pedestrian or cyclist will be in 2 seconds, evaluating many candidate futures within each planning cycle.

Strategic Insight: For technical professionals, the “80/20” of AV career success isn’t just knowing how to build a detector; it’s knowing how to compress and deploy that detector so it maintains 99.999% reliability on edge hardware with limited thermal envelopes.

Real-Time Patient Monitoring

In the medical sector, the transition to Edge AI Use Cases is a shift from “reactive reporting” to “autonomous safeguarding.” By processing vital signs at the point of care, health systems eliminate the dangerous 500ms+ latency of the cloud, replacing it with sub-5ms local inference that can mean the difference between a missed event and a life-saving intervention.

Architectural Integrity: HIPAA and Privacy by Design

The most significant barrier to medical AI has historically been data sovereignty. Traditional cloud-first models require sensitive Protected Health Information (PHI) to be transmitted across external networks, creating complex compliance hurdles and security vulnerabilities.

- Zero-Data-Egress: With Edge AI, raw ECG, PPG, or respiratory data is processed directly on the wearable or bedside monitor. Only the high-level “insight” (e.g., Arrhythmia Detected) is transmitted, ensuring the raw PHI never leaves the local environment.

- Regulatory Compliance: This architecture supports HIPAA and GDPR compliance “by design.” Because raw data isn’t stored or processed on third-party servers, the attack surface can shrink by over 90%, and the need for complex Data Processing Agreements (DPAs) is reduced.

The 2026 Skill Stack: Efficiency as a Clinical Requirement

In healthcare, “model size” isn’t just a technical metric; it dictates battery life and device portability.

- 8-bit Quantization (INT8): By converting high-precision 32-bit floating-point models into 8-bit integers, developers achieve a 4x reduction in model size and up to a 16x increase in performance-per-watt. This allows sophisticated cardiac monitors to run for weeks on a single charge rather than days.

- Edge TPU Deployment: Utilizing specialized hardware like the Google Coral or integrated NPUs (Neural Processing Units) allows for dedicated matrix multiplication. This hardware is optimized for the specific “math” of AI, enabling the device to run 24/7 without overheating or draining the battery.

Case Study: On-Device Arrhythmia Detection

Consider a wearable designed to detect Atrial Fibrillation (AFib).

- The Old Way (Cloud): The device records 30 seconds of data, uploads it to a server, waits for an inference queue, and sends a notification back. Total time: 5–10 seconds.

- The Edge Way (2026): The on-device model analyzes every heartbeat in real-time. Anomaly detection occurs in <5ms. If a life-threatening rhythm is detected, the device can immediately trigger a local haptic alert and initiate an emergency call via the patient’s phone, bypassing network congestion.
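The per-beat logic on the wearable can be very light. The sketch below uses a classic heuristic for AFib’s “irregularly irregular” rhythm, the normalized RMSSD of recent RR intervals, as a stand-in for the neural model the case study describes. It assumes R-peak detection already happens upstream, and its window and threshold are placeholders, not clinical values.

```python
import math
from collections import deque


class RRIrregularityDetector:
    """Toy AFib screen: flags an 'irregularly irregular' rhythm when the normalized
    RMSSD of the last `window` RR intervals exceeds `threshold`.

    Both parameters are illustrative only and NOT clinically validated."""

    def __init__(self, window: int = 32, threshold: float = 0.1):
        self.rr = deque(maxlen=window)
        self.threshold = threshold

    def add_beat(self, rr_ms: float) -> bool:
        """Feed one RR interval (ms between consecutive R-peaks); return True to alert."""
        self.rr.append(rr_ms)
        if len(self.rr) < self.rr.maxlen:
            return False  # not enough beats yet
        rr = list(self.rr)
        diffs = [b - a for a, b in zip(rr, rr[1:])]
        rmssd = math.sqrt(sum(d * d for d in diffs) / len(diffs))
        return rmssd / (sum(rr) / len(rr)) > self.threshold
```

Each call does a few dozen arithmetic operations, which is why this kind of check can run on every heartbeat without a network round-trip.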

Strategic Insight: For technical professionals, the high-leverage move in healthcare AI is mastering Quantization-Aware Training (QAT). By simulating the “noise” of 8-bit math during the training phase, you can maintain medical-grade accuracy while reaping the massive speed and power benefits of edge hardware.
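Quantization-Aware Training can be illustrated on a toy problem. In this NumPy sketch (a linear model, not a medical network), every forward pass uses weights that are quantized to 8 bits and immediately dequantized, so training adapts to the rounding noise; the straight-through estimator passes gradients through the non-differentiable rounding step. Real frameworks provide built-in fake-quantization ops; the functions here are illustrative.

```python
import numpy as np


def fake_quant(w: np.ndarray, num_bits: int = 8) -> np.ndarray:
    """Quantize-then-dequantize so the forward pass 'sees' integer rounding noise."""
    qmax = 2 ** (num_bits - 1) - 1
    scale = max(float(np.max(np.abs(w))), 1e-8) / qmax
    return np.clip(np.round(w / scale), -qmax, qmax) * scale


def train_qat(X: np.ndarray, y: np.ndarray, lr: float = 0.1, steps: int = 500, num_bits: int = 8) -> np.ndarray:
    """Linear regression trained with quantization-aware forward passes.

    The straight-through estimator (STE) treats round() as the identity in the
    backward pass: the gradient computed at the fake-quantized weights is
    applied to the full-precision 'shadow' weights."""
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        wq = fake_quant(w, num_bits)              # forward pass uses simulated INT8 weights
        grad = 2.0 * X.T @ (X @ wq - y) / len(y)  # MSE gradient w.r.t. wq
        w -= lr * grad                            # STE: update the float weights directly
    return w
```

After training, `fake_quant(w)` is what would ship to the device; because training already saw that rounding, the deployed INT8 weights stay within about one quantization step of the target.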

Smart Agriculture Yield Prediction

In a “scale forever” framework, Smart Agriculture is the bridge between technical orchestration and national food security. By 2026, the convergence of Edge AI Use Cases and drone technology has transformed crop management from guesswork into a deterministic science, specifically addressing the high-friction variables of rural infrastructure.

The Strategic Mechanics: Aerial Inference

Traditional crop scouting is manual, slow, and prone to error. Edge-enabled drones revolutionize this by performing high-resolution multispectral analysis directly in flight.

- Yield Boost (~25%): By integrating real-time data on crop canopy cover and soil moisture, crop models (like the FAO’s AquaCrop) can reduce yield estimation errors by 12%–15%. Better estimates let farmers optimize harvest windows and resource allocation, which can contribute to output gains of around 25%.

- Precision Intervention: Instead of “blanket spraying,” Edge AI identifies specific zones of nutrient deficiency or pest infestation. This 80/20 move reduces chemical usage by up to 30%, lowering costs while preserving soil health.
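Identifying deficiency zones in flight often starts from a vegetation index computed per pixel. This sketch uses NDVI, a standard index derived from near-infrared and red reflectance, as a simpler stand-in for CNN-based detection; the tile size and the 0.3 threshold are hypothetical values that would need agronomic calibration.

```python
import numpy as np


def ndvi(nir: np.ndarray, red: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red), in [-1, 1]."""
    nir = nir.astype(np.float32)  # cast first: uint8 sums would overflow
    red = red.astype(np.float32)
    return (nir - red) / (nir + red + eps)


def stress_zones(nir: np.ndarray, red: np.ndarray, tile: int = 4, threshold: float = 0.3) -> list[tuple[int, int]]:
    """Split the image into tile x tile cells; return (row, col) of cells whose mean
    NDVI is below `threshold`, i.e. candidate spots for targeted intervention."""
    v = ndvi(nir, red)
    h, w = v.shape
    zones = []
    for r in range(0, h - h % tile, tile):
        for c in range(0, w - w % tile, tile):
            if v[r:r + tile, c:c + tile].mean() < threshold:
                zones.append((r // tile, c // tile))
    return zones
```

Only the list of flagged cells (plus their GPS mapping) needs to leave the drone, which is what makes spot-spraying possible without streaming imagery.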

The 2026 Skill Stack: Orchestrating Intelligence

To master this domain, the technical strategist must move beyond simple model training to full-stack orchestration.

- OpenVINO (Intel): A widely used toolkit for optimizing computer vision models for edge hardware. It allows drones to run complex CNNs (Convolutional Neural Networks) for disease detection, with reported accuracies around 95%, even on low-power onboard chips.

- n8n Orchestration: By 2026, n8n has become the “GOAT” of AI automation. It acts as the nervous system, connecting the drone’s edge insights to external systems. For example:

  - Trigger: Drone detects water stress.

  - Action: An n8n workflow automatically signals a smart irrigation valve to open or sends a precise GPS coordinate to a ground-based autonomous sprayer.

- Federated Learning & TinyML: Training models across a fleet of drones without uploading raw imagery, ensuring proprietary farm data remains private.

Implementation in Low-Bandwidth Environments (Nigeria)

The Nigeria Fit for this technology is rated as High because it solves the “Connectivity Trap.”

- Infrastructure Independence: Since the drone performs inference locally, it does not require an active 4G/5G connection to identify crop stress. It works reliably in the remote “Last Mile” of Nigerian farmlands.

- Power-Resilient Monitoring: National initiatives (like NALDA’s drone programs) leverage these tools to map and monitor millions of hectares, providing actionable data to farmers even during grid instability.

- Cost Efficiency: Deploying an OpenVINO-optimized model on a standard commercial drone is a high-leverage move that replaces weeks of manual labor with a 15-minute flight.

Strategic Insight: For founders in the AgTech space, the goal is Autonomous Scouting Cycles. By using n8n to bridge Edge AI drones with automated reporting, you build a “Build Once, Scale Forever” system that provides continuous value with minimal manual intervention.

Retail Inventory Tracking

In the 2026 retail landscape, the “Last Mile” of inventory is no longer a blind spot. By implementing Edge AI Use Cases directly at the shelf, retailers transform physical stores into intelligent, micro-data centers that track stock movement in real time, bypassing the inefficiencies of manual audits.

The Strategic Mechanics: “Shelf Intelligence”

Traditional inventory tracking relies on periodic barcode or RFID scans, which only provide a snapshot in time. Edge-based computer vision creates a continuous, high-fidelity stream of truth.

- Inventory Loss Reduction (28%): By identifying misplaced items, “phantom stock” (items in the system but not on the shelf), and organized retail crime (ORC) in real time, retailers can recoup more than a quarter of their annual shrinkage.

- The Pose Estimation Factor: Rather than just tracking pixels, the system uses Pose Estimation to identify the specific actions of shoppers.

  - Action: A hand reaches for a shelf and retracts.

  - Inference: Did the shopper pick up an item, or just examine it and put it back?

  - Result: By correlating hand keypoints with shelf coordinates, the system can update stock counts without requiring every item to have an expensive RFID tag.
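Correlating keypoints with shelf coordinates can be reduced to a small state check per reach. This is a hypothetical sketch: it assumes an upstream pose model (for example, one of MediaPipe’s pose or hand solutions) already supplies a wrist keypoint per frame, and that a separate classifier reports whether the hand is holding an item; both inputs, the zone geometry, and the event names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ShelfZone:
    """Axis-aligned shelf region in image coordinates (pixels)."""
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x: float, y: float) -> bool:
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


def classify_reach(wrist_track, holding_track, zone: ShelfZone):
    """Classify one reach into a shelf zone.

    wrist_track:   per-frame (x, y) wrist keypoint from a pose-estimation model.
    holding_track: per-frame bool from a hypothetical hand-object classifier.
    Returns "pick", "return", "browse", or None if the hand never entered the zone."""
    inside = [zone.contains(x, y) for x, y in wrist_track]
    if not any(inside):
        return None
    first_in = inside.index(True)
    last_in = len(inside) - 1 - inside[::-1].index(True)
    held_before = holding_track[max(first_in - 1, 0)]
    held_after = holding_track[min(last_in + 1, len(holding_track) - 1)]
    if not held_before and held_after:
        return "pick"
    if held_before and not held_after:
        return "return"
    return "browse"


def update_stock(counts: dict, zone_id: str, event) -> None:
    """Adjust the on-device stock estimate for one shelf zone."""
    if event == "pick":
        counts[zone_id] -= 1
    elif event == "return":
        counts[zone_id] += 1
```

Comparing the hand state just before entry and just after exit is what separates a pick from an “examine and put back” browse.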

The 2026 Skill Stack: Lightweight Perception

To deploy vision systems across thousands of store aisles, the models must be exceptionally lightweight to run on low-cost edge cameras.

- MediaPipe (Google): A popular cross-platform framework in 2026. It provides modular, ready-to-run perception pipelines for pose, face, and hand tracking that are optimized for mobile and edge CPUs.

- Model Distillation: A “Build Once, Scale Forever” technique where a large, accurate “Teacher” model (e.g., a large Vision Transformer) is used to train a compact “Student” model. The Student can retain 95%+ of the Teacher’s accuracy while being small enough to run on a $25 edge camera with no cloud dependency.
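The standard distillation objective from Hinton et al. blends a soft-target term (matching the Teacher’s temperature-softened outputs) with the usual hard-label loss. This NumPy sketch computes that loss for a batch of logits; the temperature and weighting are common illustrative defaults, not values from any specific retail deployment.

```python
import numpy as np


def softmax(z: np.ndarray, T: float = 1.0) -> np.ndarray:
    z = np.asarray(z, dtype=np.float64) / T
    z = z - z.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def distillation_loss(student_logits, teacher_logits, labels, T: float = 4.0, alpha: float = 0.7) -> float:
    """Hinton-style knowledge distillation loss:
    alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * CE(labels, student).
    The T^2 factor keeps the soft-target gradients on a comparable scale as T changes."""
    p_t = softmax(teacher_logits, T)
    p_s = softmax(student_logits, T)
    kl = np.sum(p_t * (np.log(p_t + 1e-12) - np.log(p_s + 1e-12)), axis=-1).mean()
    p = softmax(student_logits)  # T = 1 for the hard-label term
    ce = -np.log(p[np.arange(len(labels)), labels] + 1e-12).mean()
    return float(alpha * T ** 2 * kl + (1 - alpha) * ce)
```

The soft targets carry the Teacher’s “dark knowledge” (how similar the wrong classes are), which is a large part of why a small Student can approach the Teacher’s accuracy.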

Implementation and Operational ROI

| Metric | Cloud-Dependent Retail | Edge-First Retail (2026) |
|---|---|---|
| Data Costs | High (Streaming raw video) | Low (Streaming only metadata/counts) |
| Alert Latency | 5–10 seconds | <200ms |
| Privacy | High risk (Faces sent to cloud) | Privacy-First (Faces blurred/processed locally) |

Strategic Insight: For technical professionals, the “80/20” of retail AI isn’t building a better object detector—it’s mastering Model Distillation. The ability to take a “state-of-the-art” model and compress it to run on the cheapest possible hardware is the primary skill that drives industry success and massive scalability in 2026.

Energy Grid Optimization

In the “build once, scale forever” framework, Energy Grid Optimization represents the transition from a rigid, centralized utility to an elastic, intelligent network. By 2026, global data-center electricity demand, driven in large part by AI and projected to exceed 1,000 TWh, has forced a shift toward Edge AI Use Cases that balance load at the source, preventing grid collapse during peak surges.

The Strategic Mechanics: Decentralized Balancing

Traditional grids rely on “peaking plants”—expensive, high-emission backup generators that fire up when demand spikes. Edge AI eliminates this necessity through localized, intelligent forecasting.

- 15% Cost Reduction: Smart meters running on-device inference identify consumption patterns (e.g., HVAC cycles, industrial motor starts) in real-time. By predicting a localized load spike 5–10 minutes before it happens, the grid can autonomously throttle non-critical loads or discharge local battery storage, saving up to 15% on peak energy procurement.

- Zero-Latency Anomaly Detection: Distribution feeders use Edge AI to detect electrical faults or cyber-attacks in milliseconds. Identifying a “short circuit” signature locally allows for immediate isolation, preventing a neighborhood-wide blackout that a cloud-based system would be too slow to stop.
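Predicting a localized spike a few minutes ahead does not require a large model. As a minimal sketch, assuming one reading per minute, Holt’s linear exponential smoothing can produce a five-step-ahead forecast on a meter-class processor; the smoothing constants, limit, and action name are hypothetical, and a production system might use the LSTM or Transformer models discussed below instead.

```python
class PeakGuard:
    """Short-horizon load forecast with Holt's linear (double) exponential smoothing.

    Every call ingests one load reading (e.g. one per minute) and forecasts
    `horizon` steps ahead. alpha, beta, horizon and limit_kw are illustrative."""

    def __init__(self, limit_kw: float, horizon: int = 5, alpha: float = 0.5, beta: float = 0.3):
        self.limit_kw = limit_kw
        self.horizon = horizon
        self.alpha = alpha
        self.beta = beta
        self.level = None
        self.trend = 0.0

    def update(self, load_kw: float) -> str:
        if self.level is None:  # first reading initializes the level
            self.level = load_kw
            return "normal"
        prev_level = self.level
        self.level = self.alpha * load_kw + (1 - self.alpha) * (self.level + self.trend)
        self.trend = self.beta * (self.level - prev_level) + (1 - self.beta) * self.trend
        forecast = self.level + self.horizon * self.trend
        if forecast > self.limit_kw:
            return "shed_noncritical_or_discharge_battery"
        return "normal"
```

On a steadily rising load, the trend term makes the guard act several minutes before the measured load itself crosses the limit, which is the window in which shedding or battery discharge is cheapest.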

The 2026 Skill Stack: Time-Series Mastery

Optimizing energy requires a “high-signal” approach to time-series data, where accuracy is balanced against extreme resource constraints.

- PyTorch Mobile / ExecuTorch: By 2026, PyTorch’s on-device runtimes are leading options for deploying LSTMs (Long Short-Term Memory networks) and compact Transformers at the edge; ExecuTorch, the successor to PyTorch Mobile, also targets embedded and microcontroller-class chips. They allow “seamless inference,” where a model trained on massive datasets can be executed on the low-power ARM processors found inside residential smart meters.

- Anomaly Detection (Unsupervised): In the energy sector, “labeled” data for every type of fault is rare. High-level career success involves mastering Autoencoders and Isolation Forests—algorithms that learn the “normal” rhythm of a household or factory and flag anything else as a potential risk without needing explicit training on “bad” data.

- NILM (Non-Intrusive Load Monitoring): A first-principles technique where a single sensor at the main breaker “disaggregates” the total power signal to identify which specific appliance is running.
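Autoencoders learn to reconstruct “normal” data and flag inputs they reconstruct poorly. A minimal sketch of that idea uses the simplest possible autoencoder, a linear one, which can be fitted in closed form with PCA instead of gradient descent. The 24-hour load profiles are synthetic placeholders, and `k` and the 99th-percentile threshold are illustrative choices. Isolation Forests are a different algorithm and are not shown here.

```python
import numpy as np

HOURS = np.arange(24)
# Hypothetical household profile: morning and evening demand peaks (kW).
BASE = 1.0 + 0.5 * np.exp(-((HOURS - 8) ** 2) / 4.0) + 0.8 * np.exp(-((HOURS - 19) ** 2) / 6.0)


def make_profiles(rng: np.random.Generator, n: int) -> np.ndarray:
    """Synthetic 'normal' daily load curves: scaled base profile plus meter noise."""
    scale = rng.uniform(0.8, 1.2, size=(n, 1))
    return scale * BASE + rng.normal(0.0, 0.02, size=(n, 24))


class LinearAutoencoder:
    """Unsupervised anomaly detector: a linear autoencoder with k latent units,
    fitted in closed form via PCA (the optimal linear autoencoder under MSE).
    It learns only normal profiles; no labelled faults are required."""

    def __init__(self, k: int = 3, quantile: float = 0.99):
        self.k = k
        self.quantile = quantile

    def fit(self, X: np.ndarray) -> "LinearAutoencoder":
        self.mu = X.mean(axis=0)
        _, _, vt = np.linalg.svd(X - self.mu, full_matrices=False)
        self.W = vt[: self.k]  # encoder (k x 24); the decoder is its transpose
        self.threshold = np.quantile(self.score(X), self.quantile)
        return self

    def score(self, X: np.ndarray) -> np.ndarray:
        Z = (X - self.mu) @ self.W.T  # encode
        X_hat = Z @ self.W + self.mu  # decode
        return np.mean((X - X_hat) ** 2, axis=1)  # reconstruction error per profile

    def is_anomaly(self, X: np.ndarray) -> np.ndarray:
        return self.score(X) > self.threshold
```

A profile with an unexplained 3 a.m. surge (for example, an illegal connection) reconstructs poorly and is flagged, even though the model never saw a labelled example of that fault.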

Implementation and Strategic ROI (Nigeria Focus)

For the Nigerian energy landscape, Edge AI is a “mechanical necessity” for survival in a low-trust, high-friction environment.

- Resilience Against Grid Collapse: Because the intelligence is local, smart meters can autonomously manage household loads even when the national control center loses visibility.

- The “70% vs. 99.9% Uptime” Gap: Cloud-based meters fail during Nigeria’s frequent network timeouts. Edge-native meters can maintain 99.9% reliability, ensuring that billing and load balancing continue regardless of ISP stability.

- Transformer Protection: By deploying cheap Edge AI sensors on community transformers, utilities can detect “overload signatures” from illegal connections or extreme heat, triggering a local cooling cycle or a graceful load shed before the $10,000+ transformer burns out.

Strategic Insight: For tech professionals, the “80/20” move in Energy AI is mastering On-Device Fine-Tuning. The ability to take a general load-prediction model and allow it to “learn” the specific habits of a single building without sending that data to the cloud is the ultimate play for both privacy and accuracy in 2026.

Interactive Classroom Tutors

In the Nigerian educational landscape, the “digital divide” is primarily an infrastructure divide. By 2026, Interactive Classroom Tutors leveraging Edge AI Use Cases have moved from experimental gadgets to essential “High-Level Career Skills” delivery systems. This application targets the 80/20 of education: providing 1-on-1 personalized tutoring at zero marginal cost, independent of the national grid or ISP stability.

The Strategic Mechanics: Offline Personalization

Traditional EdTech fails in Nigeria because it assumes a “Constant-Cloud” connection. Edge-native tutors flip this logic by hosting a Small Language Model (SLM) directly on the tablet’s silicon.

- Massive Scalability: Once a model is optimized and loaded onto a device, it can teach for years without a single byte of data egress. This allows a school in a remote part of Ogun State to provide the same quality of AI tutoring as a private lab in Lagos.

- Latency-Free Engagement: Because the model resides in the tablet’s RAM, response times are near-instant (<200ms). This maintains the student’s “flow state,” which is critical for learning complex technical subjects like mathematics or coding.

- Power Resilience: Optimized SLMs consume minimal power. A single solar charge can provide a full day of AI-guided instruction, making the system largely immune to “NEPA” outages.

The 2026 Skill Stack: Porting Intelligence

To build these systems, technical professionals must master the art of “Model Shrinkage” and autonomous orchestration.

- llama.cpp: A widely used open-source engine for running LLM inference on consumer-grade hardware (mainly CPUs and GPUs). With 4-bit quantization, 3B-parameter models run efficiently on affordable Android tablets, and 7B models run on devices with more RAM.

- Agentic Workflows: Instead of a simple “chatbot,” these tutors use agents to manage the learning journey.

  - Socratic Agent: Asks probing questions rather than giving answers.

  - Assessment Agent: Evaluates student gaps in real-time.

  - Curriculum Agent: Dynamically adjusts the difficulty level based on performance.

- Vector Databases (On-Device): Using tools like Chroma or Faiss locally to allow the AI to “read” and reference local textbooks and curriculum documents without internet access.
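On-device retrieval boils down to embedding text chunks once, storing the vectors locally, and ranking them by similarity at question time. The sketch below is a deliberately tiny stand-in, not the Chroma or Faiss API: it uses a bag-of-words vector as a placeholder for a real embedding model and brute-force cosine similarity, which is conceptually what a flat (exact) index does. The sample textbook chunks are hypothetical.

```python
import re

import numpy as np


class LocalIndex:
    """Tiny in-memory vector index with brute-force cosine similarity.

    The bag-of-words 'embedding' is a placeholder for a real on-device embedding model."""

    def __init__(self, chunks: list[str]):
        self.chunks = chunks
        tokens = [self._tokens(c) for c in chunks]
        self.vocab = {w: i for i, w in enumerate(sorted({w for t in tokens for w in t}))}
        self.matrix = np.stack([self._embed(t) for t in tokens])  # one unit vector per chunk

    @staticmethod
    def _tokens(text: str) -> list[str]:
        return re.findall(r"[a-z]+", text.lower())

    def _embed(self, tokens: list[str]) -> np.ndarray:
        v = np.zeros(len(self.vocab))
        for w in tokens:
            if w in self.vocab:
                v[self.vocab[w]] += 1.0
        n = np.linalg.norm(v)
        return v / n if n > 0 else v

    def search(self, query: str, k: int = 1) -> list[tuple[str, float]]:
        """Return the k chunks most similar to the query, with cosine scores."""
        scores = self.matrix @ self._embed(self._tokens(query))
        top = np.argsort(-scores)[:k]
        return [(self.chunks[i], float(scores[i])) for i in top]
```

The retrieved chunk is then inserted into the tutor model’s prompt, so answers stay grounded in the locally stored curriculum with no internet access.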

Implementation and Social ROI (Nigeria Focus)

The Nigeria Fit is rated as High because it directly addresses the three pillars of Skilldential’s mission: Tech, Income, and Career Growth.

- Teacher Augmentation: In classrooms with 1:100 teacher-to-student ratios, these tutors act as “Teaching Assistants,” handling remedial questions and allowing the human teacher to focus on high-level mentorship.

- Vocational Training: By loading the tablets with technical skill systems—from solar installation to n8n automation—students gain industry-standard rigor regardless of their geographic location.

- Data Sovereignty: Student progress data remains on the device or the school’s local server, protecting the privacy of Nigerian minors from global data harvesting.

Strategic Insight: For the technical strategist, the “80/20” move in EdTech is mastering Quantization-Aware Fine-Tuning (QAFT). By fine-tuning a model specifically on the Nigerian National Curriculum and then quantizing it to 4-bit or 2-bit, you create a “Build Once, Scale Forever” asset that can educate an entire generation with zero cloud overhead.
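The case for 4-bit or 2-bit quantization on school tablets comes down to memory arithmetic: weight storage is roughly parameter count times bits per weight. This back-of-the-envelope helper assumes a flat bit width per weight; real quantization formats (such as llama.cpp’s GGUF quantization types) also store scale data, so actual files run somewhat larger.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate storage for model weights only, in GB (10^9 bytes).

    Excludes the KV cache, activations, and per-group quantization scales,
    which add real-world overhead on top of this figure."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for params in (3e9, 7e9):
        for bits in (16, 8, 4, 2):
            print(f"{params / 1e9:.0f}B @ {bits}-bit: {weight_memory_gb(params, bits):.2f} GB")
```

At 4 bits, a 3B model’s weights need about 1.5 GB and a 7B model’s about 3.5 GB, versus 6 GB and 14 GB at 16 bits, which is the difference between fitting on an affordable tablet and not.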

Fraud Detection in Payments

In the “Build Once, Scale Forever” ecosystem, Edge AI Fraud Detection is the structural firewall for the 2026 digital economy. By moving the decision engine from a central cloud to the Point of Sale (POS) device, financial institutions achieve a level of speed and security that traditional architectures physically cannot match.

The Strategic Mechanics: Pre-Authorization Intelligence

Traditional fraud detection is “Post-Transaction” or “In-Flight” via a central server. This introduces a 500ms–2s delay and creates a single point of failure. Edge AI flips this to Local Authorization Logic.

- 50% Fraud Reduction: By analyzing transaction velocity, location-based anomalies, and “Terminal Integrity” (checking for hardware skimming) locally, devices can flag and block suspicious cards before the authorization request is even sent to the bank.

- Zero-Latency Shield: Using dedicated NPUs, inference happens in <10ms. This prevents the “checkout friction” that costs retailers billions in abandoned sales while maintaining a high-signal security posture.

- Reduced False Positives: AI-powered POS systems report a 60% reduction in false positives compared to static rule-based systems, ensuring that legitimate customers (especially travelers) aren’t unfairly declined.

The 2026 Skill Stack: Decentralized Security

To master payment security in 2026, the technical professional must move beyond standard ML to Hardware-Accelerated Privacy.

- SNPE (Snapdragon Neural Processing Engine): Qualcomm’s SDK for accelerating neural networks on Snapdragon-powered POS and mobile devices.

  - Expert Insight: Running models on the Hexagon DSP (Digital Signal Processor) via SNPE enables continuous, always-on anomaly detection, with reported gains over CPU-only processing of up to 145% in speed and 107% in power efficiency.

- Federated Learning (FL): This is the “GOAT” of 2026 financial AI. FL allows multiple banks or retailers to collaboratively train a global fraud detection model without ever sharing raw customer transaction data.

  - The Logic: Each POS device trains a local model on its own data → Only the model updates (weights) are sent to a central server → An improved global model is aggregated and pushed back to all devices.

  - Result: A reported 65% increase in precision, with raw transaction data never leaving the device, which supports compliance with GDPR/NDPR.
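The loop described in “The Logic” is the Federated Averaging (FedAvg) algorithm. This NumPy sketch trains a toy logistic-regression fraud model across three simulated terminals; the features, labels, and hyperparameters are hypothetical. FedAvg alone does not guarantee privacy, since model updates can leak information, which is why production systems add techniques such as secure aggregation or selective encryption.

```python
import numpy as np


def local_update(w: np.ndarray, X: np.ndarray, y: np.ndarray, lr: float = 0.1, epochs: int = 5) -> np.ndarray:
    """A few epochs of logistic-regression gradient descent on one terminal's
    private data. Only the returned weights leave the device."""
    w = w.copy()
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(X @ w)))  # predicted fraud probability
        w -= lr * X.T @ (p - y) / len(y)
    return w


def fed_avg(global_w: np.ndarray, clients, rounds: int = 20) -> np.ndarray:
    """Federated averaging: each round, clients train locally and the server
    averages their weights, weighted by local sample count."""
    for _ in range(rounds):
        updates = [local_update(global_w, X, y) for X, y in clients]
        sizes = [len(y) for _, y in clients]
        global_w = np.average(np.stack(updates), axis=0, weights=sizes)
    return global_w


def make_client(rng: np.random.Generator, n: int, w_star: np.ndarray):
    """Hypothetical private dataset: transaction features and fraud labels."""
    X = rng.normal(size=(n, w_star.size))
    y = (X @ w_star + 0.1 * rng.normal(size=n) > 0).astype(float)
    return X, y
```

The server only ever sees weight vectors; the raw `X` and `y` arrays stay on each simulated terminal.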

Implementation and Operational ROI

| Metric | Cloud-Based Fraud Logic | Edge-Native (2026) |
|---|---|---|
| Response Time | 500ms – 2,000ms | <10ms |
| Connectivity | Requires 100% Uptime | Local checks function offline |
| Privacy Risk | High (Data in Transit) | Minimal (Local-Only) |
| Skill Value | Commodity | High-Demand / High-Income |

Strategic Insight: For tech founders and pivoters, the highest-leverage move is mastering Selective Encryption within Federated Learning. By encrypting only the most sensitive model parameters during the update phase, you balance extreme security with the communication efficiency required for massive POS networks.

Defect Inspection in Electronics

In the electronics manufacturing sector, the “build once, scale forever” philosophy is applied through automated quality gates. By 2026, Edge AI Use Cases in defect inspection have replaced subjective human oversight with deterministic, high-speed computer vision, moving the needle from 90% accuracy to near-perfection.

The Strategic Mechanics: Visual Precision at 60fps

Traditional manual inspection is slow, prone to fatigue, and inconsistent. Edge-native inspection systems utilize high-speed industrial cameras to analyze PCB (Printed Circuit Board) assemblies in real-time.

- 40% Quality Improvement: By identifying micro-cracks, solder bridges, and missing components that are invisible to the naked eye, these systems reduce the “escape rate” of defective products to near zero.

- The 60fps Standard: At a production speed of 60 frames per second, the AI must perform inference in under 16ms. This allows for “on-the-fly” rejection: a robotic arm can divert a faulty board without stopping the entire assembly line, maximizing throughput.
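The 16ms figure is simply the frame period at 60fps: 1000 ms / 60 ≈ 16.7 ms. The sketch below checks a pipeline against that budget. The stage names and latencies are hypothetical placeholders, not measured values.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def fits_budget(stage_latencies_ms: dict, fps: float) -> bool:
    """True if the per-frame stages, run back to back, fit inside one frame period."""
    return sum(stage_latencies_ms.values()) <= frame_budget_ms(fps)


# Hypothetical per-frame latencies for a PCB inspection pipeline.
pipeline = {"capture_and_preprocess": 3.0, "inference": 11.0, "reject_decision": 1.0}
print(f"budget at 60fps: {frame_budget_ms(60):.2f} ms")  # 16.67 ms
print("fits:", fits_budget(pipeline, 60))  # True (15 ms total)
```

This assumes the stages run sequentially for each frame. Pipelined designs overlap stages, so throughput is set by the slowest stage, while end-to-end latency is still the sum.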

The 2026 Skill Stack: Silicon-Level Optimization

To achieve 60fps on a factory floor, the technical strategist must optimize for hardware specifically designed for tensor operations.

- Coral Edge TPU (Tensor Processing Unit): Google’s purpose-built ASIC for high-performance ML inference. It provides 4 TOPS (Trillion Operations Per Second) while consuming only 2 Watts. Mastering the Edge TPU Compiler, which maps fully INT8-quantized TensorFlow Lite models onto the chip, is a high-demand skill for 2026.

- Post-Training Quantization (PTQ): A critical 80/20 skill. Instead of retraining a model from scratch, PTQ allows you to take a pre-trained, high-accuracy FP32 model and convert it to INT8. This results in:

  - 3x–10x Speedup on specialized hardware.

  - Roughly 4x Memory Reduction (8-bit instead of 32-bit weights), allowing complex models to fit into the Edge TPU’s limited on-chip memory.
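At its core, PTQ maps FP32 values onto 8-bit integers using a scale and a zero point derived from the observed value range (calibration). The sketch below shows a simplified asymmetric, per-tensor scheme in plain Python. It illustrates the arithmetic only. It is not the TensorFlow Lite converter, which also calibrates activations with a representative dataset and uses related but more detailed schemes, such as per-channel weights.

```python
from typing import List, Tuple

QMIN, QMAX = -128, 127  # signed INT8 range


def calibrate(values: List[float]) -> Tuple[float, int]:
    """Derive scale and zero point from the observed min/max range."""
    lo = min(min(values), 0.0)  # include 0.0 so zero stays exactly representable
    hi = max(max(values), 0.0)
    if hi == lo:
        return 1.0, 0
    scale = (hi - lo) / (QMAX - QMIN)
    zero_point = max(QMIN, min(QMAX, round(QMIN - lo / scale)))
    return scale, zero_point


def quantize(values: List[float], scale: float, zero_point: int) -> List[int]:
    """FP32 -> INT8: divide by scale, shift by zero point, clamp to range."""
    return [max(QMIN, min(QMAX, round(v / scale) + zero_point)) for v in values]


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """INT8 -> approximate FP32, used to measure quantization error."""
    return [(x - zero_point) * scale for x in q]


weights = [-0.62, -0.1, 0.0, 0.33, 0.91]  # hypothetical FP32 weights
scale, zp = calibrate(weights)
q = quantize(weights, scale, zp)
restored = dequantize(q, scale, zp)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"scale={scale:.5f}", f"zero_point={zp}", f"max_error={max_err:.5f}")
```

Each weight now occupies one byte instead of four, and the round-trip error stays within about half a quantization step (`scale / 2`).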

Operational ROI: The Business Case

| Feature | Manual Inspection | Edge AI Inspection (2026) |
| --- | --- | --- |
| Consistency | Variable (Fatigue/Subjectivity) | Deterministic (Fixed Logic) |
| Speed | ~1 unit every 5 seconds | 60 frames analyzed per second |
| Cost | High (Labor + Recalls) | Low (Fixed CapEx + Energy) |
| Data Loop | Minimal | High (Automated Fault Logging) |

Strategic Insight: For technical professionals, the “80/20” move in industrial AI is mastering Quantization-Aware Training (QAT) vs. PTQ. While PTQ is faster to implement, QAT allows for higher accuracy on edge hardware. Being able to decide which method fits a specific factory’s accuracy-vs-speed requirement is the hallmark of an expert strategist.

Smart City Traffic Management

In the “Build Once, Scale Forever” framework, Smart City Traffic Management is the ultimate orchestration of public infrastructure. By 2026, the transition from static, timer-based lights to Edge-First dynamic signaling has redefined urban mobility, treating traffic flow as a fluid dynamics problem solved at the intersection.

The Strategic Mechanics: Intersection Intelligence

Traditional traffic systems rely on centralized control or ground-loop sensors that are prone to mechanical failure. Edge AI utilizes high-definition vision at each pole to create an autonomous, distributed network.

- 20% Congestion Reduction: By adjusting green-light intervals based on actual vehicle counts rather than pre-set schedules, cities eliminate “empty-street idling.” This reduces the average commute time by 20% and significantly lowers carbon emissions from idling vehicles.

- Emergency Vehicle Preemption: Edge AI cameras paired with microphones can identify the specific visual and acoustic signatures of ambulances or fire trucks in <50ms, instantly clearing a “green wave” through the city without waiting for a central server’s permission.

- Pedestrian Safety: Multi-model systems simultaneously track vehicle flow and pedestrian intent (using pose estimation), extending crossing times automatically for elderly or disabled citizens.
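Allocating green time from actual vehicle counts can be sketched as splitting a fixed signal cycle across approaches in proportion to detected queues. Minimum and maximum green times act as safety bounds, and the minimum also serves as the pedestrian crossing floor. The cycle length, bounds, and approach names below are hypothetical; real controllers follow local traffic-engineering standards.

```python
from typing import Dict


def green_splits(queue_counts: Dict[str, int], cycle_s: float = 90.0,
                 min_green_s: float = 10.0, max_green_s: float = 50.0) -> Dict[str, float]:
    """Split one signal cycle's green time in proportion to detected vehicles.

    Every approach first gets min_green_s (safety and pedestrian floor). The
    remaining time is shared by queue length, then capped at max_green_s.
    Time freed by the cap simply shortens the cycle.
    """
    n = len(queue_counts)
    spare = cycle_s - n * min_green_s
    if spare < 0:
        raise ValueError("cycle too short for the minimum green times")
    total = sum(queue_counts.values())
    splits = {}
    for approach, count in queue_counts.items():
        # With no vehicles detected anywhere, fall back to an even split.
        extra = spare * count / total if total else spare / n
        splits[approach] = min(max_green_s, min_green_s + extra)
    return splits


# Hypothetical counts from the intersection's edge camera.
print(green_splits({"north_south": 24, "east_west": 6}))
# {'north_south': 50.0, 'east_west': 24.0}: the busy approach hits the cap
```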

The 2026 Skill Stack: The High-Throughput Pipeline

To manage multiple high-definition video streams at a single intersection, the technical strategist must master extreme parallelization.

- NVIDIA TensorRT: The industry-standard high-performance deep learning inference optimizer. TensorRT takes models from frameworks like PyTorch and optimizes them specifically for NVIDIA Jetson modules (common in smart city hardware) to deliver up to 40x faster performance than CPU inference.

- Multi-Model Inference: An advanced skill where a single edge device runs several models concurrently (e.g., Object Detection for cars, Pose Estimation for pedestrians, and License Plate Recognition for law enforcement).

- Expert Strategy: Using NVIDIA DeepStream SDK to build a unified pipeline that handles decoding, pre-processing, and multi-model inference in a single, zero-copy memory buffer.

Implementation and Strategic ROI

| Metric | Legacy Traffic Systems | Edge-Native (2026) |
| --- | --- | --- |
| Logic | Static Timers | Real-Time Adaptive |
| Processing Location | Central Traffic Office | At the Intersection (Edge) |
| Failure Mode | Network outage = System Freeze | Network outage = Full Autonomy |
| Response Time | Minutes (Manual/Remote) | <100ms (Automated) |

Strategic Insight: For technical professionals, the “80/20” of Smart City engineering is mastering Dynamic Batching. By efficiently grouping frames from multiple cameras at an intersection before feeding them into TensorRT, you maximize throughput and reduce the total hardware cost per intersection—a critical factor for scaling across large metropolitan areas.
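Dynamic batching trades a small, bounded wait for larger and more efficient batches. The sketch below shows only the batching policy. It assumes frames arrive as `(timestamp_ms, camera_id)` tuples and that the accelerator prefers batches of up to `max_batch` frames. A batch is emitted when it is full or when its oldest frame has waited `max_wait_ms`. All names are hypothetical. TensorRT and DeepStream have their own batching mechanisms (for example, DeepStream's nvstreammux element), which this does not reproduce.

```python
from typing import List, Tuple

Frame = Tuple[float, str]  # (arrival time in ms, camera id)


def dynamic_batches(frames: List[Frame], max_batch: int = 4,
                    max_wait_ms: float = 10.0) -> List[List[Frame]]:
    """Group time-ordered frames into inference batches.

    A batch closes when it holds max_batch frames, or when the next frame
    arrives more than max_wait_ms after the batch's first frame. This offline
    simulation only notices a timeout when the next frame arrives; a live
    system would use a timer to flush.
    """
    batches: List[List[Frame]] = []
    current: List[Frame] = []
    for frame in sorted(frames):
        if current and frame[0] - current[0][0] > max_wait_ms:
            batches.append(current)  # timeout: flush the partial batch
            current = []
        current.append(frame)
        if len(current) == max_batch:
            batches.append(current)  # full: flush immediately
            current = []
    if current:
        batches.append(current)
    return batches


frames = [(0.0, "cam1"), (1.0, "cam2"), (2.0, "cam3"), (3.0, "cam4"),
          (5.0, "cam1"), (20.0, "cam2"), (21.0, "cam3")]  # hypothetical arrivals
print([len(b) for b in dynamic_batches(frames)])  # [4, 1, 2]
```

A larger `max_batch` improves accelerator utilization. `max_wait_ms` caps the extra latency any single camera frame can incur.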

Vocational Training Simulators

In the “Build Once, Scale Forever” framework, Vocational Training Simulators represent the ultimate 80/20 leverage for human capital development. By replacing static manuals with Augmented Reality (AR) guided by Edge AI Use Cases, organizations can compress the time-to-competency for technical workers by 3x, even in environments with zero internet access.

The Strategic Mechanics: Spatial Intelligence

Traditional technical training relies on 2D diagrams to explain 3D problems, creating a cognitive bottleneck. Edge-enabled AR glasses solve this by overlaying digital intelligence onto the physical machine.

- 3x Faster Upskilling: By providing real-time “X-ray” views of internal components and step-by-step holographic overlays, workers learn by doing rather than reading. This bypasses the theoretical lag, moving them directly into high-output technical roles.

- Error Prevention: The Edge AI model tracks the worker’s hand movements and tool placement. If a technician attempts to tighten a bolt in the wrong sequence, the AR glasses highlight the error in red and pause the tutorial—preventing equipment damage and safety incidents.
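Once the vision model has labelled which bolt the technician is working on, the wrong-sequence check is simple state logic. The sketch below assumes the model emits bolt IDs and that the correct tightening order comes from the machine's service manual. The `SequenceGuard` class and the star pattern are hypothetical.

```python
from typing import List, Optional


class SequenceGuard:
    """Tracks a required tightening order and flags out-of-order actions."""

    def __init__(self, required_order: List[str]):
        self.required_order = required_order
        self.step = 0  # index of the bolt the technician should tighten next

    def expected(self) -> Optional[str]:
        """Next bolt in the sequence, or None once the job is complete."""
        if self.step < len(self.required_order):
            return self.required_order[self.step]
        return None

    def observe(self, bolt_id: str) -> str:
        """Return 'ok', 'error' (highlight red and pause), or 'done'."""
        if self.expected() is None:
            return "done"
        if bolt_id != self.expected():
            return "error"  # do not advance; the tutorial waits for the right bolt
        self.step += 1
        return "done" if self.expected() is None else "ok"


guard = SequenceGuard(["B1", "B3", "B2", "B4"])  # hypothetical star pattern
for bolt in ["B1", "B2", "B3", "B2", "B4"]:
    print(bolt, guard.observe(bolt))
```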

The 2026 Skill Stack: The Browser-Based Edge

To build training systems that are cross-platform and resilient, the technical strategist must master the emerging standard for web-based inference.

- WebNN API: A W3C draft web API that lets web applications run neural-network inference on on-device hardware accelerators (NPUs, GPUs) through the browser. This enables AR simulations to run at high frame rates without bulky, platform-specific software installations.

- On-Device Fine-Tuning: A high-level skill where the simulator “learns” from the specific wear and tear of a local machine. Instead of a generic model, the AI performs Local Parameter-Efficient Fine-Tuning (PEFT) to adapt its repair guidance to the unique quirks of the hardware in front of it.

- SLAM (Simultaneous Localization and Mapping): The foundational math required for the glasses to “know” where they are in the room. In 2026, this is processed entirely on the edge to ensure zero-lag tracking.
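The text does not say which PEFT method the simulator uses. Low-rank adaptation (LoRA) is one common choice and is used here purely as an illustration. The base weight matrix W stays frozen, and only two small matrices B (d×r) and A (r×k) are trained, so the effective weight becomes W + BA. The plain-Python sketch below uses hypothetical shapes to show the forward pass and the parameter savings.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(w: Matrix, b: Matrix, a: Matrix, x: Vector,
                 scale: float = 1.0) -> Vector:
    """y = W x + scale * B (A x).

    W (d x k) stays frozen; only B (d x r) and A (r x k) would be updated
    during on-device fine-tuning.
    """
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))  # rank-r path: k -> r -> d
    return [y0 + scale * y1 for y0, y1 in zip(base, update)]


def trainable_params(d: int, k: int, r: int) -> Tuple[int, int]:
    """(full fine-tuning parameter count, LoRA parameter count) for one d x k layer."""
    return d * k, r * (d + k)


full, lora = trainable_params(1024, 1024, 8)
print(full, lora, f"{100 * lora / full:.2f}%")
```

For a hypothetical 1024×1024 layer at rank 8, only about 1.6% of the parameters are trained. That small footprint is what makes fine-tuning on battery-powered AR hardware plausible.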

Implementation and ROI: The Nigeria Pivot

The Nigeria Fit for this use case is exceptionally high due to the demand for rapid industrialization and the reality of low-bandwidth infrastructure.

- Low-Bandwidth Resiliency: Because the WebNN models and AR assets are stored locally, the training continues without interruption during internet outages. A technician in a remote factory in Obada can receive world-class training without a single kilobyte of cloud data.

- Scaling Skilled Labor: Founders can deploy “Training Centers” in shipping containers equipped with solar power and AR glasses. This creates a scalable, high-leverage system for producing “Industry-Ready” workers in sectors like solar installation, automotive repair, and advanced manufacturing.

Strategic Insight: For the technical professional, the “80/20” move in vocational AI is mastering the WebNN-to-NPU pipeline. Being able to deliver high-fidelity, hardware-accelerated simulations through a simple URL—bypassing app stores and cloud dependencies—is the most scalable way to bridge technical education and industry success in 2026.

Technical Strategic Comparison: Analyzing the 80/20 of Edge AI Implementation

To finalize your strategic overview, this comparison evaluates the four highest-leverage Edge AI Use Cases against the core constraints of technical complexity, hardware accessibility, and geographical resilience. This table serves as a decision-making framework for founders and technical strategists aiming for high-impact deployment in 2026.

Edge AI Deployment Matrix (2026)

| Use Case | Latency Benefit | Primary Skill Stack | Nigeria Fit & Rationale |
| --- | --- | --- | --- |
| Predictive Maintenance | <10ms | TinyML, Pruning, C++ | High: Can be retrofitted onto legacy industrial gear using $15 Raspberry Pi Zero hardware. |
| Real-Time Patient Monitoring | <5ms | 8-bit Quantization, Edge TPU | Medium: High medical ROI, but limited by the procurement costs of specialized wearable sensors. |
| Interactive Classroom Tutors | Total Offline | Llama.cpp, Agentic Workflows | High: “Build once, scale forever” logic. Resilient against grid collapse and ISP downtime. |
| Smart Agriculture Yield | Low Bandwidth | OpenVINO, n8n Orchestration | High: Enables precision farming in rural “Last Mile” zones with zero reliable 4G/5G. |

Strategic Breakdown of Key Metrics

To transform technical complexity into actionable business intelligence, we must evaluate Edge AI performance through a rigorous, high-signal lens. This strategic breakdown utilizes first principles to isolate the variables—latency, skill acquisition, and geographical resilience—that dictate long-term project viability and industry success in 2026.

Latency Benefit: The Physics of Safety

In the automotive and healthcare sectors, latency is a safety metric, not just a performance one. By keeping inference under 20ms, these systems react well inside typical human reaction times, which are on the order of a few hundred milliseconds.

- High-Signal Move: Prioritize NPU-native kernels to bypass the CPU overhead, ensuring consistent sub-10ms performance.

Skill Stack: The Career Pivot Bridge

The gap between technical education and industry success lies in optimization, not just model building.

- The 80/20 Skill: Mastering Quantization (INT8). It provides the largest gain in performance with the least amount of hardware investment.

Nigeria Fit: Solving for Friction

For the Nigerian market, “Edge” is synonymous with “Reliability.”

- Power-Resilient Intelligence: The most successful deployments in 2026 are those that can run on solar-charged batteries and local Wi-Fi/Bluetooth meshes, bypassing the fragility of national infrastructure.

If you are looking to build a high-leverage system today, start with Predictive Maintenance or Classroom Tutors. These have the lowest “Hardware Friction” and the highest “Operational Uptime” in emerging markets.

By utilizing n8n to orchestrate these local insights into global workflows, you create a technical system that is structurally superior to any cloud-only competitor.

How Does Edge AI Solve Nigeria’s Infrastructure Challenges?

In emerging markets, the “Cloud-First” approach often collides with physical infrastructure limitations. Skilldential career audits indicate that Nigerian operational leaders face up to 40% cloud downtime due to inconsistent power and bandwidth instability. Edge AI shifts the technical paradigm from dependency to autonomy, solving for friction through three mechanical pillars.

Decoupling from the Grid: Power and Connectivity Resilience

Traditional AI models require a persistent “handshake” with remote servers. In Nigeria, where ISP reliability and power supply are variable, this creates a fragile system.

- Offline-First Logic: By running inference on low-power hardware—such as a Raspberry Pi combined with a Coral TPU—systems can function entirely without an internet connection.

- 85% Reliability Gains: Audit data shows that moving intelligence to the edge results in near-constant uptime. Since the device doesn’t need to “call home” to make a decision, it remains operational during fiber cuts or network congestion.

- Solar Compatibility: Edge hardware is engineered for high TOPS-per-watt efficiency. These devices can be powered by small-scale solar installations, making them ideal for off-grid industrial or educational sites.
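Offline-first logic usually pairs local decisions with a store-and-forward buffer. The device acts immediately and queues only compact event records, uploading them when a link becomes available. The minimal sketch below assumes a hypothetical `send` callable that returns True on successful delivery. The bounded queue drops the oldest records if an outage outlasts local storage.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForward:
    """Buffers edge events locally and uploads them when connectivity returns."""

    def __init__(self, send: Callable[[Dict], bool], max_items: int = 1000):
        self.send = send
        self.buffer: Deque[Dict] = deque(maxlen=max_items)  # oldest dropped when full

    def record(self, event: Dict) -> None:
        """Queue an event; the local decision has already been acted on."""
        self.buffer.append(event)

    def flush(self) -> int:
        """Upload buffered events in order; stop at the first failure."""
        sent = 0
        while self.buffer:
            if not self.send(self.buffer[0]):
                break  # link still down: keep the event and retry later
            self.buffer.popleft()
            sent += 1
        return sent


# Hypothetical demo: the uplink is down, then recovers.
link_up = False
uploaded = []


def send(event: Dict) -> bool:
    if link_up:
        uploaded.append(event)
    return link_up


sf = StoreAndForward(send, max_items=500)
sf.record({"sensor": "soil_moisture", "alert": "irrigate"})
print(sf.flush())  # 0: nothing leaves the device while offline
link_up = True
print(sf.flush())  # 1: the queued alert is delivered once the link returns
```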

High-Leverage Localized Use Cases

Applying the First Principles framework—processing data exactly where it originates—enables high-impact solutions tailored to the Nigerian environment:

- Smart Agriculture (Rural Resilience): Drones and soil sensors in remote farmlands analyze crop health locally. This bypasses the total lack of 4G/5G coverage in rural “Last Mile” zones, allowing for precision farming that boosts yields without requiring a single byte of cloud data.

- Interactive Classrooms (Educational Equity): AI tutors on solar-charged tablets provide 1-on-1 personalized learning. This ensures that a student’s education is never interrupted by “NEPA” power outages or data subscription costs.

- Manufacturing (Asset Protection): On-device predictive maintenance monitors heavy machinery in real-time. It can trigger an emergency shutdown during a power surge or mechanical anomaly in <10ms, protecting multi-million naira assets from catastrophic failure.
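A common starting point for on-device predictive maintenance is a rolling z-score on a sensor stream, which flags any reading far outside the recent mean. The plain-Python sketch below stands in for that logic. The window size, threshold, warm-up length, and vibration readings are hypothetical. A deployed system might replace the statistic with a TinyML model, and the sub-10ms figure depends on the hardware, not on this code.

```python
import math
from collections import deque


class RollingZScore:
    """Flags readings more than `threshold` standard deviations from the recent mean."""

    def __init__(self, window: int = 50, threshold: float = 4.0, warmup: int = 10):
        self.values = deque(maxlen=window)
        self.threshold = threshold
        self.warmup = warmup  # minimum history before any reading is judged

    def update(self, x: float) -> bool:
        """Return True if x is anomalous relative to the current window."""
        anomalous = False
        if len(self.values) >= self.warmup:
            mean = sum(self.values) / len(self.values)
            var = sum((v - mean) ** 2 for v in self.values) / len(self.values)
            std = max(math.sqrt(var), 1e-9)  # avoid dividing by zero on flat signals
            anomalous = abs(x - mean) > self.threshold * std
        if not anomalous:
            self.values.append(x)  # keep outliers out of the baseline
        return anomalous


detector = RollingZScore(window=50, threshold=4.0)
stream = [1.0, 1.1, 0.9, 1.05, 0.95] * 4 + [5.0]  # hypothetical vibration readings
for t, reading in enumerate(stream):
    if detector.update(reading):
        print(f"t={t}: anomaly {reading} -> trigger shutdown")  # hook for a real actuator
```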

The 80/20 Strategy for Implementation

For Nigerian founders and technical strategists, the most efficient path to infrastructure resilience involves a specific “Skill Stack” shift:

| Challenge | Edge Solution | Implementation Tool |
| --- | --- | --- |
| High Latency | Local Inference | TensorRT / OpenVINO |
| Data Costs | Zero-Egress Metadata | Quantization (INT8) |
| Network Fluctuation | Offline Orchestration | n8n (Self-hosted) |

By building for the edge, you are not just optimizing software; you are engineering a system that is fundamentally immune to the external frictions of the Nigerian infrastructure landscape.

What Are the Core Benefits of Edge AI Use Cases?

The primary advantages of Edge AI are latency reduction, bandwidth efficiency, and data privacy. By processing data at the source, organizations can achieve:

- Sub-10ms Inference: Crucial for safety-critical systems like autonomous vehicles.

- 70–90% Cost Savings: Significant reduction in cloud egress and storage fees.

- Data Sovereignty: Sensitive information (biometrics, medical records) remains on-device, which simplifies compliance with privacy regulations like GDPR and HIPAA.

Which Industries Lead Edge AI Adoption?

Adoption is strongest in sectors where real-time response and privacy are non-negotiable:

- Healthcare: For wearable diagnostics and bedside patient monitoring.

- Automotive: Powering perception stacks for Level 4/5 autonomous driving.

- Manufacturing: Facilitating high-speed visual inspection and predictive maintenance.

- Finance: Implementing instant fraud detection at the Point of Sale (POS).

What Skills Build Edge AI Careers in 2026?

To bridge the gap from technical education to industry success, focus on the “Optimization Stack”:

- Model Compression: Mastering Quantization (INT8), Pruning, and Knowledge Distillation.

- Hardware Acceleration: Understanding NPU optimization and specialized frameworks like TensorRT and OpenVINO.

- Embedded Intelligence: Proficiency in TinyML and C++ for low-power microcontrollers.

- Orchestration: Building Agentic Workflows that allow edge devices to operate autonomously.

Can Edge AI Function in Low-Bandwidth Areas Like Nigeria?

Yes. Edge AI is a mechanical necessity for high-friction environments. By deploying “Offline-First” models on low-power hardware (e.g., Raspberry Pi or Edge TPUs), sectors like Agriculture and Education can maintain 90% uptime despite intermittent grid power or unstable internet connectivity. This “build once, scale forever” logic ensures that intelligence is not dependent on external infrastructure.

How Do I Start Implementing Edge AI Use Cases?

The most efficient path to implementation follows this high-leverage sequence:

- Optimize: Take a pre-trained model and use TensorFlow Lite or PyTorch Mobile to quantize it for edge hardware.

- Prototype: Deploy your optimized model onto a Google Coral Edge TPU or an NVIDIA Jetson module.

- Orchestrate: Use n8n to connect your edge insights to localized or global workflows.

- Upskill: Leverage specialized career paths on platforms like Coursera or Skilldential to master industry-standard deployment rigor.

In Conclusion

The transition from cloud-centralized logic to Edge-native execution is a fundamental move toward structural reliability and high-performance intelligence. By 2026, Edge AI use cases are no longer speculative; they are the industry-standard requirement for any application where latency and privacy are non-negotiable.

Final High-Signal Summary

- The Mandate: Safety-critical latency (under 20ms) and regulatory privacy (HIPAA/GDPR) make Edge AI a mechanical necessity for modern healthcare and automotive industries.

- The 80/20 ROI: Concentrating deployment on five core sectors—Manufacturing, Healthcare, Agriculture, Retail, and Education—yields the highest return on investment by solving for immediate physical-world constraints.

- The Nigeria Variable: Edge AI bypasses 40% cloud downtime in high-friction environments. Utilizing offline-first hardware ensures 90% operational uptime, even during grid instability.

- Career Acceleration: Professionals who master the “Optimization Stack” (Quantization, Pruning, and NPU deployment) experience a 40% faster job match rate by bridging the gap between theoretical AI and industry-ready success.

Immediate Action Item

Do not wait for a perfect infrastructure. To move from education to industry success, prototype one use case this week.

Protocol: Deploy a simple anomaly detection or object classification model on a Raspberry Pi using TensorFlow Lite.

By executing this one high-leverage move, you transition from a consumer of AI technology to a technical strategist capable of building systems that scale forever, regardless of the environment.